IEEE TRANSACTIONS ON SYSTEMS, MAN, AND CYBERNETICS, VOL. SMC-6, NO. 4, APRIL 1976


A Comparative Study of Texture Measures for Terrain Classification

JOAN S. WESZKA, CHARLES R. DYER, AND AZRIEL ROSENFELD, FELLOW, IEEE

Abstract-Three standard approaches to automatic texture classification make use of features based on the Fourier power spectrum, on second-order gray level statistics, and on first-order statistics of gray level differences, respectively. Feature sets of these types, all designed analogously, were used to classify two sets of terrain samples. It was found that the Fourier features generally performed more poorly, while the other feature sets all performed comparably.

Manuscript received July 16, 1975; revised September 10, 1975 and November 4, 1975. This work was supported in part by the U.S. Air Force Office of Scientific Research under Contract F44620-72C-0062 and in part by the Division of Engineering, National Science Foundation, under Grant ENG74-22006. The authors are with the Computer Science Center, University of Maryland, College Park, MD 20742.

I. INTRODUCTION

THE PROBLEM of automatic texture classification has been studied for at least 15 years. One of the earliest applications to be investigated was that of terrain analysis. A recent review of work on texture classification can be found in Haralick et al. [1].

A number of approaches to the texture classification problem have been developed over the years. One approach makes use of features derived from the texture's Fourier power spectrum. Another is based on gray level co-occurrences, i.e., on joint probability densities of pairs of gray levels. A third approach uses statistics derived from the probability densities of values of various local properties measured on the texture.

In this paper, feature sets of these three types were used to classify two sets of terrain samples. An attempt was made to equalize the feature sets with respect to their orientation and size sensitivity. The feature sets and classification scheme used are described in Section II. Section III reports on pilot studies using a set of 54 aerial photographic terrain samples belonging to nine land use classes. Section IV describes a larger-scale study, using 180 LANDSAT imagery samples belonging to three geological terrain types.

II. FEATURES USED

This section describes the classes of features that were used.

A. Fourier Power Spectrum

The Fourier transform of a picture $f(x,y)$ is defined by

$$F(u,v) = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} e^{-2\pi i(ux+vy)} f(x,y)\,dx\,dy$$

and the Fourier power spectrum is $|F|^2 = FF^*$ (where * denotes the complex conjugate). It is well known that the radial distribution of values in $|F|^2$ is sensitive to texture coarseness in $f$. A coarse texture will have high values of $|F|^2$ concentrated near the origin, while in a fine texture the values of $|F|^2$ will be more spread out. Thus if one wishes to analyze texture coarseness, a set of features that should be useful are the averages of $|F|^2$ taken over ring-shaped regions centered at the origin, i.e., features of the form

$$\phi_r = \int_0^{2\pi} |F(r,\theta)|^2\,d\theta$$

for various values of $r$, the ring radius. Similarly, it is well known that the angular distribution of values in $|F|^2$ is sensitive to the directionality of the texture in $f$. A texture with many edges or lines in a given direction $\theta$ will have high values of $|F|^2$ concentrated around the perpendicular direction $\theta + (\pi/2)$, while in a nondirectional texture, $|F|^2$ should also be nondirectional. Thus a good set of features for analyzing texture directionality should be the averages of $|F|^2$ taken over wedge-shaped regions centered at the origin, i.e., features of the form

$$\phi_\theta = \int_0^{\infty} |F(r,\theta)|^2\,dr$$

for various values of $\theta$, the wedge slope.
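The ring and wedge features above can be approximated on a sampled picture. The following is a minimal sketch, assuming a square picture and NumPy's FFT; the function names, the centred-wedge parameterization, and the test picture are illustrative choices and are not taken from the paper.

```python
import numpy as np

def power_spectrum(img):
    """Return |F|^2 for a square picture, with frequency (0, 0) at the array centre."""
    F = np.fft.fftshift(np.fft.fft2(img)) / img.size
    return np.abs(F) ** 2

def polar_frequency_grid(n):
    """Radius and orientation (degrees) of every sample of an n-by-n centred spectrum."""
    k = np.fft.fftshift(np.fft.fftfreq(n, d=1.0 / n))  # integer frequencies, ascending
    v, u = np.meshgrid(k, k, indexing="ij")             # rows index v, columns index u
    return np.hypot(u, v), np.degrees(np.arctan2(v, u))

def ring_feature(P, r, r1, r2):
    """Sum of the power spectrum over the ring r1 <= r < r2."""
    return P[(r >= r1) & (r < r2)].sum()

def wedge_feature(P, r, theta, centre, width):
    """Sum over a wedge `width` degrees wide centred on `centre`, excluding the DC term."""
    # Orientation is taken modulo 180 degrees: |F|^2 of a real picture is symmetric
    # about the origin, so opposite directions carry the same information.
    offset = np.abs((theta - centre + 90.0) % 180.0 - 90.0)
    return P[(offset <= width / 2.0) & (r > 0)].sum()

# Hypothetical test picture: vertical stripes, 8 cycles across 64 columns.
n = 64
x = np.arange(n)
stripes = np.tile(np.cos(2 * np.pi * 8 * x / n), (n, 1))
P = power_spectrum(stripes)
r, theta = polar_frequency_grid(n)
print(ring_feature(P, r, 8, 16), wedge_feature(P, r, theta, 0, 45))
```

For this picture the energy falls in the ring containing radius 8 and in the wedge centred at 0 degrees, i.e., perpendicular to the vertical stripe edges, which is the behavior the text describes.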


For n-by-n digital pictures, instead of the continuous Fourier transform defined above, one uses the discrete transform defined by

$$F(u,v) = \frac{1}{n^2}\sum_{i,j=0}^{n-1} f(i,j)\,e^{-2\pi\sqrt{-1}\,(iu+jv)/n}, \qquad 0 \le u,v \le n-1.$$

This transform, however, treats the input picture $f(i,j)$ as periodic. If, in fact, it is not, the transform is affected by the discontinuities that exist between one edge of $f$ and the opposite edge. These have the effect of introducing spurious horizontal and vertical directionality, so that high values are present in $|F|^2$ along the $u$ and $v$ axes.

The standard set of texture features based on ring-shaped samples of the discrete Fourier power spectrum are of the form

$$\phi_{r_1,r_2} = \sum_{r_1^2 \le u^2+v^2 < r_2^2} |F(u,v)|^2$$

for various values of the inner and outer ring radii $r_1$ and $r_2$. Similarly, the features based on wedge-shaped samples are of the form

$$\phi_{\theta_1,\theta_2} = \sum_{\substack{\theta_1 \le \tan^{-1}(v/u) < \theta_2 \\ 0 \le u,v \le n-1}} |F(u,v)|^2.$$

Note that in this last set of features, the "DC value" $(u,v) = (0,0)$ has been omitted, since it is common to all the wedges.

These standard features are sensitive to size (spatial frequency) only, or to orientation only, but not to both. On the other hand, the other two classes of features, to be described below, are all sensitive to both size and orientation. In order to obtain comparable feature sets, it was therefore decided to use Fourier features based on intersections of rings and wedges. The rings used in most of the experiments (except for the first pilot study) were [2,4), [4,8), [8,16), and [16,31). Their upper frequency limits correspond, for a 64-by-64 picture, to object sizes 8 (4 objects and 4 spaces across the picture), 4, 2, and 1, respectively. The wedges used were 45° wide, centered at 0°, 45°, 90°, and 135°.

B. Second-Order Gray Level Statistics

Let $\delta = (\Delta x,\Delta y)$ be a vector in the $(x,y)$ plane. For any such vector and for any picture $f(x,y)$, we can compute the joint probability density of the pairs of gray levels that occur at pairs of points separated by $\delta$. If there are only finitely many gray levels (e.g., 0, ..., 63), this joint density takes the form of an array $h_\delta$, where $h_\delta(i,j)$ is the probability of the pair of gray levels $(i,j)$ occurring at separation $\delta$. This array is $m$ by $m$, where $m$ is the number of possible gray levels.

If the picture $f$ is discrete, it is easy to compute the $h_\delta$ array for $f$, where $\Delta x$ and $\Delta y$ are integers, by counting the number of times each pair of gray levels occurs at separation $\delta = (\Delta x,\Delta y)$ in the picture. As a very simple example, if the picture is

    0 1 1 2 3
    0 0 2 3 3
    0 1 2 2 3
    1 2 3 2 2
    2 2 3 3 2

and $(\Delta x,\Delta y) = (1,0)$, then these numbers are given by the matrix

         0  1  2  3
    0    1  2  1  0
    1    0  1  3  0
    2    0  0  3  5
    3    0  0  2  2

where the entry in row $i$ and column $j$ is the number of times gray level $i$ occurs immediately to the left of gray level $j$. [Note that for $(\Delta x,\Delta y) = (1,0)$, this entry is related to the transition probability from level $i$ to level $j$ when the picture is scanned row by row.] A matrix of this form is sometimes called a gray level co-occurrence matrix. It is sometimes convenient to use a symmetric matrix in which pairs of gray levels at separation either $\delta$ or $-\delta$ are counted; we shall denote this matrix by $M_\delta$.

If a texture is coarse, and $\delta$ is small compared to the sizes of the texture elements, the pairs of points at separation $\delta$ should usually have similar gray levels. This means that the high values in the matrix $M_\delta$ should be concentrated on or near its main diagonal. Conversely, for a fine texture, if $\delta$ is comparable to the texture element size, then the gray levels of points separated by $\delta$ should often be quite different, so that the values in $M_\delta$ should be spread out relatively uniformly. Thus a good way to analyze texture coarseness would be to compute, for various values of the magnitude of $\delta$, some measure of the scatter of the $M_\delta$ values around the main diagonal.

Similarly, if a texture is directional, i.e., coarser in one direction than another, then the degree of spread of the values about the main diagonal in $M_\delta$ should vary with the direction of $\delta$ (assuming that the magnitude of $\delta$ is in the proper range). Thus texture directionality can be analyzed by comparing spread measures of the $M_\delta$ for various directions of $\delta$.

Haralick [1] has proposed a variety of measures that can be employed to extract useful textural information from $M_\delta$ matrices. Only four of these, which he regards as particularly useful, will be defined here. In what follows, $p(i,j)$ is the $(i,j)$th element of the given matrix (which has size $m$ by $m$), divided by the sum of all the matrix elements.

1) Contrast: $\mathrm{CON} = \sum_{i,j} (i-j)^2\,p(i,j)$. This is essentially the moment of inertia of the matrix around its main diagonal; it is a natural measure of the degree of spread of the matrix values.

2) Angular Second Moment: $\mathrm{ASM} = \sum_{i,j} p(i,j)^2$. This measure is smallest when the $p(i,j)$ are all as equal as possible; it is large when some values are high and others are low, as is true, for example, when the values are clustered near the main diagonal.

3) Entropy: $\mathrm{ENT} = -\sum_{i,j} p(i,j)\log p(i,j)$. This measure is largest for equal $p(i,j)$ and small when they are very unequal.

4) Correlation: $\mathrm{COR} = \left[\sum_{i,j} ij\,p(i,j) - \mu_x\mu_y\right]/(\sigma_x\sigma_y)$, where $\mu_x$ and $\sigma_x$ are the mean and standard deviation of the row sums of matrix $M_\delta$, and $\mu_y$ and $\sigma_y$ are analogous statistics of the column sums. This measures the degree to which the rows (or columns) of the matrix resemble each other. It is high when the values are uniformly distributed in the matrix, and low otherwise (e.g., when the values off the diagonal are small).

In our experiments (except for Sections III-A and IV-D), only the CON feature was used; the displacements were

$\delta = (\Delta x,\Delta y) =$ (1,0), (0,1), (2,0), (0,2), (4,0), (0,4), (8,0), (0,8),
(1,1), (1,-1), (2,2), (2,-2), (3,3), (3,-3), (6,6), (6,-6).

The first eight of these are in the horizontal and vertical directions at distances 1, 2, 4, 8. The remaining eight are in the diagonal directions at distances $\sqrt{2}$, $2\sqrt{2}$, $3\sqrt{2} \approx 4$, and $6\sqrt{2} \approx 8$.

Another approach to defining features of this class is to use matrices $\bar{M}_\delta$ based on pairs of average gray levels, taken over neighborhoods whose centers are $\delta$ apart, rather than on pairs of gray levels of single points. In the main study reported in Section IV, features based on both $M_\delta$ and $\bar{M}_\delta$ matrices were used. The same $\delta$ were used for both sets of features. The averaging neighborhoods were square and were of the same size as the displacements $\delta$; in other words, for distances 1, 2, 4, and 8, we used averaging neighborhoods of sizes 1 by 1 (i.e., no averaging), 2 by 2, 4 by 4, and 8 by 8, respectively. Note that for sizes 4 and 8 in the diagonal directions, this implies that the neighborhoods overlap slightly.

C. Gray Level Difference Statistics

A third useful class of picture properties that can be employed for texture analysis are (first-order) statistics of local property values, i.e., the means, variances, etc., of the values of various local picture properties computed at every point of the given picture. In particular, we consider here a class of local properties based on absolute differences between pairs of gray levels or of average gray levels.

For any given displacement $\delta = (\Delta x,\Delta y)$, let $f_\delta(x,y) = |f(x,y) - f(x+\Delta x,\,y+\Delta y)|$. Let $p_\delta$ be the probability density of $f_\delta(x,y)$. If there are $m$ gray levels, this has the form of an $m$-dimensional vector whose $i$th component is the probability that $f_\delta(x,y)$ will have value $i$. If the picture $f$ is discrete, it is easy to compute $p_\delta$ by counting the number of times each value of $f_\delta(x,y)$ occurs, where $\Delta x$ and $\Delta y$ are integers.

If a texture is coarse, and $\delta$ is small compared to the texture element size, the pairs of points at separation $\delta$ should usually have similar gray levels, so that $f_\delta(x,y)$ should usually be small, i.e., the values in $p_\delta$ should be concentrated near $i = 0$. Conversely, for a fine texture, with $\delta$ comparable to the element size, the gray levels of points $\delta$ apart should often be quite different, so that $f_\delta(x,y)$ will often be large, i.e., the values in $p_\delta$ should be more spread out. Thus a good way to analyze texture coarseness would be to compute, for various magnitudes of $\delta$, some measure of the spread of values in $p_\delta$ away from the origin. Four such measures might be the following.

1) Contrast: $\mathrm{CON} = \sum_i i^2\,p_\delta(i)$. This is the second moment of $p_\delta$, i.e., its moment of inertia about the origin.

2) Angular Second Moment: $\mathrm{ASM} = \sum_i p_\delta(i)^2$. This is smallest when the $p_\delta(i)$ are all as equal as possible and large when some values are high and others low, e.g., when the values are concentrated near the origin.

3) Entropy: $\mathrm{ENT} = -\sum_i p_\delta(i)\log p_\delta(i)$. This is largest for equal $p_\delta(i)$ and small when they are very unequal.

4) Mean: $\mathrm{MEAN} = (1/m)\sum_i i\,p_\delta(i)$. This is small when the $p_\delta(i)$ are concentrated near the origin and large when they are far from the origin.

(There is no simple analog of Haralick's COR measure for the $p_\delta$, nor would the MEAN measure be useful for the $M_\delta$.)

If a texture is directional, the degree of spread of the values in $p_\delta$ should vary with the direction of $\delta$ (if its magnitude is in the proper range). Thus texture directionality can be analyzed by comparing spread measures of the $p_\delta$ for various directions of $\delta$.

In our experiments (except for the first pilot study) the $\delta$ used were the same as in Section II-B. In all of the pilot studies, only the MEAN feature was used; in the main study, both the MEAN and the CON features.

Another approach to defining features of this type is to use vectors $\bar{p}_\delta$ based on differences between pairs of average gray levels, taken over neighborhoods whose centers are $\delta$ apart, rather than using pairs of gray levels of single points. In the pilot studies, only average gray levels were used; in the main study, we used both single points and averages. When averaging was used, the averaging neighborhoods were square and were of the same size as the displacements $\delta$, as in Section II-B.

It should be pointed out that there is a close relationship between the vectors $p_\delta$ and the matrices $M_\delta$. If we sum the elements of $M_\delta$ along lines parallel to its main diagonal, we obtain total numbers of point pairs having a given gray level difference ($|i - j| = k$), up to a proportionality constant. Thus features derived from $M_\delta$ matrices will be quite similar to features derived from $p_\delta$ vectors; in fact, the CON feature should be essentially the same for both.
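The relationship between the co-occurrence matrix and the difference histogram can be checked numerically on the 5-by-5 example picture of Section II-B. This is a minimal sketch: the function names are illustrative, the matrix counted here is the one-directional (unsymmetrized) count matrix, and positive $\Delta y$ is assumed to point down the rows.

```python
import numpy as np

def displaced_pairs(img, dx, dy):
    """Gray levels at (x, y) and at (x + dx, y + dy) for every pair inside the picture."""
    rows, cols = img.shape
    a = img[max(0, -dy):rows - max(0, dy), max(0, -dx):cols - max(0, dx)]
    b = img[max(0, dy):rows - max(0, -dy), max(0, dx):cols - max(0, -dx)]
    return a.ravel(), b.ravel()

def cooccurrence(img, dx, dy, levels):
    """Count matrix: entry (i, j) is the number of pairs with levels i and j."""
    a, b = displaced_pairs(img, dx, dy)
    M = np.zeros((levels, levels), dtype=int)
    np.add.at(M, (a, b), 1)          # accumulate repeated (i, j) pairs
    return M

def difference_histogram(img, dx, dy, levels):
    """Counts of the absolute gray level difference |f(x, y) - f(x + dx, y + dy)|."""
    a, b = displaced_pairs(img, dx, dy)
    return np.bincount(np.abs(a - b), minlength=levels)

def contrast_from_matrix(M):
    p = M / M.sum()
    i, j = np.indices(M.shape)
    return float(((i - j) ** 2 * p).sum())

def contrast_from_differences(h):
    p = h / h.sum()
    return float((np.arange(len(h)) ** 2 * p).sum())

picture = np.array([[0, 1, 1, 2, 3],
                    [0, 0, 2, 3, 3],
                    [0, 1, 2, 2, 3],
                    [1, 2, 3, 2, 2],
                    [2, 2, 3, 3, 2]])
M = cooccurrence(picture, 1, 0, levels=4)
h = difference_histogram(picture, 1, 0, levels=4)
print(M)
print(h, contrast_from_matrix(M), contrast_from_differences(h))
```

Summing `M` along the diagonals at offsets +k and -k reproduces `h[k]`, so the two CON values agree, as the closing paragraph of Section II-C states.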

D. Gray Level Run Lengths

A set of features based on gray level run lengths was also employed in the first pilot study. If we examine the points of the picture that lie along some given line, we will occasionally find runs of consecutive points that all have the same gray level. In a coarse texture, we would expect that relatively long runs would occur relatively often, whereas a fine texture should contain primarily short runs. In a directional texture, the run lengths that occur along a given


[Figure: terrain sample panels labeled Urban, Suburb, Woods, Scrub, Railroad, Swamp, Marsh, Orchard]

Fig. 1. Terrain samples.

line should depend on the direction of the line.

The run length features used were defined as follows [2]. Let $p(i,j)$ be the number of runs of length $j$, in some direction $\theta$, consisting of points whose gray levels lie in the $i$th range (we used the ranges (0,7), (8,15), ..., (56,63)). Then we can define the following features.

1) Long Runs Emphasis: $\mathrm{LRE} = \sum_{i,j} j^2\,p(i,j) \big/ \sum_{i,j} p(i,j)$. This gives greater weight to long runs, of any gray level.

2) Gray Level Distribution: $\mathrm{GLD} = \sum_i \left(\sum_j p(i,j)\right)^2 \big/ \sum_{i,j} p(i,j)$. This is smallest when runs are evenly distributed over the gray levels.

3) Run Length Distribution: $\mathrm{RLD} = \sum_j \left(\sum_i p(i,j)\right)^2 \big/ \sum_{i,j} p(i,j)$. This is smallest when the run lengths are evenly distributed.

4) Run Percentage: $\mathrm{RPC} = \sum_{i,j} p(i,j) / N^2$, where $N^2$ is the number of points in the picture. This is largest when the runs are all short.

The directions used were $\theta$ = 0°, 45°, 90°, and 135°.

E. Classification Scheme Used

The Fisher linear discriminant technique was used to classify the pictures based on their measured feature values.

The set of features measured on a picture is treated as a feature vector $Z$. Suppose we are given a pair of classes $C_i$ and $C_j$ and sets of feature vectors $Z_{i1}, Z_{i2}, \ldots, Z_{ik_1}$ and $Z_{j1}, Z_{j2}, \ldots, Z_{jk_2}$ obtained from pictures belonging to $C_i$ and $C_j$, respectively. Let the sample means and the sample covariances be $\boldsymbol{\mu}_i, \boldsymbol{\mu}_j$ and $\Sigma_i, \Sigma_j$, respectively. A linear discriminant direction $a$ is obtained such that when we project the feature vectors on this direction, $(\mu_i - \mu_j)^2/(\sigma_i^2 + \sigma_j^2)$ is maximized, where $\mu_i$ and $\mu_j$ are the mean values of the projected samples from classes $C_i$ and $C_j$, and $\sigma_i^2$ and $\sigma_j^2$ are their variances. It can easily be shown that the optimal linear direction $a$ is given by

$$a = (\Sigma_i + \Sigma_j)^{-1}(\boldsymbol{\mu}_i - \boldsymbol{\mu}_j).$$

To decide the class to which a sample $Z$ belongs, we compute $a \cdot Z$ and compare it against $(\mu_i\sigma_j + \mu_j\sigma_i)/(\sigma_i + \sigma_j)$. The Fisher linear classifier so constructed is the optimal linear classifier and yields minimum error probability classification if both classes have a multivariate normal distribution with equal covariances. (It should be pointed out that the classifications obtained in this way are not necessarily maximum likelihood classifications.)


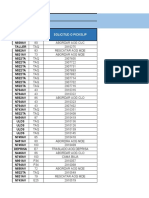

OF THE

NUMBERS

Fourier features Ring (1,1)

Number correctly classified

OF

TABLE I PICTURES CORRECTLY CLASSIFIED USING EACH

Number

64 SINGLE FEATURES

Number

Second-order statistics

correctly classified

17

Difference statistics

correctly classified

19

Run length features

Num,ber

19 11 14 14 15 14 12 16 19 17 17 17 19 18 17 19

correctly classified

15 16

20

11

CON(1,0)

(1,0)

(2,0)

LRE(0)

Ring (1,2)

Ring (2,4) Ring (4,8)

ASM(1,0)

ENT(1,0)

COR(1,0)

14

16

20

GLD(0)

17

18

(3,0)

(4,0)

RLD(0)

RPC(0)

LRE(90) GLD(90) RLD(90) RPC(90) LRE(45)

GLD(45) RLD(45) RPC(45)

16 19

Ring (8,12) Ring (12,16)

Ring (16,31)

Wedge (0,20)

18 21

19

CON(0,1)

ASM (0,1)

18 17

16

(5,0)

(6, 0)

(7, 0)

19

17 16 19 18 20 13 11 13 12 11

ENT(0,1) COR(0,1)

CON(l,l)

11 10

17

15

16

14 14

( 8, 0)

( 0,1)

Wedge (20,40) Wedge (40,60)

Wedge (60,80) Wedge (80,100)

15

10

ASM(l,l)

(0,2)

(0,3)

(0, 4)

ENT(l,l) COR(l,l)

CON(l,-l)

13

Wedge (100,120) Wedge (120,140)

Wedge (140,160)

15 13

8

19

22 19 18

(0, 5)

(0, 6)

( 0, 7) ( 0,8)

LRE(135)

GLD(135)

ASM(l,-l)

ENT(l,-l)

COR(l,-l)

RLD(135)

Wedge (160,180)

13

RPC(135)

When we have more than two classes, let us say $C_1, \ldots, C_t$, we can use a voting scheme to classify a given measurement $Z$. For each pair of classes $C_i, C_j$, we project $Z$ on the appropriate line and classify it as described above. This gives us $t(t-1)/2$ different classifications of $Z$. Finally, we assign $Z$ to the class that received the most votes. Suppose, for example, that $Z$ comes from class $C_h$; then most of the votes between $C_h$ and the other classes $C_k$ should be in favor of $C_h$. On the other hand, the votes between classes $C_i$ and $C_j$, with $i,j$ both different from $h$, should be essentially random. Thus class $C_h$ should normally receive the greatest number of votes.
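The pairwise Fisher rule and the voting scheme can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: the function names, the synthetic three-class data, and the tie-breaking behavior (the first class with the maximum count wins) are choices made here, since the text does not say how ties were resolved.

```python
import numpy as np
from itertools import combinations

def fisher_pair(Zi, Zj):
    """Fisher direction a and decision threshold for two classes (rows are samples)."""
    mu_i, mu_j = Zi.mean(axis=0), Zj.mean(axis=0)
    S = np.atleast_2d(np.cov(Zi, rowvar=False) + np.cov(Zj, rowvar=False))
    a = np.linalg.solve(S, mu_i - mu_j)              # a = (S_i + S_j)^-1 (mu_i - mu_j)
    yi, yj = Zi @ a, Zj @ a                          # projected samples
    si, sj = yi.std(ddof=1), yj.std(ddof=1)
    threshold = (yi.mean() * sj + yj.mean() * si) / (si + sj)
    return a, threshold

def vote_classify(z, samples):
    """Assign z to the class winning the most of the t(t-1)/2 pairwise decisions."""
    votes = dict.fromkeys(samples, 0)
    for ci, cj in combinations(samples, 2):
        a, threshold = fisher_pair(samples[ci], samples[cj])
        # With this choice of a, class ci projects to the larger values.
        votes[ci if z @ a > threshold else cj] += 1
    return max(votes, key=votes.get), votes

# Hypothetical data: three well-separated classes with two features each.
rng = np.random.default_rng(0)
samples = {
    "A": rng.normal(loc=(0.0, 0.0), scale=1.0, size=(30, 2)),
    "B": rng.normal(loc=(6.0, 0.0), scale=1.0, size=(30, 2)),
    "C": rng.normal(loc=(0.0, 6.0), scale=1.0, size=(30, 2)),
}
label, votes = vote_classify(np.array([6.0, 0.0]), samples)
print(label, votes)
```

Note that the studies reported here classify each picture using discriminants computed from the entire set of pictures, so a faithful reproduction would fit `fisher_pair` on all samples, including the one being classified.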

III. PILOT STUDIES

The terrain samples used in the pilot studies, which were provided by Prof. R. M. Haralick of the University of Kansas, are shown in Fig. 1. These samples are 64-by-64 arrays whose elements have 64 possible gray levels (0, ..., 63). The samples have been subjected to a gray-scale "histogram flattening" transformation to make each gray level occur equally often. This was done in order to remove the effects of unequal overall brightness and contrast in the original images; these effects might otherwise have dominated the measured feature values. A discussion of histogram flattening transformations can be found in [1].

A. Preliminary Study Using Unequalized Features

A preliminary study was conducted (by Eileen J. Carton of our laboratory¹) using sets of commonly employed features of each of the three classes described in Sections II-A-II-C as well as the run length features of Section II-D. These feature sets were not equalized with respect to orientation and size sensitivity. Using the notation of Section II, the feature sets, each of which consisted of 16 features, were as follows.

1) Fourier Power Spectrum Features:
1-7) $\phi_{r_1,r_2}$ for $(r_1,r_2)$ = (1,1), (1,2), (2,4), (4,8), (8,12), (12,16), and (16,31).
8-16) $\phi_{\theta_1,\theta_2}$ for $(\theta_1,\theta_2)$ = (0,20), (20,40), (40,60), (60,80), (80,100), (100,120), (120,140), (140,160), and (160,180).

2) Second-Order Gray Level Statistics: CON, ASM, ENT, and COR of $M_\delta$, for $\delta = (\Delta x,\Delta y)$ = (1,0), (0,1), (1,1), and (1,-1).

3) Gray Level Difference Statistics: MEAN of $\bar{p}_\delta$, for $\delta$ = (2,0), (3,0), ..., (9,0) and (0,2), (0,3), ..., (0,9); here (in this study only) the neighborhood sizes were 1 less than the separations, i.e., the sizes were 1, 2, ..., 8, respectively.

4) Gray Level Run Lengths: LRE, GLD, RLD, and RPC, for directions 0°, 45°, 90°, and 135°.

Note that in set 1) there are seven sizes (spatial frequencies) and nine directions; in set 2), essentially only one size (1 or $\sqrt{2}$) and four directions; in set 3), eight sizes and only two directions; and in set 4), four directions.

The Fisher classification scheme described in Section II-E was used to classify the 54 terrain samples of Fig. 1 into the nine classes shown there, using each of these 64 individual features. The results are shown in Table I. Only a few features in each class correctly classified 20 or more of the 54 samples, so that the results are rather poor. Nevertheless, the results are far above chance, since there are nine classes, and one would expect a picture to be classified correctly by chance only 1/9 of the time, i.e., only six of the classifications should have been correct.

¹ Now with Digital Equipment Corp.
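The histogram flattening step applied to the pilot-study samples can be illustrated with a rank-based sketch. The paper does not say how the flattening was implemented; the approach below, the function name, and the breaking of ties by pixel position are assumptions made for illustration.

```python
import numpy as np

def flatten_histogram(img, levels=64):
    """Remap gray levels so that each of `levels` output values occurs equally often.

    Pixels are ranked by gray level (ties broken by position, an arbitrary choice)
    and the ranks are split into `levels` equal-sized groups.
    """
    flat = img.ravel()
    order = np.argsort(flat, kind="stable")
    ranks = np.empty_like(order)
    ranks[order] = np.arange(flat.size)
    return ((ranks * levels) // flat.size).reshape(img.shape)

# Hypothetical 64-by-64 picture with 256 input gray levels.
rng = np.random.default_rng(1)
raw = rng.integers(0, 256, size=(64, 64))
flat64 = flatten_histogram(raw, levels=64)
print(np.bincount(flat64.ravel(), minlength=64))
```

With 4096 points and 64 output levels, every level is used by exactly 64 points, so overall brightness and contrast differences between samples no longer affect the measured features.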


TABLE II
BEST-PERFORMING PAIRS OF FEATURES

Feature set               Feature pair                          Number correctly classified
Fourier                   Ring (2,4) and ring (16,31)           37
                          Ring (8,12) and ring (16,31)          37
                          Ring (1,2) and ring (16,31)           35
                          Ring (12,16) and ring (16,31)         35
                          Ring (12,16) and wedge (100,120)      35
Second-order statistics   ENT(1,0) and ENT(1,-1)                34
                          ASM(1,-1) and ENT(1,-1)               34
                          COR(1,0) and CON(1,-1)                33
                          CON(1,0) and CON(1,-1)                33
Difference statistics     (H,0) and (H,2)                       40
                          (V,0) and (V,1)                       39
                          (V,1) and (V,3)                       38
                          (H,1) and (H,3)                       38
Run length                RPC(135) and RPC(90)                  31
                          RPC(135) and RPC(0)                   31
                          GLD(135) and GLD(0)                   30
                          RLD(135) and RLD(0)                   30

Substantially better results are obtained when we use pairs of feature values. This was done for each of the 120 pairs of features belonging to each of the four classes. Here again, each picture was then classified using the Fisher criteria computed from the entire set of pictures. The results are not given here in full, but Table II shows the four feature pairs in each class (or more, in case of a tie) which scored highest. Here the performance is much better; one feature pair did as well as 40 out of 54 correct, i.e., nearly 75 percent.

A few comments can be made about the results shown in Tables I and II. For the single features, the second-order gray level statistics did best; of these, the (1,-1) direction was consistently best for all four features. This may reflect the presence of some diagonal structure in the pictures. For gray level differences, the vertical results were consistently poor for large amounts of averaging (k ≥ 3), but the horizontal results were not; this could be due to unequal sampling in the two directions. For the Fourier features, the wedges did substantially worse than the rings, indicating that coarseness is more important than directionality for these pictures.

For the pairs of features shown in Table II, the features based on difference statistics seem to have done significantly better than the Fourier features, and these in turn seem significantly better than the second-order statistics and run length features. (No attempt will be made here to quantify these remarks, since the present study used such a small number of pictures.) Here again, for the second-order statistics, one of the features in each best pair was always a 135° feature. The best Fourier feature pairs are (with one exception) pairs of rings, one of which is the largest ring (corresponding to the highest spatial frequency, or finest detail). Similarly, the best pairs of difference statistics are always both horizontal or both vertical, and always involve small sizes (0 or 1, with 0 best); they never involve the very large sizes. These results again imply that coarseness is important for classifying our set of features; the eight best pairs involve comparing a fine-detail feature (ring (16,31), or a difference of size 0 or 1) with a coarser feature. The reason that the second-order statistics did poorly is probably that they involved almost no size variation; all the $\delta$ used were based on nearest-neighbor point pairs, i.e., distances of 1 or $\sqrt{2}$.

The run length features did generally more poorly than the other feature types, in both Tables I and II, possibly because these features are more sensitive to noise than the other types. For this reason, run length features were not used in the remaining studies.

Since size or coarseness appears to be important in the classification of our texture samples, it is evidently unfair to use feature sets that do not have comparable size sensitivity. For this reason, in the subsequent studies feature sets that were equalized in orientation and size sensitivity were used.

B. Study Using Equalized Features

In this study, the feature sets used all involved four sizes (or spatial frequency bands) and four directions, as described in Sections II-A-II-C: four rings intersected with four wedges; the CON feature for $M_\delta$, and the MEAN feature for $\bar{p}_\delta$, with distances 1, 2, 4, 8 in four directions. Thus there were again 16 features in each set.

Each of the 48 features just described was measured for each of the 54 pictures in Fig. 1, and classifications were obtained using the Fisher voting scheme of Section II-E. The single-feature results are shown in Table III. They are not very different from the results in Table I, except that one Fourier feature does remarkably well (25 correct out of 54).

The pairs of features yield much better results, as shown in Table IV, which gives the feature pairs in each set having the top four scores. (For the detailed scores see Table 4 of [3].) The Fourier features do slightly better than the results in Table II (the top four scores are 38, 37, 36, 34, rather than 37's and 35's); the difference statistics do somewhat better (43, 42, 41, 39 rather than 40, 39, and 38's); and the second-order statistics do substantially better (40, 39, and 38's rather than 34's and 33's). The improvement in performance of the second-order statistics is very likely due to the fact that more than one distance is used in the new features. The best score, 43 out of 54 (for difference statistics (2, 135) and (4, 135)), is nearly 80 percent correct, which is quite good for a nine-class problem using only two features.

The pairs of second-order and difference statistics seem to do systematically better than the Fourier feature pairs. This can be seen if we look at histograms of the scores obtained using the three feature sets; the Fourier pair


TABLE III
NUMBERS OF PICTURES CORRECTLY CLASSIFIED USING EQUALIZED SINGLE FEATURES

Feature   Fourier features               Second-order statistics         Difference statistics
No.       Ring ∩ Wedge       Number      Distance  Direction  Number     Size  Direction  Number
1         (16,31) ∩ 0        10          1         0          17         1     0          19
2         (16,31) ∩ 45       19          √2        45         15         1     45         18
3         (16,31) ∩ 90       14          1         90         18         1     90         15
4         (16,31) ∩ 135      25          √2        135        19         1     135        20

(Number = number correctly classified, out of 54.)

(8,16)

18 15

17

2 2 2 2

4

15

6

7

(8,16)

(8,16)

45

90

17

14

2/2

2

45

90

45

90

14

16

(8,16)

(4,8)

135

20

12

2/2

4

135

0

20

22

135

0

17

17 16 12 20 11

18

10 11 12

(4,8)

45 90 135

0 45 90

15 15 17

7 17

3v/2

4

45 90 135

0

20 17 18

9

4 4 4

8 8

45 90 135

0

(4,8)

(4,8)

(2,4)

(2,4)

3/2

8

13

14 15

6/2

8

45

90

18

20

45

90

(2,4)

13 13

13 19

16

(2,4)

135

6/2

135

16

135

TABLE IV
BEST-PERFORMING PAIRS OF EQUALIZED FEATURES

Feature set               Feature pair                                            Number correctly classified
Fourier                   Ring (8,16) ∩ Wedge 45 and Ring (16,32) ∩ Wedge 45      38
                          Ring (8,16) ∩ Wedge 45 and Ring (16,32) ∩ Wedge 135     37
                          Ring (8,16) ∩ Wedge 135 and Ring (16,32) ∩ Wedge 135    36
                          Ring (4,8) ∩ Wedge 135 and Ring (16,32) ∩ Wedge 135     34
                          Ring (2,4) ∩ Wedge 135 and Ring (16,32) ∩ Wedge 135     34
Difference statistics     Size 2, Dir 135 and Size 4, Dir 135                     43
                          Size 1, Dir 135 and Size 2, Dir 135                     42
                          Size 1, Dir 45 and Size 4, Dir 45                       41
                          Size 1, Dir 45 and Size 2, Dir 45                       39

Second-order statistics (eight pairs; scores 40, 39, 38, 38, 38, 38, 38, 38). Features appearing in these pairs, pairing unassigned:
Dist √2, Dir 135; Dist 6√2, Dir 135; Dist √2, Dir 135; Dist 3√2, Dir 135; Dist 1, Dir 90; Dist 1, Dir 0; Dist 1, Dir 0; Dist 6√2, Dir 45; Dist 2√2, Dir 45; Dist √2, Dir 45; Dist 4, Dir 0; Dist 6√2, Dir 45; Dist 2, Dir 0; Dist 2, Dir 0; Dist 4, Dir 0; Dist 8, Dir 0

[Figure: histograms of feature pair scores. Panels: (a) Fourier; (b) Second-order; (c) Difference.]

In these histograms, one axis represents the score and the other axis the number of feature pairs which yielded that score.

The single features of all three types do about equally well (with the exception of an unusually good Fourier feature, already mentioned). There appears to be a bias in favor of diagonal directions; the best performing features (three out of four) [...] difference statistics [...] 135° direction; also, the top Fourier features are all in the 45° and 135° directions [...], which may be due to the fact that the distances in the diagonal directions are not quite the same as those in the horizontal and vertical directions. The horizontal direction is consistently poor for the Fourier features, and the vertical direction is not very good either, perhaps due to the spurious high values in those directions (resulting from the nonperiodicity of the pictures), which may mask the real textural differences.

Among the good feature pairs shown in Table IV, only the diagonal directions appear, for the Fourier features and [...] sizes is always small (1, $\sqrt{2}$, or 2).

These results suggest that we could also do well if we used certain combinations of our features as single, "higher order" features. For example, suppose that we computed the mean over the four directions, for each size or distance, and used these means as features; then a pair consisting of a small-size mean and a large-size mean should do well. On the other hand, if we computed means over the four sizes for each direction and used these means as features, we should not expect to do well. A supplementary study, based on such composite features, will now be described. This method of combining features was also used by Haralick in [1].

C. Study Using Composite Features

For each of the three sets of features used in Section III-B, a set of composite features was computed. This was done by taking the mean and standard deviation over the four directions, for each size (or distance, or spatial frequency band), and the mean and standard deviation over the four sizes, for each direction. [...] In what follows, the features of Section III-B will be referred to as raw features, in contrast with the composite features. [...] The classification results for the single composite features are shown in Table V. It is seen that the combined Fourier features do worse than the


TABLE V NUMBERS OF PICTURES CORRECTLY CLASSIFIED USING COMPOSITE SINGLE FEATURES

Feature No.

Fourier

Mean over sizes in direction 0

45

Second-order

16

19

Difference

1

2

8

13

17

13

16

23

3 4

90

135 S.D. over sizes in direction 0

9

14 9

17

22 14

5

6

17

45 90

135

16 13

14

19 20

16

23

18

7

8

12

Size 1: 17

Mean over directions

Ring (16,31): 18

Distance 1 or

/2:

16

10

Ring (8,16):

21 14

17

8

Distance 2

or2/2: 17

Size 2: 16

11

12

13 S.D. over directions

Ring (4,8):

Ring (2,4):

Ring (16,31):

Distance 4 or 3/2: 21

Distance 8 or 6/2: 14 Distance 1 or

Distance 2

Size 4: 18

Size 8: 20 Size 1: 17

Size 2: 15 Size 4: 14

/2:

16

19 15

14

15 16

Ring (8,16):

Ring (4,8):

9

10

or2/2:

Distance 4 or

Ring

(2,4):

14

Distance 8 or

3/2: 6/2:

16

Size 8: 13

raw features, while the combined second-order statistics do about as well as, and the combined difference statistics slightly better than, the corresponding raw features. For the latter two sets, the mean over sizes does surprisingly well, particularly in the 135 direction. Note that the combined mean Fourier features are similar to the Fourier features that were used in the first pilot study of Section III-A except that the wedges used are wider.

The results for pairs of composite features are given in Table 7 of [3]; the best few pairs in each feature set are shown for convenience in Table VI. Here the combined Fourier features and difference statistics both do somewhat worse than the corresponding raw features, while the combined second-order statistics do slightly better than the raw features. Histograms of the feature pair performances are shown in Fig. 3. It can be concluded that for the combined features too, the Fourier set is substantially worse than the other two sets.

It will be noted that in the best feature pairs, one feature is almost always a mean over directions, confirming once again that size is more important than direction. Moreover, one of the sizes in each best feature pair is always the smallest or next smallest size that was used. When a mean or standard deviation (SD) over sizes is present, the direction is always diagonal, as before. These conclusions are similar to those discussed in Section III-B. There is no apparent advantage in using combined rather than raw features.
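The composite features discussed here can be sketched in a few lines. The following is a minimal illustration, not the authors' program: it assumes the 16 raw features of one set are arranged as a 4 by 4 array with rows indexed by size (or distance, or ring) and columns by direction, and it orders the 16 composite features as in Table V (means over sizes, S.D.s over sizes, means over directions, S.D.s over directions). The function name `composite_features` and the use of the population standard deviation are assumptions made for the example.

```python
import numpy as np

def composite_features(raw):
    """Build 16 composite features from a 4x4 array of raw features.

    Assumed layout (hypothetical): raw[s, d] is the raw feature for
    size index s (rows) and direction index d (columns: 0, 45, 90, 135).
    """
    raw = np.asarray(raw, dtype=float)
    if raw.shape != (4, 4):
        raise ValueError("expected a 4x4 array (sizes x directions)")
    mean_over_sizes = raw.mean(axis=0)       # one value per direction
    sd_over_sizes = raw.std(axis=0)          # population S.D. (assumption)
    mean_over_directions = raw.mean(axis=1)  # one value per size
    sd_over_directions = raw.std(axis=1)
    return np.concatenate([
        mean_over_sizes,        # composite features 1-4
        sd_over_sizes,          # composite features 5-8
        mean_over_directions,   # composite features 9-12
        sd_over_directions,     # composite features 13-16
    ])

# Toy input: raw feature values 0..15, for illustration only.
example = composite_features(np.arange(16).reshape(4, 4))
```

A mean over directions keeps the size information and discards orientation, which is the kind of composite feature that appears in nearly all of the best pairs.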

D. Analysis of the Errors

In many cases, the errors made in classifying the pictures show consistent patterns; a particular picture is often misclassified in the same way by many different feature sets. In fact, inspection of the pictures in Fig. 1 indicates that this result should not be surprising, since some of the pictures do not closely resemble the other pictures in their classes. For example, the last picture in the urban class has more detail in it than the other five pictures, the last two marsh pictures are more finely textured than the first four, and so on. A detailed analysis of how the individual pictures were misclassified is beyond the scope of this paper. For a list of pictures most often misclassified by the best feature pairs see [3], Table 9. Appendix A of [3] explores how well the pictures in each class are clustered. This was investigated by plotting, for each class, a one-parameter family of feature values (specifically, the Fourier ring features for rings (1,1), (2,2), ..., (33,33)) for each picture in the class; clustering or dispersedness is more apparent for these plotted curves than it would be for single-point feature values.

E. Discussion

The studies described in this section involved a very small data set, which was used because similar pictures had previously been analyzed by Haralick (see [1]). Unfortunately, the smallness of the data set makes any conclusions drawn from these studies tentative at best. Since there were only six samples in each of the nine classes, no attempt was made to use more than two features at a time. (Even with only two features, it is possible that the results are influenced by artifacts in the samples; e.g., why is 135° generally better than 45°?) For similar reasons, it seemed inappropriate to use a higher-order classifier than the Fisher linear discriminant. Division into test and training sets would not have been reasonable, and the computational


TABLE VI BEST-PERFORMING PAIRS OF COMPOSITE FEATURES

Number correctly classified

Feature set

Fourier

Feature pair

Mean over dirs.,ring (2,4), and mean over

dirs., ring (16,31)

34

33

mean over dirs.,ring (8,16), and mean over sizes, dir. 45

mean over dirs.,ring (4,8), and mean over dirs., ring (16,31)

Mean over dirs.,ring (8,16), and mean over dirs., ring (16,31)

32

31

31

Mean

sizes, dir. 135

over dirs.,ring (8,16), and mean over

Mean over dirs.,ring (8,16), and S.D. over sizes, dir. 135

Mean over dirs.,ring (16,31), and S.D. over sizes, dir. 45

31

31

order

Second-

Mean over dirs., dist. 2 or 217, and mean

over dirs., dist. 4 or 317

Mean over dirs., dist. 1 or 17, and mean

42

dire., diet 8 or 6/1 Mean over dists. ,dir. 45, and dire., diet. 1 or 17

over

41

man over

40

39 39

Fig. 4. LANDSAT image used in main study, with regions outlined. A-Mississippian limestone and shale. B-Lower Pennsylvanian shale. C-Pennsylvanian sandstone and shale.

S.D. over dists., dir 45, and mean over dirs., dist. 1 or 17 S.D. over dists., dir. 135, and S.D. over dirs., dist. 1 or 17

Mean over dirs., 4 dist.317 or 17, and mean over dire., diet. or 1 S.D. over dirs., dist. 1 or 17, and S.D. over dirs., dist. 2 or 217

Difference

Mean over dirs., size 1, and S.D. over dirs.,

39

39

size 1

1

40

two of the classes, scrub and woods. In our study, we used only 16 features (of each type), and obtained nearly 80 percent correct classifications with the best pairs of those, using all nine classes. It is difficult to estimate how much worse our results would have been if we had used a leave-one-out testing scheme; but under the circumstances, our results can be regarded as reasonably good.

Meaniover dirs.,

size

size 1, and man over dirs.

39

38

Mean over sizes, dir. 135, and mean over dirs.,

IV. MAIN STUDY

A. Data and Features Used

Mean over sizes, dir. 45, and mean over dirs.,

Mean ov-r dirs., size 1, and mean over size 2 Mean over dire., size 2, and mean over

size 1

38

38

dire.,

dire.,

38

The terrain samples used in the main study were selected from a LANDSAT-1 image of Eastern Kentucky (frame E 1354-15424, band 6), which was made available to us by

cost of a leave-one-out testing scheme would have been prohibitive. In spite of these inadequacies, the comparative results obtained from these studies may still be of some value. These results suggest the following tentative conclusions.

1) Fourier features do not do as well as second-order or difference statistics (except on the first pilot study).²
2) Difference statistics do about as well as second-order statistics (better, on the first pilot study).
3) Composite features seem to do no better than raw features.

The first two conclusions are substantiated by the results of the main study, which is described in Section IV. In [1], using 170 terrain samples of the same types and a leave-one-out test procedure, Haralick obtained 82.3 percent correct classifications; but he used 33 composite features (means, ranges, and standard deviations over the four directions, derived from an initial set of 44 second-order statistics, 11 in each direction at distance 1). He also merged

² The second-order and difference statistics are also less costly to compute than the Fourier features, which is a further argument in their favor.

the Earth Satellite Corporation; see Fig. 4. A geologist, Dr. John R. Everett of EarthSat, demarcated a set of regions on this image, as shown on Fig. 4, and identified these regions as having three geological terrain types: Mississippian limestone and shale; Lower Pennsylvanian shale; and Pennsylvanian sandstone and shale (labeled A, B, C on Fig. 4). A set of 60 windows, each 64 by 64 pixels, was selected from each of the three regions. The image gray-scale was modified to cover just 64 gray levels, and histogram flattening was performed on each of these 180 windows. The resulting normalized windows are shown in Figs. 5-7.

Seven sets of 16 features each, equalized in size and orientation sensitivity, were used. Each set involved four sizes (or displacements, or spatial frequency bands), and four directions, just as in Section III-B; these are listed, for convenience, in Table VII. The Fourier feature set was the same as in Section III-B. There were two feature sets involving second-order statistics, using the CON feature for both the single-point matrices $M_\delta$ and the average-based matrices $\bar{M}_\delta$. Finally, there were four feature sets involving difference statistics; these used the CON and MEAN features for the $p_\delta$ and for the $\bar{p}_\delta$. Note that for distance 1, the $M_\delta$ and $\bar{M}_\delta$ features are the same, and the $p_\delta$ and $\bar{p}_\delta$ features are the same. The Fisher classification scheme was used, just as in Section III.
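The normalization step (64 gray levels, histogram flattening per window) can be illustrated as follows. This is only a sketch under stated assumptions: the paper does not say how ties between equal gray levels were split, so the hypothetical helper `flatten_histogram` below uses a rank-based assignment in which ties are broken by pixel order. For a 64 by 64 window and 64 output levels this gives exactly 64 pixels per level.

```python
import numpy as np

def flatten_histogram(window, levels=64):
    """Rank-based histogram flattening (illustrative, not the authors' code).

    Pixels are ranked from darkest to brightest and the ranks are split
    into `levels` equally populated groups. Ties are broken by pixel
    order through a stable sort, which is an assumption.
    """
    window = np.asarray(window)
    flat = window.ravel()
    order = np.argsort(flat, kind="stable")      # darkest pixel first
    ranks = np.empty(flat.size, dtype=np.int64)
    ranks[order] = np.arange(flat.size)          # rank of every pixel
    out = (ranks * levels) // flat.size          # level in 0..levels-1
    return out.reshape(window.shape)

# Synthetic 64x64 window with 256 input levels, for illustration only.
rng = np.random.default_rng(0)
window = rng.integers(0, 256, size=(64, 64))
flattened = flatten_histogram(window, levels=64)
counts = np.bincount(flattened.ravel(), minlength=64)
```

After flattening, every window has the same first-order gray level distribution, so any remaining discrimination between classes must come from the spatial arrangement of gray levels, which is what the texture features measure.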


Fig. 5. Windows of terrain type A (Mississippian limestone and shale). Panels: Windows 1-16, 17-32, 33-48, 49-60.

Fig. 6. Windows of terrain type B (Lower Pennsylvanian shale). Panels: Windows 1-16, 17-32, 33-48, 49-60.

Fig. 7. Windows of terrain type C (Pennsylvanian sandstone and shale). Panels: Windows 1-16, 17-32, 33-48, 49-60.

TABLE VII FEATURE NUMBERING

No.    Size, displacement, or spatial frequency band    Direction (degrees)
 1     1                                                  0
 2     1                                                 45
 3     1                                                 90
 4     1                                                135
 5     2                                                  0
 6     2                                                 45
 7     2                                                 90
 8     2                                                135
 9     4                                                  0
10     4                                                 45
11     4                                                 90
12     4                                                135
13     8                                                  0
14     8                                                 45
15     8                                                 90
16     8                                                135

Notes: Sizes 1, 2, 4, 8 and displacements 1, 2, 4, 8 (or √2, 2√2, 3√2, 6√2) correspond to spatial frequency bands (r1, r2) = (16, 31), (8, 16), (4, 8), and (2, 4). Directions 0, 45, 90, 135 are the centers of the spatial frequency sectors.

B. Results

The 180 terrain samples shown in Figs. 5-7 were classified into the three classes, using each of the 16 individual features in each set, and also using each of the 120 pairs of features in each set. The numbers of samples classified correctly by the individual features are shown in Table VIII,


TABLE VIII NUMBERS OF SAMPLES CORRECTLY CLASSIFIED USING INDIVIDUAL FEATURES

FEATURE SET Feature No.

1 2 3 4

Fourier

130 117 116 133 108 81 92 102 94 94 111 101

Second-order

(points)

131 123 136

Second-order

(averages)

131 123 136 128 101 86 112 89

Difference (points) CON MEAN

130 122 135 128 133 108 128 120 132 123 136 128 126 110 126 119

Difference (averages) CON MEAN

130 122 135 128 105 106 116 123 132 123 136 128 101 87 112 91

5 6 7 8 9 10 11 12 13 14 15 16

128 126 110 126 119 99 92 113 99

116 106 117 112

99

97 108 100

99

92 113 99

115

105 108 109

117

106 116 112

77 95 90 86

99 68 82 80

107 117 116 107

100 71 79 77

99 68 82 80

107 108 108 103

107 117 116 108

and the numbers correctly classified by the best feature pairs are shown, for each feature set, in Table IX. (See Tables 3-9 of [4] for the details.) The following remarks can be made about these results.

1) The best results obtained using each feature set always come from feature pairs involving small sizes (1, √2, or 2). Feature pair (1,3), i.e., size 1, horizontal direction, paired with size 1, vertical, occurs as one of the three best-scoring pairs for each of the seven sets.
2) The Fourier features do not do quite as well as the other types of feature pairs. See Section IV-E for a discussion of possible reasons for this.
3) Statistical features (MEAN or CON) based on average gray levels ($\bar{M}_\delta$ or $\bar{p}_\delta$) do better, especially for large sizes, than those based on single points ($M_\delta$ or $p_\delta$); see Tables 3-9 of [4].
4) The MEAN features, derived from the difference histograms, do at least as well as the CON features derived from either the difference histograms or the cooccurrence matrices. Since these MEAN features are computationally cheapest to implement, they would seem to be the preferred choice for the present classification problem.

All of the statistical features did about equally well; the best single feature in each set correctly classified 135 or 136 of the 180 samples (about 75 percent), while the best feature pair in each set correctly classified 165, 166, or 167 of the samples (about 93 percent). These results were not verified by independent testing (i.e., the classifier was designed and tested on the same sample set), but since such a large number of training samples was used ((samples per class)/(number of features) = 30), this should make little difference.
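The bookkeeping behind these remarks is easy to check. The sketch below assumes the Table VII numbering runs through the four directions within each size, which is consistent with feature pair (1,3) being size 1 horizontal paired with size 1 vertical. The names `feature_params` and `percent_correct` are hypothetical helpers introduced only for this illustration.

```python
from itertools import combinations

SIZES = (1, 2, 4, 8)             # sizes / displacements / frequency bands
DIRECTIONS = (0, 45, 90, 135)    # degrees

def feature_params(number):
    """Map a feature number (1..16) to (size, direction).

    Assumes sizes vary slowest and directions fastest, as inferred
    from the text's description of feature pair (1,3).
    """
    if not 1 <= number <= 16:
        raise ValueError("feature number must be in 1..16")
    size_idx, dir_idx = divmod(number - 1, 4)
    return SIZES[size_idx], DIRECTIONS[dir_idx]

def percent_correct(n_correct, n_samples=180):
    """Percentage of the samples classified correctly."""
    return 100.0 * n_correct / n_samples

# All unordered pairs of the 16 features in one set.
pairs = list(combinations(range(1, 17), 2))

print(len(pairs))                              # number of feature pairs
print(feature_params(1), feature_params(3))    # the pair (1,3)
print(percent_correct(135), round(percent_correct(167), 1))
print(60 / 2)                                  # samples per class / number of features
```

With 16 features there are 16 × 15 / 2 = 120 unordered pairs, which matches the count given in the text.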

C. Analysis of the Errors

The errors made by the highest-scoring feature pairs in each set are given in Tables 10-12 of [4]; the details are beyond the scope of this paper. It was found that the samples misclassified by one pair were often misclassified by other pairs as well. Often, but not always, these samples did appear to differ visually from the general appearance of their classes. A geologist and a naive subject were asked to classify the 180 samples, presented in random order and out of context, into three classes. The geologist got 143 of the 180 correct, while the naive subject got 145 correct. (The errors made by each of them are listed in Table 13 of [4].) There was only partial overlap among the misclassifications made by the features, the naive subject, and the geologist. Note, however, that both subjects made about 20 percent errors, which is poorer performance than that of even the good Fourier feature pairs.

D. Supplemental Study

As a check on the results of the main study, a supplemental study was conducted using the ASM and ENT features (see Sections II-B and II-C) for the same set of $M_\delta$ and $\bar{M}_\delta$ cooccurrence matrices, and $p_\delta$ and $\bar{p}_\delta$ difference histograms. Another feature proposed by Haralick [1], the "Inverse Difference Moment" $\mathrm{IDM} = \sum_{i,j} p(i,j)/((i - j)^2 + 1)$, or $\sum_k p_\delta(k)/(k^2 + 1)$ for the difference histograms, was also tested. These twelve sets of features were computed for the same 16 values of $\delta$ indicated in Table VII, and the classification scheme of Section II-E was used, as in the main study, to classify the 180 terrain samples into the three classes, using each single feature and each pair of features in each set. The single-feature classification results are given in Table X. The feature pairs giving the best scores are listed in Table XI; for the detailed scores of the feature pairs see [5, Tables 2-13]. For convenience, the maximum, mean, median, and minimum scores of the single features in all 18 feature sets (the 12 new sets, plus the six non-Fourier sets used in the main study) are summarized in Table XII,


TABLE IX BEST-PERFORMING PAIRS OF FOURIER, CON, AND MEAN FEATURES

Feature set

Feature pair

Number correctly classified

158

153

Fourier

Ring (16,31) n Wedge

Ring (16,31) n Wedge

0

0

and

and

Ring (16,31) n Wedge 135

Ring (16,31) n Wedge

90

Ring (16,31)

n Wedge 45

and

Ring ( 8,16) n Wedge 2, Dir

0

90

151

Second-order CON, single

Dist /2, Dir

Dist

1, Dir

45

and and and and

Dist

Dist

166

166

points

90

0

0

/4,

Dir 135

Dist

Dist

1, Dir

1, Dir

Dist /2, Dir

Dist

1, Dir

45

90

163

163

Dist

1, Dir 1, Dir 1, Dir

1, Dir

90 90 90

0

and

and

Dist

Size

2, Dir

16.0

166

CON, averages

Second-order

Size

1, Dir 135

Size

Size Size

and

and

Size

Size

1, Dir

1, Dir

45

90

163

163

1, Dir 135

45 90 0

and

and and and

Size

Dist

2, Dir

2, Dir

0

0

158

166

Difference CON, single points

Dist /2, Dir

Dist Dist

1, Dir 1, Dir

Dist /2, Dir 135 Dist /2, Dir

45 90

0

166

162

Dist

Dist

1, Dir

1, Dir

1, Dir 1, Dir

0

90

and

and

Dist

Dist

1, Dir

2, Dir

162

160

Difference CON,

averages

Size

Size

90

0

and and

Size

Size

1, Dir 135 1, Dir

45

166 162

Size

Size

1, Dir

and

and

Size Size

Dist

1, Dir 2, Dir

2, Dir

90

0

162

158

167

165 164

1, Dir 135

MEAN, single points

Difference

Dist Dist Dist Dist

/1,

Dir

45 0 0 90

0

and

and and and and

0

90

45

1, Dir

1, Dir 1, Dir 1, Dir

Dist

1, Dir

Dist /2, Dir

Dist

Size

/r,

Dir 135

45

164

165

MEAN, averages

Difference

Size

Size

1, Dir

1, Dir 1, Dir

90

0

and

Size Size

1, Dir 135

1, Dir

164

163

Size

and

90

and a similar summary for the feature pairs is given in Table XIII. The following may be seen from these tables.

1) Like the CON features of [1], the best-scoring pairs of IDM and ENT features do about as well when measured for the difference histograms as when measured for the cooccurrence matrices; while the best ASM features (singles and pairs) do even better on the histograms than on the matrices.
2) The IDM features do more poorly than the other sets. This may be because the IDM features place greatest weight on pairs of gray levels (or averages) that are (nearly) equal, and least on pairs that are very unequal. Even in "busy" textures (short of pure "noise" textures), there will be some redundancy between the gray levels of nearby points; thus the matrix or histogram values for nearly equal pairs, or nearly zero differences, will always tend to be higher than those for unequal pairs or large differences. The distinction between busy and coarse textures will be more apparent at the very unequal or large difference end of the scale. This end is emphasized by the CON features, but it is deemphasized by the IDM features. This suggests that in many cases the CON features will perform better than the IDM features.
3) The worst features based on average gray levels do better than the worst features based on single-point gray levels for both single features and pairs, as already observed in Section IV-B.

It was not surprising in the main study that the CON features based on difference histograms did as well as those based on cooccurrence matrices, since the CON feature for a


TABLE X NUMBERS OF SAMPLES CORRECTLY CLASSIFIED USING INDIVIDUAL ENT, ASM, AND IDM FEATURES

SECOND-ORDER STATISTICS

FEATURE

NO.

1 2

3 4

(points)

130

118 136 124

ENT

(averages)

130

ENT

(points)

124

ASM

(averages)

124

ASM

IDM (Points)

113 112

(averages)

113

IDM

118

136

124

125 126 127

125

126 127 91 82 90 95

112

113

113 123 120

98 106 99 81 84

84

123

5 6 7 8 9

120

111

99

113

115

110

97

86

116

113

75

95

99 124

113

115

106

98

94

78 91

104

126

10

11

91

95

133

135

129

131

81

84

12 13

14

15

87

68 74

131

116 113

93

67 74

127

111 110

86

75 63

87

102

101

79

110

78 69

118 111

69 59

85 93

16

71

113

DIFFERENCE STATISTICS

FEATURE

NO.

ENT (plt)

(averages)

127

ENT

ASM (pit)

(averages)

127

ASM

(pit)

IDM

113

(averages)

113

IDM

1

2

127 126 133

127

127

126

133

124

137

124

137 126 106 94

112

113 123 121 99

112

113 123 103 87

3

4 5

127

126 130

127

97 85

6

7 8

108 127 118 93 96

107

129

115

101 103

109

90 117

108

112 105

118 109

99

99 93

106 72

106

85 89

9 10

11

12

110

99

97 69 79 74

115

112 111 114

109 102 104

71

104

111

81

88

78

85

94 99 85

94

13

108

115

77

66

14

15

119

109

77 76

110

72

62

16

109

matrix depends only on the spread of its values away from the main diagonal; but it is interesting that the same results were obtained for the ASM and ENT features.
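The relationship between matrix-based and histogram-based features can be checked numerically. The sketch below is illustrative only and rests on several assumptions: the cooccurrence matrix is built for a single non-negative displacement and is not symmetrized, ASM is taken as the sum of squared entries, ENT as $-\sum p \log p$ with natural logarithms, and all function names are hypothetical. It shows that CON and IDM, which depend only on $|i - j|$, take identical values on a matrix and on its difference histogram, whereas ASM and ENT generally do not.

```python
import numpy as np

def cooccurrence(img, dx, dy, levels):
    """Normalized gray level cooccurrence matrix for displacement (dx, dy).

    Assumes dx, dy >= 0 and integer gray levels in 0..levels-1.
    """
    h, w = img.shape
    a = img[0:h - dy, 0:w - dx]          # first point of each pair
    b = img[dy:h, dx:w]                  # second point of each pair
    m = np.zeros((levels, levels))
    np.add.at(m, (a.ravel(), b.ravel()), 1.0)
    return m / m.sum()

def difference_histogram(m):
    """Collapse a cooccurrence matrix onto absolute differences k = |i - j|."""
    i, j = np.indices(m.shape)
    return np.bincount(np.abs(i - j).ravel(), weights=m.ravel(),
                       minlength=m.shape[0])

def contrast_from_matrix(m):
    i, j = np.indices(m.shape)
    return float(((i - j) ** 2 * m).sum())

def contrast_from_histogram(p):
    k = np.arange(p.size)
    return float((k ** 2 * p).sum())

def idm_from_matrix(m):
    i, j = np.indices(m.shape)
    return float((m / ((i - j) ** 2 + 1)).sum())

def idm_from_histogram(p):
    k = np.arange(p.size)
    return float((p / (k ** 2 + 1)).sum())

def asm(p):
    """Angular second moment: sum of squared probabilities."""
    return float((p ** 2).sum())

def ent(p):
    """Entropy with natural logarithm; zero entries contribute nothing."""
    nz = p[p > 0]
    return float(-(nz * np.log(nz)).sum())

# Synthetic image with 8 gray levels, for illustration only.
rng = np.random.default_rng(0)
img = rng.integers(0, 8, size=(16, 16))
m = cooccurrence(img, dx=1, dy=0, levels=8)
p = difference_histogram(m)
same_con = np.isclose(contrast_from_matrix(m), contrast_from_histogram(p))
same_idm = np.isclose(idm_from_matrix(m), idm_from_histogram(p))
```

Because ASM and ENT are computed from the individual probabilities rather than from $|i - j|$ alone, collapsing the matrix into a histogram changes their values, which is why comparable classification scores for these features are the more interesting finding.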

E. Conclusions

The following conclusions can be drawn from the main study; they confirm the tentative conclusions of Section III-E (except for the conclusion about composite features, which were not used in the main study).

1) Good classification results (over 90 percent correct) were obtained on terrain samples representing three geological classes. This confirms the general usefulness of texture features, even in the absence of spectral information, for terrain classification [6].


TABLE XI BEST-PERFORMING PAIRS OF ENT, ASM, AND IDM FEATURES

Feature set

Feature pair

Number correctly classified

Second-order ENT, single

Dist

1, Dir

0

0

and

and and

Dist

1, Dir

90

45

163

points

Dist

Dist

1, Dir 1, Dir 1, Dir

1, Dir

Dist /T, Dir

160 156 163 161

90

0

Dist /T, Dir 135 Size Size

1, Dir

90

ENT, averages

Second-order

Size

Size Size

and and

and

and

90

0

4, Dir

1, Dir

45 45

1, Dir

1, Dir

Size

Dist

Dist

160 164

163

Difference ENT, single points

Dist

90 0

VT,

Dir 135

45

Dist

Dist

1, Dir

and

and

Dist /, Dir

1, Dir

1, Dir

I

45

1, Dir

2, Dir

2, Dir

90

0

0

163 163

159

Dist /2, Dir

Dist

and

and

Dist

Dist

91

90

0

Difference ENT,

averages

Size

Size

1, Dir 1, Dir

and

and and and and and

and

Size Size Size Size

Size

1, Dir 135 1, Dir

45. 90

0

164

163 163

156 156

Size

Size

Size

1, Dir

1, Dir 1, Dir

1, Dir

2, Dir 2, Dir 2, Dir

90

0

0 45

Size

Second-order ASM, single

1, Dir 135

1, Dir 1, Dir 1, Dir

0

Size

156

158

158 152 151

158

Dist Dist

Dist

Dist VT, Dir

Dist

Dist Dist

Size

points

0 90

45

0

and and

and and

1, Dir 2, Dir

2, Dir 1, Dir

90

0

0 45

Dist /2, Dir

Second-order ASM, averages

Size

1, Dir

Size

Size

1, Dir

2, Dir

0

0

and

and

Size

Size

1, Dir

4, Dir

90

90

158

154

Size Size

1, Dir

90 0

45

and

Size

1, Dir 135

150

2, Dir

and

and

Size

Dist

4, Dir

2, Dir

0

0

150

167

Difference ASM, single points

Dist /, Dir

Dist

1, Dir

0

0 90

and

Dist /2, Dir Dist

1, Dir

45

90

165

165

161

Dist

Dist Dist

1, Dir

1, Dir

and

and

Dist /2, Dir 135

1, Dir

90

and and

and

Dist

2, Dir

161 161

165 165 161 160 140 140 133

Dist

1, Dir

1, Dir 1, Dir 1, Dir

90

0 0

Dist /2, Dir 135

Size Size

1, Dir 1, Dir

Difference ASM,

averages

Size Size Size Size

45

90

and and and

and

90

45 0

90

Size Size

Dist

1, Dir 135

1, Dir

1, Dir

2, Dir

1, Dir

IDM, single points

Second-order

Dist

Dist

90

0

1, Dir

and and and and and

Dist

Dist

2, Dir

Dist

Dist

2, Dir

1, Dir

0 90 45 90

Dist 2/2, Dir

45

2/F,

/f,

Dir 135

0

133 132

132

Dist Dist Dist

/2,

Dir

Dist

Dist

2, Dir

1, Dir

Dir 135

0

1, Dir

1, Dir

90

0

and

and and and and

and and and and and

Dist

Size

4, Dir

1, Dir

132 140

140 140 138 134 141

140

Second-order IDM, averages

Size Size Size

Size Size

90

0

1, Dir 1, Dir

1, Dir 1, Dir

1, Dir

45 90

Size Size

Size Size Dist

2, Dir 2, Dir

2, Dir

0 0 45

0 90

1, Dir 135 90

90 0 45

8, Dir

2, Dir 1, Dir

Difference IDM, single points

Dist

Dist

Diet

Dist

Size Size Size

Dist */2, Dir

Difference 1DM, average s

Size 1, Dir

2, Dir

1, Dir 2, Dir 8, Dir

0

90

135

140

0 90

Size

Size

1, Dir

and

and

0

45

139 137

1, Dir 135


TABLE XII MAXIMUM, MEAN, MEDIAN, AND MINIMUM SCORES FOR THE 16 SINGLE FEATURES IN EACH SET

The four scores in each box are the maximum, mean, median, and minimum scores for that set of single features

SECOND-ORDER STATISTICS

DIFFERENCE STATISTICS

Single Points

136 100 95 68

127 101 104 67 123 93 86

Averages

Single Points

133 107 108 69

137 109 107 71 123 94 89 62 136

Averages

ENT

136 116 116 86

131 114 118 82 123 100 98 81 136

133 112 112 85

137 110 94

ASM

113

1DM

59

136

CON CON

123 97 94 72

136

~~~~110

68

108

113 112 86

108 110 68

113 112 87 135 114 108 103

MEAN

135 108 108 71

TABLE XIII MAXIMUM, MEAN, MEDIAN, AND MINIMUM SCORES FOR THE 120 PAIRS OF FEATURES IN EACH SET

The four scores in each box are the maximum, mean, median, and minimum scores for that set of feature pairs

Single Points

163 119

SECOND-ORDER STATISTICS

Averages

Single

DIFFERENCE STATISTICS

Points

ENT ENT

~~~~~123 74

158 118

122 70

163 138 138 105

158 133

164 129 129 79

167 131

164 134 134 99

165 133

ASM

134

131

131

97 140 115

115

80 141 111

114

108

IDM 1DM

~~~~~109 113

140 62 166 130

140 113

114

82

69

82

CON

_

129

166 134

134

72

96

166 166 134 130 130 n134

72 96

MEAN

167 131 131

73

165 135 133

115


2) Features based on second-order and difference statistics do about equally well (except for IDM) and perform somewhat better than Fourier features. Two reasons can be given for the poorer performance of the Fourier features.

a) The discrete Fourier transform treats a picture as though it is periodic, even if, in fact, it is not. Thus the transforms of the terrain samples contain spurious high values in the horizontal and vertical directions, arising from "discontinuities" between the left and right columns, and the top and bottom rows, of the pictures. The presence of these spurious values may degrade the Fourier features.

b) The textures of the terrain samples may be more appropriately modeled statistically in the space domain (e.g., as random fields with specified autocorrelations), rather than as sums of sinusoids. Thus our statistical features may capture the essential differences among the samples more effectively than do the Fourier features.

3) Statistics based on gray level averages gave better performance for larger sizes and distances than statistics based on single gray levels. This is presumably because at large distances, the single gray levels are relatively uncorrelated, so that the data become noisy; whereas the averages remain correlated, since they arise from adjacent neighborhoods.

4) The MEANs of the difference histograms did about as well as the other statistical features (CON, ENT, ASM) measured on either the difference histograms or the cooccurrence matrices. For the best feature pairs, the statistics based on single points did about as well as those based on averages.³ Thus it seems that there should be no loss in classification power if one uses the computationally cheapest of the statistical features, namely, the MEANs of the single-point difference histograms.

The feature sets used in these studies were not necessarily the best ones of each class. Other approaches to texture analysis exist, e.g., Bajcsy's [7] Fourier-based features. However, it was felt that in a comparative study, it was desirable to use equalized feature sets. It is hoped that our results will encourage others to carry out further comparative studies, which should lead to an increased understanding of the nature of visual texture and the choice of features for texture classification.

³ This is because the best pairs almost always involved small displacements, for which little or no averaging was done.

ACKNOWLEDGMENTS

The authors wish to thank Prof. R. M. Haralick of the University of Kansas for providing the aerial photographic terrain samples; Mr. R. Michael Hord and Dr. John R. Everett of the Earth Satellite Corp., for providing and classifying the LANDSAT data; Prof. A. K. Agrawala of the University of Maryland, for providing the Fisher classification program; Eileen J. Carton, Robert L. Kirby, and Jeffrey M. Mohr, for conducting some of the classification studies; and Donald Kent, Andrew Pilipchuk, and Shelly Rowe, for their help in preparing this paper.

REFERENCES

[1] R. M. Haralick, K. Shanmugam, and I. Dinstein, "Textural features for image classification," IEEE Trans. Syst., Man, Cybern., vol. SMC-3, pp. 610-621, Nov. 1973.
[2] M. M. Galloway, "Texture classification using gray level run lengths," Computer Graphics and Image Processing, vol. 4, pp. 172-179, June 1975.
[3] J. S. Weszka and A. Rosenfeld, "A comparative study of texture measures for terrain classification," Computer Science Center, Univ. Md., College Park, Tech. Rep. TR-361, Mar. 1975.
[4] C. R. Dyer, J. S. Weszka, and A. Rosenfeld, "Experiments in terrain classification on LANDSAT imagery by texture analysis," Computer Science Center, Univ. Md., College Park, Tech. Rep. TR-383, June 1975.
[5] C. R. Dyer, J. S. Weszka, and A. Rosenfeld, "Further experiments in terrain classification by texture analysis," Computer Science Center, Univ. Md., College Park, Tech. Rep. TR-417, Sept. 1975.
[6] R. M. Haralick and R. Bosley, "Spectral and textural processing of ERTS imagery," in Proc. 3rd ERTS-1 Symp., vol. I, pp. 1929-1969, Dec. 1973.
[7] R. Bajcsy, "Computer description of textured surfaces," in Proc. 3rd Int. Joint Conf. Artificial Intelligence, Aug. 1973, pp. 572-579.


- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- BCSL056Document25 pagesBCSL056r06677624No ratings yet

- Juno Lighting Vector Track Lights Brochure 1995Document12 pagesJuno Lighting Vector Track Lights Brochure 1995Alan MastersNo ratings yet

- Cô MAI PHƯƠNG (Chủ đề SCIENCE AND TECHNOLOGY)Document2 pagesCô MAI PHƯƠNG (Chủ đề SCIENCE AND TECHNOLOGY)Giang VũNo ratings yet

- DM - Disconnection Processing Variants and Device RemovalDocument8 pagesDM - Disconnection Processing Variants and Device RemovalMonis ShakeelNo ratings yet

- Facebook APIDocument8 pagesFacebook APITricahyadi WitdanaNo ratings yet

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- Golden Software Voxler v4 - User's Guide (Voxler4UserGuide-eBook)Document970 pagesGolden Software Voxler v4 - User's Guide (Voxler4UserGuide-eBook)wantssomebook100% (1)

- Setting Up SITL On Windows - Dev DocumentationDocument13 pagesSetting Up SITL On Windows - Dev Documentationsfsdf ısıoefıoqNo ratings yet

- WebNMS Technical Guide PDFDocument2,204 pagesWebNMS Technical Guide PDFMuhamad Fauzi HusseinNo ratings yet

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- Apis Iq Software Installation v70 enDocument17 pagesApis Iq Software Installation v70 enЕвгений БульбаNo ratings yet

- Q 1. Discuss Design Specification of A Assembler With Diagram? AnsDocument9 pagesQ 1. Discuss Design Specification of A Assembler With Diagram? AnsSurendra Singh ChauhanNo ratings yet

- Cs201 SetsDocument38 pagesCs201 SetsMaricel D. RanjoNo ratings yet

- Cmip9c82w 28sd 4g SpecDocument5 pagesCmip9c82w 28sd 4g SpecOscar QuiñonezNo ratings yet

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (120)

- Addressing Modes and Instructions in 8085Document18 pagesAddressing Modes and Instructions in 8085Ishmeet KaurNo ratings yet

- HBQT - The Tutorial About Harbour and QT Programming - by Giovanni Di MariaDocument195 pagesHBQT - The Tutorial About Harbour and QT Programming - by Giovanni Di Mariajoe_bernardesNo ratings yet

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)