Education and debate

Human error: models and management

James Reason

The human error problem can be viewed in two ways: the person approach and the system approach. Each has its model of error causation and each model gives rise to quite different philosophies of error management. Understanding these differences has important practical implications for coping with the ever present risk of mishaps in clinical practice.

Department of Psychology, University of Manchester, Manchester M13 9PL. James Reason, professor of psychology. reason@psy.man.ac.uk

BMJ 2000;320:768-70

Summary points

- Two approaches to the problem of human fallibility exist: the person and the system approaches
- The person approach focuses on the errors of individuals, blaming them for forgetfulness, inattention, or moral weakness
- The system approach concentrates on the conditions under which individuals work and tries to build defences to avert errors or mitigate their effects
- High reliability organisations, which have less than their fair share of accidents, recognise that human variability is a force to harness in averting errors, but they work hard to focus that variability and are constantly preoccupied with the possibility of failure

Person approach

The longstanding and widespread tradition of the person approach focuses on the unsafe acts (errors and procedural violations) of people at the sharp end: nurses, physicians, surgeons, anaesthetists, pharmacists, and the like. It views these unsafe acts as arising primarily from aberrant mental processes such as forgetfulness, inattention, poor motivation, carelessness, negligence, and recklessness. Naturally enough, the associated countermeasures are directed mainly at reducing unwanted variability in human behaviour. These methods include poster campaigns that appeal to people's sense of fear, writing another procedure (or adding to existing ones), disciplinary measures, threat of litigation, retraining, naming, blaming, and shaming. Followers of this approach tend to treat errors as moral issues, assuming that bad things happen to bad people: what psychologists have called the just world hypothesis.1

System approach

The basic premise in the system approach is that humans are fallible and errors are to be expected, even in the best organisations. Errors are seen as consequences rather than causes, having their origins not so much in the perversity of human nature as in upstream systemic factors. These include recurrent error traps in the workplace and the organisational processes that give rise to them. Countermeasures are based on the assumption that though we cannot change the human condition, we can change the conditions under which humans work. A central idea is that of system defences. All hazardous technologies possess barriers and safeguards. When an adverse event occurs, the important issue is not who blundered, but how and why the defences failed.

Evaluating the person approach

The person approach remains the dominant tradition in medicine, as elsewhere. From some perspectives it has much to commend it. Blaming individuals is emotionally more satisfying than targeting institutions. People are viewed as free agents capable of choosing between safe and unsafe modes of behaviour. If something goes wrong, it seems obvious that an individual (or group of individuals) must have been responsible. Seeking as far as possible to uncouple a person's unsafe acts from any institutional responsibility is clearly in the interests of managers. It is also legally more convenient, at least in Britain.

Nevertheless, the person approach has serious shortcomings and is ill suited to the medical domain. Indeed, continued adherence to this approach is likely to thwart the development of safer healthcare institutions.

Although some unsafe acts in any sphere are egregious, the vast majority are not. In aviation maintenance, a hands-on activity similar to medical practice in many respects, some 90% of quality lapses were judged as blameless.2

Effective risk management depends crucially on establishing a reporting culture.3 Without a detailed analysis of mishaps, incidents, near misses, and free lessons, we have no way of uncovering recurrent error traps or of knowing where the edge is until we fall over it. The complete absence of such a reporting culture within the Soviet Union contributed crucially to the Chernobyl disaster.4 Trust is a key element of a reporting culture and this, in turn, requires the existence of a just culture, one possessing a collective understanding of where the line should be drawn between blameless and blameworthy actions.5 Engineering a just culture is an essential early step in creating a safe culture.

Another serious weakness of the person approach is that by focusing on the individual origins of error it isolates unsafe acts from their system context. As a result, two important features of human error tend to be overlooked. Firstly, it is often the best people who make the worst mistakes; error is not the monopoly of an unfortunate few. Secondly, far from being random, mishaps tend to fall into recurrent patterns. The same set of circumstances can provoke similar errors, regardless of the people involved. The pursuit of greater safety is seriously impeded by an approach that does not seek out and remove the error provoking properties within the system at large.

The Swiss cheese model of system accidents

Defences, barriers, and safeguards occupy a key position in the system approach. High technology systems have many defensive layers: some are engineered (alarms, physical barriers, automatic shutdowns, etc), others rely on people (surgeons, anaesthetists, pilots, control room operators, etc), and yet others depend on procedures and administrative controls. Their function is to protect potential victims and assets from local hazards. Mostly they do this very effectively, but there are always weaknesses.

In an ideal world each defensive layer would be intact. In reality, however, they are more like slices of Swiss cheese, having many holes, though unlike in the cheese, these holes are continually opening, shutting, and shifting their location. The presence of holes in any one slice does not normally cause a bad outcome. Usually, this can happen only when the holes in many layers momentarily line up to permit a trajectory of accident opportunity, bringing hazards into damaging contact with victims (figure).

Figure: The Swiss cheese model of how defences, barriers, and safeguards may be penetrated by an accident trajectory (labels: Hazards, Losses)

The holes in the defences arise for two reasons: active failures and latent conditions. Nearly all adverse events involve a combination of these two sets of factors.

Active failures are the unsafe acts committed by people who are in direct contact with the patient or system. They take a variety of forms: slips, lapses, fumbles, mistakes, and procedural violations.6 Active failures have a direct and usually shortlived impact on the integrity of the defences. At Chernobyl, for example, the operators wrongly violated plant procedures and switched off successive safety systems, thus creating the immediate trigger for the catastrophic explosion in the core. Followers of the person approach often look no further for the causes of an adverse event once they have identified these proximal unsafe acts. But, as discussed below, virtually all such acts have a causal history that extends back in time and up through the levels of the system.

Latent conditions are the inevitable resident pathogens within the system. They arise from decisions made by designers, builders, procedure writers, and top level management. Such decisions may be mistaken, but they need not be. All such strategic decisions have the potential for introducing pathogens into the system. Latent conditions have two kinds of adverse effect: they can translate into error provoking conditions within the local workplace (for example, time pressure, understaffing, inadequate equipment, fatigue, and inexperience) and they can create longlasting holes or weaknesses in the defences (untrustworthy alarms and indicators, unworkable procedures, design and construction deficiencies, etc). Latent conditions, as the term suggests, may lie dormant within the system for many years before they combine with active failures and local triggers to create an accident opportunity. Unlike active failures, whose specific forms are often hard to foresee, latent conditions can be identified and remedied before an adverse event occurs. Understanding this leads to proactive rather than reactive risk management.

BMJ VOLUME 320 18 MARCH 2000 www.bmj.com


To use another analogy: active failures are like mosquitoes. They can be swatted one by one, but they still keep coming. The best remedies are to create more effective defences and to drain the swamps in which they breed. The swamps, in this case, are the ever present latent conditions.
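The layered-defence argument of the Swiss cheese model can be made concrete with a little arithmetic. The sketch below is an illustration only, not part of the original article: it assumes that each defensive layer independently has some probability of presenting a hole at the moment a hazard arrives, so that the chance of a complete accident trajectory is the product of those probabilities. The function name `breach_probability` and all numbers are hypothetical, and the independence assumption is a simplification, since the article stresses that holes open, shut, and shift over time and that latent conditions can weaken several defences.

```python
from math import prod


def breach_probability(hole_probs):
    """Chance that a hazard passes every layer, ASSUMING independent layers.

    hole_probs[i] is a hypothetical probability that layer i has a hole
    in the hazard's path at the moment the hazard arrives.
    """
    for p in hole_probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError("each probability must lie in [0, 1]")
    return prod(hole_probs)


# Illustrative numbers only; none of them come from the article.
baseline = [0.1, 0.1, 0.1]       # three defensive layers
with_latent = [0.1, 0.5, 0.1]    # a latent condition weakens the second layer
extra_layer = baseline + [0.1]   # one more independent defence is added

for label, layers in [
    ("baseline", baseline),
    ("latent condition", with_latent),
    ("extra layer", extra_layer),
]:
    print(f"{label}: {breach_probability(layers):.4f}")
```

Under these assumptions, a single weakened layer raises the chance of a breach fivefold, while an added layer cuts it tenfold, which is one way to read the advice to create more effective defences and drain the swamps rather than swat individual mosquitoes.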

Error management

Over the past decade researchers into human factors have been increasingly concerned with developing the tools for managing unsafe acts. Error management has two components: limiting the incidence of dangerous errors and, since this will never be wholly effective, creating systems that are better able to tolerate the occurrence of errors and contain their damaging effects. Whereas followers of the person approach direct most of their management resources at trying to make individuals less fallible or wayward, adherents of the system approach strive for a comprehensive management programme aimed at several different targets: the person, the team, the task, the workplace, and the institution as a whole.3

High reliability organisations, systems operating in hazardous conditions that have fewer than their fair share of adverse events, offer important models for what constitutes a resilient system. Such a system has intrinsic "safety health"; it is able to withstand its operational dangers and yet still achieve its objectives.

Some paradoxes of high reliability

Just as medicine understands more about disease than health, so the safety sciences know more about what causes adverse events than about how they can best be avoided. Over the past 15 years or so, a group of social scientists based mainly at Berkeley and the University of Michigan has sought to redress this imbalance by studying safety successes in organisations rather than their infrequent but more conspicuous failures.7 8 These success stories involved nuclear aircraft carriers, air traffic control systems, and nuclear power plants (box). Although such high reliability organisations may seem remote from clinical practice, some of their defining cultural characteristics could be imported into the medical domain.

Most managers of traditional systems attribute human unreliability to unwanted variability and strive to eliminate it as far as possible. In high reliability organisations, on the other hand, it is recognised that human variability in the shape of compensations and adaptations to changing events represents one of the system's most important safeguards. Reliability is a dynamic non-event.7 It is dynamic because safety is preserved by timely human adjustments; it is a non-event because successful outcomes rarely call attention to themselves.

High reliability organisations can reconfigure themselves to suit local circumstances. In their routine mode, they are controlled in the conventional hierarchical manner. But in high tempo or emergency situations, control shifts to the experts on the spot, as it often does in the medical domain. The organisation reverts seamlessly to the routine control mode once the crisis has passed. Paradoxically, this flexibility arises in part from a military tradition: even civilian high reliability organisations have a large proportion of ex-military staff. Military organisations tend to define their goals in an unambiguous way and, for these bursts of semiautonomous activity to be successful, it is essential that all the participants clearly understand and share these aspirations. Although high reliability organisations expect and encourage variability of human action, they also work very hard to maintain a consistent mindset of intelligent wariness.8

Perhaps the most important distinguishing feature of high reliability organisations is their collective preoccupation with the possibility of failure. They expect to make errors and train their workforce to recognise and recover them. They continually rehearse familiar scenarios of failure and strive hard to imagine novel ones. Instead of isolating failures, they generalise them. Instead of making local repairs, they look for system reforms.

Box: High reliability organisations

So far, three types of high reliability organisations have been investigated: US Navy nuclear aircraft carriers, nuclear power plants, and air traffic control centres. The challenges facing these organisations are twofold:

- Managing complex, demanding technologies so as to avoid major failures that could cripple or even destroy the organisation concerned
- Maintaining the capacity for meeting periods of very high peak demand, whenever these occur.

The organisations studied7 8 had these defining characteristics:

- They were complex, internally dynamic, and, intermittently, intensely interactive
- They performed exacting tasks under considerable time pressure
- They had carried out these demanding activities with low incident rates and an almost complete absence of catastrophic failures over several years.

Although, on the face of it, these organisations are far removed from the medical domain, they share important characteristics with healthcare institutions. The lessons to be learnt from these organisations are clearly relevant for those who manage and operate healthcare institutions.

Conclusions

High reliability organisations are the prime examples of the system approach. They anticipate the worst and equip themselves to deal with it at all levels of the organisation. It is hard, even unnatural, for individuals to remain chronically uneasy, so their organisational culture takes on a profound significance. Individuals may forget to be afraid, but the culture of a high reliability organisation provides them with both the reminders and the tools to help them remember. For these organisations, the pursuit of safety is not so much about preventing isolated failures, either human or technical, as about making the system as robust as is practicable in the face of its human and operational hazards. High reliability organisations are not immune to adverse events, but they have learnt the knack of converting these occasional setbacks into enhanced resilience of the system.

Competing interests: None declared.

1 Lerner MJ. The desire for justice and reactions to victims. In: McCauley J, Berkowitz L, eds. Altruism and helping behavior. New York: Academic Press, 1970.
2 Marx D. Discipline: the role of rule violations. Ground Effects 1997;2:1-4.
3 Reason J. Managing the risks of organizational accidents. Aldershot: Ashgate, 1997.
4 Medvedev G. The truth about Chernobyl. New York: Basic Books, 1991.
5 Marx D. Maintenance error causation. Washington, DC: Federal Aviation Authority Office of Aviation Medicine, 1999.
6 Reason J. Human error. New York: Cambridge University Press, 1990.
7 Weick KE. Organizational culture as a source of high reliability. Calif Management Rev 1987;29:112-27.
8 Weick KE, Sutcliffe KM, Obstfeld D. Organizing for high reliability: processes of collective mindfulness. Res Organizational Behav 1999;21:23-81.


You might also like

- Guidelines For - Managing Abnormal SituationsDocument266 pagesGuidelines For - Managing Abnormal Situationsrahman setiaNo ratings yet

- Reason Paper Human ErrorDocument4 pagesReason Paper Human ErrorKrisNo ratings yet

- Reason, J (2000) - Human Error - Model and ManagementDocument3 pagesReason, J (2000) - Human Error - Model and ManagementBob SmithNo ratings yet

- People or Systems To Blame Is Human. The Fix Is To Engineer - Richard J. Holden, Ph.D.Document17 pagesPeople or Systems To Blame Is Human. The Fix Is To Engineer - Richard J. Holden, Ph.D.GiovanyNo ratings yet

- People or Systems To Blame Is Human TheDocument8 pagesPeople or Systems To Blame Is Human Theingdimitriospino_110No ratings yet

- Graling, Sanchez - 2017 - Learning and Mindfulness Improving Perioperative Patient SafetyDocument5 pagesGraling, Sanchez - 2017 - Learning and Mindfulness Improving Perioperative Patient SafetyDave SainsburyNo ratings yet

- Human Errors Models and Management (James Reason)Document10 pagesHuman Errors Models and Management (James Reason)Grazielle Samara PereiraNo ratings yet

- Educating Physicians Prepared To Improve Care and Safety Is No Accident: It Requires A Systematic ApproachDocument6 pagesEducating Physicians Prepared To Improve Care and Safety Is No Accident: It Requires A Systematic Approachujangketul62No ratings yet

- Human Performance Tools ArticleDocument11 pagesHuman Performance Tools ArticlePriyo ArifNo ratings yet

- Human Error in Accidents-Ug-1Document10 pagesHuman Error in Accidents-Ug-1Aman GautamNo ratings yet

- Safe Programming Booket DigitalDocument9 pagesSafe Programming Booket DigitalAbdirahman IsmailNo ratings yet

- Residual Risk ReductionDocument9 pagesResidual Risk ReductionGiftObionochieNo ratings yet

- 10 1001@jama 2019 9788Document2 pages10 1001@jama 2019 9788ale2204No ratings yet

- MTC College research on situational awareness and human errorDocument6 pagesMTC College research on situational awareness and human errorDmuel Eller Adrian LabanesNo ratings yet

- A-Trauma-Informed-Approach-to-Work 2024Document26 pagesA-Trauma-Informed-Approach-to-Work 2024hcintiland sbyNo ratings yet

- Medication SafetyDocument3 pagesMedication SafetyJan Aerielle AzulNo ratings yet

- Understanding the Root Causes of Workplace AccidentsDocument3 pagesUnderstanding the Root Causes of Workplace AccidentsAsri KarimaNo ratings yet

- VanDyck Et Al JAPDocument13 pagesVanDyck Et Al JAPSteven RandallNo ratings yet

- (Behavioral Economics) Michael Choi - The Possibility Analysis of Eliminating Errors in Decision Making ProcessesDocument26 pages(Behavioral Economics) Michael Choi - The Possibility Analysis of Eliminating Errors in Decision Making ProcessespiturdabNo ratings yet

- Patient Safety and Quality An Evidence Based Handbook For NursesDocument41 pagesPatient Safety and Quality An Evidence Based Handbook For NursesPedro PlataNo ratings yet

- Who MC Topic-2Document8 pagesWho MC Topic-2Muhammad Rizal AlifandiNo ratings yet

- F3Ferg 0713Document9 pagesF3Ferg 0713Reynold HutapeaNo ratings yet

- Hospicon Write Up-2Document80 pagesHospicon Write Up-2jyoti a salunkheNo ratings yet

- Disaster Preparedness of The Residents of Tabuk City: An AssessmentDocument6 pagesDisaster Preparedness of The Residents of Tabuk City: An AssessmentTipsy MonkeyNo ratings yet

- Theories, Strategies, and Next Steps: Risk PerceptionDocument12 pagesTheories, Strategies, and Next Steps: Risk PerceptionmjbotelhoNo ratings yet

- 2022 Disaster Psychology - Dispelling The Myths of Panic 8pDocument8 pages2022 Disaster Psychology - Dispelling The Myths of Panic 8panaNo ratings yet

- COGNITIVE BIASES - A Brief Overview of Over 160 Cognitive Biases: + Bonus Chapter: Algorithmic BiasFrom EverandCOGNITIVE BIASES - A Brief Overview of Over 160 Cognitive Biases: + Bonus Chapter: Algorithmic BiasNo ratings yet

- Understanding Emotional Abuse: CareesDocument10 pagesUnderstanding Emotional Abuse: CareesRavennaNo ratings yet

- McGaffigan AvoidingDriftIntoHarm HCExec Sep2022Document3 pagesMcGaffigan AvoidingDriftIntoHarm HCExec Sep2022Arun M SNo ratings yet

- Whitman SyndromeDocument6 pagesWhitman SyndromebluebermudaNo ratings yet

- Tu 2003Document7 pagesTu 2003Stephany BoninNo ratings yet

- Residual Risk ReductionDocument9 pagesResidual Risk ReductionfrankoufaxNo ratings yet

- Psicologia Do RiscoDocument17 pagesPsicologia Do RiscoTamires NunesNo ratings yet

- A Framework For Understanding Sources of Harm Throughout The Machine Learning Life CycleDocument9 pagesA Framework For Understanding Sources of Harm Throughout The Machine Learning Life CycleRohit Baghel RajputNo ratings yet

- For Friday Article Review TwoDocument23 pagesFor Friday Article Review TwoDani Wondime DersNo ratings yet

- Theories of Accident Causation ModelsDocument7 pagesTheories of Accident Causation ModelsWiZofFaTeNo ratings yet

- Original Article Burn InjuriesDocument11 pagesOriginal Article Burn InjuriesmfaradibahNo ratings yet

- Safety First (Chapter15)Document19 pagesSafety First (Chapter15)Hk Lorilla QuongNo ratings yet

- Patient Safety Level 1: Study GuideDocument11 pagesPatient Safety Level 1: Study GuideAnnabelle KingfulNo ratings yet

- Swans, Swine, and Swindlers: Coping with the Growing Threat of Mega-Crises and Mega-MessesFrom EverandSwans, Swine, and Swindlers: Coping with the Growing Threat of Mega-Crises and Mega-MessesNo ratings yet

- Comprehensive Healthcare Simulation: AnesthesiologyFrom EverandComprehensive Healthcare Simulation: AnesthesiologyBryan MahoneyNo ratings yet

- Crim 2 Module BuqueDocument99 pagesCrim 2 Module Buquecps7tm8yqtNo ratings yet

- 1.5 The Meaning of Human Factors: MaintenanceDocument66 pages1.5 The Meaning of Human Factors: MaintenancedsenpenNo ratings yet

- Frameworks For Understanding Error: Sidebar ADocument1 pageFrameworks For Understanding Error: Sidebar ADonatoNo ratings yet

- Falls and Cognition in Older Persons: Fundamentals, Assessment and Therapeutic OptionsFrom EverandFalls and Cognition in Older Persons: Fundamentals, Assessment and Therapeutic OptionsManuel Montero-OdassoNo ratings yet

- ACCIDENT PREVENTIONDocument23 pagesACCIDENT PREVENTIONdilantha chandikaNo ratings yet

- Accident Investigation Human FactorsDocument34 pagesAccident Investigation Human Factorsgamingengineer2000No ratings yet

- Chapter 5. Adverse EventDocument19 pagesChapter 5. Adverse EventÈkä Sêtýä PrätämäNo ratings yet

- INPO's Strategic Approach to Reducing Human Error and Strengthening DefensesDocument6 pagesINPO's Strategic Approach to Reducing Human Error and Strengthening DefensesВладимир МашинNo ratings yet

- F3 - 0517 NewDocument8 pagesF3 - 0517 NewmahendranNo ratings yet

- Understanding How Human Factors Impact Patient SafetyDocument20 pagesUnderstanding How Human Factors Impact Patient Safetymauliana mardhira fauzaNo ratings yet

- What Readers Are Saying: Don't Overlook Total System RiskDocument2 pagesWhat Readers Are Saying: Don't Overlook Total System Riskpavlov2No ratings yet

- It Is Time To Talk About People: A Human-Centered Healthcare SystemDocument7 pagesIt Is Time To Talk About People: A Human-Centered Healthcare SystemEskadmas BelayNo ratings yet

- Responses, Human Behavior and Role of Leadership in DisastersDocument16 pagesResponses, Human Behavior and Role of Leadership in Disasterstony saraoNo ratings yet

- The Human Factor in Cybersecurity PDFDocument1 pageThe Human Factor in Cybersecurity PDFAleksandre MirianashviliNo ratings yet

- Workplace Bullying InvestigationsDocument11 pagesWorkplace Bullying InvestigationsGötz-Kocsis ZsuzsannaNo ratings yet

- Human Factors and Medication Errors A Case StudyDocument6 pagesHuman Factors and Medication Errors A Case StudyDevNo ratings yet

- Occupational Safety and Accident Prevention: Behavioral Strategies and MethodsFrom EverandOccupational Safety and Accident Prevention: Behavioral Strategies and MethodsNo ratings yet

- Wachinger-Et-Al-Rsik Paradox-Risk AnalysisDocument18 pagesWachinger-Et-Al-Rsik Paradox-Risk AnalysisGuillermoNo ratings yet

- Paediatric Safety Huddles Script TemplateDocument1 pagePaediatric Safety Huddles Script TemplateDanielaGarciaNo ratings yet

- Root Cause Analysis and Corrective Action Plan Template: Process Issues Check All That ApplyDocument17 pagesRoot Cause Analysis and Corrective Action Plan Template: Process Issues Check All That ApplyDanielaGarciaNo ratings yet

- Module 06 - TechnologyDocument37 pagesModule 06 - TechnologyDanielaGarciaNo ratings yet

- Effective 1 of March 2021Document51 pagesEffective 1 of March 2021DanielaGarcia100% (1)

- OncologySelfAssessmentLaunch Release 4 2 12Document2 pagesOncologySelfAssessmentLaunch Release 4 2 12DanielaGarciaNo ratings yet

- Jenis Pelatihan CPD PDFDocument52 pagesJenis Pelatihan CPD PDFHardaniNo ratings yet

- Post Event Safety Huddles Info For Clinicians 1 FINAL DEC 2017Document1 pagePost Event Safety Huddles Info For Clinicians 1 FINAL DEC 2017patientsafetyNo ratings yet

- Safety Huddles Info For Clinicians 1 FINAL DEC 2017Document2 pagesSafety Huddles Info For Clinicians 1 FINAL DEC 2017patientsafetyNo ratings yet

- High Alert Medications Part 1Document26 pagesHigh Alert Medications Part 1DanielaGarciaNo ratings yet

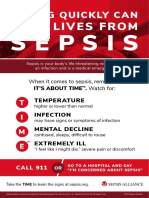

- Poster What Is Sepsis 8.5x11 1Document1 pagePoster What Is Sepsis 8.5x11 1DanielaGarciaNo ratings yet

- Medication Management StudyDocument4 pagesMedication Management StudyDanielaGarciaNo ratings yet

- Global Patient Safety Action Plan 2021-2030 Towards Zero Patient HarmDocument84 pagesGlobal Patient Safety Action Plan 2021-2030 Towards Zero Patient HarmDanielaGarciaNo ratings yet

- A New, Evidence Based Estimate of Patient HarmsDocument7 pagesA New, Evidence Based Estimate of Patient Harmscharwill1234No ratings yet

- How-To Guide: Prevent Ventilator-Associated Pneumonia: Updated February 2012Document45 pagesHow-To Guide: Prevent Ventilator-Associated Pneumonia: Updated February 2012DanielaGarciaNo ratings yet

- How-To Guide: Prevent Central Line-Associated Bloodstream Infections (CLABSI)Document40 pagesHow-To Guide: Prevent Central Line-Associated Bloodstream Infections (CLABSI)DanielaGarciaNo ratings yet

- Using Care Bundles To Improve Health Care Quality: Innovation Series 2012Document18 pagesUsing Care Bundles To Improve Health Care Quality: Innovation Series 2012DanielaGarciaNo ratings yet

- (2015) Extemporaneous Formulation 2015Document80 pages(2015) Extemporaneous Formulation 2015DanielaGarciaNo ratings yet

- TeamSTEPPS Canada Pocket GuideDocument35 pagesTeamSTEPPS Canada Pocket GuideDanielaGarciaNo ratings yet

- Webinar 10 12 17 PDFDocument73 pagesWebinar 10 12 17 PDFDanielaGarciaNo ratings yet

- Sepsis FactSheet v4Document2 pagesSepsis FactSheet v4DanielaGarciaNo ratings yet

- Sepsis and Antibiotic Resistance: What Antibiotics DoDocument2 pagesSepsis and Antibiotic Resistance: What Antibiotics DoDanielaGarciaNo ratings yet

- Poster Acting Quickly v02Document1 pagePoster Acting Quickly v02DanielaGarciaNo ratings yet

- Look-Alike, Sound-Alike Medication NamesDocument4 pagesLook-Alike, Sound-Alike Medication NamesBenjel AndayaNo ratings yet

- Its About TIME 2020 Digital Social Media ImageDocument1 pageIts About TIME 2020 Digital Social Media ImageDanielaGarciaNo ratings yet

- (2015) Extemporaneous Formulation 2015Document80 pages(2015) Extemporaneous Formulation 2015DanielaGarciaNo ratings yet

- Sitrep 21 NcovDocument6 pagesSitrep 21 NcovDanielaGarciaNo ratings yet

- 6-Eliminating Facility-Acquired Pressure Ulcers PDFDocument11 pages6-Eliminating Facility-Acquired Pressure Ulcers PDFDanielaGarciaNo ratings yet

- How-To Guide: Prevent Pressure UlcersDocument19 pagesHow-To Guide: Prevent Pressure UlcersDanielaGarcia100% (1)

- Brad en ScaleDocument2 pagesBrad en Scaleantoniusa_1No ratings yet

- Viofor JPS System Clinic / Delux - User Manual (En)Document52 pagesViofor JPS System Clinic / Delux - User Manual (En)GaleriaTechniki.PLNo ratings yet

- Pebc Evaluating Exam Sample QuestionDocument39 pagesPebc Evaluating Exam Sample Questionmahyar_ro79% (14)

- Midwifery Pharmacology-9Document1 pageMidwifery Pharmacology-9georgeloto12No ratings yet

- Holding Health Care AccountableDocument37 pagesHolding Health Care AccountableGulalayNo ratings yet

- Homeopathy Introduction GuideDocument4 pagesHomeopathy Introduction GuideIrina DomentiNo ratings yet

- Group 2B: A Pulmonary Histoplasmosis CaseDocument58 pagesGroup 2B: A Pulmonary Histoplasmosis CaseAngela NeriNo ratings yet

- What Is HIV Antiretroviral Drug TreatmentDocument24 pagesWhat Is HIV Antiretroviral Drug TreatmentDhrishya PadmakumarNo ratings yet

- Vaccinations Argumentative Essay - Lyla AlmoninaDocument3 pagesVaccinations Argumentative Essay - Lyla Almoninaapi-4441503820% (1)

- Exogen 4000+ Instructions For UseDocument16 pagesExogen 4000+ Instructions For UseRoss JacobsNo ratings yet

- SimuDocument13 pagesSimuPrince Rener Velasco PeraNo ratings yet

- Mock Questions 2Document40 pagesMock Questions 2Kamlesh KishorNo ratings yet

- Perception On Covid-19 Vaccinations Among Tribal Communities in East Khasi Hills in MeghalayaDocument5 pagesPerception On Covid-19 Vaccinations Among Tribal Communities in East Khasi Hills in MeghalayaInt J of Med Sci and Nurs Res100% (1)

- Analytics in Pharma and Life SciencesDocument13 pagesAnalytics in Pharma and Life SciencesPratik BhagatNo ratings yet

- Institute For Safe Medication Practices 2011 ReportDocument25 pagesInstitute For Safe Medication Practices 2011 ReportLaw Med BlogNo ratings yet

- Formulary Book - Hajer (1) Final To Print1 MOH HospitalDocument115 pagesFormulary Book - Hajer (1) Final To Print1 MOH HospitalspiderNo ratings yet

- Oet SpeakingDocument5 pagesOet SpeakingJuvy Anne VelitarioNo ratings yet

- Steroid PaperDocument16 pagesSteroid PaperkhyatisethiaNo ratings yet

- Zoladex FDADocument34 pagesZoladex FDAdianaristinugraheni100% (1)

- College of Health Sciences: Urdaneta City UniversityDocument3 pagesCollege of Health Sciences: Urdaneta City UniversityJorgie CastroNo ratings yet

- Bip03051f UgDocument2 pagesBip03051f UgdellalalaNo ratings yet

- Pharmacology Midterms (Practice Questions)Document7 pagesPharmacology Midterms (Practice Questions)JhayneNo ratings yet

- Seatone InfoDocument7 pagesSeatone InfoJugal ShahNo ratings yet

- Appendix 3 Guideline Clinical Evaluation Anticancer Medicinal Products Summary Product - en 0Document4 pagesAppendix 3 Guideline Clinical Evaluation Anticancer Medicinal Products Summary Product - en 0Rob VermeulenNo ratings yet

- Music Therapy As A Post-OperatDocument33 pagesMusic Therapy As A Post-OperatLia octavianyNo ratings yet

- Patient-Safe Communication StrategiesDocument7 pagesPatient-Safe Communication StrategiesSev KuzmenkoNo ratings yet

- Mikulec Faraji Kinsella. Evaluation of The Efficacy of Caffeine Cessation, Nortriptyline, and Topiramate Therapy in Vestibular Migraine and Complex Dizziness of Unknown Etiology .Document7 pagesMikulec Faraji Kinsella. Evaluation of The Efficacy of Caffeine Cessation, Nortriptyline, and Topiramate Therapy in Vestibular Migraine and Complex Dizziness of Unknown Etiology .HelderSouzaNo ratings yet

- Vec TrineDocument3 pagesVec TrinerwdNo ratings yet

- Drug StudyDocument12 pagesDrug StudyJessie Cauilan CainNo ratings yet

- Child - ImmunizationsDocument1 pageChild - ImmunizationsJOHN100% (1)

- 157 - Clinical Trials Adverse Events ChaDocument6 pages157 - Clinical Trials Adverse Events ChaWoo Rin ParkNo ratings yet