
2010 International Conference On Computer Design And Applications (ICCDA 2010)

Study of Data Mining Algorithm Based on Decision Tree

Linna Li
Changchun Institute of Technology
Changchun, China
lilinna586@yeah.net

Xuemin Zhang
Changchun Institute of Technology
Changchun, China
zhangxuemin1203@163.com

Abstract-The decision tree algorithm is a data mining method that performs inductive learning from examples. It makes classification rules easy to extract and display, requires relatively little computation, reveals the important decision attributes, and achieves high classification accuracy. For data mining algorithms based on decision trees, this paper proposes specific solutions to three problems: missing attribute values, multi-valued attribute selection, and attribute selection criteria. It introduces weighted and simplified entropy into the decision tree algorithm in order to improve the ID3 algorithm. The experimental results show that the improved algorithm outperforms the currently widely used ID3 algorithm in overall performance.

Keywords-Data mining, Decision Tree, ID3, Weighted Simplification Entropy

I. INTRODUCTION

Data mining is the process of extracting hidden but potentially valuable information from databases. It is a relatively new data analysis technology and has been widely applied in finance, insurance, government, transportation, and national defense. Data classification is an important part of data mining, and many classification methods exist. Common classification models include decision trees, neural networks, genetic algorithms, rough sets, and statistical models [1-5]. The decision tree algorithm is an inductive learning algorithm based on examples. It is widely used for four reasons: its rules are easy to extract and display, its computational cost is relatively small, it can reveal the important decision attributes, and its classification accuracy is high [6]. According to statistics, the decision tree algorithm is currently one of the most widely used data mining algorithms. In practical applications, however, existing decision tree algorithms still have many deficiencies, such as low computational efficiency and a bias toward multi-valued attributes. It is therefore of both theoretical and practical significance to improve the decision tree algorithm further, enhance its performance, and make it meet the application requirements of data mining. Focusing on these deficiencies, this paper studies the optimization of decision tree classification algorithms for data mining. The aim is to improve classification accuracy and support practical applications.

II. PROBLEMS OF THE DECISION TREE DATA MINING ALGORITHM

The main procedure of the ID3 algorithm [7-10] is very simple. First, select a window at random from the training set. Build a decision tree for the current window (a subset of samples containing both positive and negative examples) with the tree-building algorithm. Second, use the resulting tree to classify the samples in the training set outside the window in order to find misclassified examples. If there are misclassified examples, add them to the window and repeat the tree-building process; otherwise, stop. The ID3 algorithm has the following problems:
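The window procedure described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation. The tree builder is a bare-bones ID3 that splits on the attribute with the smallest expected entropy. The names `build_id3`, `classify` and `id3_windowing`, the dict-per-row data layout, the window size, and the random seed are all hypothetical.

```python
import math
import random
from collections import Counter


def entropy(labels):
    """Shannon entropy (bits) of a list of class labels."""
    n = len(labels)
    return -sum(c / n * math.log2(c / n) for c in Counter(labels).values())


def majority(labels):
    return Counter(labels).most_common(1)[0][0]


def build_id3(rows, labels, attrs):
    """Hypothetical minimal ID3; each row is a dict attribute -> value."""
    if len(set(labels)) == 1 or not attrs:
        return majority(labels)  # leaf: a class label

    def expected_entropy(a):
        total = 0.0
        for v in set(r[a] for r in rows):
            sub = [lab for r, lab in zip(rows, labels) if r[a] == v]
            total += len(sub) / len(rows) * entropy(sub)
        return total

    best = min(attrs, key=expected_entropy)  # smallest E(A) = largest gain
    rest = [a for a in attrs if a != best]
    branches = {}
    for v in set(r[best] for r in rows):
        idx = [k for k, r in enumerate(rows) if r[best] == v]
        branches[v] = build_id3([rows[k] for k in idx],
                                [labels[k] for k in idx], rest)
    # Internal node: (attribute, branches, fallback class for unseen values).
    return (best, branches, majority(labels))


def classify(tree, row):
    while isinstance(tree, tuple):
        attr, branches, fallback = tree
        if row[attr] not in branches:
            return fallback
        tree = branches[row[attr]]
    return tree


def id3_windowing(rows, labels, attrs, window_size, seed=0):
    """Grow a random window until the tree misjudges nothing outside it."""
    rng = random.Random(seed)
    window = set(rng.sample(range(len(rows)), window_size))
    while True:
        order = sorted(window)
        tree = build_id3([rows[k] for k in order],
                         [labels[k] for k in order], attrs)
        misjudged = [k for k in range(len(rows))
                     if k not in window and classify(tree, rows[k]) != labels[k]]
        if not misjudged:
            return tree  # no misjudged examples outside the window: stop
        window.update(misjudged)  # insert them into the window and rebuild


rows = [{"a": x, "b": y} for x in (0, 1) for y in (0, 1)] * 3
labels = [int(r["a"] == 1 and r["b"] == 1) for r in rows]
tree = id3_windowing(rows, labels, ["a", "b"], window_size=2, seed=1)
```

Each round adds at least one misjudged example to the window, so the loop stops after at most as many rounds as there are training examples.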

When searching the space of decision trees, ID3 maintains only a single current hypothesis. It therefore loses the advantages of representing all consistent hypotheses. For example, it cannot determine how many other decision trees are consistent with the current training data. Nor can it pose new example queries that optimally resolve among these competing hypotheses.

ID3 does not backtrack during its search. Once it chooses an attribute to test at some level of the tree, it never backtracks to reconsider that choice. As a result, the algorithm can easily converge to a locally optimal solution rather than the globally optimal one.

The information-gain (mutual information) criterion used by ID3 is biased toward attributes with more values. However, such an attribute is not necessarily the one that classifies best.
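The bias can be seen on a toy example. The sketch below computes gain(A) = I(P,N) - E(A) for two invented attribute columns. The first is informative but imperfect. The second is a per-row identifier that carries no predictive value. The column names and data are hypothetical.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (bits) of a list of class labels."""
    n = len(labels)
    return -sum(c / n * math.log2(c / n) for c in Counter(labels).values())


def info_gain(values, labels):
    """gain(A) = I(P, N) - E(A) for one attribute column `values`."""
    groups = {}
    for v, lab in zip(values, labels):
        groups.setdefault(v, []).append(lab)
    n = len(labels)
    expected = sum(len(g) / n * entropy(g) for g in groups.values())
    return entropy(labels) - expected


labels = ["P", "P", "N", "N"]
weather = ["sun", "sun", "sun", "rain"]  # informative but imperfect
record_id = [1, 2, 3, 4]                 # unique per row, useless for prediction
print(info_gain(weather, labels))    # about 0.311
print(info_gain(record_id, labels))  # 1.0, the largest gain possible here
```

Because every identifier value isolates a single, pure example, E(A) drops to zero and the identifier wins, even though it cannot classify any new example.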

ID3 is a greedy algorithm. It cannot accept training samples incrementally. Each time new examples arrive, the original decision tree must be discarded and a new one built, which causes considerable overhead. ID3 is therefore unsuitable for incremental learning.

ID3 is sensitive to noise. Quinlan defines noise as incorrect attribute values and incorrect class labels in the training data.

ID3 concentrates on attribute selection, but some scholars have questioned this approach. Whether attribute selection strongly affects the accuracy of a decision tree remains an open question.

In general, ID3 is well suited to large-scale learning problems because of its clear theory, simple method, and strong learning ability. It is a classic example in data mining and machine learning, as well as a useful tool for acquiring knowledge.

III. OPTIMIZATION SCHEME FOR THE DECISION TREE DATA MINING ALGORITHM

A data set often lacks some attribute values for some samples, and such incompleteness is very common in practice. A value may be missing for three reasons: the attribute is irrelevant to a particular sample, the value was not recorded when the sample was collected, or a manual error occurred during data entry [11]. There are two ways to deal with missing values: (1) discard the samples with missing data; (2) define a new algorithm, or improve an existing one, to handle the missing data. The first solution is very simple but cannot be used when the sample set contains many missing values. The second solution requires answering several questions. How should two samples with different numbers of unknown attribute values be compared? A training sample whose value for the tested attribute is unknown cannot be assigned to any subset, so how should it be treated? During classification, how should a missing value be handled when the attribute being tested is the one that is missing? These and other problems arise when trying to handle missing data. Mainstream classification algorithms usually handle missing values in one of two ways. They fill in the most probable value, or they consider the probability distribution over all values of the attribute, which is difficult to carry out. Neither approach is entirely satisfactory.

In the ID3 algorithm, a sample with an unknown value is distributed among the branches in proportion to the relative frequencies of the known values, a widely used rule [12]. ID3 thus fills in missing values from the probability distribution: it assigns a probability to each possible value of the unknown attribute instead of simply assigning the most common value. These probabilities can be estimated from the frequencies of the different values at node n. For example, consider a Boolean attribute A. Suppose node n contains seven known examples with A=1 and three with A=0. Then the probability of A=1 is 0.7 and the probability of A=0 is 0.3. So 70% of an example X whose value of A is missing is sent to the branch A=1, and 30% to the branch A=0. If a second attribute with missing values must be tested later, these sample fragments can be subdivided further in the subsequent branches. The same strategy can be used to classify new examples with missing attribute values. In this case, the new example is assigned the most probable class. To find it, the fragments that reach the leaves are weighted by the probabilities of the corresponding attribute values, and their class weights are summed.

978-1-4244-7164-5/$26.00 ©2010 IEEE V1-155 Volume 1
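The fractional-example rule can be sketched as follows. `split_fractions` reproduces the 70%/30% split from the example above. `classify_fragments` sums the leaf class distributions weighted by the fragment probabilities. Both helper names and the leaf distributions are hypothetical and serve only to illustrate the computation.

```python
from collections import Counter


def split_fractions(weight, known_values):
    """Split an example of the given weight across branches in proportion
    to the frequencies of the known values at the node."""
    counts = Counter(known_values)
    total = sum(counts.values())
    return {v: weight * c / total for v, c in counts.items()}


def classify_fragments(value_probs, leaf_class_probs):
    """Sum the class distributions of the leaves reached by each fragment,
    weighted by the fragment's probability; return the most probable class."""
    scores = Counter()
    for v, p in value_probs.items():
        for cls, q in leaf_class_probs[v].items():
            scores[cls] += p * q
    return max(scores, key=scores.get), dict(scores)


# Node n: seven known examples with A = 1 and three with A = 0.
fractions = split_fractions(1.0, [1] * 7 + [0] * 3)
print(fractions)  # {1: 0.7, 0: 0.3}

# Hypothetical class distributions at the leaves below the test on A.
leaves = {1: {"P": 0.9, "N": 0.1}, 0: {"P": 0.2, "N": 0.8}}
best, scores = classify_fragments(fractions, leaves)
print(best, scores)  # 'P' scores about 0.69, 'N' about 0.31
```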

Based on sample similarity, a better filling value can be selected by comparing a sample with the other complete samples in the training set. The missing attribute values in the training set are filled before the decision tree is constructed. The filling process is as follows:

Let A1, A2, ..., AN be the N attributes of the training set. Suppose a sample S is missing its value for attribute Ai, one of A1, A2, ..., AN. Set K = N-1.

1) Find the examples in the training set whose values agree with those of S on K attributes, and let them form the example collection T.

2) If T ≠ ∅, go to 4); otherwise, go to 3).

3) Set K = K-1. If K < N/2, delete example S from the training set; otherwise, go to 1).

4) Compute the proportion of each value of Ai in T, and fill in the value with the largest proportion.

Missing attribute values in the testing set are filled by the same method.
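Steps 1) to 4) can be sketched in Python as below. The sketch makes two assumptions. First, "agree on K attributes" is read as "agree on at least K of the other attributes". Second, each row is a dict over the same N attributes, with `None` marking a missing value. The function name and the sample rows are hypothetical.

```python
from collections import Counter


def fill_by_similarity(rows, s_index, attr):
    """Return the filling value for rows[s_index][attr], or None when
    sample S should be deleted from the training set (hypothetical sketch)."""
    s = rows[s_index]
    n = len(s)                           # N attributes, including the missing one
    others = [a for a in s if a != attr]
    k = n - 1                            # K = N - 1
    while True:
        # Step 1: examples that know attr and agree with S on >= K other attributes.
        t = [r for j, r in enumerate(rows)
             if j != s_index and r[attr] is not None
             and sum(s[a] is not None and r[a] == s[a] for a in others) >= k]
        # Step 2: T non-empty -> step 4, otherwise step 3.
        if t:
            # Step 4: fill with the value of attr that has the largest share in T.
            return Counter(r[attr] for r in t).most_common(1)[0][0]
        # Step 3: relax K; delete S (signalled by None) once K < N/2.
        k -= 1
        if k < n / 2:
            return None


rows = [
    {"income": "no", "condition": "healthy", "gender": "male"},
    {"income": "no", "condition": "healthy", "gender": "male"},
    {"income": "no", "condition": "healthy", "gender": "female"},
    {"income": "yes", "condition": "one disease", "gender": "female"},
    {"income": "no", "condition": "healthy", "gender": None},  # sample S
]
print(fill_by_similarity(rows, 4, "gender"))  # male

lonely = {"income": "yes", "condition": "three diseases", "gender": None}
print(fill_by_similarity(rows[:4] + [lonely], 4, "gender"))  # None
```

With N = 3, only K = 2 satisfies K >= N/2. The second sample, which matches no row on both known attributes, is therefore marked for deletion.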

IV. IMPROVED DECISION TREE DATA MINING ALGORITHM

The key to constructing a good decision tree is selecting good attributes [13]. Among the many decision trees that fit the given training examples, the smaller the tree, the stronger its predictive ability in general. Constructing the smallest decision tree therefore depends on selecting suitable attributes. Because constructing the smallest decision tree is an NP-complete problem, most research can only use heuristic strategies to select good attributes. However, a large body of practice [14,15] shows that conventional attribute selection criteria do not truly reflect an attribute's ability to distinguish the classes correctly, and that their computation is complex. A decision tree is built on the principles of information theory, mainly using the information content of the data classification. Each selection of a splitting node therefore requires the algorithm to compute many logarithms. For large amounts of data, this clearly slows the generation of the decision tree. Changing the attribute selection criterion can reduce the computational cost and save generation time. ID3 uses the information entropy of each attribute to determine the splitting attribute of the data set, and selection by information entropy often favors attributes with more values. Many methods have been proposed to address this problem, such as the gain ratio, the Gini index, and the G statistic. The principles of these improved algorithms and the basic formula of information gain show that the size of the information gain is determined by the information entropy. The information entropy reflects the degree of uncertainty that each attribute contributes with respect to the whole data set.

This paper introduces a weight K into the information entropy of each attribute. The weight balances the uncertainty that each attribute contributes to the data set, so that the entropy better conforms to the actual data distribution. The weight is the number of values of each attribute in the data set, and the information entropy is multiplied by it. The resulting entropy therefore also depends on the number of values of the attribute. Taking each attribute's number of values into account in this way is intended to overcome ID3's bias when selecting the test attribute.

A. Design of the Algorithm

This paper transforms the information gain formula to obtain a new attribute selection criterion. The new criterion overcomes ID3's tendency to prefer test attributes with many, unevenly distributed values. It also greatly reduces the time needed to generate the decision tree, lowers the computational cost of the algorithm, speeds up tree construction, and improves the efficiency of the decision tree classifier. During tree construction, gain(A) = I(P,N) - E(A), where P and N are the numbers of positive and negative examples at the current node. Because I(P,N) is the same for every candidate attribute at that node, the expected entropy E(A) of attribute A alone can serve as the criterion for comparing candidate attributes. The attribute with the smallest E(A) has the largest gain. Suppose A has v values that split the node's examples into subsets, the i-th containing p_i positive and n_i negative examples. Then

$$E(A)=\sum_{i=1}^{v}\frac{p_i+n_i}{P+N}\,I(p_i,n_i)$$

$$I(p_i,n_i)=-\frac{p_i}{p_i+n_i}\log_2\frac{p_i}{p_i+n_i}-\frac{n_i}{p_i+n_i}\log_2\frac{n_i}{p_i+n_i}$$

Substituting this formula into E(A) gives:

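$$E(A)=\sum_{i=1}^{v}\frac{p_i+n_i}{P+N}\left(-\frac{p_i}{p_i+n_i}\log_2\frac{p_i}{p_i+n_i}-\frac{n_i}{p_i+n_i}\log_2\frac{n_i}{p_i+n_i}\right)$$

$$=\frac{1}{(P+N)\ln 2}\sum_{i=1}^{v}\left(p_i\ln\frac{p_i+n_i}{p_i}+n_i\ln\frac{p_i+n_i}{n_i}\right)$$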

Because (P+N) ln 2 is a constant for a given training set, we can define a function S(A) as follows:

$$S(A)=\sum_{i=1}^{v}\frac{p_i n_i}{p_i+n_i}$$

S(A) contains only addition, multiplication, and division. It is therefore faster to compute than E(A), which contains many logarithmic terms. S(A) is used to compare the entropies of the candidate attributes. The attribute with the smallest S(A), which corresponds to the largest information gain, is selected as the splitting node. In this paper, S(A) is called the simplified information entropy. S(A) is obtained by applying the approximation ln(1+x) ≈ x to the logarithms in E(A) and dropping constant factors. It is therefore not exactly proportional to E(A), even before it is weighted by the number N of attribute values. As a result, it can select a different splitting attribute and thus affect the classification accuracy of the decision tree classifier. The experimental results show, however, that this effect on the classifier's overall classification performance is very small.
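The approximation step can be written out as follows. This is a sketch that assumes the first-order expansion ln(1+x) ≈ x is applied to each logarithm of the expanded E(A). It makes the link to S(A) explicit and is not quoted from the paper.

```latex
% First-order approximation ln(1+x) ~ x applied to each logarithm of E(A)
\ln\frac{p_i+n_i}{p_i} = -\ln\!\left(1-\frac{n_i}{p_i+n_i}\right) \approx \frac{n_i}{p_i+n_i},
\qquad
\ln\frac{p_i+n_i}{n_i} = -\ln\!\left(1-\frac{p_i}{p_i+n_i}\right) \approx \frac{p_i}{p_i+n_i}

E(A) \approx \frac{1}{(P+N)\ln 2}\sum_{i=1}^{v}\left(p_i\cdot\frac{n_i}{p_i+n_i}+n_i\cdot\frac{p_i}{p_i+n_i}\right)
     = \frac{2}{(P+N)\ln 2}\sum_{i=1}^{v}\frac{p_i n_i}{p_i+n_i}
     = \frac{2}{(P+N)\ln 2}\,S(A)
```

The factor 2/((P+N) ln 2) is positive and identical for every candidate attribute. Choosing the smallest S(A) is therefore the same as choosing the smallest value of this approximation of E(A). The expansion is accurate only when the fraction inside each logarithm is small. Because n_i/(p_i+n_i) and p_i/(p_i+n_i) sum to 1, they cannot both be small. S(A) can therefore rank two attributes differently from E(A) when their entropies are close. Note also that 2p_i n_i/(p_i+n_i)^2 is the Gini index of the i-th subset, so S(A) equals half the size-weighted sum of the subset Gini indices.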

B. Analysis of the Algorithm

The simplified computation extends easily to multiple classes. Suppose the training sample set E contains C classes, with p_i samples in class i, i = 1, 2, ..., C. If attribute A, with values v_1, v_2, ..., v_v, is used as the root of the decision tree, it divides E into v subsets E_1, E_2, ..., E_v. Let p_{ij} be the number of samples of class j contained in E_i, j = 1, 2, ..., C. Then the entropy of subset E_i is:

$$\mathrm{Entropy}(E_i)=-\sum_{j=1}^{C}\frac{p_{ij}}{|E_i|}\log_2\frac{p_{ij}}{|E_i|}$$

Because |E_i| ln 2 is a constant for each subset, the logarithms can be removed with the same approximation. Substituting the number N of attribute values as the weight then gives a simplified Entropy(E|A). It contains only addition, multiplication, and division over the counts p_{ij}. This polynomial has the same form as S(A) in the two-class case, and it also covers the more common situation with more than two classes. Under the same conditions, computing the simplified entropy is therefore much faster than computing E(A), which improves the efficiency of the decision tree classifier.
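The exact and simplified criteria can be compared numerically with the sketch below. It assumes that the multi-class simplification follows the two-class case: each ln(|E_i|/p_ij) is replaced by its first-order approximation (|E_i| - p_ij)/|E_i|. Under that assumption, the simplified sum equals (|E| ln 2) times the approximated Entropy(E|A). The sketch omits the weighting by N. The function names and the class counts are hypothetical.

```python
import math


def expected_entropy(counts):
    """Entropy(E|A) in bits; counts[i][j] = p_ij, samples of class j in E_i."""
    total = sum(sum(row) for row in counts)
    result = 0.0
    for row in counts:
        size = sum(row)
        h = -sum(c / size * math.log2(c / size) for c in row if c > 0)
        result += size / total * h
    return result


def simplified_entropy(counts):
    """Log-free criterion: sum of p_ij * (|E_i| - p_ij) / |E_i| (unweighted)."""
    result = 0.0
    for row in counts:
        size = sum(row)
        result += sum(c * (size - c) for c in row) / size
    return result


# Two hypothetical three-class attributes over the same 12 samples.
attr_x = [[4, 0, 0], [0, 3, 1], [0, 1, 3]]
attr_y = [[2, 2, 0], [1, 1, 2], [1, 1, 2]]
for name, counts in (("x", attr_x), ("y", attr_y)):
    print(name, round(expected_entropy(counts), 4),
          round(simplified_entropy(counts), 4))
```

Here attribute x scores lower on both criteria, so both would pick it. For two classes, the simplified sum reduces to 2 * S(A), which is consistent with the two-class formula.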

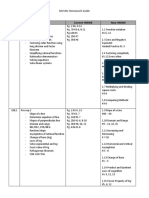

V. SAMPLE VALIDATION

A specific example is used to compare the advantages and disadvantages of the improved method described above with those of the ID3 algorithm. The training samples are listed in Table 1. Figure 1 shows the decision tree generated by ID3. The figure clearly shows ID3's preference for multi-valued attributes during attribute selection, so we reconstruct the decision tree with the optimization method proposed in this paper. First, the tree is rebuilt with the method proposed to overcome the multi-valued attribute selection problem. Figure 2 shows that this method largely overcomes ID3's preference for multi-valued attributes: the optimized tree is simpler and maintains the accuracy of the original algorithm. Figure 3 is obtained by selecting physical condition as the root node and applying this method recursively to construct the subtrees.

Table 1. Training samples

No. | Monthly income | Physical condition | Gender | Class
1 | no | healthy | male | N
2 | no | suffering three diseases | female | P
3 | no | healthy | male | N
4 | yes | suffering two diseases | male | P
5 | no | suffering three diseases | female | P
6 | no | suffering one disease | female | N
7 | yes | suffering two diseases | male | P
8 | no | suffering one disease | male | N
9 | no | suffering two diseases | female | N
10 | no | suffering two diseases | female | P
11 | no | healthy | male | P
12 | no | suffering one disease | female | P
13 | no | suffering three diseases | female | P
14 | no | healthy | male | N
15 | yes | suffering one disease | female | P


Figure 1: The decision tree constructed with the ID3 algorithm

Figure 2: The decision tree constructed while avoiding the multi-value preference

Figure 3: The decision tree constructed with the new attribute selection criterion

The construction of the decision trees shows that the attribute selection criterion changes, yet the form of the constructed decision tree remains basically consistent with that of ID3. Meanwhile, the computation is simpler and much faster. The method is therefore suitable for mining large-scale databases or data warehouses.
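As a rough check of the claim above, the sketch below computes ID3's E(A) and the simplified S(A) for the three attributes of Table 1, with counts taken directly from the table. It covers only the unweighted criteria at the root. It does not reproduce the weighting by the number of attribute values or the full trees of Figures 1 to 3. The helper names are hypothetical.

```python
import math

# Table 1 rows: (monthly income, physical condition, gender, class)
TABLE_1 = [
    ("no", "healthy", "male", "N"),
    ("no", "suffering three diseases", "female", "P"),
    ("no", "healthy", "male", "N"),
    ("yes", "suffering two diseases", "male", "P"),
    ("no", "suffering three diseases", "female", "P"),
    ("no", "suffering one disease", "female", "N"),
    ("yes", "suffering two diseases", "male", "P"),
    ("no", "suffering one disease", "male", "N"),
    ("no", "suffering two diseases", "female", "N"),
    ("no", "suffering two diseases", "female", "P"),
    ("no", "healthy", "male", "P"),
    ("no", "suffering one disease", "female", "P"),
    ("no", "suffering three diseases", "female", "P"),
    ("no", "healthy", "male", "N"),
    ("yes", "suffering one disease", "female", "P"),
]
ATTRS = {"monthly income": 0, "physical condition": 1, "gender": 2}


def branch_counts(col):
    """(p_i, n_i) for each value of the attribute in column `col`."""
    counts = {}
    for row in TABLE_1:
        p, n = counts.get(row[col], (0, 0))
        counts[row[col]] = (p + 1, n) if row[3] == "P" else (p, n + 1)
    return list(counts.values())


def info(p, n):
    """I(p, n) in bits, with 0 * log 0 taken as 0."""
    return -sum(x / (p + n) * math.log2(x / (p + n)) for x in (p, n) if x > 0)


def e_of_a(col):
    total = len(TABLE_1)
    return sum((p + n) / total * info(p, n) for p, n in branch_counts(col))


def s_of_a(col):
    return sum(p * n / (p + n) for p, n in branch_counts(col))


for name, col in ATTRS.items():
    print(f"{name:20s} E(A) = {e_of_a(col):.4f}  S(A) = {s_of_a(col):.4f}")
print("min E(A):", min(ATTRS, key=lambda a: e_of_a(ATTRS[a])))
print("min S(A):", min(ATTRS, key=lambda a: s_of_a(ATTRS[a])))
```

On these fifteen samples, both criteria select physical condition for the root: E(A) is about 0.6993 against 0.8000 and 0.8925, and S(A) is 2.5 against 3.0 and about 3.2143. This agrees with Figure 3, which uses physical condition as the root node.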

VI. CONCLUSION

Based on an analysis of common problems in decision tree construction, this paper focuses on two weaknesses of decision tree algorithms: missing attribute values and attribute selection. It puts forward the concept of Weighted Simplification Entropy and uses its value to determine the splitting attribute. On this basis, it proposes an optimization strategy for the ID3 algorithm and constructs a decision tree classifier. The example verifies that the proposed method outperforms the ID3 algorithm. It can effectively improve the efficiency, prediction accuracy, and rule conciseness of the algorithm.

REFERENCES

[1] Mobasher B, Cooley R, Jaideep S, et al. Comments on decision tree. New York: IEEE Press, 1999.

[2] Shahabi C, Zarkesh A M, Adibi J, et al. Introduction of neural network [C]. Biningham: IEEE Press, 2001.

[3] Yan T, Jacobesn M, Garcia-Molina H, et al. Introduction of genetic algorithm [C]. Paris: WAM Press, 1999.

[4] Xu L. The conception of rough set VO. Alaska: Anchorage Press, 2001.

[5] Bezdek J C. Introduction of statistical model. Ph.D. Dissertation, Mathematics, Cornell University, Ithaca, New York, 1973.

[6] Kamel M and Selim S Z. The application of induction learning algorithm. 1994, 27(3): 421-428.

[7] Zadeh L A. Introduction of ID3 algorithm. 1965, 8: 338-353.

[8] Kamel M and Selim S Z. Analysis of ID3 algorithm. 1994, 61: 177-188.

[9] Al-Sultan K S and Selim S Z. Application of decision tree. 1993, 26(9): 1357-1361.

[10] Miyamoto S and Nakayama K. The introduction of training set. 1986, 16(3): 479-482.

[11] Bezdek J C, Hathaway R, Sabin M and Tucker W. The analysis of missing property value. Man. Cybernet. 1987, 17(5): 873-877.

[12] Kameci M and Selina S Z. Central classification algorithm. 1994, 27(3): 421-428.

[13] Jamel M and Salim S Z. The construction of decision tree. 1994, 61: 177-188.

[14] Al-Susan K S and Selim S Z. The introduction of heuristic strategy. 1993, 26(9): 1357-1361.

[15] Meyanmoto S and Naayama K. Information content of data classification. IEEE T
