Leighton Byline 09

practice

doi:00.0000/ 000

architect’s control. Achieving good

Given the Internet’s bottlenecks, how can we first-mile performance and reliabil-

ity is now a fairly well-understood and

build fast, scalable, content-delivery systems? tractable problem. From the end user’s

point of view, however, a robust first

by tom Leighton mile is necessary, but not sufficient,

for achieving strong application per-

Improving

formance and reliability.

This is where the middle mile comes

in. Difficult to tame and often ignored,

the Internet’s nebulous middle mile

Performance

injects latency bottlenecks, throughput

constraints, and reliability problems

into the Web application performance

equation. Indeed, the term middle mile

on the Internet

is itself a misnomer in that it refers to

a heterogeneous infrastructure that is

owned by many competing entities and

typically spans hundreds or thousands

of miles.

This article highlights the most

serious challenges the middle mile

presents today and offers a look at the

approaches to overcoming these chal-

lenges and improving performance on

the Internet.

Stuck in the Middle

W hen it com es to achieving performance, While we often refer to the Internet as a

reliability, and scalability for commercial-grade Web single entity, it is actually composed of

13,000 different competing networks,

applications, where is the biggest bottleneck? In many each providing access to some small

cases today, we see that the limiting bottleneck is the subset of end users. Internet capacity

has evolved over the years, shaped by

middle mile, or the time data spends traveling back market economics. Money flows into

and forth across the Internet, between origin server the networks from the first and last

and end user. miles, as companies pay for hosting

and end users pay for access. First- and

This wasn’t always the case. A decade ago, the last last-mile capacity has grown 20- and

mile was a likely culprit, with users constrained to 50-fold, respectively, over the past five

to 10 years.

sluggish dial-up modem access speeds. But recent On the other hand, the Internet’s

high levels of global broadband penetration—more middle mile—made up of the peer-

than 300 million subscribers worldwide—have not ing and transit points where networks

trade traffic—is literally a no man’s

only made the last-mile bottleneck history, they have land. Here, economically, there is very

also increased pressure on the rest of the Internet little incentive to build out capacity. If

anything, networks want to minimize

infrastructure to keep pace.5 traffic coming into their networks that

Today, the first mile—that is, origin infrastructure— they don’t get paid for. As a result, peer-

tends to get most of the attention when it comes to ing points are often overburdened,

causing packet loss and service degra-

designing Web applications. This is the portion of the dation.

problem that falls most within an application The fragile economic model of peer-

1 comm unicatio ns o f the ac m | f ebr ua ry 2009 | vo l . 5 2 | no. 2

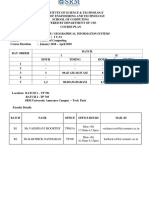

ing can have even more serious conse- Figure 1: Broadband Penetration by Country.

quences. In March 2008, for example,

two major network providers, Cogent

and Telia, de-peered over a business Broadband

dispute. For more than a week, cus- Ranking Country % > 2 Mbps

tomers from Cogent lost access to Telia — Global 59%

and the networks connected to it, and

1 South Korea 90%

vice versa, meaning that Cogent and

Telia end users could not reach certain 2 Belgium 90%

Web sites at all. 3 Japan 87%

Other reliability issues plague the 4 Hong Kong 87%

middle mile as well. Internet outages 5 Switzerland 85%

have causes as varied as transoceanic

6 Slovakia 83%

cable cuts, power outages, and DDoS

(distributed denial-of-service) attacks. 7 Norway 82%

In February 2008, for example, com- 8 Denmark 79%

munications were severely disrupted 9 Netherlands 77%

in Southeast Asia and the Middle East 10 Sweden 75%

when a series of undersea cables were

…

cut. According to TeleGeography, the

cuts reduced bandwidth connectivity 20. United States 71%

between Europe and the Middle East

by 75%.8

Internet protocols such as BGP Fast Broadband

(Border Gateway Protocol, the Inter- Ranking Country % > 5 Mbps

net’s primary internetwork routing al- — Global 19%

gorithm) are just as susceptible as the

1 South Korea 64%

physical network infrastructure. For

example, in February 2008, when Paki- 2 Japan 52%

stan tried to block access to YouTube 3 Hong Kong 37%

from within the country by broadcast- 4 Sweden 32%

ing a more specific BGP route, it acci- 5 Belgium 26%

dentally caused a near-global YouTube

6 United States 26%

blackout, underscoring the vulnerabil-

ity of BGP to human error (as well as 7 Romania 22%

foul play).2 8 Netherlands 22%

The prevalence of these Internet re- 9 Canada 18%

liability and peering-point problems

10 Denmark 18%

means that the longer data must travel

through the middle mile, the more it Source: Akamai’s State of the Internet Report, 02 2008

is subject to congestion, packet loss,

and poor performance. These middle-

mile problems are further exacerbated

by current trends—most notably the tions.4 Akamai Technologies’ data, and performance requirements in turn

increase in last-mile capacity and de- shown in Figure 1, reveals that 59% of put greater pressure on the Internet’s

mand. Broadband adoption continues its global users have broadband con- internal infrastructure—the middle

to rise, in terms of both penetration nections (with speeds greater than 2 mile. In fact, the fast-rising popular-

and speed, as ISPs invest in last-mile Mbps), and 19% of global users have ity of video has sparked debate about

infrastructure. AT&T just spent ap- “high broadband” connections great- whether the Internet can scale to meet

proximately $6.5 billion to roll out er than 5Mbps—fast enough to sup- the demand.

its U-verse service, while Verizon is port DVD-quality content.2 The high- Consider, for example, delivering

spending $23 billion to wire 18 million broadband numbers represent a 19% a TV-quality stream (2Mbps) to an au-

homes with FiOS (Fiber-optic Service) increase in just three months. dience of 50 million viewers, approxi-

by 2010.6,7 Comcast also recently an- mately the audience size of a popular TV

nounced it plans to offer speeds of up A Question of Scale show. The scenario produces aggregate

to 100Mbps within a year.3 Along with the greater demand and bandwidth requirements of 100Tbps.

Demand drives this last-mile boom: availability of broadband comes a rise This is a reasonable vision for the near

Pew Internet’s 2008 report shows that in user expectations for faster sites, term—the next two to five years—but it

one-third of U.S. broadband users have richer media, and highly interactive ap- is orders of magnitude larger than the

chosen to pay more for faster connec- plications. The increased traffic loads biggest online events today, leading to

f e b r ua ry 2 0 0 9 | vo l. 52 | n o. 2 | c om m u n ic at ion s of the acm 2
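The aggregate figure in the TV-audience scenario is plain multiplication, and a short sketch makes it easy to try other audience sizes or bitrates. The function name and the decimal unit convention (1 Tbps = 10^6 Mbps) are assumptions made for illustration; the article gives only the inputs and the result.

```python
def aggregate_bandwidth_tbps(viewers: int, stream_mbps: float) -> float:
    """Aggregate bandwidth, in Tbps, needed to send one stream to every viewer."""
    mbps_per_tbps = 1_000_000  # assumed decimal units: 1 Tbps = 10**6 Mbps
    return viewers * stream_mbps / mbps_per_tbps


if __name__ == "__main__":
    # The article's scenario: 50 million viewers, each watching a 2 Mbps stream.
    print(aggregate_bandwidth_tbps(50_000_000, 2.0))  # 100.0
```

The sketch assumes every viewer receives a separate unicast stream at the full bitrate, which is the assumption implicit in the 100 Tbps figure.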

skepticism about the Internet’s ability to handle such demand. Moreover, these numbers are just for a single TV-quality show. If hundreds of millions of end users were to download Blu-ray-quality movies regularly over the Internet, the resulting traffic load would go up by an additional one or two orders of magnitude.

Another interesting side effect of the growth in video and rich media file sizes is that the distance between server and end user becomes critical to end-user performance. This is the result of a somewhat counterintuitive phenomenon that we call the Fat File Paradox: given that data packets can traverse networks at close to the speed of light, why does it take so long for a “fat file” to cross the country, even if the network is not congested?

It turns out that because of the way the underlying network protocols work, latency and throughput are directly coupled. TCP, for example, allows only small amounts of data to be sent at a time (that is, the TCP window) before having to pause and wait for acknowledgments from the receiving end. This means that throughput is effectively throttled by network round-trip time (latency), which can become the bottleneck for file download speeds and video viewing quality.

Packet loss further complicates the problem, since these protocols back off and send even less data before waiting for acknowledgment if packet loss is detected. Longer distances increase the chance of congestion and packet loss to the further detriment of throughput.

Figure 2 illustrates the effect of distance (between server and end user) on throughput and download times. Five or 10 years ago, dial-up modem speeds would have been the bottleneck on these files, but as we look at the Internet today and into the future, middle-mile distance becomes the bottleneck.

Figure 2: Effect of Distance on Throughput and Download Times.

| Distance from Server to User | Network Latency | Typical Packet Loss | Throughput (quality) | 4GB DVD Download Time |
|---|---|---|---|---|
| Local: <100 mi. | 1.6 ms | 0.6% | 44 Mbps (HDTV) | 12 min. |
| Regional: 500–1,000 mi. | 16 ms | 0.7% | 4 Mbps (not quite DVD) | 2.2 hrs. |
| Cross-continent: ~3,000 mi. | 48 ms | 1.0% | 1 Mbps (not quite TV) | 8.2 hrs. |
| Multi-continent: ~6,000 mi. | 96 ms | 1.4% | 0.4 Mbps (poor) | 20 hrs. |

Four Approaches to Content Delivery

Given these bottlenecks and scalability challenges, how does one achieve the levels of performance and reliability required for effective delivery of content and applications over the Internet? There are four main approaches to distributing content servers in a content-delivery architecture: centralized hosting, “big data center” CDNs (content-delivery networks), highly distributed CDNs, and peer-to-peer networks.

Centralized Hosting. Traditionally architected Web sites use one or a small number of collocation sites to host content. Commercial-scale sites generally have at least two geographically dispersed mirror locations to provide additional performance (by being closer to different groups of end users), reliability (by providing redundancy), and scalability (through greater capacity).

This approach is a good start, and for small sites catering to a localized audience it may be enough. The performance and reliability fall short of expectations for commercial-grade sites and applications, however, as the end-user experience is at the mercy of the unreliable Internet and its middle-mile bottlenecks.

There are other challenges as well: site mirroring is complex and costly, as is managing capacity. Traffic levels fluctuate tremendously, so the need to provision for peak traffic levels means that expensive infrastructure will sit underutilized most of the time. In addition, accurately predicting traffic demand is extremely difficult, and a centralized hosting model does not provide the flexibility to handle unexpected surges.

“Big Data Center” CDNs. Content-delivery networks offer improved scalability by offloading the delivery of cacheable content from the origin server onto a larger, shared network. One common CDN approach can be described as “big data center” architecture: caching and delivering customer content from perhaps a couple dozen high-capacity data centers connected to major backbones.

Although this approach offers some performance benefit and economies of scale over centralized hosting, the potential improvements are limited because the CDN’s servers are still far away from most users and still deliver content from the wrong side of the middle-mile bottlenecks.

It may seem counterintuitive that having a presence in a couple dozen major backbones isn’t enough to achieve commercial-grade performance. In fact, even the largest of those networks controls very little end-user access traffic. For example, the top 30 networks combined deliver only 50% of end-user traffic, and it drops off quickly from there, with a very long tail distribution over the Internet’s 13,000 networks. Even with connectivity to all the biggest backbones, data must travel through the morass of the middle mile to reach most of the Internet’s 1.4 billion users.

A quick back-of-the-envelope calculation shows that this type of architecture hits a wall in terms of scalability as we move toward a video world. Consider a generous forward projection on such an architecture: say, 50 high-capacity data centers, each with 30 outbound connections, 10 Gbps each. This gives an upper bound of 15 Tbps total capacity for this type of network, far short of the 100 Tbps needed to support video in the near term.

Highly Distributed CDNs. Another approach to content delivery is to leverage a very highly distributed network, one with servers in thousands of networks, rather than dozens. On the surface, this architecture may appear quite similar to the “big data center” CDN. In reality,
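The coupling between latency and throughput described in the Fat File Paradox discussion can be sketched with a simple bound: if a sender may have at most one window of data in flight per round trip, throughput cannot exceed window size divided by round-trip time. The 64 KiB window and the function names below are assumptions chosen for illustration. The bound ignores packet loss, so it is looser than the throughput figures in Figure 2 and is not presented as the method used to produce them.

```python
def window_limited_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on throughput when one window is sent per round trip."""
    bits_per_round_trip = window_bytes * 8
    rtt_seconds = rtt_ms / 1000.0
    return bits_per_round_trip / rtt_seconds / 1_000_000


def download_time_hours(size_gb: float, throughput_mbps: float) -> float:
    """Hours needed to move size_gb (decimal gigabytes) at a steady throughput."""
    size_megabits = size_gb * 8 * 1000
    return size_megabits / throughput_mbps / 3600


if __name__ == "__main__":
    window = 64 * 1024  # assumed window size in bytes, for illustration only
    # Round-trip latencies taken from the Network Latency column of Figure 2.
    for label, rtt_ms in [("local", 1.6), ("regional", 16.0),
                          ("cross-continent", 48.0), ("multi-continent", 96.0)]:
        bound = window_limited_throughput_mbps(window, rtt_ms)
        hours = download_time_hours(4, bound)
        print(f"{label:16s} rtt={rtt_ms:5.1f} ms  bound={bound:7.2f} Mbps  4GB>={hours:5.2f} h")
```

Because the window is fixed, a tenfold increase in round-trip time cuts the bound tenfold, which is the paradox in miniature. As a separate check, `download_time_hours(4, 44)` gives about 0.2 hours (12 minutes) and `download_time_hours(4, 4)` about 2.2 hours, in line with the first two rows of Figure 2.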

however, it is a fundamentally different approach to content-server placement, with a difference of two orders of magnitude in the degree of distribution.

By putting servers within end-user ISPs, for example, a highly distributed CDN delivers content from the right side of the middle-mile bottlenecks, eliminating peering, connectivity, routing, and distance problems, and reducing the number of Internet components depended on for success.

Moreover, this architecture scales. It can achieve a capacity of 100 Tbps, for example, with deployments of 20 servers, each capable of delivering 1 Gbps in 5,000 edge locations.

On the other hand, deploying a highly distributed CDN is costly and time consuming, and comes with its own set of challenges. Fundamentally, the network must be designed to scale efficiently from a deployment and management perspective. This necessitates development of a number of technologies, including:

- Sophisticated global-scheduling, mapping, and load-balancing algorithms
- Distributed control protocols and reliable automated monitoring and alerting systems
- Intelligent and automated failover and recovery methods
- Colossal-scale data aggregation and distribution technologies (designed to handle different trade-offs between timeliness and accuracy or completeness)
- Robust global software-deployment mechanisms
- Distributed content freshness, integrity, and management systems
- Sophisticated cache-management protocols to ensure high cache-hit ratios

These are nontrivial challenges, and we present some of our approaches later on in this article.

Peer-to-Peer Networks. Because a highly distributed architecture is critical to achieving scalability and performance in video distribution, it is natural to consider a P2P (peer-to-peer) architecture. P2P can be thought of as taking the distributed architecture to its logical extreme, theoretically providing nearly infinite scalability. Moreover, P2P offers attractive economics under current network pricing structures.

In reality, however, P2P faces some serious limitations, most notably because the total download capacity of a P2P network is throttled by its total uplink capacity. Unfortunately, for consumer broadband connections, uplink speeds tend to be much lower than downlink speeds: Comcast’s standard high-speed Internet package, for example, offers 6 Mbps for download but only 384 Kbps for upload (one-sixteenth of download throughput).

This means that in situations such as live streaming where the number of uploaders (peers sharing content) is limited by the number of downloaders (peers requesting content), average download throughput is equivalent to the average uplink throughput and thus cannot support even mediocre Web-quality streams. Similarly, P2P fails in “flash crowd” scenarios where there is a sudden, sharp increase in demand, and the number of downloaders greatly outstrips the capacity of uploaders in the network.

Somewhat better results can be achieved with a hybrid approach, leveraging P2P as an extension of a distributed delivery network. In particular, P2P can help reduce overall distribution costs in certain situations. Because the capacity of the P2P network is limited, however, the architecture of the non-P2P portion of the network still governs overall performance and scalability.

Each of these four network architectures has its trade-offs, but ultimately, for delivering rich media to a global Web audience, a highly distributed architecture provides the only robust solution for delivering commercial-grade performance, reliability, and scale.

Application Acceleration

Historically, content-delivery solutions have focused on the offloading and delivery of static content, and thus far we have focused our conversation on the same. As Web sites become increasingly dynamic, personalized, and application-driven, however, the ability to accelerate uncacheable content becomes equally critical to delivering a strong end-user experience.

Ajax, Flash, and other RIA (rich Internet application) technologies work to enhance Web application responsiveness on the browser side, but ultimately, these types of applications
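The capacity figures quoted for the delivery architectures follow from the same back-of-the-envelope arithmetic, which the sketch below reproduces. The function names are hypothetical. The inputs are the article's own: 50 data centers with 30 connections of 10 Gbps, 5,000 edge locations with 20 servers of 1 Gbps, and a 384 Kbps consumer uplink. The P2P function assumes, as in the article's live-streaming case, that downloads are fed only by peer uplinks.

```python
def total_capacity_tbps(sites: int, units_per_site: int, gbps_per_unit: float) -> float:
    """Upper bound on delivery capacity: sites x units per site x Gbps per unit."""
    return sites * units_per_site * gbps_per_unit / 1000.0


def p2p_average_download_kbps(uploaders: int, uplink_kbps: float, downloaders: int) -> float:
    """Average download rate when all delivered data comes from peer uplinks."""
    return uploaders * uplink_kbps / downloaders


if __name__ == "__main__":
    print(total_capacity_tbps(50, 30, 10))    # "big data center" CDN: 15.0
    print(total_capacity_tbps(5000, 20, 1))   # highly distributed CDN: 100.0
    # Live stream in which every peer both uploads and downloads.
    print(p2p_average_download_kbps(1000, 384, 1000))  # 384.0
```

With as many uploaders as downloaders, the average download rate equals the 384 Kbps uplink rate, well below the 2 Mbps the article uses for a TV-quality stream.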

all still require significant numbers of round-trips back to the origin server. This makes them highly susceptible to all the bottlenecks I’ve mentioned before: peering-point congestion, network latency, poor routing, and Internet outages.

Speeding up these round-trips is a complex problem, but many optimizations are made possible by using a highly distributed infrastructure.

Optimization 1: Reduce transport-layer overhead. Architected for reliability over efficiency, protocols such as TCP have substantial overhead. They require multiple round-trips (between the two communicating parties) to set up connections, use a slow initial rate of data exchange, and recover slowly from packet loss. In contrast, a network that uses persistent connections and optimizes parameters for efficiency (given knowledge of current network conditions) can significantly improve performance by reducing the number of round-trips needed to deliver the same set of data.

Optimization 2: Find better routes. In addition to reducing the number of round-trips needed, we would also like to reduce the time needed for each round-trip, that is, each journey across the Internet. At first blush, this does not seem possible. All Internet data must be routed by BGP and must travel over numerous autonomous networks.

BGP is simple and scalable but not very efficient or robust. By leveraging a highly distributed network, one that offers potential intermediary servers on many different networks, you can actually speed up uncacheable communications by 30% to 50% or more, by using routes that are faster and much less congested. You can also achieve much greater communications reliability by finding alternate routes when the default routes break.

Optimization 3: Prefetch embedded content. You can do a number of additional things at the application layer to improve Web application responsiveness for end users. One is to prefetch embedded content: while an edge server is delivering an HTML page to an end user, it can also parse the HTML and retrieve all embedded content before it is requested by the end user’s browser.

The effectiveness of this optimization relies on having servers near end users, so that users perceive a level of application responsiveness akin to that of an application being delivered directly from a nearby server, even though, in fact, some of the embedded content is being fetched from the origin server across the long-haul Internet. Prefetching by forward caches, for example, does not provide this performance benefit because the prefetched content must still travel over the middle mile before reaching the end user. Also, note that unlike link prefetching (which can also be done), embedded content prefetching does not expend extra bandwidth resources and does not request extraneous objects that may not be requested by the end user.

With current trends toward highly personalized applications and user-generated content, there’s been growth in either uncacheable or long-tail (that is, not likely to be in cache) embedded content. In these situations, prefetching makes a huge difference in the user-perceived responsiveness of a Web application.

Optimization 4: Assemble pages at the edge. The next three optimizations involve reducing the amount of content that needs to travel over the middle mile. One approach is to cache page fragments at edge servers and dynamically assemble them at the edge in response to end-user requests. Pages can be personalized (at the edge) based on characteristics including the end user’s location, connection speed, cookie values, and so forth. Assembling the page at the edge not only offloads the origin server, but also results in much lower latency to the end user, as the middle mile is avoided.

Optimization 5: Use compression and delta encoding. Compression of HTML and other text-based components can reduce the amount of content traveling over the middle mile to one-tenth of the original size. The use of delta encoding, where a server sends only the difference between a cached HTML page and a dynamically generated version, can also greatly cut down on the amount of content that must travel over the long-haul Internet.

While these techniques are part of the HTTP/1.1 specification, browser support is unreliable. By using a highly distributed network that controls both endpoints of the middle mile, compression and delta encoding can be successfully employed regardless of the browser. In this case, performance is improved because very little data travels over the middle mile. The edge server then decompresses the content or applies the delta encoding and delivers the complete, correct content to the end user.

Optimization 6: Offload computations to the edge. The ability to distribute applications to edge servers provides the ultimate in application performance and scalability. Akamai’s network enables distribution of J2EE applications to edge servers that create virtual application instances on demand, as needed. As with edge page assembly, edge computation enables complete origin server offloading, resulting in tremendous scalability and extremely low application latency for the end user.

While not every type of application is an ideal candidate for edge computation, large classes of popular applications (such as contests, product catalogs, store locators, surveys, product configurators, games, and the like) are well suited for edge computation.

“The real growth in bandwidth-intensive Web content, rich media, and Web- and IP-based applications is just beginning. The challenges presented by this growth are many.”

Putting it All Together

Many of these techniques require a highly distributed network. Route optimization, as mentioned, depends on the availability of a vast overlay network that includes machines on many different networks. Other optimizations such as prefetching and page assembly are most effective if the delivering server is near the end user. Finally, many transport and application-layer optimizations require bi-nodal connections within the network (that is, you control both endpoints). To maximize the effect of this optimized connection, the endpoints should be as close as possible to the origin server and the end user.

Note also that these optimizations work in synergy. TCP overhead is in large part a result of a conservative approach that guarantees reliability in the face of unknown network conditions. Because route optimization gives us high-performance, congestion-free paths, it allows for a much more aggressive and efficient approach to transport-layer optimizations.

Highly Distributed Network Design

It was briefly mentioned earlier that building and managing a robust, highly distributed network is not trivial. At Akamai, we sought to build a system with extremely high reliability (no downtime, ever) and yet scalable enough to be managed by a relatively small operations staff, despite operating in a highly heterogeneous and unreliable environment. Here are some insights into the design methodology.

The fundamental assumption behind Akamai’s design philosophy is that a significant number of component or other failures are occurring at all times in the network. Internet systems present numerous failure modes, such as machine failure, data-center failure, connectivity failure, software failure, and network failure, all occurring with greater frequency than one might think. As mentioned earlier, for example, there are many causes of large-scale network outages, including peering problems, transoceanic cable cuts, and major virus attacks.

Designing a scalable system that works under these conditions means embracing the failures as natural and expected events. The network should continue to work seamlessly despite these occurrences. We have identified some practical design principles that result from this philosophy, which we share here.1

Principle 1: Ensure significant redundancy in all systems to facilitate failover. Although this may seem obvious and simple in theory, it can be challenging in practice. Having a highly distributed network enables a great deal of redundancy, with multiple backup possibilities ready to take over if a component fails. To ensure robustness of all systems, however, you will likely need to work around the constraints of existing protocols and interactions with third-party software, as well as balancing trade-offs involving cost.

For example, the Akamai network relies heavily on DNS (Domain Name System), which has some built-in constraints that affect reliability. One example is DNS’s restriction on the size of responses, which limits the number of IP addresses that we can return to a relatively static set of 13. The Generic Top Level Domain servers, which supply the critical answers to akamai.net queries, required more reliability, so we took several steps, including the use of IP Anycast.

We also designed our system to take into account DNS’s use of TTLs (time to live) to fix resolutions for a period of time. Though the efficiency gained through TTL use is important, we need to make sure users aren’t being sent to servers based on stale data. Our approach is to use a two-tier DNS, employing longer TTLs at a global level and shorter TTLs at a local level, allowing less of a trade-off between DNS efficiency and responsiveness to changing conditions. In addition, we have built in appropriate failover mechanisms at each level.

Principle 2: Use software logic to provide message reliability. This design principle speaks directly to scalability. Rather than building dedicated links between data centers, we use the public Internet to distribute data (including control messages, configurations, monitoring information, and customer content) throughout our network. We improve on the performance of existing Internet protocols, for example, by using multirouting and limited retransmissions with UDP (User Datagram Protocol) to achieve reliability without sacrificing latency. We also use software to route data through intermediary servers to ensure communications (as described in Optimization 2), even when major disruptions (such as cable cuts) occur.

Principle 3: Use distributed control for coordination. Again, this principle is important both for fault tolerance and scalability. One practical example is the use of leader election, where leadership evaluation can depend on many factors including machine status, connectivity to other machines in the network, and monitoring capabilities. When connectivity of a local lead server degrades, for example, a new server is automatically elected to assume the role of leader.

Principle 4: Fail cleanly and restart. Based on the previous principles, the network has already been architected to handle server failures quickly and seamlessly, so we are able to take a more aggressive approach to failing problematic servers and restarting them from a last known good state. This sharply reduces the risk of operating in a potentially corrupted state. If a given machine continues to require
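The leadership evaluation mentioned under Principle 3 can be illustrated with a small selection rule. This is a sketch under stated assumptions, not Akamai's algorithm: the `Candidate` fields, the `min_peers` threshold, and the tie-breaking rule are all hypothetical, and the code shows only the evaluation step that each machine could apply to a shared view of its region. The agreement protocol needed to run such an election in a truly distributed setting is out of scope.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    healthy: bool          # machine status
    reachable_peers: int   # connectivity to other machines in the region
    can_monitor: bool      # monitoring capability


def elect_leader(candidates: Iterable[Candidate], min_peers: int) -> Optional[Candidate]:
    """Return the eligible candidate with the best connectivity, or None.

    Ties are broken by name so that every evaluator picks the same leader.
    """
    eligible = [c for c in candidates
                if c.healthy and c.can_monitor and c.reachable_peers >= min_peers]
    if not eligible:
        return None
    return min(eligible, key=lambda c: (-c.reachable_peers, c.name))


if __name__ == "__main__":
    region = [Candidate("a", True, 9, True),
              Candidate("b", True, 7, True),
              Candidate("c", True, 8, True)]
    print(elect_leader(region, min_peers=5).name)  # a

    # The lead server's connectivity degrades, so a new leader is chosen.
    degraded = [Candidate("a", True, 2, True)] + region[1:]
    print(elect_leader(degraded, min_peers=5).name)  # c
```

Making the rule deterministic means that machines holding the same status information reach the same answer without extra coordination, which is one simple way to keep control distributed.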

restarting, we simply put it into a “long sleep” mode to minimize impact to the overall network.

Principle 5: Phase software releases. After passing the quality assurance (QA) process, software is released to the live network in phases. It is first deployed to a single machine. Then, after performing the appropriate checks, it is deployed to a single region, then possibly to additional subsets of the network, and finally to the entire network. The nature of the release dictates how many phases and how long each one lasts. The previous principles, particularly use of redundancy, distributed control, and aggressive restarts, make it possible to deploy software releases frequently and safely using this phased approach.

Principle 6: Notice and proactively quarantine faults. The ability to isolate faults, particularly in a recovery-oriented computing system, is perhaps one of the most challenging problems and an area of important ongoing research. Here is one example. Consider a hypothetical situation where requests for a certain piece of content with a rare set of configuration parameters trigger a latent bug. Automatically failing the servers affected is not enough, as requests for this content will then be directed to other machines, spreading

our worldwide network, without disrupting our always-on services.

Minimal operations overhead. A large, highly distributed, Internet-based network can be very difficult to maintain, given its sheer size, number of network partners, heterogeneous nature, and diversity of geographies, time zones, and languages. Because the Akamai network design is based on the assumption that components will fail, however, our operations team does not need to be concerned about most failures. In addition, the team can aggressively suspend machines or data centers if it sees any slightly worrisome behavior. There is no need to rush to get components back online right away, as the network absorbs the component failures without impact to overall service.

This means that at any given time, it takes only eight to 12 operations staff members, on average, to manage our network of approximately 40,000 devices (consisting of more than 35,000 servers plus switches and other networking hardware). Even at peak times, we successfully manage this global, highly distributed network with fewer than 20 staff members.

Lower costs, easier to scale. In addition to the minimal operational staff needed to manage such a large net-

(games, movies, sports) shifts to the Internet from other broadcast media, the stresses placed on the Internet’s middle mile will become increasingly apparent and detrimental. As such, we believe the issues raised in this article and the benefits of a highly distributed approach to content delivery will only grow in importance as we collectively work to enable the Internet to scale to the requirements of the next generation of users.

References

1. Afergan, M., Wein, J., LaMeyer, A. Experience with some principles for building an Internet-scale reliable system. In Proceedings of the 2nd Conference on Real, Large Distributed Systems 2. (These principles are laid out in more detail in this 2005 research paper.)
2. Akamai Report: The State of the Internet, 2nd quarter, 2008; http://www.akamai.com/stateoftheinternet/. (These and other recent Internet reliability events are discussed in Akamai’s quarterly report.)
3. Anderson, N. Comcast at CES: 100 Mbps connections coming this year. Ars Technica (Jan. 8, 2008); http://arstechnica.com/news.ars/post/20080108-comcast-100mbps-connections-coming-this-year.html.
4. Horrigan, J.B. Home Broadband Adoption 2008. Pew Internet and American Life Project; http://www.pewinternet.org/pdfs/PIP_Broadband_2008.pdf.
5. Internet World Statistics. Broadband Internet Statistics: Top World Countries with Highest Internet Broadband Subscribers in 2007; http://www.internetworldstats.com/dsl.htm.
6. Mehta, S. Verizon’s big bet on fiber optics. Fortune (Feb. 22, 2007); http://money.cnn.com/magazines/fortune/fortune_archive/2007/03/05/8401289/.
7. Spangler, T. AT&T: U-verse TV spending to increase. Multichannel News (May 8, 2007); http://www.multichannel.com/article/CA6440129.html.
8. TeleGeography. Cable cuts disrupt Internet in Middle East and India. CommsUpdate (Jan. 31, 2008); http://www.telegeography.com/cu/article.php?article_id=21528.

the problem. To solve this problem, work, this design philosophy has had

our caching algorithms constrain each several implications that have led to Tom Leighton co-founded Akamai Technologies in August

1998. Serving as chief scientist and as a director to the

set of content to certain servers so as reduced costs and improved scalabil- board, he is Akamai’s technology visionary, as well as

to limit the spread of fatal requests. In ity. For example, we use commodity a key member of the executive committee setting the

company’s direction. He is an authority on algorithms for

general, no single customer’s content hardware instead of more expensive, network applications. Leighton is a Fellow of the American

footprint should dominate any other more reliable servers. We deploy in Academy of Arts and Sciences, the National Academy of

Science, and the National Academy of Engineering.

customer’s footprint among available third-party data centers instead of hav-

servers. These constraints are dynami- ing our own. We use the public Internet A previous version of this article appeared in the October

2008 issue of ACM Queue magazine.

cally determined based on current lev- instead of having dedicated links. We

els of demand for the content, while deploy in greater numbers of smaller © 2009 ACM 0001-0782/09/0200 $5.00

keeping the network safe. regions—many of which host our serv-

ers for free—rather than in fewer, larg-

Practical Results and Benefits er, more “reliable” data centers where

Besides the inherent fault-tolerance congestion can be greatest.

benefits, a system designed around

these principles offers numerous other Conclusion

benefits. Even though we’ve seen dramatic ad-

Faster software rollouts. Because the vances in the ubiquity and usefulness

network absorbs machine and regional of the Internet over the past decade,

failures without impact, Akamai is able the real growth in bandwidth-intensive

to safely but aggressively roll out new Web content, rich media, and Web-

software using the phased rollout ap- and IP-based applications is just begin-

proach. As a benchmark, we have his- ning. The challenges presented by this

torically implemented approximately growth are many: as businesses move

22 software releases and 1,000 custom- more of their critical functions on-

er configuration releases per month to line, and as consumer entertainment
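The phased release process of Principle 5 (a single machine, then a single region, then wider subsets, then the entire network) can be illustrated with a small sketch. This is not Akamai's release tooling: the names `Phase` and `phased_rollout` and the `deploy`, `healthy`, and `rollback` callbacks are hypothetical, and rolling back every touched machine on a failed check is an assumption the article does not state.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Phase:
    """One stage of a release, e.g. a single machine or a single region."""
    name: str
    targets: List[str]


def phased_rollout(
    phases: List[Phase],
    deploy: Callable[[str], None],
    healthy: Callable[[str], bool],
    rollback: Callable[[str], None],
) -> List[str]:
    """Deploy phase by phase and return the names of the phases that passed.

    After each phase, every target in that phase is checked. On the first
    failed check the rollout stops, and (as an assumption of this sketch)
    every machine touched so far is rolled back, newest first.
    """
    completed: List[str] = []
    deployed: List[str] = []
    for phase in phases:
        for target in phase.targets:
            deploy(target)
            deployed.append(target)
        if not all(healthy(target) for target in phase.targets):
            for target in reversed(deployed):
                rollback(target)
            return completed
        completed.append(phase.name)
    return completed
```

A caller would list the phases from narrowest to widest, so that a bad release is caught while it affects as few machines as possible. How many phases to use and how long to wait before each check would depend on the nature of the release.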

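The quarantine idea in Principle 6, constraining each set of content to certain servers so that a fatal request cannot spread across the network, can be sketched as follows. The article does not describe the actual caching algorithm; this sketch swaps in rendezvous (highest-random-weight) hashing as one plausible way to pick a fixed subset of servers per content set. The names `allowed_servers` and `route` and the parameter `k` are hypothetical.

```python
import hashlib
from typing import List, Optional, Sequence, Set


def allowed_servers(content_id: str, servers: Sequence[str], k: int) -> List[str]:
    """Return the k servers permitted to serve this content set.

    Each (content, server) pair gets a deterministic pseudo-random score;
    the k highest-scoring servers form the content's footprint.
    """
    def score(server: str) -> int:
        digest = hashlib.sha256(f"{content_id}|{server}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return sorted(servers, key=score, reverse=True)[:k]


def route(
    content_id: str, servers: Sequence[str], failed: Set[str], k: int
) -> Optional[str]:
    """Pick a live server from the content's footprint, or None.

    Requests are never sent outside the footprint, so content that
    triggers a latent bug can take down at most k servers.
    """
    for server in allowed_servers(content_id, servers, k):
        if server not in failed:
            return server
    return None
```

In this sketch `k` is a fixed argument. To reflect constraints that are determined dynamically from current demand, a caller could compute `k` per content set from observed request rates, which is left out here.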

