e-ISSN (O): 2348-4470

Scientific Journal of Impact Factor (SJIF): 4.72

p-ISSN (P): 2348-6406

International Journal of Advance Engineering and Research Development

Volume 4, Issue 1, January -2017

A System To Measure Text Similarity

Ankush Koul¹, Manvi Gupta², Pranav Tervankar³, Prof. Rahul Kadam⁴

¹,²,³,⁴ Department of Computer Engineering, Dr. D. Y. Patil College of Engineering, Pune

Abstract: The aim of the proposed text similarity system is to measure the semantic similarity between two documents using the most efficient algorithm available. Measuring text similarity improves both the speed and the accuracy of search engine queries, so it has become increasingly important with the growth of the web. For a human it is easy to find two similar documents on a particular topic, but for a computer to be trained to measure this, stemming and query expansion techniques are used. The text similarity tool is used to measure the degree of plagiarism between documents and, to a certain extent, their originality.

Keywords: text similarity tool, Fuzzy algorithm

I. INTRODUCTION

Textual similarity is a concept that can be seen as a way of describing the similarity between strings. A string can carry meaning, i.e. semantics can be derived from it. The semantic similarity of strings is a special case of semantic relatedness, which has roots in computing and dates back to 1968. Semantic similarity is used to identify similar concepts, whereas semantic relatedness is used to identify related concepts. For example, a car and a steering wheel are more related than a car and a bicycle, but the latter pair would be considered more similar. Semantic relatedness is used in the Google search engine, where it helps determine whether strings are related in terms of their occurrences on a web page. An often used tool for similarity measurements is the WordNet project developed at Princeton. It consists of words from the English language, where every word is related to other words through relations such as synonymy, antonymy and more advanced concepts such as hyponymy and hypernymy. These relations allow the user of WordNet to find that a car and a steering wheel are related, that dark and light are antonyms, that bike and bicycle are synonyms, and so on.

This project proposes two different solutions for measuring textual similarity: one using the WordNet project to measure the semantic similarity, and one using the so-called edit distance between strings. These solutions are very different in nature, so a comparison between them is made as well. This report describes the development of a tool for measuring textual similarity.
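The edit distance mentioned above can be illustrated with a minimal sketch. It assumes the Levenshtein variant (single-character insertions, deletions and substitutions, each with cost 1); the paper does not state which variant the tool uses. The function names and the normalisation by the longer string's length are illustrative choices, not taken from the tool.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions or
    substitutions needed to turn a into b (assumed unit costs)."""
    if len(a) < len(b):
        a, b = b, a  # keep the shorter string in the inner loop
    previous = list(range(len(b) + 1))  # distances from "" to prefixes of b
    for i, ch_a in enumerate(a, start=1):
        current = [i]  # distance from a[:i] to ""
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # delete ch_a
                current[j - 1] + 1,      # insert ch_b
                previous[j - 1] + cost,  # substitute (or match)
            ))
        previous = current
    return previous[-1]


def edit_similarity(a: str, b: str) -> float:
    """Map the distance to [0, 1]; 1.0 means the strings are identical.
    Dividing by the longer length is an assumption made for this sketch."""
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0
    return 1.0 - levenshtein(a, b) / longest


if __name__ == "__main__":
    print(levenshtein("bike", "bicycle"), edit_similarity("bike", "bicycle"))
```

Note that this measure looks only at characters: "bike" and "bicycle" are synonyms, yet they differ by several edits, which is why a semantic measure is proposed alongside it.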

Semantic similarity measurement has been a central issue in artificial intelligence, psychology and cognitive science for many years. It has been widely used in natural language processing, information retrieval, word sense disambiguation, text segmentation, question answering, recommender systems, information extraction and so on. In recent years, measures based on WordNet have attracted great interest. They have shown their abilities and made these applications more intelligent. Many semantic similarity measures have been proposed. On the whole, all the measures can be classified into four classes: path length based measures, information content based measures, feature based measures, and hybrid measures.
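To make the first of these classes concrete, the sketch below computes a path length based score over a tiny hand-made taxonomy. The taxonomy is hypothetical and is not real WordNet data; the score 1 / (1 + shortest path length) is one common form of path based similarity, used here as an assumption rather than as the measure adopted in this paper.

```python
from collections import deque

# Hypothetical mini-taxonomy: each word points to its hypernym (parent).
# Invented for illustration only; real WordNet is far larger.
HYPERNYM = {
    "car": "motor_vehicle",
    "motor_vehicle": "vehicle",
    "bicycle": "vehicle",
    "vehicle": "artifact",
    "steering_wheel": "control",
    "control": "artifact",
}


def build_graph(hypernym):
    """Turn child -> parent links into an undirected adjacency map."""
    graph = {}
    for child, parent in hypernym.items():
        graph.setdefault(child, set()).add(parent)
        graph.setdefault(parent, set()).add(child)
    return graph


GRAPH = build_graph(HYPERNYM)


def path_length(a, b, graph=GRAPH):
    """Number of edges on the shortest path between a and b (breadth-first
    search); None if either word is unknown or no path exists."""
    if a not in graph or b not in graph:
        return None
    seen = {a}
    queue = deque([(a, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == b:
            return dist
        for nxt in graph[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None


def path_similarity(a, b, graph=GRAPH):
    """Shorter paths give higher similarity; unknown words score 0.0."""
    dist = path_length(a, b, graph)
    return 0.0 if dist is None else 1.0 / (1.0 + dist)


if __name__ == "__main__":
    print(path_similarity("car", "bicycle"))
    print(path_similarity("car", "steering_wheel"))
```

In this toy taxonomy a car ends up closer to a bicycle than to a steering wheel, which matches the distinction between similarity and relatedness drawn in the introduction.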

II. LITERATURE SURVEY

1. Learning Semantic Similarity for Very Short Texts

Leveraging data on social media, such as Twitter and Facebook, requires information retrieval algorithms that are able to relate very short text fragments to each other. Traditional text similarity methods such as tf-idf cosine similarity, which are based on word overlap, mostly fail to produce good results in this case, since word overlap is small or nonexistent. Recently, distributed word representations, or word embeddings, have been shown to successfully allow words to match on the semantic level. In order to pair short text fragments (each a concatenation of separate words), an adequate distributed sentence representation is needed, which in the existing literature is often obtained by naively combining the individual word representations. The authors therefore investigate several text representations as a combination of word embeddings in the context of semantic pair matching. The paper investigates the effectiveness of several such naive techniques, as well as traditional tf-idf similarity, for fragments of different lengths. Its main contribution is a first step towards a hybrid method that combines the strength of dense distributed representations (as opposed to sparse term matching) with the strength of tf-idf based methods to automatically reduce the impact of less informative terms. The new approach outperforms the existing techniques in a toy experimental set-up, leading to the conclusion that the combination of word embeddings and tf-idf information might lead to a better model for semantic content within very short text fragments.
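The word-overlap limitation described in this survey item can be seen in a small sketch of tf-idf cosine similarity. This is not the surveyed paper's code: the whitespace tokenizer, the function names and the smoothed idf formula, log((1 + N) / (1 + df)) + 1, are assumptions chosen to keep the example self-contained.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase and split on whitespace (a deliberately naive tokenizer)."""
    return text.lower().split()


def tfidf_vectors(docs):
    """Return one sparse {term: weight} vector per document.
    Uses raw term counts and an assumed smoothed idf variant."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    idf = {t: math.log((1 + n_docs) / (1 + df)) + 1.0
           for t, df in doc_freq.items()}
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append({t: counts[t] * idf[t] for t in counts})
    return vectors


def cosine(u, v):
    """Cosine similarity of two sparse vectors; 0.0 if either is empty."""
    dot = sum(w * v[t] for t, w in u.items() if t in v)
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


if __name__ == "__main__":
    docs = ["the cat sat", "a dog barked", "the cat sat"]
    vecs = tfidf_vectors(docs)
    print(cosine(vecs[0], vecs[1]))  # no shared words
    print(cosine(vecs[0], vecs[2]))  # identical texts
```

Two short fragments that share no words score zero regardless of how close they are in meaning, which is the failure mode that motivates combining tf-idf weights with word embeddings.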

@IJAERD-2017, All rights Reserved 332


2. Using NLP Techniques and Fuzzy Semantic Similarity for Automatic Plagiarism Detection

Plagiarism is one of the most serious offences in academia and research. In this era, where access to information has become much easier, the act of plagiarism is rapidly increasing. This paper focuses on external plagiarism detection, where a source collection of documents is available against which the suspicious documents are compared. The primary focus is to detect intelligent plagiarism cases, where semantic and linguistic variations play a crucial role. The paper explores various pre-processing methods based on natural language processing (NLP) techniques. It further explores fuzzy-semantic similarity measures for document comparison. The system is finally evaluated using the PAN 2012 data set, and the performance of the different methods is compared.

3. Research on Text Similarity Computing Based on Word Vector Model of Neural Networks

Text similarity computing plays a vital role in natural language processing. In this paper, the authors build a word vector model based on a neural network and train it on Chinese corpora from Sohu News, World News, and so on. A method of calculating the semantic similarity of texts using word vectors is then proposed. Finally, through comparison with the traditional TF-IDF calculation method, the experimental results show that the method is effective.
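A minimal sketch of similarity computed from word vectors is given below. It assumes the simplest combination, averaging the vectors of the known words in each text and taking the cosine of the two averages; the surveyed paper may combine vectors differently. The three-dimensional vectors are invented for illustration, whereas a real system would load vectors trained on a corpus.

```python
import math

# Hypothetical toy word vectors, invented for this example.
VECTORS = {
    "car": [0.90, 0.10, 0.00],
    "automobile": [0.85, 0.15, 0.05],
    "banana": [0.00, 0.20, 0.90],
    "fruit": [0.05, 0.25, 0.85],
}


def text_vector(text, vectors=VECTORS):
    """Average the vectors of all known words; None if no word is known."""
    known = [vectors[w] for w in text.lower().split() if w in vectors]
    if not known:
        return None
    dim = len(known[0])
    return [sum(v[i] for v in known) / len(known) for i in range(dim)]


def cosine(u, v):
    """Cosine similarity of two dense vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def text_similarity(a, b, vectors=VECTORS):
    """Similarity of two texts; 0.0 when either has no known words."""
    va, vb = text_vector(a, vectors), text_vector(b, vectors)
    if va is None or vb is None:
        return 0.0
    return cosine(va, vb)


if __name__ == "__main__":
    print(text_similarity("car", "automobile"))
    print(text_similarity("car", "banana"))
```

Unlike TF-IDF, this approach can give a high score to texts that share no words, provided their words lie close together in the vector space.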

III. PROPOSED SYSTEM

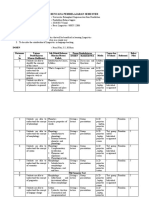

The system for the semantic textual similarity task has two main modules: a lexical module and a syntactic module based on dependency parsing. Both modules involve preprocessing tasks such as stop word removal, co-reference resolution and dependency parsing.

Figure 1 shows the architecture of the system.

Figure 1: Proposed System Architecture
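As an illustration of the preprocessing and the lexical module, the sketch below removes stop words and then scores two texts by the overlap of their remaining tokens. The short stop word list and the use of Jaccard overlap are assumptions made for the example; the paper does not specify the lexical measure. Co-reference resolution and dependency parsing are outside the scope of this sketch.

```python
import re

# Small hypothetical stop word list; a real system would use a fuller one.
STOP_WORDS = frozenset(
    {"a", "an", "the", "is", "are", "of", "to", "and", "in", "on"}
)


def preprocess(text):
    """Lowercase, keep alphanumeric tokens, and drop stop words."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]


def lexical_overlap(a, b):
    """Jaccard overlap of the two token sets after preprocessing
    (an assumed stand-in for the lexical module's measure)."""
    set_a, set_b = set(preprocess(a)), set(preprocess(b))
    if not set_a and not set_b:
        return 1.0  # two empty texts are treated as identical
    return len(set_a & set_b) / len(set_a | set_b)


if __name__ == "__main__":
    print(preprocess("The car is on the road."))
    print(lexical_overlap("The car is red", "A red car"))
```

Removing stop words first keeps very frequent function words from inflating the overlap between otherwise unrelated texts.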

ADVANTAGES OF PROPOSED SYSTEM:

Computing text similarity is a foundational technique for a wide range of tasks in natural language processing, such as duplicate detection, question answering, and automatic essay grading. Text similarity measures play an increasingly important role in text related research and applications, in tasks such as information retrieval, text classification, document clustering, topic detection, topic tracking, question generation, question answering, essay scoring, short answer scoring, machine translation, text summarization and others. Finding the similarity between words is a fundamental part of text similarity, and it is then used as a primary stage for sentence, paragraph and document similarity.


V. CONCLUSION

The main goal of this project was to implement a tool for measuring textual similarity between texts. Two methods have been proposed as solutions to this problem: measuring the semantic similarity, a special case of textual similarity in which the meaning of words is taken into account, using the WordNet database; and measuring the similarity using edit distance. Both methods have been successfully implemented in the final tool. As a minimum requirement, it was specified that it should be possible to compare the performance of the methods. This functionality has been implemented and is visualized in the graphical interface as two bar charts showing the performance of each method.

We have conducted several tests on the functionality and the code to ensure the tool is working properly. These tests ran successfully, so we conclude that all known errors have been removed and that each method behaves as intended. This fact is, however, not enough for the final tool to be satisfactory. If the measurements are inaccurate in terms of what a human would consider similar, the tool is not very useful. Therefore, several test persons evaluated the similarity of a number of texts. The average similarity value for each pair of texts was then compared to the results given by the tool, to validate that the tool provides useful information. The results show that edit distance yields the best results, with a correlation of 0.96, whereas WordNet gave a coefficient of 0.76.

Several of the proposed extensions have been implemented. The design of the similarity engine made it easy to implement extensions that allow the user to compare more than two texts at a time. Using genetic algorithms, we have visualized the results of a comparison between multiple texts by creating a map where similar texts are grouped together. The tool has been subject to different optimizations, all of which have improved the overall performance. The most effective optimization was a restructuring of the text processing code, which led to an increase in performance of up to four times. Threads were implemented to allow the use of multiple processing units. This optimization increased performance by up to fifteen on a computer with two processing units.

The final result is satisfactory. The tool meets the minimum requirements, and several extensions have been implemented. By comparing the measurements performed by the tool with human evaluations, we can conclude that the tool is able to measure textual similarity with little deviation from what a human would consider similar.
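The validation step, comparing averaged human ratings with the tool's scores, can be sketched as a correlation computation. The paper reports correlations without naming the coefficient; Pearson's r is assumed here. The ratings and scores below are made-up placeholders, not the study's data.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0.0 or var_y == 0.0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical example values: average human rating and tool score
# for four text pairs, each on a 0..1 scale.
human_ratings = [0.9, 0.2, 0.6, 0.4]
tool_scores = [0.8, 0.3, 0.5, 0.5]

if __name__ == "__main__":
    print(pearson(human_ratings, tool_scores))
```

A coefficient close to 1 indicates that the tool ranks text pairs in much the same way as the human evaluators do.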

ACKNOWLEDGMENT

We would like to thank the researchers and publishers for making their resources available. We are also grateful to the reviewers for their valuable suggestions, and we thank the college authorities for providing the required infrastructure and support.

