International Journal on Recent and Innovation Trends in Computing and Communication

ISSN: 2321-8169

Volume: 4 Issue: 4

190 - 193

___________________________________________________________________________________________________________________

Extracting Reviews of Mobile Applications from Google Play Store

Palak Thakur, Asmita Raut, Salina Bharthu

ADIT, A.D. Patel Institute of Technology, Gujarat, India

Prof. Bhagirath Prajapati

Assoc. Professor, ADIT, A.D. Patel Institute of Technology, Gujarat, India

Prof. Priyanka Puvar

Asst. Professor, ADIT, A.D. Patel Institute of Technology, Gujarat, India

Abstract- A huge number of mobile applications are now entering the market. Many of them provide similar functionality and come from unknown vendors. Users are often unaware of the risks and malfunctions associated with these applications and may end up downloading an inappropriate one. Classification of applications has therefore become important. Such classification can be based on extracting data about an application and analysing it. Reviews have become an asset for users' decision making, so applications can be classified on the basis of an analysis of their reviews. In this paper we extract the reviews of various mobile applications using crawling and scraping, and we discuss the use of JSON and Jsoup.

Keywords- Scraper, Web Crawler, focused crawling, reviews, mobile applications, Jsoup, JSON

__________________________________________________*****_________________________________________________

I. INTRODUCTION

In today's world, the use of a mobile device is not limited to calling and texting. Over time, these devices have come to provide many facilities, the most notable being internet service, through which the user can use email, access social networking sites, and so on. Internet access on the device also makes many other kinds of applications available to users, each with a different purpose. Initially only a few applications were available, so users had little to think about before using one. Nowadays many applications with the same purpose are available, so users are unsure which one is preferable and may end up downloading an inappropriate application. To help the user select the most preferable application, we will assign a risk score to each application on the basis of its reviews. We will extract the reviews from the Google Play Store.

A Web Crawler is a special program that visits a Web site, reads its pages, and collects the raw contents in order to create entries for further work[1]. The crawler will be used to extract entire pages from the Google Play Store. We will then extract only the reviews from the pages available for a particular application. This is done through the scraping process. Web scraping is a technique for extracting useful information from a specific website. Its main purpose is to extract the required information and transform it into a format that is useful in another context. We focus only on the textual content of the reviews and not on other information such as the date on which the review was written, the rating given by the user, or the title the user gave the review. Finally, to save the extracted reviews in a useful format, we use JSON and Jsoup. Jsoup is an open-source Java library consisting of methods designed to extract and manipulate HTML document content. JSON is used to list all the elements that we want to crawl.

II. WEB CRAWLER FOR EXTRACTING PAGES

A Web Crawler is a special program that visits a Web site, reads its pages, and collects the raw contents in order to create entries for further work[1]. It is also known as a "spider" or a "bot"[1]. The behaviour of a Web Crawler is the result of a combination of policies[3]:

- A selection policy, which states the pages to download.
- A re-visit policy, which states when to check for changes to the pages.
- A politeness policy, which states how to avoid overloading websites.
- A parallelization policy, which states how to coordinate distributed web crawlers.
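As an illustration of one of these policies, the following is a minimal sketch of a politeness policy, assuming a fixed minimum delay between two requests to the same host. The class name PolitenessPolicy, its methods, and the fixed-delay rule are hypothetical and are not taken from [3] or from our implementation.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper: remembers the last request time per host and reports
// how long a crawler should wait before contacting that host again.
class PolitenessPolicy {
    private final long minDelayMillis;
    private final Map<String, Long> lastRequestAt = new HashMap<>();

    PolitenessPolicy(long minDelayMillis) {
        this.minDelayMillis = minDelayMillis;
    }

    // Milliseconds still to wait before 'host' may be requested at time
    // 'nowMillis'; 0 means the request may go ahead immediately.
    long waitMillis(String host, long nowMillis) {
        Long last = lastRequestAt.get(host);
        if (last == null) {
            return 0;
        }
        long elapsed = nowMillis - last;
        return Math.max(0, minDelayMillis - elapsed);
    }

    // Records that a request to 'host' was issued at time 'nowMillis'.
    void recordRequest(String host, long nowMillis) {
        lastRequestAt.put(host, nowMillis);
    }
}
```

A crawler would call waitMillis before each download, sleep for the returned time, and then call recordRequest. Passing the clock value in as a parameter keeps the sketch deterministic.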

The web crawler follows this process to extract pages from the Google Play Store[1]:

- It starts from one preselected page, the page indexed by the URL for the mobile application. We call this starting page the seed page.
- It then extracts all the links available on that page and stores them in the URL queue.
- It follows each of those links to find new pages for user reviews or other application details.
- It extracts all the links from the new pages found.
- It follows each of those links to find further new pages.
- The procedure continues as long as new links are found or until some termination condition is fulfilled.
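The steps above can be sketched as a breadth-first loop over a URL queue. This is a minimal illustration rather than our implementation: the class CrawlSketch, the linksOf function (which stands in for downloading a page and extracting its links) and the maxPages limit used as the termination condition are all assumptions made for the example.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.function.Function;

class CrawlSketch {
    // Breadth-first crawl starting at 'seed'. 'linksOf' stands in for
    // "download the page and extract its links"; 'maxPages' is the
    // termination condition. Returns the pages in the order visited.
    static List<String> crawl(String seed,
                              Function<String, List<String>> linksOf,
                              int maxPages) {
        Deque<String> urlQueue = new ArrayDeque<>();
        Set<String> seen = new HashSet<>();
        List<String> visited = new ArrayList<>();
        urlQueue.add(seed);
        seen.add(seed);
        while (!urlQueue.isEmpty() && visited.size() < maxPages) {
            String url = urlQueue.poll();
            visited.add(url);
            for (String link : linksOf.apply(url)) {
                // Queue each link only once so the crawl cannot loop forever.
                if (seen.add(link)) {
                    urlQueue.add(link);
                }
            }
        }
        return visited;
    }
}
```

The set of already seen URLs is what lets the loop stop on its own when no new links are found; the page limit covers the case where new links never run out.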

IJRITCC | April 2016, Available @ http://www.ijritcc.org

III. FOCUSED CRAWLING

A focused crawler is a web crawler that attempts to download only those web pages that are relevant to a pre-defined topic or set of topics[4]. A focused crawler is also called a topical crawler because it fetches only pages that are topic specific[4].

A focused crawler tries to follow the most promising links and ignore off-topic documents. It must therefore predict the probability that an unvisited page will be relevant before actually downloading the page.

A possible predictor is the anchor text of links. The performance of a focused crawler depends on the richness of links in the specific topic being searched, and focused crawling usually relies on a general web search engine to provide starting points[7].
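To make the idea of anchor text as a predictor concrete, the sketch below orders unvisited links by how many topic keywords appear in their anchor text. Keyword counting is only one simple choice of predictor, and the names AnchorTextFrontier, Link, score and frontier are hypothetical.

```java
import java.util.Comparator;
import java.util.List;
import java.util.PriorityQueue;

class AnchorTextFrontier {
    // A link discovered on a page: its target URL and the visible anchor text.
    static final class Link {
        final String url;
        final String anchorText;

        Link(String url, String anchorText) {
            this.url = url;
            this.anchorText = anchorText;
        }
    }

    // Counts how many topic keywords occur in the anchor text (case-insensitive).
    static int score(String anchorText, List<String> topicKeywords) {
        String lower = anchorText.toLowerCase();
        int hits = 0;
        for (String keyword : topicKeywords) {
            if (lower.contains(keyword.toLowerCase())) {
                hits++;
            }
        }
        return hits;
    }

    // Builds a frontier in which the most promising link is polled first.
    static PriorityQueue<Link> frontier(List<Link> links, List<String> topicKeywords) {
        PriorityQueue<Link> queue = new PriorityQueue<>(
                Comparator.comparingInt((Link l) -> -score(l.anchorText, topicKeywords)));
        queue.addAll(links);
        return queue;
    }
}
```

With the topic keywords "review" and "rating", a link labelled "Read all reviews" would be polled before a link labelled "About us", so the crawler spends its downloads on the pages most likely to contain reviews.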

A.

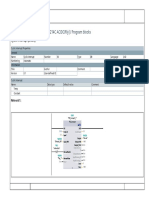

Architecture of focused web crawler

The architecture of the focused web crawler is shown in figure 1. The URL Queue contains the seed URLs maintained by the crawler and is initialized with unvisited URLs. The Web Page Downloader fetches URLs from the URL Queue and downloads the corresponding pages from the internet. The Parser and Extractor extract information such as the text and the hyperlink URLs from a downloaded page[4]. The Relevance Calculator calculates the relevance of a page with respect to the topic and assigns scores to the URLs extracted from the page. The Topic Filter analyses whether the content of parsed pages is related to the topic. If the page is relevant, the URLs extracted from it are added to the URL Queue; otherwise they are added to the Irrelevant Table[4].
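The interplay of the Relevance Calculator and the Topic Filter can be sketched as follows. The scoring rule used here (the fraction of words on the page that are topic terms) and the fixed threshold are assumptions chosen for brevity; [4] does not prescribe them, and the sketch scores the page as a whole rather than each extracted URL. The class and member names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

class TopicFilterSketch {
    final List<String> urlQueue = new ArrayList<>();        // URLs still to visit
    final List<String> irrelevantTable = new ArrayList<>(); // URLs from off-topic pages
    private final Set<String> topicTerms;
    private final double threshold;

    TopicFilterSketch(Set<String> topicTerms, double threshold) {
        this.topicTerms = topicTerms;
        this.threshold = threshold;
    }

    // Relevance calculator: fraction of the page's words that are topic terms.
    double relevance(String pageText) {
        int total = 0;
        int hits = 0;
        for (String word : pageText.toLowerCase().split("\\W+")) {
            if (word.isEmpty()) {
                continue;
            }
            total++;
            if (topicTerms.contains(word)) {
                hits++;
            }
        }
        return total == 0 ? 0.0 : (double) hits / total;
    }

    // Topic filter: route the extracted URLs according to the page's relevance.
    void process(String pageText, List<String> extractedUrls) {
        if (relevance(pageText) >= threshold) {
            urlQueue.addAll(extractedUrls);
        } else {
            irrelevantTable.addAll(extractedUrls);
        }
    }
}
```

Keeping the irrelevant URLs in their own table, rather than discarding them, mirrors the architecture in figure 1.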

IV. WEB SCRAPER FOR EXTRACTING REVIEWS OF MOBILE APPLICATIONS

The term scraping refers to the process of parsing the HTML source code of a web page in order to extract, or scrape, specific data from it[2]. The target web page does not need to be opened in a browser; only an internet connection is required[2]. However, the target web page must be public (not behind a login or encrypted)[2]. The basic process of scraping is shown in figure 2.

The whole process takes the form of a request and a response. First we specify the URL from which the data is to be extracted. An object of the URL class is then created from that URL[6]. A call to the openConnection method is made on this object. The openConnection method returns a URLConnection object, which is used to create a BufferedReader object. BufferedReader objects are used to read streams of text, typically one line at a time[6].

Fig. 2 Scraping Process

At the same time, it is important to set a limit on the amount of data retrieved from the URL, so we can also set a buffer size[6]. To save the extracted data in a well-formatted document, JSON and Jsoup are used, as discussed in the next section.
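The request and response steps described in this section can be sketched with the standard java.net and java.io classes. The class PageReader, the maxChars limit and the buffer size of 8192 characters are assumptions made for this illustration; they are not values taken from [6] or from our implementation.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.URL;
import java.net.URLConnection;
import java.nio.charset.StandardCharsets;

class PageReader {
    // Reads the resource at 'address' line by line, stopping once 'maxChars'
    // characters have been collected. Line terminators are dropped.
    static String read(String address, int maxChars) throws IOException {
        URL url = new URL(address);
        URLConnection connection = url.openConnection();
        StringBuilder content = new StringBuilder();
        try (BufferedReader in = new BufferedReader(
                new InputStreamReader(connection.getInputStream(), StandardCharsets.UTF_8),
                8192)) { // 8192 is the reader's buffer size in characters
            String line;
            while ((line = in.readLine()) != null && content.length() < maxChars) {
                int remaining = maxChars - content.length();
                content.append(line, 0, Math.min(line.length(), remaining));
            }
        }
        return content.toString();
    }
}
```

The same method works for any URL scheme that openConnection supports, so it can be tried on a local file: URL before being pointed at a live page.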

For our work we require the reviews of different applications. The scraping process is therefore applied with the complete URL of the page of a particular application, which we identify by its Application Id. Using the scraping process described in this section, the reviews for that application are scraped.

Fig. 1 Architecture of Focused Crawler

In the code snippet from our implementation given below, an object con of class MyConnection is created. A String variable holds the URL of the page to be scraped. To provide a terminating condition for the scraper, we use the integer constant MAX_REVIEW_COUNT, whose value is 2000. Another String holds the application id of the particular application. From the main function, the constructor of the ReviewExtract class is called with the application id passed as a parameter. This constructor sets up a new XML file at the specified location. An HTTP request is then sent: control is transferred to the sendPost() method, which is called on the ReviewExtract object.
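The terminating condition can be illustrated separately from the network code. The sketch below assumes that reviews arrive one page per request and that the loop should stop either when the maximum review count is reached or when a page comes back empty. The excerpt of our code does not show how its end flag is set, so this stopping rule, the class ReviewPager and the fetchPage function are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.IntFunction;

class ReviewPager {
    // Requests review pages 0, 1, 2, ... from 'fetchPage' until either
    // 'maxReviewCount' reviews have been collected or a page comes back empty.
    static List<String> collect(IntFunction<List<String>> fetchPage, int maxReviewCount) {
        List<String> reviews = new ArrayList<>();
        boolean end = false;
        int pageNum = 0;
        while (!end) {
            List<String> page = fetchPage.apply(pageNum++);
            for (String review : page) {
                if (reviews.size() >= maxReviewCount) {
                    break; // limit reached part-way through a page
                }
                reviews.add(review);
            }
            end = page.isEmpty() || reviews.size() >= maxReviewCount;
        }
        return reviews;
    }
}
```

In a real scraper fetchPage would wrap one HTTP request; here it is a plain function so that the loop can be exercised without a network connection.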

    // Excerpt from the ReviewExtract class. The declarations of the fields
    // applicationId, file and end are not part of the excerpt.
    MyConnection con = new MyConnection();
    private static final String url = "https://play.google.com/store/getreviews";
    private static final int MAX_REVIEW_COUNT = 2000;
    private static final String INSTAGRAM = "com.instagram.android";

    public ReviewExtract(String applicationId) {
        this.applicationId = applicationId;
        file = new File("d:/" + applicationId + ".xml");
    }

    public static void main(String[] args) {
        ReviewExtract http = new ReviewExtract(INSTAGRAM);
        try {
            System.out.println("Send Http POST request");
            file.createNewFile();
            // Write the opening tag of the XML document for this application.
            try (FileWriter fw = new FileWriter(file.getAbsoluteFile())) {
                fw.write("<app id=\"" + http.applicationId + "\">\n");
            }
            // Keep requesting reviews until the end flag is set.
            while (!end) {
                http.sendPost();
            }
        } catch (Exception e) {
            // The excerpt ends before the original error handling.
            e.printStackTrace();
        }
    }

In the sendPost() method, an object named obj of the URL class is created. The openConnection method is then called on obj, and its result is cast to HttpsURLConnection. This establishes the connection used to extract the reviews. A POST request is then sent to the URL. The response is read through the BufferedReader object and accumulated in the response object.

    // The excerpt omits the lines that configure and send the POST request.
    private void sendPost() throws Exception {
        URL obj = new URL(url);
        HttpsURLConnection con = (HttpsURLConnection) obj.openConnection();
        try (BufferedReader in = new BufferedReader(
                new InputStreamReader(con.getInputStream()))) {
            String inputLine;
            StringBuilder response = new StringBuilder();
            // The first two lines of the response are read and discarded.
            in.readLine();
            in.readLine();
            while ((inputLine = in.readLine()) != null) {
                response.append(inputLine);
            }
        }
    }

V. USE OF JSOUP AND JSON IN OUR SYSTEM

JSON is an abbreviation for JavaScript Object Notation, a way to store information in an organized, easy-to-access manner. In a nutshell, it gives us a human-readable collection of data that we can access in a logical manner. JSON is a lightweight data-interchange format based on a subset of the JavaScript language (the way objects are built in JavaScript). Compared to static pages, scraping pages rendered from JSON is often easier: you simply load the JSON string and iterate through each object, extracting the relevant key/value pairs as you go. In our project we use JSON to list all the elements that we want to crawl, and a JSON parser to convert JSON text into an object. A JSON parser recognizes only JSON text and does not compile scripts. Parsing with a dedicated JSON parser is also generally faster than evaluating the text as a script.

Once the web page is loaded, we need to search within it for specific information. Jsoup is an open-source Java library consisting of methods designed to extract and manipulate HTML document content[5]. Jsoup can scrape and parse HTML from a URL and find and extract data using DOM traversal or CSS selectors[5]. Jsoup is an open-source project distributed under the MIT licence. Moreover, Jsoup implements the HTML5 parsing specification and parses a document the same way a modern browser does. The main classes of the Jsoup API are Jsoup, Document and Element.

There are 6 packages in the Jsoup API providing classes and interfaces for developing Jsoup applications:

- org.jsoup
- org.jsoup.helper (utilities for handling data)
- org.jsoup.nodes (contains HTML document structure nodes)
- org.jsoup.parser (contains the HTML parser, tag specifications, and HTML tokeniser)
- org.jsoup.safety (contains the Jsoup HTML cleaner and the whitelist definitions)
- org.jsoup.select (contains classes to support the CSS-style element selector)

To fetch the page using Jsoup:

1. First you create the Connection to the resource.
2. Then you call the get() function to retrieve the page content:

    Document doc = Jsoup.connect("http://google.com").get();

In our project we adopt the Jsoup component to extract information and URLs from a web page. The file jsoup-1.7.3.jar can be downloaded from the Jsoup website and must be imported into NetBeans. When scraping reviews from the Google Play Store website, the reviews are located between <div></div> tags, so the Jsoup parser object first locates the div tags and then, using a DOM getter method, locates the tags having class="single-review".

    import org.jsoup.Jsoup;
    import org.jsoup.nodes.Document;
    import org.jsoup.nodes.Element;
    import org.jsoup.select.Elements;

    // Parse the response body and select every element with class "single-review".
    Document doc = Jsoup.parseBodyFragment(body);
    // System.out.println(doc.html());
    Elements reviews = doc.getElementsByClass("single-review");

The parseBodyFragment method creates an empty shell document and inserts the parsed HTML into the body element. Element objects provide a range of DOM-like methods to find elements and then extract and manipulate their data. The DOM getters are contextual: called on a parent Document, they find matching elements under the document; called on a child element, they find elements under that child. In this way we can extract the data we want.

VI. CONCLUSION

This paper provides insight into extracting selected content from a website. The two major technologies used for selection and extraction are crawling and scraping. For our work we require the reviews of different mobile applications. Scraping is therefore applied with the complete URL of the page of a particular application, which we identify by its Application Id. Using the scraping process described in Section IV, the reviews for that application are scraped.

VII. FUTURE WORK

In future work, we will study various algorithms for analysing the scraped reviews and then provide a proper classification of the applications. We will use whichever machine learning algorithm best suits our work. A summary of the reviews will be provided in the form of a risk score for the application.

ACKNOWLEDGMENTS

This research paper was supported by the Project Guide Prof. Bhagirath Prajapati as well as the institution A. D. Patel Institute of Technology, Gujarat, India.

REFERENCES

[1] An Approach to build a Web Crawler using Clustering based K-Means Algorithm, Nilesh Jain, Priyanka Mangal and Dr. Ashok Bhansali.

[2] Five easy steps for scraping data from web pages, Jon Starkweather.

[3] Web Crawler, Shalini Sharma, Dept. of Computer Science, Shoolini University, Solan (H.P.), India.

[4] Efficient Focused Web Crawling Approach for Search Engine, Ayar Pranav and Sandip Chauhan, Computer and Science Engineering, Kalol Institute of Technology and Research Centre, Kalol, Gujarat, India.

[5] Research of a professional search engine system based on Lucene and Heritrix, Ying Hong and Chao Lv.

[6] Creating sample web scraper, www.packtpub.com.

[7] Focused Crawling, Wikipedia.
