Overview

A survey on multi-output regression

Hanen Borchani,1 Gherardo Varando,2 Concha Bielza2 and Pedro Larrañaga2

In recent years, a plethora of approaches have been proposed to deal with the increasingly challenging task of multi-output regression. This study provides a survey of state-of-the-art multi-output regression methods, which are categorized as problem transformation and algorithm adaptation methods. In addition, we present the most commonly used performance evaluation measures, publicly available data sets for real-world multi-output regression problems, as well as open-source software frameworks. © 2015 John Wiley & Sons, Ltd.

How to cite this article:

WIREs Data Mining Knowl Discov 2015, 5:216–233. doi: 10.1002/widm.1157

INTRODUCTION

Multi-output regression, also known in the literature as multi-target,1–5 multi-variate,6–8 or multi-response9,10 regression, aims to simultaneously predict multiple real-valued output/target variables. When the output variables are binary, the learning problem is called multi-label classification.11–13 However, when the output variables are discrete (not necessarily binary), the learning problem is referred to as multi-dimensional classification.14

Several applications for multi-output regression have been studied. They include ecological modeling to predict multiple target variables describing the condition or quality of the vegetation,3 chemometrics to infer concentrations of several analytes from multivariate calibration using multivariate spectral data,15 prediction of the audio spectrum of wind noise (represented by several sound pressure variables) of a given vehicle component,16 real-time prediction of multiple gas tank levels of the Linz Donawitz converter gas system,17 simultaneous estimation of different biophysical parameters from remote sensing images,18 channel estimation through the prediction of several received signals,19 and so on.

Correspondence to: hanen@cs.aau.dk

1 Machine Intelligence Group, Department of Computer Science, Aalborg University, Aalborg, Denmark

2 Computational Intelligence Group, Departamento de Inteligencia Artificial, Facultad de Informática, Universidad Politécnica de Madrid, Madrid, Spain

Conflict of interest: The authors have declared no conflicts of interest for this article.

In spite of their different backgrounds, these real-world applications give rise to many challenges such as missing data (i.e., when some feature/target values are not observed), the presence of noise typically due to the complexity of the real domains, and most importantly, the multivariate nature and the compound dependencies between the multiple feature/target variables. In dealing with these challenges, multi-output regression methods have been shown to yield, in general, better predictive performance than single-output methods.15–17 Multi-output regression methods also provide the means to effectively model multi-output data sets by considering not only the underlying relationships between the features and the corresponding targets but also the relationships between the targets, thereby offering a better representation and interpretability of the real-world problems.3,18 A further advantage of the multi-target approaches is that they may produce simpler models with better computational efficiency.3

Existing methods for multi-output regression can be categorized as: (1) problem transformation methods (also known as local methods), which transform the multi-output problem into independent single-output problems, each solved using a single-output regression algorithm, and (2) algorithm adaptation methods (also known as global or big-bang methods), which adapt a specific single-output method (such as decision trees and support vector machines) to directly handle multi-output data sets. Algorithm adaptation methods are deemed to be more challenging because they usually aim not only to predict the multiple targets but also to model and interpret the dependencies among these targets.

WIREs Data Mining and Knowledge Discovery, Volume 5, September/October 2015

Note here that the multi-task learning (MTL) problem20–24 is related to the multi-output regression problem: it also aims to learn multiple related tasks (i.e., outputs) at the same time. Commonly investigated issues in MTL include modeling task relatedness and the definition of similarity between jointly learned tasks, feature selection, and the development of efficient algorithms for learning and predicting several tasks simultaneously using different approaches, such as clustering, kernel regression, neural networks, tree and graph structures, Bayesian models, and so forth. The main difference between multi-output regression and multi-task problems is that tasks may have different training sets and/or different descriptive features, whereas the target variables in multi-output regression always share the same data and/or descriptive features.

The article is organized as follows. In the Multi-Output Regression section, the state-of-the-art multi-output regression approaches are presented according to their categorization as problem transformation and algorithm adaptation methods. In the Discussion section, we provide a theoretical comparison of the different presented approaches. In the Performance Evaluation Measures section, we discuss evaluation measures, and publicly available data sets for multi-output regression learning problems are given in the Data Sets section. The Open-Source Software Frameworks section describes the open-source software frameworks available for multi-output regression methods, and finally, the Conclusion section sums up the article with some conclusions and possible lines for future research.

MULTI-OUTPUT REGRESSION

Let us consider the training data set $D$ of $N$ instances containing a value assignment for each variable $X_1, \ldots, X_m, Y_1, \ldots, Y_d$, i.e., $D = \{(\mathbf{x}^{(1)}, \mathbf{y}^{(1)}), \ldots, (\mathbf{x}^{(N)}, \mathbf{y}^{(N)})\}$. Each instance is characterized by an input vector of $m$ descriptive or predictive variables $\mathbf{x}^{(l)} = (x_1^{(l)}, \ldots, x_j^{(l)}, \ldots, x_m^{(l)})$ and an output vector of $d$ target variables $\mathbf{y}^{(l)} = (y_1^{(l)}, \ldots, y_i^{(l)}, \ldots, y_d^{(l)})$, with $i \in \{1, \ldots, d\}$, $j \in \{1, \ldots, m\}$, and $l \in \{1, \ldots, N\}$.

The task is to learn a multi-target regression model from $D$, which consists of finding a function $h$ that assigns to each instance, given by the vector $\mathbf{x}$, a vector $\mathbf{y}$ of $d$ target values:

\[
h: \Omega_{X_1} \times \cdots \times \Omega_{X_m} \longrightarrow \Omega_{Y_1} \times \cdots \times \Omega_{Y_d}, \qquad \mathbf{x} = (x_1, \ldots, x_m) \longmapsto \mathbf{y} = (y_1, \ldots, y_d),
\]

where $\Omega_{X_j}$ and $\Omega_{Y_i}$ denote the sample spaces of each predictive variable $X_j$, for all $j \in \{1, \ldots, m\}$, and each target variable $Y_i$, for all $i \in \{1, \ldots, d\}$, respectively. Note that all target variables are considered to be continuous here. The learned multi-target model will be used afterward to simultaneously predict the values $\{\hat{\mathbf{y}}^{(N+1)}, \ldots, \hat{\mathbf{y}}^{(N')}\}$ of all target variables of the new incoming unlabeled instances $\{\mathbf{x}^{(N+1)}, \ldots, \mathbf{x}^{(N')}\}$.

Throughout this section, we provide a survey

on state-of-the-art multi-output regression learning

methods categorized as problem transformation methods (Problem Transformation Methods section) and

algorithm adaptation methods (Algorithm Adaptation

Methods section).

Problem Transformation Methods

These methods are mainly based on transforming

the multi-output regression problem into single-target

(ST) problems, then building a model for each target,

and finally concatenating all the d predictions. The

main drawback of these methods is that the relationships among the targets are ignored, and the targets are

predicted independently, which may affect the overall

quality of the predictions.

Recently, Spyromitros-Xioufis et al.4 proposed

to extend well-known multi-label classification transformation methods to deal with the multi-output

regression problem and to also model the target

dependencies. In particular, they introduced two novel

approaches for multi-target regression, multi-target

regressor stacking (MTRS) and regressor chains (RC),

inspired by popular and successful multi-label classification approaches.

As discussed in Spyromitros-Xioufis et al.,4 only approaches based on single labels (such as the typical binary relevance, stacked generalization-based methods, and classifier chains) can be straightforwardly adapted to multi-output regression by using a regression instead of a classification algorithm. Multi-label approaches based on either pairs of labels or sets of labels paradigms are generally not transferable to multi-target regression problems. However, the random k-labelsets (RAkEL) method has been the inspiration for a new problem transformation method recently proposed by Tsoumakas et al.5 Their method creates new output variables as random linear combinations of k original output variables. Next, a user-specified multi-output algorithm is applied to predict the new variables, and finally, the original targets are recovered by inverting the random linear transformation.
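The random linear combination idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the implementation of Tsoumakas et al.: the function names, the uniform sampling of coefficients, the use of ordinary least squares as the base learner, and the recovery of the original targets through a least squares solve are all choices made for this example.

```python
import numpy as np

def make_random_combinations(d, r, k, rng):
    """Return an (r, d) matrix; each row mixes k randomly chosen targets (hypothetical helper)."""
    C = np.zeros((r, d))
    for row in range(r):
        chosen = rng.choice(d, size=k, replace=False)
        C[row, chosen] = rng.uniform(0.1, 1.0, size=k)  # assumed coefficient distribution
    return C

def fit_ols(X, Z):
    """Least squares fit with an intercept; stands in for the user-specified learner."""
    X1 = np.hstack([X, np.ones((X.shape[0], 1))])
    coef, *_ = np.linalg.lstsq(X1, Z, rcond=None)
    return coef

def predict_ols(coef, X):
    return np.hstack([X, np.ones((X.shape[0], 1))]) @ coef

def rlc_fit_predict(X_train, Y_train, X_test, r, k, seed=0):
    """Predict d targets through r random linear combinations of k targets each."""
    rng = np.random.default_rng(seed)
    d = Y_train.shape[1]
    C = make_random_combinations(d, r, k, rng)
    Z_train = Y_train @ C.T                 # new output variables
    coef = fit_ols(X_train, Z_train)
    Z_hat = predict_ols(coef, X_test)       # predictions of the new variables
    # Invert the linear transformation: solve C y = z in the least squares sense
    Y_hat, *_ = np.linalg.lstsq(C, Z_hat.T, rcond=None)
    return Y_hat.T
```

Recovery of the original targets is only well defined when the coefficient matrix has rank d, which in this sketch requires at least d combinations that jointly cover all targets.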

In this section, we present state-of-the-art

multi-output regression methods based on problem

transformation, namely, ST method, MTRS, RC, and

multi-output support vector regression (MO-SVR).

Single-Target Method

In the baseline ST method,4 a multi-target model is comprised of $d$ single-target models, each trained on a transformed training set $D_i = \{(\mathbf{x}^{(1)}, y_i^{(1)}), \ldots, (\mathbf{x}^{(N)}, y_i^{(N)})\}$, $i \in \{1, \ldots, d\}$, to predict the value of a single target variable $Y_i$. In this way, the target variables are predicted independently and potential relationships between them cannot be exploited. The ST method is also known as binary relevance in the literature.13

As the multi-target prediction problem is transformed into several single-target problems, any off-the-shelf ST regression algorithm can be used. For instance, Spyromitros-Xioufis et al.4 used four well-known regression algorithms, namely, ridge regression,25 SVR machines,26 regression trees,27 and stochastic gradient boosting.28

Moreover, Hoerl and Kennard25 proposed the separate ridge regression method to deal with multi-variate regression problems. It consists of performing a separate ridge regression of each individual target $Y_i$ on the predictor variables $\mathbf{X} = (X_1, \ldots, X_m)$. The regression coefficient estimates $\hat{a}_{ij}$, with $i \in \{1, \ldots, d\}$ and $j \in \{1, \ldots, m\}$, are the solution to a penalized least squares criterion:

\[
\{\hat{a}_{ij}\}_{j=1}^{m} = \arg\min_{\{a_j\}_{j=1}^{m}} \left\{ \sum_{l=1}^{N} \left[ y_i^{(l)} - \sum_{j=1}^{m} a_j x_j^{(l)} \right]^2 + \lambda_i \sum_{j=1}^{m} a_j^2 \right\}, \quad i \in \{1, \ldots, d\},
\]

where $\lambda_i > 0$ represents the ridge parameters.
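As an illustration of the ST strategy with separate ridge regressions, the following sketch solves the penalized least squares criterion in closed form, one target at a time. The function names are hypothetical, and the coefficient matrix is stored with shape (m, d), i.e., the transpose of the target-by-feature arrangement of the coefficients a_ij used in the text. No intercept is fitted, so the data are assumed to be centered beforehand.

```python
import numpy as np

def fit_separate_ridge(X, Y, lambdas):
    """Fit one ridge regression per target; returns an (m, d) coefficient matrix.

    lambdas[i] > 0 is the ridge parameter of target i (assumed given by the user).
    """
    m = X.shape[1]
    d = Y.shape[1]
    A = np.zeros((m, d))
    gram = X.T @ X
    for i in range(d):
        # Closed-form minimizer of ||y_i - X a||^2 + lambda_i * ||a||^2
        A[:, i] = np.linalg.solve(gram + lambdas[i] * np.eye(m), X.T @ Y[:, i])
    return A

def predict(X, A):
    """Concatenate the d independent single-target predictions."""
    return X @ A
```

Because each column is estimated independently, changing the ridge parameter of one target leaves the coefficients of all other targets untouched, which is precisely why the relationships among targets are not exploited.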

Multi-Target Regressor Stacking

The MTRS method4 is inspired by Ref 29, where stacked generalization30 was used to deal with multi-label classification. MTRS training is a two-stage process. First, $d$ ST models are learned as in ST. However, instead of directly using these models for prediction, MTRS includes an additional training stage where a second set of $d$ meta-models is learned, one for each target $Y_i$, $i \in \{1, \ldots, d\}$.

Each meta-model is learned on a transformed training set $D_i^{*} = \{(\mathbf{x}^{*(1)}, y_i^{(1)}), \ldots, (\mathbf{x}^{*(N)}, y_i^{(N)})\}$, where $\mathbf{x}^{*(l)} = (x_1^{(l)}, \ldots, x_m^{(l)}, \hat{y}_1^{(l)}, \ldots, \hat{y}_d^{(l)})$ is a transformed input vector consisting of the original input vector of the training set augmented by predictions (or estimates) of their target variables yielded by the first-stage models. In fact, MTRS is based on the idea that a second-stage model is able to correct the prediction of a first-stage model by using information about the predictions of other first-stage models.

The predictions for a new instance $\mathbf{x}^{(N+1)}$ are obtained by first applying the first-stage models, which yield the estimated output vector $\hat{\mathbf{y}}^{(N+1)} = (\hat{y}_1^{(N+1)}, \ldots, \hat{y}_d^{(N+1)})$, and then applying the second-stage models to the transformed input vector $\mathbf{x}^{*(N+1)} = (x_1^{(N+1)}, \ldots, x_m^{(N+1)}, \hat{y}_1^{(N+1)}, \ldots, \hat{y}_d^{(N+1)})$ to produce the final estimated multi-output targets $\hat{\hat{\mathbf{y}}}^{(N+1)} = (\hat{\hat{y}}_1^{(N+1)}, \ldots, \hat{\hat{y}}_d^{(N+1)})$.
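The two-stage MTRS procedure can be sketched as follows. This is a minimal illustration, not the authors' implementation: a least squares linear model stands in for the base learner, the helper names are hypothetical, and the first-stage estimates used to build the second-stage training set are simple in-sample predictions.

```python
import numpy as np

def fit_linear(X, y):
    """Least squares linear model with intercept (stand-in base learner)."""
    X1 = np.hstack([X, np.ones((len(X), 1))])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return coef

def predict_linear(coef, X):
    return np.hstack([X, np.ones((len(X), 1))]) @ coef

def mtrs_fit(X, Y):
    """Stage 1: one model per target. Stage 2: one meta-model per target."""
    d = Y.shape[1]
    stage1 = [fit_linear(X, Y[:, i]) for i in range(d)]
    Y_hat = np.column_stack([predict_linear(c, X) for c in stage1])
    X_star = np.hstack([X, Y_hat])          # inputs augmented by first-stage estimates
    stage2 = [fit_linear(X_star, Y[:, i]) for i in range(d)]
    return stage1, stage2

def mtrs_predict(model, X_new):
    """Apply the first-stage models, augment the inputs, then apply the meta-models."""
    stage1, stage2 = model
    Y_hat = np.column_stack([predict_linear(c, X_new) for c in stage1])
    X_star = np.hstack([X_new, Y_hat])
    return np.column_stack([predict_linear(c, X_star) for c in stage2])
```

With a purely linear base learner the second stage adds no expressive power, since the first-stage estimates are themselves linear in the inputs; the stacking becomes useful with nonlinear or regularized learners.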

Regressor Chains

The RC method4 is inspired by the recent multi-label

chain classifiers.31 RC is another problem transformation method, based on the idea of chaining

single-target models. The training of RC consists of

selecting a random chain (i.e., permutation) of the set

of target variables, then building a separate regression model for each target following the order of the

selected chain.

Assuming that the ordered set or the full chain $C = (Y_1, Y_2, \ldots, Y_d)$ is selected, the first model is only concerned with the prediction of $Y_1$. Then, subsequent models for $Y_i$, such that $i > 1$, are trained on the transformed data sets $D_i^{*} = \{(\mathbf{x}_i^{*(1)}, y_i^{(1)}), \ldots, (\mathbf{x}_i^{*(N)}, y_i^{(N)})\}$, where $\mathbf{x}_i^{*(l)} = (x_1^{(l)}, \ldots, x_m^{(l)}, y_1^{(l)}, \ldots, y_{i-1}^{(l)})$ is a transformed input vector consisting of the original input vector of the training set augmented by the actual values of all previous targets in the chain. Spyromitros-Xioufis et al.4 then introduced the regressor chain corrected (RCC) method that uses cross-validation estimates instead of the actual values in the data transformation step.

However, the main problem with the RC and RCC methods is that they are sensitive to the selected chain ordering. To avoid this problem, and similarly to Ref 31, Spyromitros-Xioufis et al.4 proposed a set of regression chain models with differently ordered chains: if the number of distinct chains was less than 10, they created exactly as many models as the number of distinct chains; otherwise, they selected 10 chains randomly. The resulting approaches are called ensemble of regressor chains (ERC) and ensemble of regressor chains corrected (ERCC).
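A regressor chain and a simple ensemble of chains can be sketched as below. The sketch rests on several assumptions that are not taken from the source: a least squares linear model is used as the base learner, chains are drawn as random permutations that may repeat (rather than enumerating all distinct chains when there are fewer than 10), and the ensemble combines chains by averaging their predictions. All function names are hypothetical.

```python
import numpy as np

def fit_linear(X, y):
    """Least squares linear model with intercept (stand-in base learner)."""
    X1 = np.hstack([X, np.ones((len(X), 1))])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return coef

def predict_linear(coef, X):
    return np.hstack([X, np.ones((len(X), 1))]) @ coef

def rc_fit(X, Y, chain):
    """Train one model per target, following the order given by `chain`."""
    models = []
    X_aug = X
    for i in chain:
        models.append(fit_linear(X_aug, Y[:, i]))
        X_aug = np.hstack([X_aug, Y[:, [i]]])      # append the actual values of target i
    return models

def rc_predict(models, X_new, chain, d):
    """At prediction time, previous targets are replaced by their estimates."""
    Y_hat = np.zeros((len(X_new), d))
    X_aug = X_new
    for model, i in zip(models, chain):
        Y_hat[:, i] = predict_linear(model, X_aug)
        X_aug = np.hstack([X_aug, Y_hat[:, [i]]])
    return Y_hat

def erc_predict(X, Y, X_new, n_chains=10, seed=0):
    """Average the predictions of several randomly ordered chains (assumed combination rule)."""
    rng = np.random.default_rng(seed)
    d = Y.shape[1]
    preds = []
    for _ in range(n_chains):
        chain = rng.permutation(d)
        preds.append(rc_predict(rc_fit(X, Y, chain), X_new, chain, d))
    return np.mean(preds, axis=0)
```

Note the mismatch the RCC variant is designed to reduce: training uses the actual target values, whereas prediction has to rely on estimated ones.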


Multi-Output Support Vector Regression

Zhang et al.32 presented an MO-SVR approach based

on problem transformation. It builds a multi-output

model that takes into account the correlations between

all the targets using the vector virtualization method.

Basically, it extends the original feature space and

expresses the multi-output problem as an equivalent

single-output problem, so that it can then be solved

using the single-output least squares SVR machines

(LS-SVR) algorithm.

In particular, Zhang et al.32 used a binary representation to express $\mathbf{y}^{(l)}$ with vectors $I_i$ of length $d$ such that only the $i$th element, representing the $i$th output, takes the value 1 and all the remaining elements are zero. In this way, for any instance $(\mathbf{x}^{(l)}, \mathbf{y}^{(l)})$, $d$ virtual samples are built by feature vector virtualization as follows:

\[
(\mathbf{x}^{(l)}, \mathbf{y}^{(l)}) \longrightarrow
\begin{cases}
\left( (I_1, \mathbf{x}^{(l)}),\; y_1^{(l)} \right) \\
\qquad \vdots \\
\left( (I_d, \mathbf{x}^{(l)}),\; y_d^{(l)} \right).
\end{cases}
\]

This yields a new data set $D_i = \{((I_i, \mathbf{x}^{(l)}), y_i^{(l)})\}$, with $i \in \{1, \ldots, d\}$ and $l \in \{1, \ldots, N\}$, in the extended feature space. The solution follows directly from solving a set of linear equations using extended LS-SVR, where the objective function $f$ to be minimized is defined as follows:

\[
f = \frac{1}{2} \|\mathbf{w}\|^2 + \frac{1}{C} \sum_{l=1}^{N} \sum_{i=1}^{d} \left( e_i^{(l)} \right)^2
\quad \text{s.t.} \quad y_i^{(l)} = \mathbf{w}^T \phi\left(I_i, \mathbf{x}^{(l)}\right) + I_i \mathbf{b} + e_i^{(l)},
\]

where $\mathbf{w} = (w_1, \ldots, w_d)$ defines the weights, $\phi(\cdot)$ is a nonlinear transformation to the feature space, and $\mathbf{b} = (b_1, \ldots, b_d)^T$ is the bias vector. $C$ is the trade-off factor used to balance the strengths of the Vapnik-Chervonenkis dimension and the loss, and $e_i^{(l)}$ is the fitting error for each instance in the data set $D_i$.
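The feature vector virtualization step can be illustrated with a short sketch that only builds the extended data set; the subsequent LS-SVR training is not shown. The function name and the ordering of the virtual samples (all d samples of the first instance, then all d samples of the second, and so on) are assumptions made for this example.

```python
import numpy as np

def virtualize(X, Y):
    """Expand N instances with d targets into N*d single-output virtual samples.

    Row l*d + i of the result holds the extended input (I_i, x^(l)) and the
    scalar output y_i^(l), using zero-based indices.
    """
    N = X.shape[0]
    d = Y.shape[1]
    indicators = np.tile(np.eye(d), (N, 1))    # I_1, ..., I_d repeated for every instance
    repeated_inputs = np.repeat(X, d, axis=0)  # each x^(l) repeated d times
    X_ext = np.hstack([indicators, repeated_inputs])
    y_ext = Y.reshape(-1)                      # row-major order matches the rows of X_ext
    return X_ext, y_ext
```

Any single-output learner can then be trained on the pair returned by `virtualize`, at the cost of a data set that is d times larger and inputs that are d dimensions wider.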

Algorithm Adaptation Methods

These methods are based on the idea of simultaneously predicting all the targets using a single model that is able to capture all dependencies and internal relationships between them. This has several advantages over problem transformation methods: it is easier to interpret a single multi-target model than many single-target models, and it tends to give better predictive performance, especially when the targets are correlated.3,6,10 In this section, we present state-of-the-art multi-output regression methods defined as extensions of several standard learning algorithms including statistical methods, support vector machines, kernel methods, regression trees, and classification rules.

Statistical Methods

The statistical approaches are considered as the first

attempt to deal with simultaneously predicting multiple real-valued targets. They aim to take advantage

of correlations between the target variables in order

to improve predictive accuracy compared with the

traditional procedure of doing individual regressions

of each target variable on the common set of predictor

variables.

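Izenman33 proposed reduced-rank regression, which places a rank constraint on the matrix of estimated regression coefficients. Considering the following regression model:

\[
y_i = \sum_{j=1}^{m} a_{ij} x_j + \epsilon_i, \quad i \in \{1, \ldots, d\},
\]

the aim is to determine the coefficient matrix $\tilde{A}_r \in \mathbb{R}^{d \times m}$ of rank $r \le \min\{m, d\}$ such that

\[
\tilde{A}_r = \arg\min_{\operatorname{rank}(A) = r} E\left[ (\mathbf{y} - A\mathbf{x})^T \Sigma_{\epsilon}^{-1} (\mathbf{y} - A\mathbf{x}) \right],
\]

where $\Sigma_{\epsilon} = E(\epsilon \epsilon^T)$ with estimated error $\epsilon^T = \{\epsilon_1, \ldots, \epsilon_d\}$. The above equation is then solved as $\tilde{A}_r = B_r \hat{A}$, where $\hat{A} \in \mathbb{R}^{d \times m}$ is the matrix of the ordinary least squares (OLS) estimates and the reduced-rank shrinking matrix $B_r \in \mathbb{R}^{d \times d}$ is given by

\[
B_r = T^{-1} I_r T,
\]

where $I_r = \operatorname{diag}\{1(i \le r)\}_{i=1}^{d}$ and $T$ is the canonical co-ordinate matrix that seeks to maximize the correlation between the $d$-vector $\mathbf{y}$ and the $m$-vector $\mathbf{x}$.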
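The structure of the reduced-rank solution (an OLS fit followed by a rank-r projection in the output space) can be sketched as follows. This is a simplified variant and not Izenman's general estimator: it assumes an identity weighting in place of the inverse error covariance, so the projection is built from the principal directions of the fitted values rather than from canonical co-ordinates. The function name is hypothetical and coefficients are stored with shape (m, d), the transpose of the convention in the text.

```python
import numpy as np

def reduced_rank_regression(X, Y, r):
    """Identity-weighted reduced-rank regression; returns an (m, d) matrix of rank <= r."""
    B_ols, *_ = np.linalg.lstsq(X, Y, rcond=None)   # (m, d) OLS estimates
    Y_fit = X @ B_ols
    # Leading right singular vectors of the fitted values span the retained output subspace
    _, _, Vt = np.linalg.svd(Y_fit, full_matrices=False)
    V_r = Vt[:r].T                                  # (d, r)
    # V_r V_r^T plays the role of the shrinking matrix T^{-1} I_r T with orthogonal T
    return B_ols @ V_r @ V_r.T
```

Setting r equal to the number of targets makes the projection the identity, so the OLS estimates are returned unchanged.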

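Later, Brown and Zidek7 presented a multi-variate version of the Hoerl-Kennard ridge regression rule and proposed the estimator $\hat{\beta}^{*}(K)$:

\[
\hat{\beta}^{*}(K) = \left( \mathbf{x}^T \mathbf{x} \otimes I_d + I_m \otimes K \right)^{-1} \left( \mathbf{x}^T \mathbf{x} \otimes I_d \right) \hat{\beta},
\]

where $K$ ($d \times d$) $> 0$ is the ridge matrix, $\otimes$ denotes the usual Kronecker product, and $\hat{\beta}^{*}$, $\hat{\beta}$ are ($md \times 1$) vectors of estimators of $\beta = (\beta_1, \ldots, \beta_m)^T$, where $\beta_1, \ldots, \beta_m$ are each ($1 \times d$) row vectors of $\beta$. $\hat{\beta}$ represents the maximum likelihood estimator of $\beta$ corresponding to $K = 0$.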

Furthermore, van der Merwe and Zidek34 introduced the filtered canonical y-variate regression (FICYREG) method, defined as a generalization of the James-Stein estimator to the multi-variate regression problem. The estimated coefficient matrix $\tilde{A} \in \mathbb{R}^{d \times m}$ takes the form

\[
\tilde{A} = B_f \hat{A},
\]

where $\hat{A} \in \mathbb{R}^{d \times m}$ is the matrix of OLS estimates. The shrinking matrix $B_f \in \mathbb{R}^{d \times d}$ is given by $B_f = \hat{T}^{-1} F \hat{T}$, where $\hat{T}$ is the sample canonical co-ordinate matrix and $F = \operatorname{diag}\{f_1, \ldots, f_d\}$ represents the canonical co-ordinate shrinkage factors $\{f_i\}_{i=1}^{d}$ that depend on the number of targets $d$, the number of predictor variables $m$, and the corresponding sample-squared canonical correlations $\{\hat{c}_i^2\}_{i=1}^{d}$:

\[
f_i = \left( \hat{c}_i^2 - \frac{m - d - 1}{N} \right) \bigg/ \left[ \hat{c}_i^2 \left( 1 - \frac{m - d - 1}{N} \right) \right]
\]

and $f_i \leftarrow \max\{0, f_i\}$.
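As a small worked illustration, the shrinkage factors can be computed directly from the squared canonical correlations. The sketch below assumes the standard form of the FICYREG factors, f_i = (c_i^2 - (m - d - 1)/N) / (c_i^2 (1 - (m - d - 1)/N)) truncated at zero, and strictly positive squared correlations; the function name is hypothetical and the estimation of the canonical correlations themselves is not shown.

```python
import numpy as np

def ficyreg_shrinkage(c2, m, d, N):
    """Shrinkage factors from sample-squared canonical correlations c2 (all assumed > 0)."""
    c2 = np.asarray(c2, dtype=float)
    q = (m - d - 1) / N
    f = (c2 - q) / (c2 * (1.0 - q))
    return np.maximum(0.0, f)      # negative factors are truncated to zero
```

For example, with m = 5, d = 2, and N = 100, a squared canonical correlation of 0.5 gives a factor of 0.48/0.49, whereas a weak correlation of 0.01 is filtered out entirely (factor 0).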

In addition, one of the most prominent approaches for dealing with the multi-output regression problem is the curds and whey (C&W) method proposed by Breiman and Friedman in Ref 6. Basically, given $d$ targets $\mathbf{y} = (y_1, \ldots, y_d)^T$ with separate least squares regressions $\hat{\mathbf{y}} = (\hat{y}_1, \ldots, \hat{y}_d)^T$, where $\bar{\mathbf{y}}$ and $\bar{\mathbf{x}}$ are the sample means of $\mathbf{y}$ and $\mathbf{x}$, respectively, a more accurate predictor $\tilde{y}_i$ of each $y_i$ is obtained using a linear combination

\[
\tilde{y}_i = \bar{y}_i + \sum_{k=1}^{d} b_{ik} \left( \hat{y}_k - \bar{y}_k \right), \quad i \in \{1, \ldots, d\},
\]

of the OLS predictors

\[
\hat{y}_i = \bar{y}_i + \sum_{j=1}^{m} \hat{a}_{ij} \left( x_j - \bar{x}_j \right), \quad \text{s.t.} \quad
\{\hat{a}_{ij}\}_{j=1}^{m} = \arg\min_{\{a_j\}_{j=1}^{m}} \sum_{l=1}^{N} \left[ \left( y_i^{(l)} - \bar{y}_i \right) - \sum_{j=1}^{m} a_j \left( x_j^{(l)} - \bar{x}_j \right) \right]^2,
\]

rather than with the least squares themselves. Here $\hat{a}_{ij}$ are the estimated regression coefficients, and $b_{ik}$ can be regarded as shrinking parameters that transform the vector-valued OLS estimates $\hat{\mathbf{y}}$ to the biased estimates $\tilde{\mathbf{y}}$, and are determined by the C&W procedure, which is a form of multi-variate shrinking. In fact, the estimates of the matrix $B = [b_{ik}] \in \mathbb{R}^{d \times d}$ take the form $B = T^{-1} S T$, where $T$ is the $d \times d$ matrix whose rows are the response canonical co-ordinates maximizing the correlations between $\mathbf{y}$ and $\mathbf{x}$, and $S = \operatorname{diag}(s_1, \ldots, s_d)$ is a diagonal shrinking matrix. To estimate $B$, C&W starts by transforming ($T$), shrinking (i.e., multiplying by $S$), and then transforming back ($T^{-1}$).

More recently, Similä and Tikka10 investigated the problem of input selection and shrinkage in multi-response linear regression. They presented a simultaneous variable selection (SVS) method called $L_2$-SVS, where the importance of an input in the model is measured by the $L_2$-norm of the regression coefficients associated with the input. To solve the $L_2$-SVS, $W$, the $m \times d$ matrix of regression coefficients, is estimated by minimizing the error sum of squares subject to a sparsity constraint as follows:

\[
\min_{W} f(W) = \frac{1}{2} \|\mathbf{y} - \mathbf{x} W\|_F^2 \quad \text{subject to} \quad \sum_{j=1}^{m} \|\mathbf{w}_j\|_2 \le r,
\]

where the subscript $F$ denotes the Frobenius norm, i.e., $\|B\|_F^2 = \sum_{ij} b_{ij}^2$. The factor $\|\mathbf{w}_j\|_2$ is a measure of the importance of the $j$th input in the model, and $r$ is a free parameter that controls the amount of shrinkage that is applied to the estimate. If the value of $r \ge 0$ is large enough, the optimal $W$ is equal to the OLS solution, whereas small values of $r$ impose a row-sparse structure on $W$, which means that only some of the inputs are effective in the estimate.

Abraham et al.35 coupled linear regressions and quantile mapping both to minimize the residual errors and to capture the joint (including nonlinear) relationships among variables. The method was tested on bivariate and trivariate output spaces, showing that it is able to reduce residual errors while keeping the joint distribution of the output variables.

Multi-Output Support Vector Regression

Traditionally, SVR is used with a single output variable. It aims to determine the mapping between the input vector $\mathbf{x}$ and the single output $y_i$ from a given training data set $D_i$, by finding the regressor $\mathbf{w} \in \mathbb{R}^{m \times 1}$ and the bias term $b \in \mathbb{R}$ that minimize

\[
\frac{1}{2} \|\mathbf{w}\|^2 + C \sum_{l=1}^{N} L\left( y^{(l)} - \left( \phi(\mathbf{x}^{(l)})^T \mathbf{w} + b \right) \right),
\]

where $\phi(\cdot)$ is a nonlinear transformation to a higher dimensional Hilbert space $\mathcal{H}$, and $C$ is a parameter chosen by the user that determines the trade-off between the regularization and the error reduction term, first and second addend, respectively. $L$ is a Vapnik $\epsilon$-insensitive loss function, which is equal to 0 for $|y^{(l)} - (\phi(\mathbf{x}^{(l)})^T \mathbf{w} + b)| < \epsilon$ and to $|y^{(l)} - (\phi(\mathbf{x}^{(l)})^T \mathbf{w} + b)| - \epsilon$ for $|y^{(l)} - (\phi(\mathbf{x}^{(l)})^T \mathbf{w} + b)| \ge \epsilon$. The solution ($\mathbf{w}$ and $b$) is induced by a linear combination of the training set in the transformed space with an absolute error equal to or greater than $\epsilon$.
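To make the single-output objective concrete, the sketch below evaluates the insensitive loss and the resulting SVR objective for a given weight vector and bias. It only evaluates the objective and does not solve the optimization problem; the function names are hypothetical, and the feature map is assumed to be the identity (a linear SVR) to keep the example short.

```python
import numpy as np

def eps_insensitive_loss(y, y_pred, eps):
    """Zero inside the eps-tube, absolute error minus eps outside it."""
    residual = np.abs(np.asarray(y, dtype=float) - np.asarray(y_pred, dtype=float))
    return np.maximum(0.0, residual - eps)

def svr_objective(w, b, X, y, C, eps):
    """Regularization term plus C times the summed insensitive loss (identity feature map assumed)."""
    y_pred = X @ w + b
    return 0.5 * float(w @ w) + C * float(eps_insensitive_loss(y, y_pred, eps).sum())
```

Only instances whose absolute error reaches the tube width contribute to the loss term, which is why the solution depends on a subset of the training set.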

Hence, in order to deal with the multi-output case, single-output SVR can be easily applied independently to each output (see Multi-Output Support Vector Regression in Problem Transformation Methods section). However, because this strategy has the serious drawback of not taking into account the possible correlations between outputs, several approaches have been proposed to extend traditional SVR in order to better manage the multi-output case. In general, this consists of minimizing

\[
\frac{1}{2} \sum_{i=1}^{d} \|\mathbf{w}_i\|^2 + C \sum_{l=1}^{N} L\left( \mathbf{y}^{(l)} - \left( \phi(\mathbf{x}^{(l)})^T W + \mathbf{b} \right) \right),
\]

where the $m \times d$ matrix $W = (\mathbf{w}_1, \mathbf{w}_2, \ldots, \mathbf{w}_d)$ and $\mathbf{b} = (b_1, b_2, \ldots, b_d)^T$.

For instance, Vazquez and Walter36 extended

SVR by considering the so-called Cokriging37

method, which is a multi-output version of Kriging

that exploits the correlations due to the proximity in the space of factors and outputs. In this

way, with an appropriate choice of covariance

and cross-covariance models, the authors showed

that multi-output SVR yields better results than an

independent prediction of the outputs.

Sánchez-Fernández et al.19 introduced a generalization of SVR. The so-called multiregressor SVR

(M-SVR) is based on an iterative reweighted least

squares (IRWLS) procedure that iteratively estimates

the weights W and the bias parameters b until convergence, i.e., until reaching a stationary point where

there is no more improvement of the considered loss

function.

Similarly, Brudnak38 developed a vector-valued

SVR by extending the notions of the estimator,

loss function and regularization functional from

the scalar-valued case; and Tuia et al.18 proposed

a multi-output SVR method by extending the

single-output SVR to multiple outputs while maintaining the advantages of a sparse and compact

solution using a cost function. Later, Deger et al.39 adapted Tuia et al.'s18 approach to tackle the problem of reflectance recovery from multispectral camera output, and showed through their empirical results that it has the advantages of being simpler and faster to compute than a scalar-valued based method.

In Ref 40, Cai and Cherkassky described a

new methodology for regression problems, combining Vapnik's SVM+ regression method and the MTL

setting. SVM+, also known as learning with structured data, extends the standard SVM regression by

taking into account the group information available

in the training data. The SVM+ approach learns

a single regression model using information on all

groups, whereas the proposed SVM+MTL approach

learns several related regression models, specifically

one model for each group.

In Ref 41, Liu et al. considered the output space as a Riemannian submanifold to incorporate its geometric structure into the regression process, and they proposed a locally linear transformation (LLT) mechanism to define the loss functions on the output manifold. Their proposed approach, called LLT-SVR, starts by identifying the k-nearest neighbors of each output using the Euclidean distance, then obtains local coordinate systems, and finally trains the regression model by solving a convex quadratic programming problem.

Moreover, Han et al.17 dealt with the prediction of the gas tank level of the Linz Donawitz converter gas system using a multi-output least squares

SVR. They considered both the single-output and

the combined-output fitting errors. In model solving, a full-rank equation is given to determine the

required parameters during training using an optimization based on particle swarm42 (an evolutionary

computation method).

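Xu et al.43 recently proposed another approach to extend least squares SVR to the multi-output case. The so-called multi-output LS-SVR (MLS-SVR) then solves the problem by finding the weights $W = (\mathbf{w}_1, \ldots, \mathbf{w}_d)$ and the bias parameters $\mathbf{b} = (b_1, \ldots, b_d)^T$ that minimize the following objective function:

\[
\min_{W \in \mathbb{R}^{n_h \times d},\; \mathbf{b} \in \mathbb{R}^{d}} F(W, \Xi) = \frac{1}{2} \operatorname{trace}\left( W^T W \right) + \gamma \frac{1}{2} \operatorname{trace}\left( \Xi^T \Xi \right),
\quad \text{s.t.} \quad Y = Z^T W + \operatorname{repmat}\left( \mathbf{b}^T, N, 1 \right) + \Xi,
\]

where $Z = (\phi(\mathbf{x}^{(1)}), \phi(\mathbf{x}^{(2)}), \ldots, \phi(\mathbf{x}^{(N)})) \in \mathbb{R}^{n_h \times N}$, and $\phi: \mathbb{R}^{m} \rightarrow \mathbb{R}^{n_h}$ is a mapping to some higher dimensional Hilbert space $\mathcal{H}$ with $n_h$ dimensions. The function repmat, defined over the $1 \times d$ matrix $\mathbf{b}^T$ as $\operatorname{repmat}(\mathbf{b}^T, N, 1)$, creates a large block matrix consisting of an $N \times 1$ tiling of copies of $\mathbf{b}^T$. $\Xi = (\xi_1, \xi_2, \ldots, \xi_d) \in \mathbb{R}_{+}^{N \times d}$ is a matrix consisting of slack variables, and $\gamma \in \mathbb{R}_{+}$ is a positive real regularized parameter.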

Kernel Methods

A study of vector-valued learning with kernel methods was started by Micchelli and Pontil,9 where they

analyzed the regularized least squares from the computational point of view. They also analyzed the theoretical aspects of reproducing kernel Hilbert spaces

(RKHS) in the range-space of the estimator, and they

generalized the representer theorem for Tikhonov regularization to the vector-valued setting.

Baldassarre et al.44 later studied a class of regularized kernel methods for multi-output learning which are based on filtering the spectrum of the kernel matrix. They considered methods that include Tikhonov regularization as a special case, as well as alternatives such as vector-valued extensions of squared loss function (L2) boosting and other iterative schemes. In particular, they claimed that Tikhonov regularization could be seen as a low-pass filter applied to the kernel matrix. The idea is thus to use different kinds of spectral filtering, defining regularized matrices that in general do not have an interpretation as penalized empirical risk minimization.

In addition, Evgeniou and Pontil45 considered

the learning of an average task simultaneously with

small deviations for each task, and Evgeniou et al.

extended their earlier results in Ref 46 by developing indexed kernels with coupled regularization

functionals.

Álvarez et al.47 reviewed at length kernel methods for vector-valued functions, focusing especially on the regularization and Bayesian perspectives and connecting the two points of view. They provided a large collection of kernel choices, focusing on separable kernels, sums of separable kernels, and further extensions such as kernels to learn divergence-free and curl-free vector fields.

Multi-Target Regression Trees

Multi-target regression trees, also known as multivariate regression trees (MRTs) or multi-objective

regression trees, are trees able to predict multiple

continuous targets at once. Multi-target regression

trees have two main advantages over building a separate regression tree for each target.48 First, a single

multi-target regression tree is usually much smaller

than the total size of the individual single-target trees

for all variables, and, second, a multi-target regression

tree better identifies the dependencies between the

different target variables.

One of the first approaches for building multi-target regression trees was proposed by De'ath.49 He presented an extension of the univariate recursive partitioning method (CART)50 to the multi-output regression problem. Hence, the so-called MRTs are built following the same steps as CART, i.e., starting with all instances in the root node, then iteratively finding the optimal split and partitioning the leaves accordingly until a pre-defined stopping criterion is reached. The only difference from CART is the redefinition of the impurity measure of a node as the sum of squared error over the multi-variate response:

\[
\sum_{l=1}^{N} \sum_{i=1}^{d} \left( y_i^{(l)} - \bar{y}_i \right)^2,
\]

where $y_i^{(l)}$ denotes the value of the output variable $Y_i$ for the instance $l$ and $\bar{y}_i$ denotes the mean of $Y_i$ in the node. Each split is selected to minimize the sum of squared error. Finally, each leaf of the tree can be characterized by the multi-variate mean of its instances, the number of instances at the leaf, and its defining feature values. De'ath49 claimed that MRT also inherits characteristics of univariate regression trees: they are easy to construct and the resulting groups are often simple to interpret; they are robust to the addition of pure noise response and/or feature variables; they automatically detect the interactions between variables; and they handle missing values in feature variables with minimal loss of information.

Struyf and Džeroski48 proposed a constraint-based system for building multi-objective regression trees (MORTs). It includes both size and accuracy constraints, so that the user can trade off size (and thus interpretability) for accuracy by either specifying maximum tree size or minimum accuracy. Their approach consists of first building a large tree using the training set, then pruning it in a second step to satisfy the user constraints. This has the advantage that the tree can be stored in the inductive database and used for answering inductive queries with different constraints. Basically, MORTs are constructed with a standard top-down induction algorithm,50 and the heuristic used for selecting the attribute tests in the internal nodes is the intra-cluster variation summed over the subsets (or clusters) induced by the test. Intra-cluster variation is defined as $N \cdot \sum_{i=1}^{d} \mathrm{Var}(Y_i)$, with $N$ the number of instances in the cluster, $d$ the number of target variables, and $\mathrm{Var}(Y_i)$ the variance of the target variable $Y_i$ in the cluster. Minimizing intra-cluster variation produces homogeneous leaves, which in turn results in accurate predictions.
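The intra-cluster variation can be related to the sum-of-squared-error impurity used by MRTs. The short derivation below is a sketch that assumes Var denotes the population variance (normalized by $N$ rather than $N-1$); under that assumption the two criteria coincide.

```latex
\begin{aligned}
N \sum_{i=1}^{d} \mathrm{Var}(Y_i)
  &= N \sum_{i=1}^{d} \frac{1}{N} \sum_{l=1}^{N} \left( y_i^{(l)} - \bar{y}_i \right)^2 \\
  &= \sum_{l=1}^{N} \sum_{i=1}^{d} \left( y_i^{(l)} - \bar{y}_i \right)^2 .
\end{aligned}
```

If the sample variance (normalized by $N-1$) is used instead, the two quantities differ by the factor $N/(N-1)$, so they are no longer identical but remain closely related for clusters of moderate size.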

In addition, Appice and Džeroski2 presented

an algorithm, named multi-target stepwise model

tree induction (MTSMOTI), for inducing multi-target

model trees in a stepwise fashion. Model trees are

decision trees whose leaves contain linear regression

models that predict the value of a single continuous

target variable. Based on the stepwise model tree

induction algorithm,51 MTSMOTI induces the model

tree top-down by choosing at each step to either

partition the training space (split nodes) or introduce

a regression variable in the set of linear models to

be associated with leaves. In this way, each leaf of

such a model tree contains several linear models, each

predicting the value of a different target variable Y i .

Kocev et al.3 explored and compared two approaches for dealing with the multi-output regression problem: first, learning a model for each output separately (i.e., multiple regression trees) and, second,

learning one model for all outputs simultaneously

(i.e., a single multi-target regression tree). In order to

improve predictive performance, Kocev et al.52 also

considered two ensemble learning techniques, namely,

bagging27 and random forests53 of regression trees

and multi-target regression trees.


Ikonomovska et al.54 proposed an incremental multi-target model tree algorithm, referred to as

FIMT-MT, for simultaneous modeling of multiple

continuous targets from time-changing data streams.

FIMT-MT extends an incremental single-target model

tree by adopting the principles of the predictive clustering methodology in the split selection criterion. In

the tree leaves, linear models are separately computed

for each target using an incremental training of perceptrons.

Stojanova et al.55 developed the NCLUS algorithm for modeling nonstationary autocorrelation in

network data by using predictive clustering trees

(i.e., decision trees with a hierarchy of clusters: the

top-node corresponds to one cluster containing all

data, which is recursively partitioned into smaller

clusters while moving down the tree). NCLUS is a

top-down induction algorithm that recursively partitions the set of nodes based on the average values of

variance reduction and autocorrelation measure computed over the set of all target variables.

More recently, a similar work has been proposed by Appice et al.56 They dealt with the problem

of modeling nonstationary spatial autocorrelation of

multi-variate geophysical data streams by using interpolative clustering trees (i.e., tree-structured models

where a split node is associated with a cluster and a

leaf node with a single predictive model for the multiple target variables). Their proposed time-evolving

method is also based on a top-down induction algorithm that makes use of variance reduction and spatial autocorrelation measure computed over the target

variables.

Levatić et al.57 addressed the task of

semi-supervised learning for multi-target regression and proposed a self-training approach using a

random forest of predictive clustering trees. The main

feature of self-training is that it iteratively uses its

own most reliable predictions in the learning process.

The most reliable predictions are selected in this case

using a threshold on the reliability scores, which are

computed as the average of the normalized per-target

standard deviations.

Rule Methods

Aho et al.58 presented a new method for learning

rule ensembles for multi-target regression problems

and simultaneously predicting multiple numeric target

attributes. The so-called FItted Rule Ensemble (FIRE)

algorithm transcribes an ensemble of regression trees

into a large collection of rules, then an optimization

procedure is used to select the best (and much smaller)

subset of these rules and determine their respective

weights.


More recently, Aho et al.1 extended the FIRE

algorithm by combining rules with simple linear functions in order to increase the predictive accuracy.

Thus, FIRE optimizes the weights of rules and linear terms with a gradient-directed optimization algorithm. Given an unlabeled example $\mathbf{x}$, the prediction of the resulting rule ensemble is a vector $\hat{\mathbf{y}}$ consisting of the values of all target variables:

$$\hat{\mathbf{y}} = f(\mathbf{x}) = w_0\,\mathbf{avg} + \sum_{k=1}^{R} w_k\, r_k(\mathbf{x}) + \sum_{i=1}^{d} \sum_{j=1}^{m} w_{ij}\, \mathbf{x}_{ij},$$

where $w_0$ is the baseline prediction, $\mathbf{avg}$ is the constant vector whose components are the average values for each of the targets, and $R$ defines the number of considered rules. Hence, the first sum is the contribution of the $R$ rules: each rule $r_k$ is a vector function that gives a constant prediction for each of the targets if it covers the example $\mathbf{x}$, or returns a zero vector otherwise; and the weights $w_k$ are optimized by a gradient-directed optimization algorithm. The double sum is the contribution of optional $m \times d$ linear terms. In fact, a linear term $\mathbf{x}_{ij}$ is a vector that corresponds to the influence of the $j$th numerical descriptive variable $X_j$ on the $i$th target variable $Y_i$, i.e., its $i$th component is equal to $X_j$, whereas all other components are zero:

$$\mathbf{x}_{ij} = (0, \ldots, 0, x_j, 0, \ldots, 0), \quad \text{with } x_j \text{ in the } i\text{th position.}$$

Finally, the values of all weights $w_{ij}$ are also determined using a gradient-directed optimization algorithm that depends on a gradient threshold. Thus, the optimization procedure is repeated using different values of this threshold in order to find a set of weights with the smallest validation error.
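To make the structure of the rule-ensemble prediction concrete, the following sketch evaluates $f(\mathbf{x})$ for given weights. It only covers prediction, not the gradient-directed weight optimization, and the names `make_rule` and `predict` as well as the toy rules and weights are hypothetical.

```python
from typing import Callable, List, Sequence

Vector = List[float]
# A rule maps an example to d constant predictions, or to a zero vector
# when the example is not covered.
Rule = Callable[[Sequence[float]], Vector]


def make_rule(condition: Callable[[Sequence[float]], bool], prediction: Vector) -> Rule:
    """Build r_k: return `prediction` if the rule covers x, else zeros."""
    def rule(x: Sequence[float]) -> Vector:
        return list(prediction) if condition(x) else [0.0] * len(prediction)
    return rule


def predict(x: Sequence[float], w0: float, avg: Vector, rules: List[Rule],
            rule_weights: Vector, linear_weights: List[Vector]) -> Vector:
    """Evaluate f(x) = w0*avg + sum_k w_k r_k(x) + sum_i sum_j w_ij x_ij."""
    d = len(avg)
    y_hat = [w0 * a for a in avg]
    for w_k, r_k in zip(rule_weights, rules):
        contribution = r_k(x)
        for i in range(d):
            y_hat[i] += w_k * contribution[i]
    # x_ij is zero except for x_j in position i, so the double sum only
    # adds w_ij * x_j to the i-th output.
    for i in range(d):
        for j, x_j in enumerate(x):
            y_hat[i] += linear_weights[i][j] * x_j
    return y_hat


# Toy ensemble (illustrative values): d = 2 targets, m = 2 features, R = 2 rules.
rules = [
    make_rule(lambda x: x[0] > 0.5, [0.5, -1.0]),
    make_rule(lambda x: x[1] < 0.0, [1.0, 1.0]),
]
y_hat = predict(
    x=[1.0, 2.0],
    w0=1.0,
    avg=[1.0, 2.0],
    rules=rules,
    rule_weights=[2.0, 1.0],
    linear_weights=[[0.0, 0.5], [0.25, 0.0]],
)
```

Here only the first rule covers the example, so the prediction is the baseline plus that rule's weighted constant vector plus the linear terms.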

Table 1 summarizes the reviewed multi-output

regression algorithms.

DISCUSSION

Note that, even though the ST method is a simple

approach, it does not imply simpler models. In fact,

exploiting relationships among the output variables

could be used to improve the precision or reduce

computational costs as explained in what follows.

First, let us point out that some transformation

algorithms fail to properly exploit the multi-output

relationships, and therefore they may be considered as

ST methods. For instance, this is the case of RC using

linear regression as base models, namely, OLS or ridge

estimators of the coefficients.


TABLE 1 Summary of Multi-Output Regression Methods

| Category | Method | Reference | Year |
| --- | --- | --- | --- |
| Problem transformation methods | Single target | Spyromitros-Xioufis et al.4 | 2012 |
| | Random linear target combinations | Tsoumakas et al.5 | 2014 |
| | Separate ridge regression | Hoerl and Kennard25 | 1970 |
| | Multi-target regressor stacking | Spyromitros-Xioufis et al.4 | 2012 |
| | Regressor chains | Spyromitros-Xioufis et al.4 | 2012 |
| | Multi-output SVR | Zhang et al.32 | 2012 |
| Algorithm adaptation methods | Statistical methods | Izenman33 | 1975 |
| | | van der Merwe and Zidek34 | 1980 |
| | | Brown and Zidek7 | 1980 |
| | | Breiman and Friedman6 | 1997 |
| | | Similä and Tikka10 | 2007 |
| | | Abraham et al.35 | 2013 |
| | Multi-output SVR | Brudnak38 | 2006 |
| | | Cai et al.40 | 2009 |
| | | Deger et al.39 | 2012 |
| | | Han et al.17 | 2012 |
| | | Liu et al.41 | 2009 |
| | | Sánchez et al.19 | 2004 |
| | | Tuia et al.18 | 2011 |
| | | Vazquez and Walter36 | 2003 |
| | | Xu et al.43 | 2013 |
| | Kernel methods | Baldassarre et al.44 | 2012 |
| | | Evgeniou and Pontil45 | 2004 |
| | | Evgeniou et al.46 | 2005 |
| | | Micchelli and Pontil9 | 2005 |
| | | Álvarez et al.47 | 2012 |
| | Multi-target regression trees | De'ath49 | 2002 |
| | | Appice and Džeroski2 | 2007 |
| | | Kocev et al.3 | 2009 |
| | | Kocev et al.52 | 2012 |
| | | Struyf and Džeroski48 | 2006 |
| | | Ikonomovska et al.54 | 2011 |
| | | Stojanova et al.55 | 2012 |
| | | Appice et al.56 | 2014 |
| | | Levatić et al.57 | 2014 |
| | Rule methods | Aho et al.58 | 2009 |
| | | Aho et al.1 | 2012 |

Lemma 1. RC with linear regression is an ST if OLS or ridge regression is used as base models.

Proof. See Appendix A.

To our knowledge, Lemma 1 is valid just for linear regression. However, it presents an example of the fact that, in some cases, intuitions behind a model could be misleading. In particular, when problem transformation methods are used in combination with ensemble methods (e.g., ERC and ERCC), the advantages of the multi-output approach could be hard to understand and interpret.
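For the OLS case, the intuition behind Lemma 1 can be sketched for a chain of two targets. The derivation below is an illustration under stated assumptions, not the proof given in Appendix A: the design matrix $X$ is assumed to have full column rank, the second chain model is trained on the true values of the first target, and at prediction time it receives the first model's estimate. The symbols $\beta_1$, $\beta_2$, $\tilde{\beta}$, $\gamma$, $u$, and $e$ are introduced here only for illustration.

```latex
\begin{aligned}
% ST model for the first target: OLS fit on X alone
\mathbf{y}_1 &= X\beta_1 + u, & X^{\top}u &= 0, \\
% Second link of the chain: OLS fit of y_2 on (X, y_1)
\mathbf{y}_2 &= X\tilde{\beta} + \gamma\,\mathbf{y}_1 + e, & X^{\top}e &= 0, \\
% Substitute the first fit into the second
\mathbf{y}_2 &= X(\tilde{\beta} + \gamma\beta_1) + (\gamma u + e), & X^{\top}(\gamma u + e) &= 0, \\
% A residual orthogonal to X characterizes the OLS fit of y_2 on X
\beta_2 &= \tilde{\beta} + \gamma\beta_1, \\
% Chain prediction for a new x, using \hat{y}_1 = x^{\top}\beta_1
\hat{y}_2 &= x^{\top}\tilde{\beta} + \gamma\,x^{\top}\beta_1 = x^{\top}\beta_2 .
\end{aligned}
```

In this two-target OLS setting, the chain prediction for the second target therefore coincides with the prediction of the ST model fitted on $X$ alone, which is the behavior that Lemma 1 states in general.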

In addition, statistical methods and multi-output

SVR (MO-SVR) are methods that mainly rely on

the idea of embedding of the output-space. They

assume that the space of the output variables could


be described using a sub-space of lower dimensions

than d (e.g., Izenman,33 Brudnak,38 and Liu et al.41 ).

There are several reasons to adopt this embedding:

• When m < d. In this case, an embedding is certain.38

• When we have prior knowledge of the output-space structure, e.g., spatial relationships among the output variables.36

• When we assume a linear model with a non-full-rank matrix of coefficients.33,34

• When we assume a manifold structure for the output-space.41

Such an embedding implies a series of advantages. First of all, a more compact representation of

the output space is achieved. Second, in the case of linear models, it assures correct estimations of ill-posed

problems.7,33,44 Third, it may improve the predictive

performance of the considered methods.41 Moreover, in the case of MO-SVR and kernel methods with a large number of input variables, computations could become very costly, so exploiting output dependencies makes it possible to reduce them.38

Statistical methods could be considered as direct

extensions of the ST linear regression, while MO-SVR

and kernel methods present the merits of dealing

with nonlinear regression functions, and therefore

they are more general. We could then place statistical methods in the modeling tradition of statistics, while MO-SVR, kernel methods, rule methods, and multi-target regression trees rather belong to the algorithmic branch of statistics or to the machine learning community (see Breiman59).

Predictive Performance

Considering the models' predictive performance as a

comparison criterion, the benefits of using MTRS and

RC (or ERC and the corrected versions) instead of

the baseline ST approach are not so clear. In fact,

in Ref 4, an extensive empirical comparison of these

methods is presented, and the results show that ST

methods outperform several variants of MTRS and

ERC. This fact is especially notable in the straightforward applications. In particular, the benefits of MTRS

and RC methods appear to derive uniquely from the

randomization process (e.g., due to the order of the

chain) and from the ensemble model (e.g., ERC).

Statistical methods could notably improve the performance with respect to a baseline ST regression, but only if specific assumptions are fulfilled, i.e., a relation among outputs truly exists, and a linear output-output relationship (in addition to a linear input-output relationship) is verified. Otherwise, using these statistical models could degrade the predictive performance. In particular, if we assume that the d × m matrix of regression coefficients has a reduced rank r < min(d, m), when in reality it has full rank, then we are wrongly estimating the relationship and we lose some information.

MO-SVR and kernel methods are, in general,

designed to achieve a good predictive performance

where linearity cannot be assumed. It is interesting to

notice that some of the MO-SVR methods are basically designed with the following goals: (1) speeding

up computations, (2) obtaining a sparser representation (avoiding the use of the same support vector for

several times) compared to the ST approach,38 and (3)

keeping more or less the same error rates as the ST

approach. By contrast, the implementation of Liu et al.41 aims solely at improving the predictive performance. The authors also advocate that their method should be implemented in every regression algorithm because it is guaranteed to find an optimal local basis for computing distances in the output manifolds.

Finally, multi-target regression trees and

rule methods are also based on finding simpler multi-output models that usually achieve good predictive results (i.e., comparable with the ST approach).

Computational Complexity

For a large number of output variables, all problem

transformation methods face the challenging problems

of either solving a large number of ST problems

(e.g., ST, MTRS, and RC) or a single large problem

(e.g., LS-SVR32). Nevertheless, note that ST and some implementations of RC could be sped up in the training and/or prediction phases using parallel computation (see Open-Source Software Frameworks section and Appendix B).

Using ST with kernel methods as a base model may also lead to computing the same kernel over the

same points more than once. In this case, it is computationally more efficient to consider multi-output kernels

and thus avoid redundant computations.
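The redundancy mentioned above can be made visible by counting kernel evaluations. The sketch below is an illustration only: it swaps in a simple Nadaraya-Watson kernel smoother in place of SVR, and the class `CountingKernel` and the two prediction functions are hypothetical names introduced for this example.

```python
import math
from typing import List

Vector = List[float]


class CountingKernel:
    """Gaussian kernel that records how many times it is evaluated."""

    def __init__(self, gamma: float = 1.0) -> None:
        self.gamma = gamma
        self.calls = 0

    def __call__(self, a: Vector, b: Vector) -> float:
        self.calls += 1
        return math.exp(-self.gamma * sum((u - v) ** 2 for u, v in zip(a, b)))


def smooth(weights: Vector, target: Vector) -> float:
    """Kernel-weighted average of one target (Nadaraya-Watson estimate)."""
    return sum(w * t for w, t in zip(weights, target)) / sum(weights)


def predict_separately(kernel, X: List[Vector], Y: List[Vector], x_new: Vector) -> Vector:
    """ST style: every target recomputes the kernel values for x_new."""
    d = len(Y[0])
    return [
        smooth([kernel(x, x_new) for x in X], [row[i] for row in Y])
        for i in range(d)
    ]


def predict_shared(kernel, X: List[Vector], Y: List[Vector], x_new: Vector) -> Vector:
    """Multi-output style: kernel values are computed once and reused."""
    weights = [kernel(x, x_new) for x in X]
    d = len(Y[0])
    return [smooth(weights, [row[i] for row in Y]) for i in range(d)]


X = [[0.0], [1.0], [2.0]]
Y = [[0.0, 1.0], [1.0, 3.0], [2.0, 5.0]]
separate_kernel, shared_kernel = CountingKernel(), CountingKernel()
separate_prediction = predict_separately(separate_kernel, X, Y, [1.5])
shared_prediction = predict_shared(shared_kernel, X, Y, [1.5])
```

Both variants return the same predictions, but the separate variant evaluates the kernel once per target and training point, whereas the shared variant evaluates it once per training point.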

Multi-target regression trees and rule methods

are also designed to be more competitive from the

point of view of computational and memory complexity, especially compared to their ST counterparts.

Representation and Interpretability

Problem transformation methods, relying on ST models,

do not provide a description of the relationships

among the multiple output variables. Those methods


are interpretable as long as the number of outputs is not intractable; otherwise, it is extremely difficult to

analyze each model and retrieve information about the

relationships between the different variables.

Statistical methods provide a similar representation as ST regression models (each output is a linear combination of inputs); the main difference is that the

subset of independent and sufficient outputs could be

discovered. In some cases (e.g., LASSO penalty estimations), the estimated model could be represented as

a graph since the matrix of the regression coefficients

tends to be sparse.

Kernel and MO-SVR methods suffer from the

same problem as in the single-output SVR. In fact,

their model interpretation is not straightforward since

the input space is transformed. The gain in predictive

performance is usually paid in terms of readability.

Multi-target regression trees and rule methods build human-readable predictive models. They

are hence considered as the most interpretable

multi-output models (if not coupled with ensemble

methods), clearly illustrating which input variables

are relevant and important in the prediction of a given

group of outputs.

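PERFORMANCE EVALUATION MEASURES

In this section, we introduce the performance evaluation measures used to assess the behavior of learned models when applied to an unseen or test data set of size $N_{test}$, and thereby to assess the multi-output regression methods used for model induction. Let $\mathbf{y}^{(l)}$ and $\hat{\mathbf{y}}^{(l)}$ be the vectors of the actual and predicted outputs for $\mathbf{x}^{(l)}$, respectively, and $\bar{\mathbf{y}}$ and $\bar{\hat{\mathbf{y}}}$ be the vectors of averages of the actual and predicted outputs, respectively. Besides measuring the computing times,1,3,17,52 the most commonly used evaluation measures for assessing multi-output regression models are:

• The average correlation coefficient (aCC)3,43,52:

$$\mathrm{aCC} = \frac{1}{d}\sum_{i=1}^{d}\mathrm{CC}_i = \frac{1}{d}\sum_{i=1}^{d} \frac{\sum_{l=1}^{N_{test}} \left(y_i^{(l)} - \bar{y}_i\right)\left(\hat{y}_i^{(l)} - \bar{\hat{y}}_i\right)}{\sqrt{\sum_{l=1}^{N_{test}} \left(y_i^{(l)} - \bar{y}_i\right)^2 \sum_{l=1}^{N_{test}} \left(\hat{y}_i^{(l)} - \bar{\hat{y}}_i\right)^2}} \tag{1}$$

• The average relative error43:

$$a\delta = \frac{1}{d}\sum_{i=1}^{d}\delta_i = \frac{1}{d}\sum_{i=1}^{d} \frac{1}{N_{test}} \sum_{l=1}^{N_{test}} \frac{\left|y_i^{(l)} - \hat{y}_i^{(l)}\right|}{y_i^{(l)}} \tag{2}$$

• The mean-squared error (MSE)38,40,48:

$$\mathrm{MSE} = \sum_{i=1}^{d} \frac{1}{N_{test}} \sum_{l=1}^{N_{test}} \left(y_i^{(l)} - \hat{y}_i^{(l)}\right)^2 \tag{3}$$

• The average root-mean-squared error (aRMSE)3,17,18,39,52:

$$\mathrm{aRMSE} = \frac{1}{d}\sum_{i=1}^{d}\mathrm{RMSE}_i = \frac{1}{d}\sum_{i=1}^{d} \sqrt{\frac{\sum_{l=1}^{N_{test}} \left(y_i^{(l)} - \hat{y}_i^{(l)}\right)^2}{N_{test}}} \tag{4}$$

• The average relative root-mean-squared error (aRRMSE)1,4,5,52:

$$\mathrm{aRRMSE} = \frac{1}{d}\sum_{i=1}^{d}\mathrm{RRMSE}_i = \frac{1}{d}\sum_{i=1}^{d} \sqrt{\frac{\sum_{l=1}^{N_{test}} \left(y_i^{(l)} - \hat{y}_i^{(l)}\right)^2}{\sum_{l=1}^{N_{test}} \left(y_i^{(l)} - \bar{y}_i\right)^2}} \tag{5}$$

• The model size1,3,52: defined, e.g., as the total number of nodes in trees (or the total number of rules) in multi-target regression trees (or rule methods).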

Note that the different estimated errors described above are computed as the sum/average over all the separately computed errors for each target variable. This makes it possible to calculate the model performance across multiple targets, which may potentially have distinct ranges. In such cases, the use of a normalization operator could be useful in order to obtain normalized error values for each target, prior to averaging. The normalization is usually done by dividing each target variable by its standard deviation or by re-scaling its range. The re-scaling factor could be either determined by the data/application at hand (i.e., some prior knowledge), or by the type of the used evaluation measures. For instance, when using MSE or RMSE, a reasonable choice would be to scale each target variable by its standard deviation. Relative measures, such as RRMSE, automatically re-scale the error contributions of each target variable, and hence, there might be no need here to use an extra normalization operator.
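The per-target structure of these measures can be sketched in a few lines of code. This is a minimal illustration using only the standard library; the function names and the toy matrices `Y` and `Y_hat` (one row per test instance, one column per target) are assumptions made for this example.

```python
import math
from typing import Callable, List, Sequence

Matrix = Sequence[Sequence[float]]  # N_test rows (instances), d columns (targets)


def column(matrix: Matrix, i: int) -> List[float]:
    """Values of target i across all test instances."""
    return [row[i] for row in matrix]


def mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def correlation(y: Sequence[float], y_hat: Sequence[float]) -> float:
    my, mh = mean(y), mean(y_hat)
    cov = sum((a - my) * (b - mh) for a, b in zip(y, y_hat))
    return cov / math.sqrt(sum((a - my) ** 2 for a in y) * sum((b - mh) ** 2 for b in y_hat))


def relative_error(y: Sequence[float], y_hat: Sequence[float]) -> float:
    # Divides by the actual value, so nonzero actual values are assumed.
    return mean([abs(a - b) / a for a, b in zip(y, y_hat)])


def mse(y: Sequence[float], y_hat: Sequence[float]) -> float:
    return mean([(a - b) ** 2 for a, b in zip(y, y_hat)])


def rmse(y: Sequence[float], y_hat: Sequence[float]) -> float:
    return math.sqrt(mse(y, y_hat))


def rrmse(y: Sequence[float], y_hat: Sequence[float]) -> float:
    my = mean(y)
    squared_error = sum((a - b) ** 2 for a, b in zip(y, y_hat))
    return math.sqrt(squared_error / sum((a - my) ** 2 for a in y))


def per_target(metric: Callable, Y: Matrix, Y_hat: Matrix) -> List[float]:
    """Apply a single-target metric to each of the d target columns."""
    return [metric(column(Y, i), column(Y_hat, i)) for i in range(len(Y[0]))]


Y = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
Y_hat = [[1.0, 12.0], [2.0, 18.0], [4.0, 30.0]]

a_cc = mean(per_target(correlation, Y, Y_hat))
a_re = mean(per_target(relative_error, Y, Y_hat))
total_mse = sum(per_target(mse, Y, Y_hat))  # MSE sums over the targets
a_rmse = mean(per_target(rmse, Y, Y_hat))
a_rrmse = mean(per_target(rrmse, Y, Y_hat))
```

In this toy example the second target has a range ten times larger than the first, so it dominates MSE and aRMSE, whereas aRRMSE weighs the two targets on a comparable scale.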

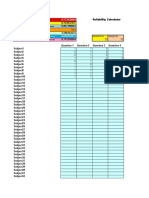

TABLE 2 Multi-Target Regression Data Sets

Data Set

60

Solar Flare

61

DATA SETS

Despite the many interesting applications of

multi-target regression, there are only a few publicly

available data sets. There follows a brief description of those data sets, which are then summarized

in Table 2 including details about the number of

instances (represented as training/testing or total

number of instances/CV where cross-validation (CV)

is applied for the evaluation process), the number of

targets, and the number of features.

Solar Flare60 : data set for predicting how often

three potential types of solar flarecommon,

moderate, and severe (i.e., d = 3)occur in a

24-h period. The prediction is performed from

the input information of 10 feature variables

describing active regions on the sun.

Water Quality61 : data set for inferring chemical

from biological parameters of river water quality.

The data are provided by the Hydrometeorological Institute of Slovenia and cover the six-year

period from 1990 to 1995. It includes the measured values of 16 different chemical parameters

and 14 bioindicator taxa.

OES97 and OES104 : data gathered from the

annual Occupation Employment Survey compiled by the US Bureau of Labor Statistics for

the years 1997 (OES97) and 2010 (OES10). Each

row provides the estimated number of full-time

equivalent employees across many employment

types for a specific metropolitan area. The input

variables are a randomly sequenced subset of

employment types, and the targets (d = 16) are

randomly selected from the entire set of categories above the 50% threshold.

ATP1d and ATP7d4 : data sets of airline

ticket prices where the rows are sequences

of time-ordered observations over several days.

The input variables include details about the

flights (such as prices, stops, and departure

date), and the six target variables are the minimum prices observed over the next 7 days for six

flight preferences (namely, any airline with any

number of stops, any airline nonstop only, Delta

Airlines, Continental Airlines, AirTran Airlines,

and United Airlines).

RF1 and RF24 : the river flow domain is a temporal prediction task designed to test predictions

Volume 5, September/October 2015

Instances

Features

Targets

1389/CV

10

Water Quality

1060/CV

16

14

OES974

323/CV

263

16

OES104

403/CV

298

16

ATP1d4

201/136

411

ATP7d

188/108

411

RF14

4108/5017

64

4108/5017

576

154/CV

16

2

4

RF2

EDM62

Polymer

43

41/20

10

Forestry-Kras63

60607/CV

160

Soil quality64

1945/CV

142

on the flows in a river network for 48 h in the

future at specific locations. The data sets were

obtained from the US National Weather Service

and include hourly flow observations for eight

sites in the Mississippi River network in the

United States from September 2011 to September 2012. The RF1 and RF2 data sets contain a

total of 64 and 576 predictor variables respectively, describing lagged flow observations from

6, 12, 18, 24, 36, 48, and 60 h in the past.

EDM62 : data set for the electrical discharge

machining (EDM) domain in which the workpiece surface is machined by electrical discharges

occurring in the gap between two electrodesthe

tool and the workpiece. The aim here is to

predict the two target variables, gap control

and flow control, using 16 input variables representing mean values and deviations of the

observed quantities of the considered machining

parameters.

Note here that all the above data sets can

be downloaded from http://users.auth.gr/espyromi/

datasets.html.

Polymer43 : the Polymer test plant data set

includes 10 input variables, measurements of

controlled variables in a polymer processing

plant (temperatures, feed rates, etc.), and 4 target variables which are measures of the output

of that plant.

It is available from ftp://ftp.cis.upenn.edu/pub/

ungar/chemdata/.

2015 John Wiley & Sons, Ltd.

227

wires.wiley.com/widm

Overview

Forestry-Kras63 : data set for the prediction of

forest stand height and canopy cover for the Kras

region in Western Slovenia. This data set contains

2 target variables representing forest properties

(i.e., vegetation height and canopy cover) and

160 explanatory input variables derived from

Landsat satellite imagery data. The data are

available upon request from the authors.

Soil quality64 : data set for predicting the soil

quality from agricultural measures. It consists

of 145 variables, of which 3 are target variables (the abundances of Acari and Collembolans, and Shannon-Wiener biodiversity), and

142 are input variables that describe the field

where the microarthropod sample was taken and

mainly include agricultural measures (such as

crops planted, packing, tillage, fertilizer, and pesticide use). The data are available upon request

from the authors.

OPEN-SOURCE SOFTWARE

FRAMEWORKS

We present now a brief summary of available implementations of some multi-output regression algorithms.

Problem Transformation Methods

Single target models, RC and all the methods described

in Spyromitros-Xioufis et al.4 have been implemented as an extension of MULAN65 (developed by

Tsoumakas, Spyromitros-Xioufis, and Vilcek), which is itself an extension of the widely used WEKA software.66 MULAN is available as a library; thus, it provides no graphical user interface or command-line interface.

Similar to MULAN, there is also the MEKA

software67 (developed by Read and Reutemann),

which is defined as a multi-label extension to WEKA.

It mainly focuses on multi-label algorithms, but incorporates as well some multi-output algorithms. MEKA

presents a graphical user interface similar to WEKA

and it is very easy to use for nonexperts.

The main advantage of both MEKA and

MULAN is that problem transformation methods

can be coupled with any ST algorithm implemented

in the WEKA library. Moreover, MULAN could be coupled with the MOA68 framework for data stream mining or integrated in the ADAMS69 framework for scientific workflow management.

Furthermore, problem transformation methods, such as ST, MTRS, and RC, could be easily implemented in R,70 and it is also possible to use the parallel70 package (included in R since version 2.14.0) to speed up computations using parallel computing (see Appendix B for a simple example of an R source code of a parallel ST implementation).
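The idea of a parallel ST implementation can also be sketched outside R. The example below is not the code of Appendix B: it is a minimal Python illustration that assumes a single input feature, a closed-form simple linear regression as the base learner, and the hypothetical function names `fit_line`, `fit_single_target`, and `predict`. Threads are used only to keep the sketch short; for CPU-bound base learners a process pool would be the more appropriate choice.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

Vector = List[float]


def fit_line(x: Vector, y: Vector) -> Tuple[float, float]:
    """Closed-form simple linear regression; returns (intercept, slope)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def fit_single_target(x: Vector, Y: List[Vector], workers: int = 4) -> List[Tuple[float, float]]:
    """Fit one independent model per target column, with the fits run concurrently."""
    d = len(Y[0])
    columns = [[row[i] for row in Y] for i in range(d)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves the order of the targets.
        return list(pool.map(lambda col: fit_line(x, col), columns))


def predict(models: List[Tuple[float, float]], x_new: float) -> Vector:
    """Predict every target independently from its own model."""
    return [intercept + slope * x_new for intercept, slope in models]


x = [0.0, 1.0, 2.0, 3.0]
Y = [[1.0, 0.0], [3.0, -1.0], [5.0, -2.0], [7.0, -3.0]]  # y1 = 1 + 2x, y2 = -x
models = fit_single_target(x, Y)
prediction = predict(models, 4.0)
```

Because the ST models share no parameters, the per-target fits are independent tasks, which is what makes this approach straightforward to parallelize.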

Statistical Methods

The glmnet71 R package offers the possibility of

learning multi-target linear models with penalized

maximum likelihood. In particular, using this package,

it is possible to perform LASSO, ridge or mixed

penalty estimation of the coefficients.

Multi-Target Regression Trees

MRTs49 are available through the R package

mvpart,72 which is an extension of the rpart73

package that implements the CART algorithm. The

mvpart package is not currently available on CRAN, but its older versions can still be retrieved.

Additional implementations of multi-target regression tree algorithms can also be found in the CLUS system,74 which focuses on decision tree and rule induction. Among others, the CLUS system

implements the predictive clustering framework and

includes multi-objective regression trees.48 Moreover, MULAN includes a wrapper implementation

of CLUS, supporting hence multi-objective random

forest.

CONCLUSION

In this study, the state of the art of multi-output regression is thoroughly surveyed, presenting details of the

main approaches that have been proposed in the literature, and including a theoretical comparison of these

approaches in terms of predictive performance, computational complexity, and representation and interpretability. Moreover, we have presented the most

often used performance evaluation measures, as well

as the publicly available data sets for multi-output

regression problems, and we have provided a summary of the related open-source software frameworks.

To the best of our knowledge, there is no other

review paper addressing the challenging problem of

multi-output regression. An interesting line of future

work would be to perform a comparative experimental study of the different approaches presented here

on the publicly available data sets to round out this

review. Another interesting extension of this review is

to consider different categorizations of the described

multi-output regression approaches, such as grouping them based on how they model the relationships

among the multiple target variables.


ACKNOWLEDGMENTS

This work has been partially supported by the Spanish Ministry of Economy and Competitiveness

through the Cajal Blue Brain project (C080020-09; the Spanish partner of the Blue Brain initiative

from EPFL), the TIN2013-41592-P project, and the Regional Government of Madrid through the

S2013/ICE-2845-CASI-CAM-CM project.

REFERENCES

1. Aho T, Ženko B, Džeroski S, Elomaa T. Multi-target regression with rule ensembles. J Mach Learn Res 2009, 373:2055-2066.

2. Appice A, Džeroski S. Stepwise induction of multi-target model trees. In: Proceedings of the Eighteenth European Conference on Machine Learning, Warsaw, Poland, 2007, 502-509. Springer Verlag.

3. Kocev D, Džeroski S, White MD, Newell GR, Griffioen P. Using single- and multi-target regression trees and ensembles to model a compound index of vegetation condition. Ecol Model 2009, 220:1159-1168.

4. Spyromitros-Xioufis E, Groves W, Tsoumakas G, Vlahavas I. Multi-label classification methods for multi-target regression, arXiv preprint arXiv:1211.6581, 2012, 1159-1168. Cornell University Library.

5. Tsoumakas G, Spyromitros-Xioufis E, Vrekou A, Vlahavas I. Multi-target regression via random linear target combinations. In: Proceedings of the European Conference on Machine Learning and Principles and Practice of Knowledge Discovery in Databases, Nancy, France, 2014, 225-240. Springer Verlag.

6. Breiman L, Friedman JH. Predicting multivariate responses in multiple linear regression. J R Stat Soc Series B Stat Methodol 1997, 59:3-54.

7. Brown PJ, Zidek JV. Adaptive multivariate ridge regression. Ann Stat 1980, 8:64-74.

8. Haitovsky Y. On multivariate ridge regression. Biometrika 1987, 74:563-570.

9. Micchelli CA, Pontil M. On learning vector-valued functions. Neural Comput 2005, 17:177-204.

10. Similä T, Tikka J. Input selection and shrinkage in multiresponse linear regression. Comput Stat Data Anal 2007, 52:406-422.

11. Madjarov G, Kocev D, Gjorgjevikj D, Džeroski S. An extensive experimental comparison of methods for multi-label learning. Pattern Recogn 2012, 45:705-727.

12. Tsoumakas G, Katakis I. Multi-label classification: an overview. Int J Data Warehouse Min 2007, 3:1-13.

13. Zhang M, Zhou Z. A review on multi-label learning algorithms. IEEE Trans Knowl Data Eng 2014, 26:1819-1837.

14. Bielza C, Li G, Larrañaga P. Multi-dimensional classification with Bayesian networks. Int J Approx Reason 2011, 52:705-727.

15. Burnham AJ, MacGregor JF, Viveros R. Latent variable multivariate regression modeling. Chemometr Intell Lab 1999, 48:167-180.

16. Kužnar D, Možina M, Bratko I. Curve prediction with kernel regression. In: Proceedings of the ECML/PKDD 2009 Workshop on Learning from Multi-Label Data, Bled, Slovenia, 2009, 61-68.

17. Han Z, Liu Y, Zhao J, Wang W. Real time prediction for converter gas tank levels based on multi-output least square support vector regressor. Control Eng Pract 2012, 20:1400-1409.

18. Tuia D, Verrelst J, Alonso L, Pérez-Cruz F, Camps-Valls G. Multioutput support vector regression for remote sensing biophysical parameter estimation. IEEE Geosci Remote Sens Lett 2011, 8:804-808.

19. Sánchez-Fernández M, de-Prado-Cumplido M, Arenas-García J, Pérez-Cruz F. SVM multiregression for nonlinear channel estimation in multiple-input multiple-output systems. IEEE Trans Signal Process 2004, 52:2298-2307.

20. Baxter J. A Bayesian/information theoretic model of learning to learn via multiple task sampling. Mach Learn 1997, 28:7-39.

21. Caruana R. Multitask learning. Mach Learn 1997, 28:41-75.

22. Ben-David S, Schuller R. Exploiting task relatedness for multiple task learning. In: Proceedings of the Sixteenth Annual Conference on Learning Theory, Washington, DC, 2003, 567-580. Springer Verlag.

23. Jalali A, Sanghavi S, Ruan C, Ravikumar PK. A dirty model for multi-task learning. In: Proceedings of the Advances in Neural Information Processing Systems 23, Vancouver, Canada, 2010, 964-972.

24. Marquand AF, Williams SCR, Doyle OM, Rosa MJ. Full Bayesian multi-task learning for multi-output brain decoding and accommodating missing data. In: Proceedings of the 2014 International Workshop on Pattern Recognition in Neuroimaging, Tübingen, Germany, 2014, 1-4. IEEE Press.

2015 John Wiley & Sons, Ltd.

229

wires.wiley.com/widm

Overview

25. Hoerl AE, Kennard RW. Ridge regression: biased estimation for nonorthogonal problems. Technometrics

1970, 12:5567.

26. Drucker H, Burges CJC, Kaufman L, Smola A, Vapnik

V. Support vector regression machines. In: Proceedings

of the Advances in Neural Information Processing

Systems 9, Denver, CO, 1997, 155161.

27. Breiman L. Bagging predictors. Mach Learn 1997,

24:123140.

28. Friedman JH. Stochastic gradient boosting. Comput

Stat Data Anal 2002, 38:367378.

29. Godbole S, Sarawagi S. Discriminative methods for

multi-labeled classification. In: Proceedings of the

Eighth Pacific-Asia Conference on Knowledge Discovery and Data Mining, Sydney, Australia, 2004, 2230.

Springer Verlag.

30. Wolpert DH. Stacked generalization. Neural Netw

1992, 5:241259.

31. Read J, Pfahringer B, Holmes G, Frank E. Classifier

chains for multi-label classification. Mach Learn 2011,

85:333359.

32. Zhang W, Liu X, Ding Y, Shi D. Multi-output LS-SVR

machine in extended feature space. In: Proceedings of

the 2012 IEEE International Conference on Computational Intelligence for Measurement Systems and Applications, Tianjin, China, 2012, 130134.

33. Izenman AJ. Reduced-rank regression for the multivariate linear model. J Multivar Anal 1975, 5:248264.

34. van der Merwe A, Zidek JV. Multivariate regression analysis and canonical variates. Can J Stat 1980,

8:27–39.

35. Abraham Z, Tan P, Perdinan P, Winkler J, Zhong

S, Liszewska M. Position preserving multi-output prediction. In: Proceedings of the European Conference

on Machine Learning and Principles and Practice of

Knowledge Discovery in Databases, Prague, Czech

Republic, 2013, 320–335. Springer Verlag.

36. Vazquez E, Walter E. Multi-output support vector

regression. In: Proceedings of the Thirteenth IFAC Symposium on System Identification, Rotterdam, The Netherlands, 2003, 1820–1825.

37. Chilès JP, Delfiner P. Geostatistics: Modeling Spatial

Uncertainty. Wiley Series in Probability and Statistics.

Wiley; 1999.

38. Brudnak M. Vector-valued support vector regression.

In: Proceedings of the 2006 International Joint Conference on Neural Networks, Vancouver, Canada, 2006,

1562–1569. IEEE Press.

39. Deger F, Mansouri A, Pedersen M, Hardeberg JY.

Multi- and single-output support vector regression for

spectral reflectance recovery. In: Proceedings of the

Eighth International Conference on Signal Image Technology and Internet Based Systems, Sorrento, Italy,

2012, 139–148. IEEE Press.

40. Cai F, Cherkassky V. SVM+ regression and multi-task

learning. In: Proceedings of the 2009 International Joint

Conference on Neural Networks, Atlanta, GA, 2009,

418–424. IEEE Press.

41. Liu G, Lin Z, Yu Y. Multi-output regression on

the output manifold. Pattern Recogn 2009, 42:2737–2743.

42. Kennedy J, Eberhart R. Particle swarm optimization.

In: Proceedings of IEEE International Conference on

Neural Networks, Perth, Western Australia, 1995, 28.

IEEE Press.

43. Xu S, An X, Qiao X, Zhu L, Li L. Multi-output

least-squares support vector regression machines. Pattern Recogn Lett 2013, 34:1078–1084.

44. Baldassarre L, Rosasco L, Barla A, Verri A.

Multi-output learning via spectral filtering. Mach

Learn 2012, 87:259–301.

45. Evgeniou T, Pontil M. Regularized multi-task learning.

In: Proceedings of the 2004 ACM SIGKDD International Conference on Knowledge Discovery and Data

Mining, Seattle, WA, 2004, 109–117. ACM Press.

46. Evgeniou T, Micchelli CA, Pontil M. Learning multiple

tasks with kernel methods. J Mach Learn Res 2005,

6:615–637.

47. Álvarez MA, Rosasco L, Lawrence ND. Kernels for

vector-valued functions: a review. Found Trends Mach

Learn 2012, 4:195–266.

48. Struyf J, Džeroski S. Constraint based induction of

multi-objective regression trees. In: Proceedings of the

Fifth International Workshop on Knowledge Discovery in Inductive Databases, Berlin, Germany, 2006,

222–233. Springer Verlag.

49. De'ath G. Multivariate regression trees: a new technique

for modeling species-environment relationships. Ecology 2002, 83:1105–1117.

50. Breiman L, Friedman JH, Stone CJ, Olshen RA. Classification and Regression Trees. Chapman & Hall/CRC;

1984.

51. Malerba D, Esposito F, Ceci M, Appice A, Pontil M.

Top-down induction of model trees with regression and

splitting nodes. IEEE Trans Pattern Anal Mach Intell

2004, 26:612–625.

52. Kocev D, Vens C, Struyf J, Džeroski S. Tree ensembles

for predicting structured outputs. Pattern Recogn 2012,

46:817–833.

53. Breiman L. Random forests. Mach Learn 2001,

45:5–32.

54. Ikonomovska E, Gama J, Džeroski S. Incremental

multi-target model trees for data streams. In: Proceedings of the 2011 ACM Symposium on Applied Computing, Taichung, Taiwan, 2011, 988–993. ACM.

55. Stojanova D, Ceci M, Appice A, Džeroski S. Network

regression with predictive clustering trees. Data Min

Knowl Disc 2012, 25:378–413.

56. Appice A, Malerba D. Leveraging the power of local

spatial autocorrelation in geophysical interpolative clustering. Data Min Knowl Disc 2014, 28:1266–1313.

57. Levatic J, Ceci M, Kocev D, Džeroski S. Semi-supervised

learning for multi-target regression. In: Proceedings of

the Third International Workshop on New Frontiers

In Mining Complex Patterns, Nancy, France, 2014,

110–123. Springer Verlag.

58. Aho T, Ženko B, Džeroski S. Rule ensembles for

multi-target regression. In: Proceedings of the Ninth

IEEE International Conference on Data Mining,

Miami, FL, 2009, 21–30. IEEE Press.

73. Therneau T, Atkinson B, Ripley B. rpart: recursive

partitioning and regression trees, R package version 4.1-9. Available at: http://CRAN.R-project.org/

package=rpart. (Accessed May 10, 2015).

74. Declarative Languages and Artificial Intelligence Group

(Katholieke Universiteit Leuven) and Department of

Knowledge Technologies (Jožef Stefan Institute). CLUS

system. Available at: https://dtai.cs.kuleuven.be/clus/.

(Accessed May 10, 2015).

59. Breiman L. Statistical modeling: the two cultures. Stat

Sci 2001, 16:199–231.

60. Bache K, Lichman M. UCI Machine Learning Repository. University of California, School of Information

and Computer Sciences, Irvine, CA, 2013. Available at:

http://archive.ics.uci.edu/ml. (Accessed May 10, 2015).

61. Džeroski S, Demšar D, Grbovic J. Predicting chemical

parameters of river water quality from bioindicator

data. Appl Intell 2000, 13:7–17.

62. Karalic A, Bratko I. First order regression. Mach Learn

1997, 26:147–176.

63. Stojanova D, Panov P, Gjorgjioski V, Kobler A,

Džeroski S. Estimating vegetation height and canopy

cover from remotely sensed data with machine learning.

Ecol Inform 2010, 5:256–266.

64. Demšar D, Džeroski S, Larsen T, Struyf J, Axelsen J,

Pedersen MB, Krogh PH. Using multi-objective classification to model communities of soil microarthropods. Ecol Model 2006, 191:131–143.

65. Tsoumakas G, Spyromitros-Xioufis E, Vilcek J, Vlahavas I. Mulan: a Java library for multi-label learning.

J Mach Learn Res 2011, 12:2411–2414.

66. Hall M, Frank E, Holmes G, Pfahringer B, Reutemann

P, Witten IH. The WEKA data mining software: an

update. ACM SIGKDD Explor Newsl 2009, 11:10–18.

67. Read J, Reutemann P. MEKA: a multi-label extension

to WEKA. Available at: http://meka.sourceforge.net/.

(Accessed May 10, 2015).