WAN Optimisation Test

Blue Coat vs. Riverbed

A Broadband-Testing Report

By Steve Broadhead, Founder & Director, BB-T

WAN Optimisation Test Report

First published March 2011 (V1.0)

Published by Broadband-Testing

A division of Connexio-Informatica 2007, Andorra

Tel : +376 633010

E-mail : info@broadband-testing.co.uk

Internet : HTTP://www.broadband-testing.co.uk

© 2011 Broadband-Testing

All rights reserved. No part of this publication may be reproduced, photocopied, stored on a retrieval system, or transmitted without the express written consent of the authors.

Please note that access to or use of this Report is conditioned on the following:

1. The information in this Report is subject to change by Broadband-Testing without notice.

2. The information in this Report, at publication date, is believed by Broadband-Testing to be accurate and reliable, but is not guaranteed. All use of and reliance on this Report are at your sole risk. Broadband-Testing is not liable or responsible for any damages, losses or expenses arising from any error or omission in this Report.

3. NO WARRANTIES, EXPRESS OR IMPLIED ARE GIVEN BY Broadband-Testing. ALL IMPLIED WARRANTIES, INCLUDING IMPLIED WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NON-INFRINGEMENT ARE DISCLAIMED AND EXCLUDED BY Broadband-Testing. IN NO EVENT SHALL Broadband-Testing BE LIABLE FOR ANY CONSEQUENTIAL, INCIDENTAL OR INDIRECT DAMAGES, OR FOR ANY LOSS OF PROFIT, REVENUE, DATA, COMPUTER PROGRAMS, OR OTHER ASSETS, EVEN IF ADVISED OF THE POSSIBILITY THEREOF.

4. This Report does not constitute an endorsement, recommendation or guarantee of any of the products (hardware or software) tested or the hardware and software used in testing the products. The testing does not guarantee that there are no errors or defects in the products, or that the products will meet your expectations, requirements, needs or specifications, or that they will operate without interruption.

5. This Report does not imply any endorsement, sponsorship, affiliation or verification by or with any companies mentioned in this report.

6. All trademarks, service marks, and trade names used in this Report are the trademarks, service marks, and trade names of their respective owners, and no endorsement of, sponsorship of, affiliation with, or involvement in, any of the testing, this Report or Broadband-Testing is implied, nor should it be inferred.

Broadband-Testing 1995-2011

TABLE OF CONTENTS

BROADBAND-TESTING
EXECUTIVE SUMMARY
INTRODUCTION: OPTIMISING THE WAN MORE IMPORTANT THAN EVER
TEST OVERVIEW
  Products In This Report
  How The Technology Is Applied
PUT TO THE TEST: WAN OPTIMISATION
  Our Test Bed
  Test 1: Video
  Test 2: WAFS/File Transfer Tests
  Test 3: Email
  Test 4: FTP
  Test 5: Web-based Applications - SharePoint
  Test 6: Microsoft Cloud-based SharePoint
  Test 7: Web Application Test - Salesforce.com
SUMMARY & CONCLUSIONS

Figure 1 - Overall Results Summary
Figure 2 - Blue Coat MACH5 SG600
Figure 3 - Riverbed Steelhead 1050
Figure 4 - Our Test Bed
Figure 5 - Baseline Video Test: 10 Streams
Figure 6 - Device Video Test: 10 Streams
Figure 7 - Baseline Video Test: 20 Streams
Figure 8 - Device Video Test: 20 Streams
Figure 9 - Baseline Video Test: 30 Streams
Figure 10 - Device Video Test: 30 Streams
Figure 11 - Baseline Video Test: 30 Streams
Figure 12 - Device Video Test: 50 Streams
Figure 13 - Riverbed Video Test: 50 Streams - Errors
Figure 14 - Baseline Video Test: 100 Streams
Figure 15 - Blue Coat Video Test: 100 Streams
Figure 16 - Blue Coat Video Test: 100 Streams - Bandwidth Reduction Achieved
Figure 17 - Blue Coat Video Test: 500 Streams - Bandwidth Reduction Achieved
Figure 18 - Cisco WAFS Benchmark
Figure 19 - Cisco WAFS Benchmark: Cold Runs - File Open
Figure 20 - Cisco WAFS Benchmark: Cold Runs - File Save
Figure 21 - Cisco WAFS Benchmark: Warm Runs - File Open
Figure 22 - Cisco WAFS Benchmark: Warm Runs - File Save
Figure 23 - Email Test: Bandwidth Usage
Figure 24 - FTP Test: Time Taken To Complete Transfer
Figure 25 - SharePoint 2007: Cold Runs
Figure 26 - SharePoint 2007: Warm Runs
Figure 27 - SharePoint 2010: Cold Runs
Figure 28 - SharePoint 2010: Warm Runs
Figure 29 - Hub and Spoke topology
Figure 30 - BPOS: Hub-and-Spoke Cold Run
Figure 31 - BPOS: Hub-and-Spoke Warm Run
Figure 32 - Branch Office to Cloud via Direct Internet Connection
Figure 33 - SharePoint Cloud Service, Branch Office to Cloud via Direct Internet Connection, Cold
Figure 34 - SharePoint Cloud Service, Branch Directly Connected to Cloud via Internet, WARM Run
Figure 35 - Branch Directly Connected to Cloud via Internet: Cold Run
Figure 36 - Directly Connected to Cloud via Internet: Warm Run

BROADBAND-TESTING

Broadband-Testing is Europe's foremost independent network testing facility and consultancy organisation for broadband and network infrastructure products.

Based in Andorra, Broadband-Testing provides extensive test and demo facilities. From this base, Broadband-Testing provides a range of specialist IT, networking and development services to vendors and end-user organisations throughout Europe, SEAP and the United States.

Broadband-Testing is an associate of the following:

Limbo Creatives (bespoke software development)

Broadband-Testing Laboratories are available to vendors and end users for fully independent testing of networking, communications and security hardware and software.

Broadband-Testing Laboratories operates an Approval scheme that enables products to be short-listed for purchase by end users, based on their successful approval.

Output from the labs, including detailed research reports, articles and white papers on the latest network-related technologies, is made available free of charge on our web site at HTTP://www.broadband-testing.co.uk

Broadband-Testing Consultancy Services offers a range of network consultancy services including network design, strategy planning, Internet connectivity and product development assistance.

EXECUTIVE SUMMARY

As the networking world becomes more distributed, the need for LAN-type performance across the WAN becomes ever more important. The reality is that people are finally working in a more flexible fashion, geographically. But regardless of where they are based (head office, branch office or even working from home) they rightly expect to be able to use the same set of applications and services. With more types of applications travelling on the same WAN link, connecting more offices and more users, it's inevitable that performance suffers due to latency, congestion and bandwidth limitations.

Not only do traditional enterprise applications and services need optimising, but applications such as streaming video and cloud-delivered SaaS applications are becoming increasingly important in the enterprise and are competing for that same bandwidth. They need predictable, quality performance as well, video especially.

To see whether existing WAN optimisation hardware is capable of delivering on these requirements, we tested comparable Blue Coat MACH5 SG600 and Riverbed Steelhead 1050 products. We created a test bed using real traffic across a simulated WAN link (using typical bandwidth and latency settings). Our application selection for testing was based on typical usage patterns and included video, CIFS/file transfer, FTP, email, web-based SharePoint collaboration, Microsoft Business Productivity Online Suite (BPOS) and Salesforce.com. We ran multiple tests simulating both cold and warm runs. Cold runs mean the data has never been seen by the optimisation device before; warm runs indicate that the device has seen that data previously, meaning that various cache mechanisms are "warm" and can deliver greater performance benefit.
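The difference between a cold and a warm run can be illustrated with a toy chunk cache. This is a minimal sketch, not either vendor's implementation: the `ByteCache` class, the fixed 4096-byte chunk size and the use of SHA-256 digests as chunk references are all assumptions made for illustration.

```python
import hashlib


class ByteCache:
    """Toy chunk store (hypothetical): remembers chunks already sent over the link."""

    def __init__(self, chunk_size=4096):
        self.chunk_size = chunk_size
        self.seen = set()  # digests of chunks that have already crossed the WAN

    def bytes_on_wire(self, payload: bytes) -> int:
        """Return how many bytes would cross the WAN for this payload."""
        sent = 0
        for i in range(0, len(payload), self.chunk_size):
            chunk = payload[i:i + self.chunk_size]
            digest = hashlib.sha256(chunk).digest()
            if digest in self.seen:
                sent += len(digest)   # warm: only a short reference is sent
            else:
                self.seen.add(digest)
                sent += len(chunk)    # cold: the full chunk is sent
        return sent


# Ten distinct 4096-byte chunks, so nothing repeats within the first pass.
document = b"".join(i.to_bytes(4, "big") * 1024 for i in range(10))

cache = ByteCache()
cold = cache.bytes_on_wire(document)  # first pass: every chunk is new
warm = cache.bytes_on_wire(document)  # second pass: every chunk is known
print(cold, warm)
```

On the second pass only the 32-byte digests travel, which is why warm results can differ so sharply from cold ones for the same data.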

We found that, when testing with traditional applications such as Microsoft file shares over CIFS, FTP and email, performance between the two vendors was relatively even, with some small advantages for each vendor in different situations. When it came to fast-growing applications such as web-based apps, video and Cloud/SaaS, however, Blue Coat performed significantly better than Riverbed.

For SharePoint, tested as an internally deployed web application (SharePoint operates over HTTP/SSL with an HTML browser interface to the user), the cold results were relatively even between the two vendors. On warm runs, however, Blue Coat performed significantly better: an average of 5x better than Riverbed across the board.

Our email test showed relatively even performance for both parties: 96% bandwidth reduction for Riverbed and 97% for Blue Coat.

We also tested Microsoft's cloud-based SharePoint service, which is delivered over the Internet, in two different ways: symmetric and asymmetric. First, with symmetric WAN optimisation (which assumes data travels into the Internet link, across the data centre WAN optimisation device, then across the WAN to a branch), the cold run results were reasonably similar between the two vendors; Blue Coat clearly beat Riverbed on warm runs.

In the second test case, where cloud-based SharePoint was accessed directly from a branch office via the Internet, Riverbed did not provide any optimisation. Blue Coat, however, showed extensive performance and bandwidth improvements (an average of 40x faster performance) with just the single appliance in a one-armed topology, a significant benefit.

Looking at another cloud application, connecting over an HTTPS session, our Salesforce.com testing highlighted a very significant limitation of the Riverbed product that Blue Coat is able to overcome. Of the two, only the Blue Coat technology was capable of actually accelerating from the cold runs, with everything being accessed instantly, while Riverbed showed no optimisation whatsoever. This can be explained by only Blue Coat being able to decrypt (then re-encrypt) the SSL traffic stream at the edge device, and by the fact that it can optimise in one-armed mode. Since it doesn't require the head-end device (the data centre WAN optimisation controller) to be always in place to decrypt SSL traffic (i.e. to know the private keys at both ends of the connection), it can intercept and optimise that traffic. The Riverbed solution, however, is clearly unable to do this, something that is already well documented.

In the fastest growing, and arguably biggest, component of network traffic today, video, Blue Coat dominated. While Riverbed was able to raise the number of concurrent streams to 30 across our simulated WAN link before generating client errors, it still consumed the entire link at just 20 clients, with no room for other applications. Blue Coat was able to service 30 streams with no problems, using only 30% of the link for a period of time (leaving plenty of bandwidth for other applications), then winding down to almost no bandwidth for the majority of the 6 minutes. This characteristic continued with 50, 100 and even 500 client streams. Even then, they consumed an average of only 5-10% of the link: 30% at the start-up of the video, then almost no bandwidth. What this means is that Blue Coat, using minimal bandwidth even at these stream counts, left plenty of room on the link for other applications, where Riverbed could not.

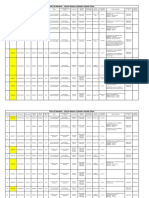

Applying a performance index to each element of our testing, based on the amount of test data in each case, we see that, in comparison with our 1.0 baseline, Riverbed clearly provides some optimisation benefits but Blue Coat is significantly out in front, thanks to its superior performance in a number of key test areas, as we've outlined above.

Figure 1 - Overall Results Summary
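The exact formula behind the index is not given here. Purely as an illustration, one plausible reading is a data-weighted mean of per-test speed-ups against the 1.0 baseline. The function name, the tuple layout and the sample figures below are all hypothetical and are not taken from the test results.

```python
def performance_index(results):
    """Data-weighted mean speed-up relative to an unoptimised baseline.

    results: list of (test_data_mb, baseline_seconds, optimised_seconds).
    This weighting scheme is an assumption, not the report's stated method.
    """
    total_data = sum(data for data, _, _ in results)
    weighted = sum(data * (base / opt) for data, base, opt in results)
    return weighted / total_data


# Hypothetical sample: one test twice as fast as baseline, one unchanged.
sample = [(10.0, 100.0, 50.0), (30.0, 60.0, 60.0)]
index = performance_index(sample)
print(index)
```

Under this reading an unoptimised link scores exactly 1.0, and tests that move more data pull the index harder in their direction.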

INTRODUCTION: OPTIMISING THE WAN MORE IMPORTANT THAN EVER

As the networking world becomes more distributed, the need for LAN-type performance across the WAN becomes ever more important.

The reality is that people are finally working in a more flexible fashion, geographically. But regardless of where they are based (head office, branch office or even working from home) they rightly expect to be able to use the same set of applications and services wherever they are. Logical enough, but available bandwidth changes significantly from location to location, especially in an international context. And, regardless of bandwidth availability, unless the link is a well-managed, point-to-point connection, issues such as latency will always arise. Throwing bandwidth at a problem has never been the answer.

According to a new report from TeleGeography, streaming media now accounts for 18% of worldwide Internet traffic and, together with other video-heavy applications, comprises 52% of worldwide Internet traffic. However, Gartner's VP of Research, Joe Skorupa, believes there is a problem here, whereby companies want to use more live video internally and contend with video from the web, yet both can undermine seemingly well-designed networks. Here, technologies such as video caching can help, and they need to.

External video on the WAN frequently consumes large amounts of the bandwidth at various times, often slowing or even stopping business-critical applications from functioning properly. Traditional WAN optimisation solutions that rely on general-purpose acceleration technologies alone are believed to be insufficient to remedy sluggish application performance on lower-bandwidth links, especially given the increasingly heavy video traffic.

Meanwhile, web-based collaboration also continues to increase and brings with it another heavy drain on bandwidth resources. At the same time, the traditional applications haven't gone away, even if they are changing in structure from traditional client-server to cloud-based/SaaS models. So, in an enterprise context, we are looking at supporting a huge number of widely varying application and service types, from traditional enterprise applications such as database, file transfer, backup and email, through to Internet-generation services such as Salesforce.com and desktop collaboration, which contribute to this significant increase in video usage.

While many companies appear to still consider some form of WAN optimisation to be a luxury, we argue that it is now an absolute necessity. Fundamentally, why would anyone not want to optimise all their connections (unless you're in the game of selling bandwidth)? We are moving beyond that "nice to have" moment into the "must have" era, thanks to the onslaught of video, Internet-based collaboration and other applications that demand low latency and sufficient performance levels.

Here, "best effort" is not enough, so traditional QoS is not enough either. The question is, just how flexible are WAN optimisation products at optimising very different types of applications, and are all solutions made equal? Here we put these points to the test, focusing on comparing Blue Coat's newly released MACH5 SG600 with Riverbed's current 1050-L.

Latency: The Performance Problem In 2011

While there is always talk of the era of "unlimited bandwidth", this simply isn't true. We regularly witness poor file service performance, excessive Internet backhaul, slow SSL-encrypted and cloud-based SaaS applications, and an inability to use thick-client applications over the WAN. Throw in the aforementioned issue with video and we see that the traditional approach to problem solving (more bandwidth) is ineffective, since most networks are now bound by a more fundamental limitation: latency. In response, many IT organisations have begun to evaluate specialised solutions that can accelerate application delivery by overcoming latency and expanding throughput. However, many of these solutions address only part of the overall mix of challenges. A comprehensive solution should support all key enterprise business applications regardless of whether they are "webified", rich media or plain text, hosted internally or externally, or encrypted or unencrypted. Ideally, such a solution should make decisions based on the user, not just the application or source server. In addition, it is important to consider support for future WAN architectures, for example a mix of MPLS with direct-to-Internet branch office connectivity.

TEST OVERVIEW

Products In This Report

To compare WAN optimisation performance, we tested comparable products from Blue Coat (the MACH5 SG600) and Riverbed (the Steelhead 1050). In each test case, both devices were optimally configured for the tests.

Blue Coat MACH5 SG600

Figure 2 - Blue Coat MACH5 SG600

The MACH5 SG600 is just one in a complete family of appliances, virtual appliances and mobile software clients focused on WAN optimisation.

Blue Coat supports a full range of optimisation for collaboration, file sharing, email, storage, backup and disaster recovery. In addition, Blue Coat has integrated specialised technologies to optimise web-based applications and video over HTTP/SSL, as well as video optimisation for RTMP (Adobe Flash), MMS and RTSP (Microsoft Windows Media Server).

Blue Coat claims that the resulting product not only reduces bandwidth by 50-99% for optimised applications; according to its customer case studies, it can also accelerate end-user performance for applications like SharePoint by 300x and massively scale bandwidth for video delivery (a claim borne out in the tests, as we shall see).

It should be noted that the MACH5 can be upgraded via a software key to add integrated Secure Web Gateway functionality, including web categorising, content filtering, web usage reporting, malware protection, ICAP services and much more. That is an extra charge, but enables interesting possibilities for locations that want direct, safe Internet access.

Riverbed Steelhead 1050

Figure 3 - Riverbed Steelhead 1050

The Steelhead 1050 is part of a range of physical and virtual appliances from Riverbed. Supported applications for acceleration include file sharing (CIFS and NFS), Exchange (MAPI), Lotus Notes, web (HTTP- and HTTPS-based applications), database (MS SQL and Oracle), and disaster recovery. Riverbed claims the Steelhead can cut bandwidth usage typically by 60-95% while offering real-time visibility into application and WAN performance.

How The Technology Is Applied

General WAN Optimisation Concepts

When remote users access centralised applications, there are two main problems that WAN optimisation aims to solve: bandwidth and latency. Bandwidth becomes a problem because users and systems are pulling everything over a narrow WAN link: 1MB files, Excel spreadsheets, 4MB PowerPoint presentations, videos measured in gigabytes, and data backups often measured in terabytes. With hundreds of applications and recreational Internet access, bandwidth is heavily taxed. Latency is the amount of delay from point A to point B. When coupled with protocols that require hundreds or thousands of "chats" across the link, performance can suffer tremendously. Even with infinite bandwidth available, protocol chattiness needs to be overcome. Enter WAN optimisation. Data reduction techniques like caching (at the byte data stream level) and compression reduce the bandwidth required. Protocol accelerations remove the impact of latency for TCP as well as numerous application protocols.
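A rough back-of-the-envelope model shows why chattiness matters even when bandwidth is plentiful. The sketch below is a simplification that assumes strictly sequential round trips and ignores TCP slow start; the function name, the 1MB file and the round-trip counts are illustrative, while the T1 rate and 100ms latency match the link settings used later in the video test.

```python
def transfer_seconds(size_bytes, round_trips, rtt_s, bandwidth_bps):
    """Estimate transfer time as serialisation delay plus protocol waiting time."""
    serialisation = size_bytes * 8 / bandwidth_bps  # time to push the bits
    waiting = round_trips * rtt_s                   # time spent on round trips
    return serialisation + waiting


T1_BPS = 1_544_000  # T1 line rate
RTT_S = 0.1         # 100ms latency

# Illustrative 1MB file: a chatty protocol (1000 round trips)
# versus an accelerated one (10 round trips).
chatty = transfer_seconds(1_000_000, 1000, RTT_S, T1_BPS)
batched = transfer_seconds(1_000_000, 10, RTT_S, T1_BPS)

# Infinite bandwidth removes serialisation delay but not the waiting.
unlimited = transfer_seconds(1_000_000, 1000, RTT_S, float("inf"))
print(round(chatty, 1), round(batched, 1), round(unlimited, 1))
```

In this model the 990 avoided round trips save about 99 seconds, far more than any bandwidth upgrade could recover.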

Video

Video seems to be an area where the vendors diverge somewhat. Riverbed doesn't document any specialised optimisation techniques for video traffic. Its compression and caching techniques provide the optimisation and, as we will see in the test results, provide limited value. Blue Coat uses "send once, serve many" stream-splitting technology that includes support for Real-Time Messaging Protocol (RTMP) on the Adobe Flash Platform and Microsoft Windows Media MMS- and RTSP-based streaming media, including Silverlight. This capability is designed to scale video and reduce the strain on the most constrained portion of enterprise networks: the Internet gateway or WAN link.

In addition to optimisation and delivery of live video, MACH5 SG appliances can optimise, scale and minimise the impact of on-demand video from the web or internal sources over HTTP, SSL, RTMP, RTSP or MMS. On-demand video, including progressively downloaded video using a Flash platform such as YouTube, is stored or cached locally at the branch office after the first person requests it. Subsequent viewers can access the content locally without impacting the WAN. In addition, internal content can be distributed automatically, typically at night when network usage is low, to avoid straining the WAN during peak business activity. The MACH5 technology can also be used to minimise the impact of "video floods", where employees watching live video events over the web (e.g. breaking news, major events) saturate WAN links and prevent business-critical applications from operating. Bandwidth usage is reduced via caching and stream splitting.
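The "send once, serve many" idea can be sketched as a branch-side proxy that pulls each distinct live stream across the WAN once and fans it out locally. This is an illustrative model only, not Blue Coat's implementation: the `StreamSplitter` class, the stream URL and the 150kbps bitrate are hypothetical.

```python
class StreamSplitter:
    """Toy branch proxy (hypothetical): one upstream fetch per distinct stream."""

    def __init__(self):
        self.viewers = {}  # stream URL -> number of local viewers

    def join(self, url):
        """Register one more local viewer for the given stream."""
        self.viewers[url] = self.viewers.get(url, 0) + 1

    def wan_streams(self):
        """Streams crossing the WAN: one per distinct URL."""
        return len(self.viewers)

    def lan_streams(self):
        """Streams delivered on the branch LAN: one per viewer."""
        return sum(self.viewers.values())


BITRATE_BPS = 150_000  # assumed per-stream bitrate, for illustration only

splitter = StreamSplitter()
for _ in range(30):
    splitter.join("mms://media.example/live")  # hypothetical stream URL

without_splitting = splitter.lan_streams() * BITRATE_BPS
with_splitting = splitter.wan_streams() * BITRATE_BPS
print(without_splitting, with_splitting)
```

With splitting, WAN load depends on the number of distinct streams rather than the number of viewers, which is why adding clients need not add WAN traffic.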

FTP

FTP transfers over large bandwidth networks can be massively accelerated with TCP optimisations and compression, while repeat file transfers can benefit from byte caching/sequence reduction. FTP traffic usually contains compressible and repetitive elements that respond extremely well to byte caching and compression technologies, significantly reducing the overall data transferred. Both the Riverbed and Blue Coat solutions provide these optimisations, as well as the ability to employ bandwidth management/QoS so that any class of FTP and other traffic can be appropriately prioritised in line with the needs of the user. For example, FTP traffic can be given maximum priority at night, when an offline replication must complete, but secondary priority in the daytime, when user productivity is paramount.
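A time-of-day policy of this kind can be expressed in a few lines. The sketch below is generic and does not reflect either product's configuration syntax; the 22:00-06:00 night window and the priority labels are assumptions chosen for illustration.

```python
from datetime import time

# Assumed replication window; a real deployment would choose its own hours.
NIGHT_START = time(22, 0)
NIGHT_END = time(6, 0)


def ftp_priority(now: time) -> str:
    """Return the traffic class for FTP at the given local time."""
    # The window wraps past midnight, so it is the union of two ranges.
    is_night = now >= NIGHT_START or now < NIGHT_END
    return "maximum" if is_night else "secondary"


print(ftp_priority(time(23, 30)))  # inside the night window
print(ftp_priority(time(12, 0)))   # daytime
```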

Email

Email systems and applications, such as Microsoft Exchange 2007 and 2010, recognising the needs of the modern, distributed organisation, have developed synchronisation and optimisation improvements to enhance the end-user experience and minimise bandwidth requirements. While these new features help address some of the issues introduced by latency and bandwidth limitations, users in branch offices still suffer poor performance. WAN optimisation provides an end-to-end acceleration solution to improve performance and response times, reduce and prioritise bandwidth usage, and significantly reduce the time to complete email operations.

The optimisations in Exchange 2007 and Exchange 2010, along with protocol improvements in MAPI, are not enough to give users in branch offices a smooth and problem-free experience. Even though Cached Exchange Mode allows remote users to remain productive by working offline, it does not address the WAN latency and bandwidth limitations that degrade performance for mail that must still be sent and received.

Using byte caching and compression technologies, as well as protocol acceleration for MAPI, both the Blue Coat and Riverbed solutions are designed to help reduce redundant data and attachments, while it is claimed that batching and pre-population provide users with a LAN-like experience when downloading email. Additionally, both solutions provide the ability to employ bandwidth management/QoS, allowing any class of traffic to be prioritised and ensuring that email services remain reliable.

MS Office

MS Office files are typically stored on a centrally located file server available to groups of users to facilitate collaboration. To open, save and manage documents between the user's computer and the server, Microsoft uses the Common Internet File System (CIFS) protocol for Wide Area File Services (WAFS). When Microsoft Office attempts to interact with files on a server across a WAN, performance and user experience suffer considerably.

CIFS, by design, makes hundreds or thousands of round trips between the client and server and is therefore particularly sensitive to latency. Worse, Microsoft Office applications expect files to be accessible quickly and respond poorly to latency-induced delay. Blue Coat and Riverbed technology is designed to accelerate and optimise all MS Office applications and, more generally, any application that relies on the CIFS protocol, by reducing latency while increasing WAN throughput.

They do this using many specific techniques, such as read ahead, write back, and directory meta-caching. Each technique is designed to overcome the performance shortcomings of using the CIFS protocol over the WAN.
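To see why read ahead helps, consider a simplified model in which a client reads a file sequentially in fixed-size requests, each costing one WAN round trip, and an optimiser prefetches several requests' worth of data per round trip. The 64KB request size and the read-ahead depth of 16 are assumptions; the 7108KB file size comes from our test file set. Real CIFS exchanges involve many other message types that this sketch ignores.

```python
import math


def round_trips(file_bytes, request_bytes, read_ahead_requests=1):
    """WAN round trips needed to read a file sequentially.

    read_ahead_requests is a hypothetical knob: how many client requests
    the optimiser satisfies with a single trip across the WAN.
    """
    requests = math.ceil(file_bytes / request_bytes)
    return math.ceil(requests / read_ahead_requests)


FILE_BYTES = 7108 * 1024    # the 7108KB document from the test file set
REQUEST_BYTES = 64 * 1024   # assumed read size per request
RTT_S = 0.1                 # 100ms latency

plain = round_trips(FILE_BYTES, REQUEST_BYTES)
prefetched = round_trips(FILE_BYTES, REQUEST_BYTES, read_ahead_requests=16)
print(plain, prefetched)
```

Multiplying each count by `RTT_S` gives the time spent purely waiting on the link, which is the component read ahead is meant to shrink.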

In addition to protocol optimisation and byte-level caching, compression technologies, in combination with TCP enhancements and bandwidth management, are designed to further improve and accelerate any CIFS application. Most MS Office files, whether Word, Excel or PowerPoint, contain compressible and repetitive elements that respond extremely well to byte caching/sequence reduction and compression technologies.

MS Office SharePoint

Office SharePoint Server provides good collaborative functionality, but WAN links and degraded performance have discouraged users from adopting the software and processes. To assist with uptake, both Riverbed and Blue Coat provide an end-to-end acceleration solution designed to regain performance, minimise bandwidth usage and significantly reduce the time to complete all SharePoint Server operations, whether for information or content management, collaboration and workflow, or the integration of customer and partner information during the proposal process. It seems that some of Blue Coat's technologies for HTTP and SSL provide quite a bit of extra optimisation benefit, judging from how they outperformed Riverbed for warm reads of SharePoint.


PUT TO THE TEST: WAN OPTIMISATION

Our Test Bed

Figure 4 - Our Test Bed

Our test bed simulated a classic office environment.

Using virtual machines, we created a set of clients that attached directly to each of the WAN optimisation products under test. We used a WAN simulator to enforce bandwidth and latency typical of an Internet connection. On the other side of the WAN link was the second device of each pair under test. These attached directly to the set of applications we used to test the devices, each running on a virtual machine.

To provide some level of compatibility between different tests, we created a file set that

we were able to use in many of our test scenarios. This was based on a real-world mix of

Office and general file types including .doc, .xls, and .ppt in various file sizes, as follows:

File names: 1340k.doc, 7108k.doc, 1100k.xls, 500k.ppt, 3500k.ppt

File sizes: 268KB, 1372KB, 7108KB, 1108KB, 256KB, 464KB, 3568KB

Our testing covered video, WAFS/CIFS, email, FTP, collaborative and web applications.


Test 1: Video

For the video testing, we used Microsoft's Windows Media Load Simulator. Setting our WAN simulator to a T1 (1.544Mbps) bandwidth limit with 100ms latency to create a real-world scenario, we began by loading 10 concurrent clients, then 20, 30, 50 and finally 100, depending on the ability of the device under test to cope with that number of video streams. We monitored and measured bandwidth utilisation at each step. Tests were run over a 350 second period.

Figure 5 - Baseline Video Test: 10 Streams

Figure 6 - Device Video Test: 10 Streams

Our starting point was a vanilla baseline run across a non-optimised link with just 10

video clients. Checking bandwidth usage at this point we observed around the 1.544Mbps

of constant inbound bandwidth utilisation as the ceiling of our link was hit with relatively

minimal outbound utilisation.

Testing each of our devices under the same test conditions we found very different

behavioural characteristics from each of the WAN optimisation devices.

The Riverbed device fluctuated constantly between around 25% and 70% utilisation, apparently a symptom of its video handling technique, while Blue Coat peaked at around 30% for the first 80 seconds of the test and then consumed almost zero inbound bandwidth: by then the video was fully in the object cache and being served locally.
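The behaviour described for the object cache (one copy crosses the WAN, every later client is served locally) can be sketched as below. This is a toy illustration, not Blue Coat's implementation: the `ObjectCache` class, the `origin_fetch` stand-in, the URL and the 1,000,000-byte object size are all hypothetical, and a real device would also cache while streaming rather than only after a full download.

```python
class ObjectCache:
    """Toy branch-side object cache (hypothetical, for illustration only)."""

    def __init__(self, fetch_over_wan):
        self._fetch_over_wan = fetch_over_wan  # callable: url -> bytes
        self._store = {}
        self.wan_bytes = 0                     # bytes pulled across the WAN

    def get(self, url):
        # Only the first request for an object crosses the WAN link.
        if url not in self._store:
            body = self._fetch_over_wan(url)
            self.wan_bytes += len(body)
            self._store[url] = body
        return self._store[url]


def origin_fetch(url):
    # Stand-in for the media server at the far end of the WAN.
    return b"\x00" * 1_000_000


cache = ObjectCache(origin_fetch)

# 100 clients request the same video from the branch device.
served_bytes = sum(len(cache.get("video/training.wmv")) for _ in range(100))

print("bytes served to clients:", served_bytes)
print("bytes fetched over WAN: ", cache.wan_bytes)
```

In this sketch WAN traffic is independent of the number of clients, which matches the pattern reported in the tests: a short burst of inbound utilisation, then close to none.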

Figure 7 - Baseline Video Test: 20 Streams (baseline link utilisation, 20 clients over 350 seconds). Chart annotation: incoming video from 20 clients consumes the entire inbound link, leaving no room for other traffic.

Moving onto sustaining 20 video streams, our baseline is as above, with a very similar

pattern to our 10-stream test.

Comparing this baseline with our device tests, again we see some very interesting

bandwidth usage patterns. The Riverbed Steelhead is largely pegged at maximum

bandwidth utilisation throughout the test. Blue Coat peaked between 25-30% utilisation

until all the video was stored in the object cache and then served locally again.

Figure 8 - Device Video Test: 20 Streams

Moving to 30 client streams, the pattern continued as before. Blue Coat was able to

rapidly cache and serve the video to multiple clients directly from the branch device,

while Riverbed required constant communication between the head and branch. With 20

clients all pulling the same video at the same time, the WAN link was quickly saturated.

Not so for Blue Coat: WAN bandwidth utilisation was between 20% and 30% until the entire video was cached, then dropped to near zero even while continuing to serve 20 clients.

Figure 9 - Baseline Video Test: 30 Streams (baseline link utilisation, 30 clients over 350 seconds)

Figure 10 - Device Video Test: 30 Streams

Again we found inbound bandwidth pegged throughout the test by our WAN limit and the Riverbed device. As we approached 30 streams, the Riverbed 1050 completely filled the available bandwidth, eventually leaving the load test software straining to establish new streams. Blue Coat again performed better, requiring no more bandwidth than in the 10 and 20 stream tests.

Figure 11 - Baseline Video Test: 50 Streams (baseline link utilisation, 50 clients over 350 seconds)

Figure 12 - Device Video Test: 50 Streams

At 50 clients, we saw huge numbers of errors being recorded by the Riverbed device (see the illustration of the Riverbed device under test below).

Again, this appears to be due to the bandwidth limit of our WAN link being hit early in the test, with no ability to optimise that bandwidth sufficiently to support large numbers of video streams.

Figure 13 Riverbed Video Test: 50 Streams Errors

At this point only the Blue Coat device was capable of potentially supporting more streams, so we reran the test with 100 concurrent video clients.

Figure 14 - Baseline Video Test: 100 Streams (baseline link utilisation, 100 clients over 350 seconds)

The pattern was exactly as before, minimal outbound utilisation still and a short period of

30% or so utilisation, followed by almost zero utilisation inbound. We were able to sustain

100 concurrent video streams and there were no indications that adding more would hurt

performance in any way.

Figure 15 Blue Coat Video Test: 100 Streams

At most, the Blue Coat device used significantly less than half of the available WAN

bandwidth and effectively none once the entire video had been placed in the object cache.


Cross-checking this against the SG600 management interface we saw near 100%

bandwidth reduction achieved courtesy of the video going straight into the object cache

of the Blue Coat device.

Figure 16 Blue Coat Video Test: 100 Streams Bandwidth Reduction Achieved

Finally, we decided to roll the dice and test Blue Coat's SG600 with 500 video streams. That test saw successful delivery of on-demand video streams to 500 clients. Again, utilisation was about 30-35% of bandwidth at the start of the video, and almost no bandwidth was used for the last two thirds of the test.

Blue Coat delivered 500 video streams over a T1 with plenty of bandwidth available for other applications, a significant optimisation result.

Figure 17 Blue Coat Video Test: 500 Streams Bandwidth Reduction Achieved


So why are these results significant? We've already mentioned that video is the fastest growing application, and therefore traffic generator, on the network today, so it is essential to be able to optimise it.

Why is it so popular? Online training videos are increasingly popular, as is video as part

of collaborative working. Add these enterprise applications to the growing use of video

for entertainment and in social networking as an accepted form of work-related

interchange in many companies, as well as for casual use, and the scale of the growth

in video across the network is a clear challenge for businesses and network providers

alike.

Put simply, if you can't optimise video, your network connections and other applications are going to suffer.


Test 2: WAFS/File Transfer Tests

For the WAFS/CIFS test we used our Office file mix as defined earlier, in combination with Cisco's WAFS Benchmark Tool for Microsoft Office Applications, to again create a totally independent test platform for these devices.

This measures response times for basic file operations (open, save, close) when using a

Microsoft Office application (such as Word, PowerPoint or Excel). The benchmark executes

a scripted set of operations and measures the time it takes to complete them.

Figure 18 Cisco WAFS Benchmark

We repeated the operations for the different MS Office file types and sizes we created, as described earlier, with our default WAN simulator settings in place, and ran our tests over multiple iterations.

We started with cold run testing, ensuring caches and every element were cleared before

running the tests, first a file open operation, then a save and then a close. Across the cold

runs, performance was relatively similar between all devices.


Figure 19 Cisco WAFS Benchmark: Cold Runs File Open

Figure 20 Cisco WAFS Benchmark: Cold Runs File Save

On the file open tests, Riverbed delivered significant optimisation, though we did note

with some additional Excel file tests (not shown) it was significantly slower across all runs

than the others. On the file save tests, Riverbed tended to edge many tests.


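WARM Time to Open Office Document (series: Baseline, Riverbed Warm, Blue Coat Warm; values as labelled on the chart, axis 0.0 to 60.0):

1340k.doc: baseline 19.3; warm runs 3.5 and 3.5
7108k.doc: baseline 67.1; warm runs 4.0 and 3.6
1100k.xls: baseline 21.6; warm runs 3.4 and 3.5
500k.ppt: baseline 22.7; warm runs 3.5 and 3.4
3500k.ppt: baseline 50.3; warm runs 3.7 and 3.5

Figure 21 - Cisco WAFS Benchmark: Warm Runs File Open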

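WARM SAVE Time for Office Document (series: Baseline, Riverbed Warm, Blue Coat Warm; values as labelled on the chart, axis 0.0 to 60.0):

1340k.doc: baseline 22.8; warm runs 5.0 and 5.4
7108k.doc: warm runs 5.1 and 7.1 (no baseline label)
1100k.xls: baseline 21.2; warm runs 4.8 and 5.1
500k.ppt: baseline 20.4; warm runs 5.0 and 5.4
3500k.ppt: baseline 45.9; warm runs 5.1 and 6.4

Figure 22 - Cisco WAFS Benchmark: Warm Runs File Save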

Our warm test runs again showed a relatively close level of performance between both vendors, with file saves showing a larger margin than file opens. Depending on the actual file involved, the difference was between 5% and 30%, with most results inside a 10% margin.


Riverbed's performance was generally strong, with best times in many file/action combinations across all tests.

Test 3: Email

For our email test we used our Office file set as attachments and monitored a series of

cold then warm runs with each test device using a pair of Outlook clients and Exchange

server across our WAN simulator.

We found that both the Riverbed and Blue Coat devices optimised the email attachments significantly further on a warm run. On the cold run, Blue Coat recorded 8.26% and Riverbed 10% optimisation. On the warm run, Riverbed improved significantly to 96% bandwidth optimisation. However, Blue Coat was still better with an excellent 97.81% bandwidth optimisation.

In terms of bandwidth utilisation, given our 1.544Mbps link, this translates to Riverbed utilising an average of 6kbps while Blue Coat required on average 4.9kbps to send each of our test documents. The native compressibility of each document was the significant factor in the cold runs, but once a document was cached, the MACH5 was more efficient at sending the attachments.

Bandwidth usage as a percentage of baseline:

Device | Cold | Warm
Baseline | 100.0% | 100.0%
Riverbed | 90.0% | 4.0%
Blue Coat | 92.0% | 3.0%

Figure 23 - Email Test: Bandwidth Usage
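The optimisation percentages quoted for the email test and the bandwidth usage bars in Figure 23 are two views of the same numbers. The short sketch below shows the conversion, assuming "optimisation" means the share of baseline bandwidth saved; the function and variable names are illustrative only.

```python
def residual_usage_pct(optimisation_pct):
    """Bandwidth still used, as a percentage of baseline, assuming
    'optimisation' is the percentage of baseline bandwidth saved."""
    return 100.0 - optimisation_pct


# Optimisation figures quoted in the email test text.
email_optimisation = {
    ("Riverbed", "cold"): 10.0,
    ("Blue Coat", "cold"): 8.26,
    ("Riverbed", "warm"): 96.0,
    ("Blue Coat", "warm"): 97.81,
}

for (vendor, run), saved in email_optimisation.items():
    used = residual_usage_pct(saved)
    print(f"{vendor:9s} {run}: {saved:5.2f}% saved, {used:5.2f}% of baseline used")
```

Rounded to whole percentages, the results line up with the chart labels of 90%, 92%, 4% and 3%.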


Test 4: FTP

For our FTP tests we again took our Office file set and our default WAN simulator settings of 1.544Mbps and 100ms round-trip time and created an FTP client-server connection. We downloaded all of the files used for the WAFS Benchmark and summarised the results.

We ran a number of cold runs, resulting in a baseline time of 230 seconds to complete the

transfers. We then performed several warm run iterations and averaged these out to

produce the results as shown in the graph below, with transfer times measured in

seconds.

We found Blue Coat to be faster than Riverbed on both the cold and warm runs, about

17% faster cold, 30% faster on the warm. This is classic optimisation territory and each

device provided significant savings over baseline, with Blue Coat taking the ultimate

honours here.

Cold and warm time to transfer FTP files (seconds):

Device | Cold | Warm
Baseline | 230.0 | -
Riverbed | 155.0 | 13.0
Blue Coat | 128.0 | 9.0

Figure 24 - FTP Test: Time Taken To Complete Transfer
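The "17% faster cold, 30% faster warm" figures can be reproduced from the transfer times in Figure 24. The sketch below assumes "faster" means the reduction in transfer time relative to the slower device; the helper name is illustrative.

```python
def percent_faster(slower_s, faster_s):
    """Reduction in transfer time relative to the slower result, in percent."""
    return (slower_s - faster_s) / slower_s * 100.0


# Transfer times in seconds, taken from Figure 24.
BASELINE = 230.0
RIVERBED_COLD, RIVERBED_WARM = 155.0, 13.0
BLUECOAT_COLD, BLUECOAT_WARM = 128.0, 9.0

cold_gain = percent_faster(RIVERBED_COLD, BLUECOAT_COLD)
warm_gain = percent_faster(RIVERBED_WARM, BLUECOAT_WARM)
bluecoat_vs_baseline = percent_faster(BASELINE, BLUECOAT_COLD)

print(f"Blue Coat vs Riverbed, cold: {cold_gain:.1f}% faster")
print(f"Blue Coat vs Riverbed, warm: {warm_gain:.1f}% faster")
print(f"Blue Coat cold vs baseline:  {bluecoat_vs_baseline:.1f}% faster")
```

The same helper applied to the baseline shows how much of the saving is already achieved on a cold run, before any caching benefit.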


Test 5: Web-based Applications - SharePoint

Collaboration has shifted largely to web-based protocols, HTTP and SSL. It has gone

through the hype cycle and out into the real world as a much-used service/application

right now.

Here we start with a classic end-to-end, client-server setup, deployed and operated within the enterprise, using our default WAN simulation settings and testing with Microsoft's SharePoint 2007 and 2010.

For this test we extended our file set to include .mp3 and .txt files, in addition to our Excel, Word and PowerPoint documents. Given that SharePoint users tend to exchange barely changed files regularly, the ability to optimise this traffic is critical, both in the kind of end-to-end scenario we have here and in a live Internet environment (see the BPOS testing section next, for example) where bandwidth and latency may vary enormously from user to user involved in the collaborative workspace.

Test files: 13MB.mp3, 7108K.doc, 500K.ppt, 3.5MB.ppt, 300K.docx, 2MB.txt, 11.34MB.doc, 1.1MB.xls

Figure 25 SharePoint 2007: Cold Runs


Starting with SharePoint 2007 cold runs (see above), we see generally consistent

performance between both products, some marginally quicker than the other in certain

tests, but nothing significant in the real world.

Neither device was able to improve baseline performance on the pre-compressed .mp3

file or on the (pre-compressed graphic-heavy) 3.5MB PowerPoint but, in most cases,

some optimisation could be applied, even on a cold run.

Figure 26 SharePoint 2007: Warm Runs

On the warm runs we see significant optimisation from both vendors, but Blue Coat still

dominated with near-instant transfer times in all cases - a good level of performance all

round.

We then switched our attention to SharePoint 2010 (see next page) and reran cold and warm test runs with the same configuration as before. Again, on the cold runs we found largely consistent performance, but with Riverbed just shading many of the tests. On warm runs, Blue Coat again emerged on top, albeit not quite as clearly as with SharePoint 2007, but consistently so across all tests.


Overall we were very happy with the level of performance we saw in the SharePoint tests, itself a significant application for 2011 and beyond.

The question now is, when we move to an Internet-based model, without our controlled end-to-end test setup, especially when running secure web applications, will the pattern still follow? Read on to find out.

Microsoft SharePoint 2010 COLD RUNS (series: Baseline, Riverbed Cold, Blue Coat Cold; axis 0.0 to 80.0). Values as labelled on the chart:

13MB.mp3: 74.5, 77.4, 76.4
300k.docx: 2.9, 2.9, 3.6
500k.ppt: 3.3, 1.9, 2.7
3500k.ppt: 20.3, 19.6, 21.7
Remaining labels for 2MB.txt, 1340k.doc, 7108k.doc and 1100k.xls: 4.6, 5.0, 4.2, 5.5, 12.5, 8.3, 39.7, 9.1, 9.6, 2.3, 2.9, 6.8

Figure 27 - SharePoint 2010: Cold Runs

Microsoft SharePoint 2010 WARM RUNS (axis 0.0 to 1.6):

File | Riverbed Warm | Blue Coat Warm
13MB.mp3 | 1.4 | 0.8
300k.docx | 0.8 | 0.5
2MB.txt | 0.9 | 0.6
1340k.doc | 0.9 | 0.6
7108k.doc | 1.2 | 0.7
500k.ppt | 0.8 | 0.5
3500k.ppt | 1.0 | 0.6
1100k.xls | 0.8 | 0.6

Figure 28 - SharePoint 2010: Warm Runs

Test 6: Microsoft Cloud-based SharePoint

Microsoft provides SharePoint as a cloud-delivered application as well. The Business Productivity Online Suite (BPOS) is a set of messaging and collaboration tools, delivered as a subscription service that provides Office/collaboration-type tools without the need to deploy and maintain software and hardware on the premises: true SaaS, in other words. The suite includes MS Exchange Online for email and calendaring, MS SharePoint Online for portals and document sharing, MS Office Communications for presence availability, instant messaging and peer-to-peer audio calls, and Office Live Meeting for web and video conferencing. It's what analysts are calling the future of IT, so the ability to optimise this type of service is critical going forward.

This application test meant going live onto the Internet and involved some small file transfers. In order to make the results more measurable and meaningful, we pegged the bandwidth at 512Kbps, still a common access speed in Europe, especially for branch offices and SMBs.


How a branch office actually reaches an SaaS provider is a matter of deployment

preference. On the one hand, it is possible to utilise a full hub-and-spoke topology, where

all Internet-bound traffic is backhauled through a corporate-controlled data centre, and

then from there routed out to the Internet proper.

Figure 29 - Hub and Spoke topology

The other approach is a branch office connected directly to the Internet (what we'll refer to as Direct-to-Internet): each branch office is able to route traffic to the company data centre while maintaining a direct connection to the Internet, provided by its local ISP or via an MPLS split tunnel design. Each WAN optimisation vendor integrates differently with these two approaches.

Riverbed must be deployed in a hub-and-spoke environment, where all traffic from each

branch passes through the single hub of the data centre.

Blue Coat, while supporting the hub-and-spoke topology, also supports an asymmetric approach where Internet access is provided at the endpoint by the local ISP or MPLS split tunnel: a branch-to-Internet solution.

As a consequence of these differing philosophies, each test has been run twice: Once with

hub-and-spoke, and again with the branch office directly connected to the Internet. See

graphs on the next two pages.

In the hub-and-spoke topology, while the cold run results were reasonably similar

between our vendors, on several of the warm runs Blue Coat left Riverbed behind. Blue

Coat was significantly quicker than Riverbed when transferring the large PowerPoint file

(3500K.ppt), as well as the large (and highly compressible) 7108k Word document. As

the file sizes increased, Blue Coat was consistently faster.


BPOS Hub and Spoke COLD (series: Baseline, Riverbed Cold, Blue Coat Cold; axis 0.0 to 120.0). Values as labelled on the chart:

250k.doc: 3.0, 2.2, 2.0
1340k.doc: 21.3, 9.0, 9.3
7108k.doc: 116.3, 24.7, 27.0
1100k.xls: 17.3, 4.0, 3.7
500k.xls: 1.0, 1.0, 7.0
250k.ppt: 3.0, 1.0, 1.0
500k.ppt: 7.0, 7.7, 13.3
3500k.ppt: 58.0, 57.7, 56.0

Figure 30 - BPOS: Hub-and-Spoke Cold Run

BPOS Hub and Spoke WARM (axis 0.0 to 18.0):

File | Riverbed Warm | Blue Coat Warm
1340k.doc | 2.7 | 1.0
7108k.doc | 17.0 | 1.5
1100k.xls | 1.7 | 1.0
500k.xls | 1.0 | 1.0
500k.ppt | 1.5 | 1.0
3500k.ppt | 8.0 | 1.5

Figure 31 - BPOS: Hub-and-Spoke Warm Run

The results from the Hub-and-Spoke configuration showed both vendors optimising the

download by several factors.


Figure 32 - Branch Office to Cloud via Direct Internet Connection

Where the branch offices were connected directly to the Internet, however, the results were a very different story. Without an upstream device to assist in compressing the file, every vendor was at the mercy of the uplink speed, again a common 512Kbps line with 100ms latency.

The download times on the Direct-to-Internet cold run all hover around the baseline times, indicating that neither vendor could improve performance.

MSFT BPOS Branch Office to Cloud via Direct Internet Connection COLD (series: Baseline, Riverbed Cold, Blue Coat Cold; axis 0.0 to 120.0). Values as labelled on the chart:

1340k.doc: 22.0, 21.7, 21.0
7108k.doc: 121.3, 116.3, 116.7
1100k.xls: 17.0, 17.7, 17.7
500k.xls: 6.3, 7.0, 7.0
500k.ppt: 13.0, 13.2, 13.7
3500k.ppt: 58.0, 58.2, 57.7

Figure 33 - SharePoint Cloud Service, Branch Office to Cloud via Direct Internet Connection, Cold


Comparatively, each performed equally, with no real optimisation on the Direct-to-Internet cold transfer. This is understandable, unfortunate as it may seem, as the bulk of optimisation offerings require a device at either end of a connection to provide compression and caching.

The warm results exhibited a marked advantage for Blue Coat but not for Riverbed. With the data centre device removed from the network path, the SG600 showed its ability to optimise Internet and cloud SaaS traffic, whereas the Riverbed Steelhead showed effectively no improvement over baseline. On average, Blue Coat's SG600 was able to serve the warm file in around one second.

Again, the ability of Blue Coat to provide optimisation where other vendors struggle is

apparent (see graph below). This is proving to be a consistent benefit in many of our

tests, notably those that are forward-looking. We will now move into a completely

Internet-based scenario, testing with Salesforce.com.

MSFT BPOS Branch Office to Cloud via Direct Internet Connection WARM (axis 0.0 to 120.0):

File | Baseline | Riverbed Warm | Blue Coat Warm
1340k.doc | 22.0 | 22.0 | 1.0
7108k.doc | 121.3 | 116.0 | 1.3
1100k.xls | 17.0 | 16.7 | 1.0
500k.xls | 6.3 | 7.0 | 1.0
500k.ppt | 13.0 | 14.0 | 1.0
3500k.ppt | 58.0 | 58.0 | 1.2

Figure 34 - SharePoint Cloud Service, Branch Directly Connected to Cloud via Internet, WARM Run


Test 7: Web Application Test - Salesforce.com

One of the great web application successes of the past few years is Salesforce.com, once ahead of its time but now a model application/SaaS for the future.

Salesforce.com is used to manage customer information and sales opportunities. Certain uses and workflows may not benefit from optimisation technologies. There are many use cases, however, where large amounts of data are transferred, often on a repetitive basis. Reporting dashboards are commonly accessed by multiple users; in addition, data dumps, documents and other large queries and downloads are also common (and can be the most performance-constrained use cases of Salesforce.com). For this reason the ability to optimise this kind of environment is critical from our perspective. We created a secure (HTTPS) connection across the Internet as before and carried out a series of file transfers using a variation on our file set, including text, Excel, Word, PowerPoint and .csv file types.

SFDC Branch Office to Cloud via Direct Internet Connection COLD (axis 0.0 to 60.0):

File | Baseline | Riverbed Cold | Blue Coat Cold
300K.doc | 5.0 | 5.0 | 5.0
2MB.txt | 35.0 | 35.0 | 2.0
1340k.doc | 22.0 | 22.0 | 18.0
1100k.xls | 17.0 | 17.0 | 6.0
500k.ppt | 7.0 | 7.0 | 3.0
3500k.ppt | 58.0 | 58.0 | 19.1

Figure 35 - Branch Directly Connected to Cloud via Internet: Cold Run

Looking at the cold runs first, we see that the Blue Coat device largely dominates in terms

of best results. Obviously the compression options from Blue Coat are working especially

well on the text file here.

Moving on to the warm results, we can see that only the Blue Coat technology was

capable of actually accelerating from the cold runs, with everything being accessed

instantly, while Riverbed shows no optimisation whatsoever.


This can be explained only by Blue Coat's ability to decrypt (then re-encrypt) the SSL traffic stream at the edge and take immediate advantage of its object cache on the branch device. Since it doesn't require the head-end device to be always in place to decrypt SSL traffic (i.e. to know the private keys at both ends of the connection), it can intercept and optimise that traffic. The Riverbed solution is clearly unable to do this, something that is already well documented.

SFDC Branch Office to Cloud via Direct Internet Connection WARM (axis 0.0 to 60.0):

File | Baseline | Riverbed Warm | Blue Coat Warm
300K.doc | 5.0 | 5.0 | 1.0
2MB.txt | 35.0 | 35.0 | 1.0
1340k.doc | 22.0 | 22.0 | 1.0
1100k.xls | 17.0 | 17.0 | 1.0
500k.ppt | 7.0 | 7.0 | 1.0
3500k.ppt | 58.0 | 58.0 | 1.0

Figure 36 - Directly Connected to Cloud via Internet: Warm Run

Riverbed's usual argument here is that terminating SSL before re-encrypting is fundamentally insecure. However, the Blue Coat argument seemed to stand up during our testing because the last hop from its SG600 to the client is using a known certificate with a known trust relationship. In general, if unknown parties are terminating SSL upstream from the client, then it is insecure.

If known parties are terminating SSL upstream from the client, and that termination

point is a trusted entity within your organisation with a verifiable chain of trust, then it is

just as secure as a regular SSL connection.
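The "verifiable chain of trust" point can be illustrated from the client side. The sketch below uses Python's standard `ssl` module and is only an assumption about how a client might be set up to trust an organisation's own certificate authority; the `build_client_context` helper and the idea of passing an organisation CA file are hypothetical and are not taken from either vendor's documentation.

```python
import ssl


def build_client_context(org_ca_file=None):
    """Return a TLS client context that verifies the peer's certificate chain.

    org_ca_file is a hypothetical path to the organisation's own CA
    certificate (the CA that would sign the branch device's certificate).
    """
    # The default context requires a valid certificate and a matching hostname.
    context = ssl.create_default_context()
    if org_ca_file is not None:
        # Additionally trust certificates issued by the organisation's CA.
        context.load_verify_locations(cafile=org_ca_file)
    return context


context = build_client_context()
print("certificate required:", context.verify_mode == ssl.CERT_REQUIRED)
print("hostname checked:    ", context.check_hostname)
```

With a context like this, a connection terminated by a device whose certificate does not chain to a trusted CA fails the handshake, which is the distinction drawn above between known and unknown parties terminating SSL.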


SUMMARY & CONCLUSIONS

The networking world is changing; the cloud, virtualisation and cloud/software-as-a-service applications are growing fast, both in adoption and in the need to optimise them.

As such, while classic CIFS and FTP-type WAN optimisation is still important, it is quickly being overtaken by a new wave of applications such as secure web applications, collaborative environments and finally the onslaught of video.

To see if the current crop of WAN optimisation hardware is capable of delivering on these

requirements, we tested comparable products from the leading WAN optimisation

vendors: Blue Coat (MACH5 SG600) and Riverbed (Steelhead 1050).

We created a test bed using real traffic across a simulated WAN link (using typical

bandwidth and latency settings). Our application selection for testing was based on typical

modern usage patterns and included video, WAFS/file transfer, FTP, email, SharePoint

collaboration, BPOS and Salesforce.com.

We found that, when testing with traditional applications such as CIFS and FTP,

performance between these vendors was relatively even with some small advantages for

each vendor in different situations. Again, with SharePoint, Riverbed lagged slightly

behind Blue Coat. Our email test also showed even performance, with each vendor

claiming a small advantage over the other in specific conditions.

We tested BPOS, Microsoft's cloud-delivered MS Office over the Internet, first with symmetric WAN optimisation (which assumes data travels onto the Internet link, across the data centre WAN optimisation device, then across the WAN to a branch). With traffic backhauled through the data centre, the cold run results were reasonably similar between both vendors. What is more interesting, however, is that when BPOS was accessed from a branch office directly via the Internet, Riverbed could not provide any optimisation. Blue Coat, however, showed extensive performance and bandwidth improvements with just the single appliance.

Looking at another cloud application over a secure (SSL) Internet environment, our Salesforce.com testing highlighted a significant limitation of the Riverbed technology that Blue Coat is able to overcome. Only the Blue Coat product was capable of actually accelerating this type of traffic, with everything being accessed instantly, while the Riverbed showed no optimisation whatsoever. This can be explained only by Blue Coat's ability to decrypt (then re-encrypt) the SSL traffic stream at the branch. Since it doesn't require the head-end device (data centre WAN optimisation controller) to be always in place to decrypt SSL traffic (i.e. to know the private keys at both ends of the connection), it can intercept and optimise that traffic, while the other could not.

The Riverbed solution is clearly unable to do this, something that is already well documented. Riverbed's usual argument here is that terminating SSL before re-encrypting is fundamentally insecure. However, the Blue Coat argument seemed to stand up during our testing because the last hop from its SG600 to the client is using a known certificate with a known trust relationship.

In general, if unknown parties are terminating SSL upstream from the client, then it is insecure. If known parties are terminating SSL upstream from the client, and that termination point is a trusted entity within your organisation with a verifiable chain of trust, then it is just as secure as a regular SSL connection.

In the fastest growing, and arguably biggest, component of network traffic today, video, Blue Coat dominated. On a link that was fully saturated at 20 concurrent clients, Blue Coat was able to service 500 streams compared to Riverbed's modest improvement of 30 streams. On average, Blue Coat was drawing only 6kbps per stream, compared to Riverbed's 200kbps, an almost 30x advantage for Blue Coat. Even then the SG600 was using minimal bandwidth. R&D investment in specialised video technologies here has clearly paid off.


- C120 E534 08enDocument184 pagesC120 E534 08envinnie79No ratings yet

- Using Kerberos To Authenticate A Solaris 10 OS LDAP Client With Microsoft Active DirectoryDocument25 pagesUsing Kerberos To Authenticate A Solaris 10 OS LDAP Client With Microsoft Active DirectoryrajeshpavanNo ratings yet

- ES400 - Ultra Enterprise 10000 Administration - SG - 0698Document606 pagesES400 - Ultra Enterprise 10000 Administration - SG - 0698JackieNo ratings yet

- Design and Implementation of Ofdm Transmitter and Receiver On Fpga HardwareDocument153 pagesDesign and Implementation of Ofdm Transmitter and Receiver On Fpga Hardwaredhan_7100% (11)

- Oracle®VMServer For SPARC 3.3 Administration GuideDocument524 pagesOracle®VMServer For SPARC 3.3 Administration GuideSahatma SiallaganNo ratings yet

- ORACLE 12.1 Financials ImplementationDocument424 pagesORACLE 12.1 Financials ImplementationAbdulqayum SattigeriNo ratings yet

- BIG-IP Link Controller ImplementationsDocument80 pagesBIG-IP Link Controller ImplementationsmlaazimaniNo ratings yet

- IBM BigFix WebUI Users GuideDocument40 pagesIBM BigFix WebUI Users GuidePaul BezuidenhoutNo ratings yet

- Aruba Instant 6.2.0.0-3.2 Release Notes PDFDocument24 pagesAruba Instant 6.2.0.0-3.2 Release Notes PDFBoris JhonatanNo ratings yet

- Solaris 10 Handbook PDFDocument198 pagesSolaris 10 Handbook PDFChRamakrishnaNo ratings yet

- OpenScape Business V1 Administrator DocumentationDocument1,407 pagesOpenScape Business V1 Administrator Documentationsorin birou100% (1)

- Oracle® Fusion Middleware Installing and Configuring Oracle Data IntegratorDocument110 pagesOracle® Fusion Middleware Installing and Configuring Oracle Data Integratortranhieu5959No ratings yet

- Sun Fire Midrange Server MaintenanceDocument404 pagesSun Fire Midrange Server Maintenancevignesh17jNo ratings yet

- CIS Apache Tomcat 8 Benchmark v1.1.0 PDFDocument129 pagesCIS Apache Tomcat 8 Benchmark v1.1.0 PDFchankyakutilNo ratings yet

- Deploying Oracle Real Application Clusters (RAC) On Solaris Zone ClustersDocument59 pagesDeploying Oracle Real Application Clusters (RAC) On Solaris Zone ClustersarvindNo ratings yet

- FMW Patching GuideDocument224 pagesFMW Patching GuiderdhanokarNo ratings yet

- Red Hat Enterprise Linux-7-SELinux Users and Administrators Guide-En-USDocument187 pagesRed Hat Enterprise Linux-7-SELinux Users and Administrators Guide-En-USFestilaCatalinGeorgeNo ratings yet

- 00482837-Alarms and Performance Events Reference (V100R007 - 08)Document511 pages00482837-Alarms and Performance Events Reference (V100R007 - 08)RonnieSmgNo ratings yet

- Basic Project Plan SmartSheet SampleDocument2 pagesBasic Project Plan SmartSheet SampleAnonymous b2fvcXSFNo ratings yet

- LLumarSpecsArchGlobalSolar PDFDocument1 pageLLumarSpecsArchGlobalSolar PDFAnonymous b2fvcXSFNo ratings yet

- Wave 2 EbookDocument8 pagesWave 2 EbookAnonymous b2fvcXSFNo ratings yet

- LLumarSpecsArchGlobalSolar PDFDocument1 pageLLumarSpecsArchGlobalSolar PDFAnonymous b2fvcXSFNo ratings yet

- Documento UbntDocument126 pagesDocumento UbntRafa Besalduch MercéNo ratings yet

- DNA Gel Electrophoresis Lab Solves MysteryDocument8 pagesDNA Gel Electrophoresis Lab Solves MysteryAmit KumarNo ratings yet

- Bank NIFTY Components and WeightageDocument2 pagesBank NIFTY Components and WeightageUptrend0% (2)

- Unit-1: Introduction: Question BankDocument12 pagesUnit-1: Introduction: Question BankAmit BharadwajNo ratings yet

- PESO Online Explosives-Returns SystemDocument1 pagePESO Online Explosives-Returns Systemgirinandini0% (1)

- Difference Between Text and Discourse: The Agent FactorDocument4 pagesDifference Between Text and Discourse: The Agent FactorBenjamin Paner100% (1)

- The Berkeley Review: MCAT Chemistry Atomic Theory PracticeDocument37 pagesThe Berkeley Review: MCAT Chemistry Atomic Theory Practicerenjade1516No ratings yet

- AZ-900T00 Microsoft Azure Fundamentals-01Document21 pagesAZ-900T00 Microsoft Azure Fundamentals-01MgminLukaLayNo ratings yet

- Petty Cash Vouchers:: Accountability Accounted ForDocument3 pagesPetty Cash Vouchers:: Accountability Accounted ForCrizhae OconNo ratings yet

- UAPPDocument91 pagesUAPPMassimiliano de StellaNo ratings yet

- Oxford Digital Marketing Programme ProspectusDocument12 pagesOxford Digital Marketing Programme ProspectusLeonard AbellaNo ratings yet

- Mtle - Hema 1Document50 pagesMtle - Hema 1Leogene Earl FranciaNo ratings yet

- HCW22 PDFDocument4 pagesHCW22 PDFJerryPNo ratings yet

- Mercedes BenzDocument56 pagesMercedes BenzRoland Joldis100% (1)

- Data Sheet: Experiment 5: Factors Affecting Reaction RateDocument4 pagesData Sheet: Experiment 5: Factors Affecting Reaction Ratesmuyet lêNo ratings yet

- #### # ## E232 0010 Qba - 0Document9 pages#### # ## E232 0010 Qba - 0MARCONo ratings yet

- Fernandez ArmestoDocument10 pagesFernandez Armestosrodriguezlorenzo3288No ratings yet

- Audit Acq Pay Cycle & InventoryDocument39 pagesAudit Acq Pay Cycle & InventoryVianney Claire RabeNo ratings yet

- Intro To Gas DynamicsDocument8 pagesIntro To Gas DynamicsMSK65No ratings yet

- CMC Ready ReckonerxlsxDocument3 pagesCMC Ready ReckonerxlsxShalaniNo ratings yet

- Assignment 2 - Weather DerivativeDocument8 pagesAssignment 2 - Weather DerivativeBrow SimonNo ratings yet

- 4 Wheel ThunderDocument9 pages4 Wheel ThunderOlga Lucia Zapata SavaresseNo ratings yet

- 2023-Physics-Informed Radial Basis Network (PIRBN) A LocalDocument41 pages2023-Physics-Informed Radial Basis Network (PIRBN) A LocalmaycvcNo ratings yet

- DELcraFT Works CleanEra ProjectDocument31 pagesDELcraFT Works CleanEra Projectenrico_britaiNo ratings yet

- Lecture02 NoteDocument23 pagesLecture02 NoteJibril JundiNo ratings yet

- Surgery Lecture - 01 Asepsis, Antisepsis & OperationDocument60 pagesSurgery Lecture - 01 Asepsis, Antisepsis & OperationChris QueiklinNo ratings yet

- Maverick Brochure SMLDocument16 pagesMaverick Brochure SMLmalaoui44No ratings yet

- Liebert PSP: Quick-Start Guide - 500VA/650VA, 230VDocument2 pagesLiebert PSP: Quick-Start Guide - 500VA/650VA, 230VsinoNo ratings yet

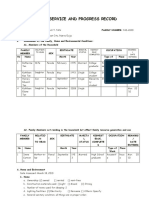
