MATH2114 Lecture Notes

Numerical Methods and Statistics for Engineers

Solution of Nonlinear Equations

Dr Eddie Ly

School of Mathematical & Geospatial Sciences (SMGS)

College of Sciences, Engineering & Health (SEH)

RMIT University, Melbourne, Australia

July 2014

Contents

1 Introduction

2 Bisection Method

2.1 Convergence Criterion

3 Fixed Point Iteration Method

3.1 Convergence Criterion

4 Rate of Convergence

5 Termination of the Iterations

6 Newton's Method

6.1 Convergence and Stopping Criteria

7 Secant Method

7.1 Convergence and Stopping Criteria

8 Remarks on Multiple Roots

9 Self Assessment Exercises

1 Introduction

Since senior high school, you have used the quadratic formula,

$$x = \frac{-b \pm \sqrt{b^2 - 4ac}}{2a},$$

to solve

$$f(x) = ax^2 + bx + c = 0,$$

where $a$, $b$ and $c$ are constants. The values obtained with the quadratic formula are called the roots, or solutions, of the equation $f(x) = 0$. They are the values of $x$ (denoted by $r$ in this document) that make $f(x)$ equal to zero. Thus, we can define a root of an equation as a value $x = r$ for which $f(r) = 0$. For this reason, roots are sometimes called the zeros of the function. If $f(r) = 0$ and $f'(r) \neq 0$, then $r$ is a simple root. For example, for $f(x) = x^2 - 1$, the simple roots are $x = \pm 1$, since $f(\pm 1) = 0$, $f'(x) = 2x$ and $f'(\pm 1) = \pm 2 \neq 0$. Although the quadratic formula is handy for solving such equations, there are many other (nonlinear) functions for which the roots cannot be determined so easily. For these cases, the numerical methods described here provide efficient means to obtain the answers.

The methods for solving nonlinear equations are iterative methods, where a sequence of approximations to the solution is computed. The iteration is terminated once a sufficiently accurate estimate is found. An equation $f(x) = 0$ may have no roots, a finite number of roots, or infinitely many roots. Often for a simple function, the number of roots and their approximate values can be found using a sketch.

EXAMPLE

Determine the approximate values of the roots of $x^2 + e^x = 4$.

solution:

The equation can be written as $e^x = 4 - x^2$, so we sketch the functions $y = e^x$ and $y = 4 - x^2$ on the same axes, and estimate the $x$-coordinates of the points of intersection.

[Figure: sketch of $y = 4 - x^2$ and $y = e^x$ on the same axes, with X from $-2.5$ to $2$ and Y from $0$ to $6$.]


From the sketch, we can see that there are two roots with $r_1 \approx -1.95$ and $r_2 \approx 1.05$. We can verify the estimates as follows:

$$\begin{aligned}
f(x) &= x^2 + e^x - 4, \\
f(-1.95) &= (-1.95)^2 + e^{-1.95} - 4 \approx -0.06, \\
f(1.05) &= (1.05)^2 + e^{1.05} - 4 \approx -0.04 .
\end{aligned}$$
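
The check above can also be done numerically. The following is a minimal Python sketch (the function name `f` simply mirrors the notation in the text) that evaluates the residual at the two estimates read off the sketch.

```python
import math

def f(x):
    """Residual of x^2 + e^x = 4, written as f(x) = x^2 + e^x - 4."""
    return x**2 + math.exp(x) - 4.0

# Estimates read off the sketch; both residuals should be close to zero.
for estimate in (-1.95, 1.05):
    print(f"f({estimate}) = {f(estimate):.4f}")
```

Small residuals only indicate that the estimates are near the roots; they do not by themselves give the size of the error in $x$.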

Although graphical methods are useful for obtaining rough estimates of roots, they are limited by their lack of precision. An alternative approach is trial and error, a technique that consists of guessing a value of $x$ and evaluating whether $f(x)$ is zero. If not, another guess is made, and $f(x)$ is again evaluated to determine whether the new value provides a better estimate of the root. The process is repeated until a guess is obtained that results in $f(x) \approx 0$. Such graphical and trial and error methods are obviously inefficient and inadequate for the requirements of scientific and engineering practice. This course presents alternatives that are also approximate, but employ systematic strategies to home in on the root.

2 Bisection Method

If $f(x)$ is continuous on the interval $a \le x \le b$, and $f(a)$ and $f(b)$ have opposite signs, that is, $f(a)\,f(b) < 0$, then there is at least one root in the interval. Suppose that there is only one root in the interval. If the interval is halved, the half which contains the root can be determined, and this process is continued until the root is known to sufficient accuracy. This method is known as the bisection method, binary chopping, interval halving or Bolzano's method.

[Figure: the bisection method applied to $y = f(x)$, showing the original interval $[a_0, b_0]$ with midpoint $c_0$, the intervals $[a_1, b_1]$, $[a_2, b_2]$, $[a_3, b_3]$ of the first, second and third iterations with midpoints $c_1$, $c_2$, $c_3$, and the root $x = r$.]


As shown in the illustration, the original interval is denoted by $[a_0, b_0]$, the subsequent intervals by $[a_1, b_1]$, $[a_2, b_2]$, $[a_3, b_3]$, and so on. Suppose that we know the root lies in the interval $[a, b]$. We compute the midpoint of the interval, $c = \tfrac{1}{2}(a + b)$, and if $f(b)\,f(c) < 0$, then the root $r$ lies in the interval defined by $c \le x \le b$, so $a$ is replaced by $c$. Otherwise, the root $r$ is in the interval defined by $a \le x \le c$, and $b$ is replaced by $c$. We now have an interval which is half as long and still contains the root. This bisection of the interval is repeated until we can estimate the root to the required accuracy.

At each iteration, we take $x = c$ as the current estimate of the root. Suppose that an estimate of the root is required such that

$$|\,\text{Error}\,| \le \varepsilon,$$

where $\varepsilon$ is a given tolerance. It can be seen from the illustration that $r$ cannot be further from $c$ than the distance of $b$ from $c$, that is,

$$|r - c| \le b - c .$$

Hence, the iteration is terminated when

$$b - c \le \varepsilon ; \qquad 0 < \varepsilon \ll 1 .$$
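
The update rule and stopping test above can be sketched in Python as follows. This is an illustrative sketch, not a definitive implementation: the function name `bisect` and its argument names are assumptions, and the test function is $f(x) = x^2 + e^x - 4$ from the Introduction, bracketed here by the assumed interval $[1, 1.1]$.

```python
import math

def bisect(f, a, b, tol):
    """Bisection using the update rule described in the text.

    Assumes f is continuous on [a, b] with f(a) * f(b) < 0.
    Returns the midpoint c of the current interval once b - c <= tol.
    """
    if f(a) * f(b) >= 0:
        raise ValueError("f(a) and f(b) must have opposite signs")
    fb = f(b)
    while True:
        c = 0.5 * (a + b)        # midpoint of the current interval
        if b - c <= tol:         # |r - c| <= b - c <= tol
            return c
        fc = f(c)
        if fc == 0.0:            # landed exactly on the root
            return c
        if fb * fc < 0:
            a = c                # root lies in [c, b]
        else:
            b, fb = c, fc        # root lies in [a, c]

def f(x):
    return x**2 + math.exp(x) - 4.0

root = bisect(f, 1.0, 1.1, 1e-6)
print(f"root = {root:.6f}, f(root) = {f(root):.2e}")
```

Storing `fb` avoids re-evaluating $f(b)$ when only $a$ changes, which matters when $f$ is expensive to evaluate.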

EXAMPLE

Use the bisection method to find the root of $x - 1 = e^{-x}$ that lies in the interval $[1, 1.4]$ to a tolerance of 0.02.

solution:

Let $f(x) = x - 1 - e^{-x}$, $a_0 = 1$, $b_0 = 1.4$ and $\varepsilon = 0.02$. Thus,

$$\begin{aligned}
c_0 &= \tfrac{1}{2}(a_0 + b_0) = 1.2, \\
f(c_0) &= f(1.2) = 1.2 - 1 - e^{-1.2} \approx -0.10, \\
f(b_0) &= f(1.4) = 1.4 - 1 - e^{-1.4} \approx 0.15 .
\end{aligned}$$


Since $f(b_0)\,f(c_0) < 0$, the next interval that contains the root is $[c_0, b_0]$, which is half as long as the original interval $[a_0, b_0]$. For the next iteration, we set $a_1 = c_0$ and $b_1 = b_0$, and compute $c_1$, $f(c_1)$ and $f(b_1)$ (which is the same as $f(b_0)$ since $b_1 = b_0$):

$$\begin{aligned}
a_1 &= c_0 = 1.2, \\
b_1 &= b_0 = 1.4, \\
c_1 &= \tfrac{1}{2}(a_1 + b_1) = 1.3, \\
f(c_1) &= f(1.3) = 1.3 - 1 - e^{-1.3} \approx 0.027, \\
f(b_1) &= f(b_0) \approx 0.15 .
\end{aligned}$$

Since $f(b_1)\,f(c_1) > 0$, the root must be in the interval $[a_1, c_1]$. So, for the next iteration, we set $a_2 = a_1$, $b_2 = c_1$ and $f(b_2) = f(c_1)$. The bisection of the interval is repeated until we can estimate the root to the required accuracy. Putting the computed results in a table gives:

| a     | b   | c      | b - c        | f(b)  | f(c)    | f(b) f(c) | action                 |
|-------|-----|--------|--------------|-------|---------|-----------|------------------------|
| 1     | 1.4 | 1.2    | 0.2 (> ε)    | 0.15  | -0.10   | < 0       | Set a = c              |
| 1.2   | 1.4 | 1.3    | 0.1 (> ε)    | 0.15  | 0.027   | > 0       | Set b = c, f(b) = f(c) |
| 1.2   | 1.3 | 1.25   | 0.05 (> ε)   | 0.027 | -0.037  | < 0       | Set a = c              |
| 1.25  | 1.3 | 1.275  | 0.025 (> ε)  | 0.027 | -0.0044 | < 0       | Set a = c              |
| 1.275 | 1.3 | 1.2875 | 0.0125 (< ε) |       |         |           |                        |

The iteration is terminated, because $b - c = 0.0125$ is smaller than the tolerance of 0.02. The estimate of the root is $r \approx 1.2875$, and it is known that $1.275 \le r \le 1.3$.

2.1 Convergence Criterion

Let the original interval be designated by $(a_0, b_0)$ and succeeding ones by $(a_1, b_1)$, $(a_2, b_2)$, etc., or $(a_n, b_n)$ for $n = 1, 2, 3, \ldots$. Then, the lengths of the intervals are

$$\begin{aligned}
b_1 - a_1 &= \tfrac{1}{2}(b_0 - a_0), \\
b_2 - a_2 &= \tfrac{1}{2}(b_1 - a_1) = \frac{1}{2^2}(b_0 - a_0), \\
b_3 - a_3 &= \tfrac{1}{2}(b_2 - a_2) = \frac{1}{2^3}(b_0 - a_0), \\
&\;\;\vdots \\
b_n - a_n &= \frac{1}{2^n}(b_0 - a_0).
\end{aligned}$$

Since $c$ is the midpoint of the interval $[a, b]$, we have $c_n = \tfrac{1}{2}(a_n + b_n)$, and

$$|r - c_n| \le b_n - c_n = \tfrac{1}{2}(b_n - a_n) = \frac{1}{2^{n+1}}(b_0 - a_0).$$

As $n \to \infty$, $\frac{1}{2^{n+1}}(b_0 - a_0) \to 0$, thus $|r - c_n| \to 0$ as well. Hence, $c_n$ approaches the root $r$ as $n \to \infty$. That is, the bisection method converges for any initial interval containing the root. Furthermore, for an initial interval $[a_0, b_0]$ and a given tolerance $\varepsilon$, we can estimate the required number of iterations $n$ by noticing that $|r - c_n| \le \varepsilon$ if

$$\frac{1}{2^{n+1}}(b_0 - a_0) \le \varepsilon \quad\Longrightarrow\quad n \ge \frac{\ln\!\left(\dfrac{b_0 - a_0}{\varepsilon}\right)}{\ln 2} - 1 .$$

Note that the number of iterations is independent of the function $f(x)$.
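
The bound on $n$ is easy to evaluate directly. The short Python sketch below (the helper name `bisection_iterations` is an assumption made for illustration) returns the smallest integer $n$ satisfying the inequality, and applies it to the interval $[1, 1.4]$ with tolerance 0.02 from the earlier example.

```python
import math

def bisection_iterations(a0, b0, tol):
    """Smallest integer n with (b0 - a0) / 2**(n + 1) <= tol."""
    n = math.log((b0 - a0) / tol) / math.log(2.0) - 1.0
    return max(0, math.ceil(n))

# Interval [1, 1.4] with tolerance 0.02, as in the earlier example:
# the midpoint c_4 = 1.2875 was the first one to meet the tolerance.
print(bisection_iterations(1.0, 1.4, 0.02))
```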

EXAMPLE

How many iterations of the bisection method are required to find the solution of $x - 1 - e^{-x} = 0$ to six decimal places if the initial interval $[1, 1.5]$ is used?

solution:

A tolerance of $\varepsilon = 5 \times 10^{-7}$ (or smaller) is required to ensure six decimal places of accuracy. Here, $a_0 = 1$ and $b_0 = 1.5$, so

$$n \ge \frac{\ln\!\left(\dfrac{1.5 - 1}{5 \times 10^{-7}}\right)}{\ln 2} - 1 \approx 18.9 .$$

At least 19 iterations are required.


3 Fixed Point Iteration Method

Suppose that $x = g(x)$ is a rearrangement of $f(x) = 0$. Then, $f(r) = 0$ implies that $r = g(r)$, and the root $r$ is called a fixed point of the function $g(x)$. A fixed point can sometimes be found using the fixed point iteration method (also known as simple iteration, method of successive substitution and one-point iteration),

$$x_{n+1} = g(x_n) \quad \text{for } n = 0, 1, 2, \ldots,$$

given an initial estimate $x_0$ of the root $r$. The above relation provides a formula to predict a new value of $x$ as a function of an old value of $x$. Examples of convergent and divergent iteration are shown below:

[Figure: two panels, "Convergent fixed point iteration" and "Divergent fixed point iteration". Each panel shows the curves $y = g(x)$ and $y = x$ intersecting at the fixed point $r$, with the iterates $x_0$, $x_1 = g(x_0)$ and $x_2 = g(x_1)$ marked on the X axis.]


3.1 Convergence Criterion

Recall that the error is the difference between the true value and the approximate (estimated) value. If $r$ represents the exact root of $f(x)$ and $x_{n+1}$ represents the most recent estimate of $r$, then the error of $x_{n+1}$ is

$$e_{n+1} = r - x_{n+1} .$$

Since $r = g(r)$ and $x_{n+1} = g(x_n)$, we have

$$\begin{aligned}
e_{n+1} &= r - x_{n+1} \\
&= g(r) - g(x_n) \\
&= g'(\xi_n)\,(r - x_n) \quad \text{for } \xi_n \in (r, x_n) \quad \text{(by the Mean Value Theorem)} \\
&= g'(\xi_n)\, e_n .
\end{aligned}$$

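Suppose that $|g'(x)| \le K$ for $|r - x| < \delta$. Then,

$$\begin{aligned}
|e_{n+1}| &= |g'(\xi_n)\, e_n| = |g'(\xi_n)|\,|e_n| \\
&\le K\,|e_n| && \text{if } |e_n| < \delta \\
&\le K\,\big(K\,|e_{n-1}|\big) = K^2\,|e_{n-1}| && \text{if } |e_{n-1}| < \delta \\
&\le K^2\,\big(K\,|e_{n-2}|\big) = K^3\,|e_{n-2}| && \text{if } |e_{n-2}| < \delta \\
&\;\;\vdots \\
&\le K^{n+1}\,|e_0| && \text{if } |e_0| < \delta .
\end{aligned}$$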

If $K < 1$, $K^{n+1} \to 0$ and $K^{n+1}|e_0| \to 0$ as $n \to \infty$ for any value of $e_0$. Hence, $|e_{n+1}| = |r - x_{n+1}| \to 0$. Therefore, $x_{n+1}$ approaches the exact root $r$ as $n \to \infty$. As a result, the fixed point iteration $x_{n+1} = g(x_n)$ converges to $r$ for any initial value $x_0 \in (r - \delta, r + \delta)$ if

$$|g'(x)| \le K < 1 \quad \text{for all } x \in (r - \delta, r + \delta).$$

Note that the smaller the value of $K$, the faster the convergence. It can be shown that the fixed point iteration method will not converge if $|g'(r)| > 1$.

EXAMPLE

Two rearrangements of the equation $e^{-x} = \sin x$ are

$$x = -\log(\sin x) \quad \text{and} \quad x = \arcsin\!\left(e^{-x}\right),$$

where $\log$ denotes the natural logarithm. Determine if the fixed point iteration method will converge to the first positive solution $r \approx 0.6$ for these rearrangements. If the iteration does converge, compute $x_3$ using an initial value of 0.6.


solution:

If $g(x) = -\log(\sin x)$ then

$$g'(x) = \frac{d}{dx}\big[-\log(\sin x)\big] = -\frac{\cos x}{\sin x} = -\cot x,$$

giving $g'(r) \approx g'(0.6) \approx -1.4617$. Since $|g'(r)| > 1$, the fixed point iteration method will not converge.

If $g(x) = \arcsin\!\left(e^{-x}\right)$, then

$$g'(x) = \frac{d}{dx}\arcsin\!\left(e^{-x}\right) = \frac{-e^{-x}}{\sqrt{1 - e^{-2x}}},$$

so $g'(r) \approx g'(0.6) \approx -0.6565$. As $|g'(x)| < 1$ near the root, the fixed point iteration method will converge.

Using the second rearrangement of the equation, the iteration $x_{n+1} = \arcsin\!\left(e^{-x_n}\right)$ with starting value $x_0 = 0.6$ gives

$$\begin{aligned}
x_1 &= \arcsin\!\left(e^{-x_0}\right) = 0.580942, \\
x_2 &= \arcsin\!\left(e^{-x_1}\right) = 0.593627, \\
x_3 &= \arcsin\!\left(e^{-x_2}\right) = 0.585145 .
\end{aligned}$$

Note that the convergence is rather slow, as expected from the large value of $|g'(x)|$.
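
The iterates in this example can be reproduced with a few lines of Python. This is a minimal sketch: the helper name `fixed_point` is an assumption made for illustration, and it simply applies $x_{n+1} = g(x_n)$ a fixed number of times without any convergence test.

```python
import math

def fixed_point(g, x0, n_steps):
    """Return [x0, x1, ..., x_n] with x_{k+1} = g(x_k)."""
    xs = [x0]
    for _ in range(n_steps):
        xs.append(g(xs[-1]))
    return xs

def g(x):
    """Second rearrangement of e^{-x} = sin x from the example."""
    return math.asin(math.exp(-x))

xs = fixed_point(g, 0.6, 3)
for n, x in enumerate(xs):
    print(f"x_{n} = {x:.6f}")
```

The iterates alternate on either side of the root, which is consistent with $g'(r)$ being negative.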

4 Rate of Convergence

Suppose $x_0, x_1, x_2, \ldots$ is a sequence of approximations to a root $r$. If $x_n \to r$ as $n \to \infty$, we say the sequence is convergent. The rate of convergence is important. If

$$\lim_{n \to \infty} \frac{|r - x_{n+1}|}{|r - x_n|^p} = \lim_{n \to \infty} \frac{|e_{n+1}|}{|e_n|^p} = K$$

for positive constants $K$ and $p$, we say that $x_n$ converges to $r$ with order of convergence $p$. The larger the value of $p$ and the smaller the value of $K$, the faster the convergence. If $p = 1$ and $K < 1$, the sequence is said to converge linearly, and if $p = 2$, the convergence is quadratic.


For the fixed point iteration method, $x_{n+1} = g(x_n)$, it was shown that

$$e_{n+1} = g'(c_n)\, e_n \quad \text{where } c_n \in (x_n, r)$$

is the intermediate point given by the Mean Value Theorem. Thus,

$$\lim_{n \to \infty} \frac{|e_{n+1}|}{|e_n|} = \lim_{n \to \infty} |g'(c_n)| = |g'(r)|,$$

so the iteration will converge linearly if $K = |g'(r)| < 1$. If $g'(r) = 0$, the order of convergence will be two or higher. The bisection method converges linearly with $K = 1/2$.
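
The limit $|e_{n+1}|/|e_n| \to |g'(r)|$ can be observed numerically. The sketch below is illustrative only: it reuses the rearrangement $g(x) = \arcsin(e^{-x})$ from the earlier example, and it assumes that 200 iterations are enough for the iterate to serve as a reference value for $r$.

```python
import math

def g(x):
    return math.asin(math.exp(-x))

def g_prime(x):
    return -math.exp(-x) / math.sqrt(1.0 - math.exp(-2.0 * x))

# Reference root: iterate long enough that x no longer changes noticeably.
r = 0.6
for _ in range(200):
    r = g(r)

# Error ratios |e_{n+1}| / |e_n| for the first ten iterations from x0 = 0.6.
x = 0.6
ratios = []
for _ in range(10):
    x_next = g(x)
    ratios.append(abs(r - x_next) / abs(r - x))
    x = x_next

print(f"r ~ {r:.6f}, |g'(r)| ~ {abs(g_prime(r)):.4f}")
print("last ratio:", round(ratios[-1], 4))
```

The ratios settle near $|g'(r)|$, which is below one, so the observed convergence is linear ($p = 1$).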

5 Termination of the Iterations

We want to terminate the iteration process when the estimate $x_n$ is sufficiently close to the actual root $r$, that is, when $|r - x_n| \le \varepsilon$ for some prescribed tolerance $\varepsilon$. There are several criteria that are employed in practice to terminate such an iteration process. One criterion is to terminate when $|f(x_n)| \le \varepsilon$ (since $f(x_n) \to 0$ when $x_n \to r$), but this does not ensure that $|r - x_n| \le \varepsilon$. Another criterion is to terminate when $|x_{n+1} - x_n| \le \varepsilon$.

6 Newton's Method

One of the major achievements in mathematics was the proof that general polynomial equations of degree greater than four cannot be solved by means of a formula in radicals (proved by the Norwegian mathematician Niels Henrik Abel (1802-1829)).

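[Figure: Newton's method applied to $y = f(x)$. The tangent at $(x_0, f(x_0))$, with slope $f'(x_0)$, cuts the x-axis at $x_1$; the tangent at $(x_1, f(x_1))$, with slope $f'(x_1)$, cuts the x-axis at $x_2$, which lies closer to the root $r$.]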

Newton's method can be derived on the basis of the geometrical interpretation of the above illustration. Suppose $f(x)$ is differentiable, and has a root at an unknown point $x = r$. If we have a point $x_0$ close to $r$, we could obtain a better approximation to $r$ by drawing a tangent line through the point $\big(x_0, f(x_0)\big)$. This tangent line, given by

$$f'(x_0) \equiv \frac{\text{rise}}{\text{run}} = \frac{f(x_0) - 0}{x_0 - x_1},$$

would cut the x-axis at $x = x_1$, which will be our new estimate of $r$. Solving for $x_1$ gives

$$x_1 = x_0 - \frac{f(x_0)}{f'(x_0)} \quad \text{provided } f'(x_0) \neq 0 .$$

Repeat the procedure at $\big(x_1, f(x_1)\big)$, and let $x_2$ be the x-intercept of the second tangent line. From

$$f'(x_1) = \frac{f(x_1) - 0}{x_1 - x_2},$$

we obtain

$$x_2 = x_1 - \frac{f(x_1)}{f'(x_1)} \quad \text{provided } f'(x_1) \neq 0 .$$

Continuing in this manner, we determine $x_{n+1}$ from

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)} \quad \text{for } n = 0, 1, 2, \ldots \text{ and provided } f'(x_n) \neq 0 .$$

Repeated use of the above formula yields a sequence $x_1, x_2, x_3, \ldots$ of approximations that converges to the root $r$; that is, $x_n \to r$ as $n \to \infty$. This method is called Newton's method or the Newton-Raphson method. A potential problem in implementing this method is the evaluation of the derivative. Although this is not inconvenient for polynomials and many other functions, there are certain functions whose derivatives may be impossible, extremely difficult, or computationally expensive to evaluate. For these cases, the derivative can be approximated by a backward finite divided difference approximation,

$$f'(x_n) \approx \frac{f(x_n) - f(x_{n-1})}{x_n - x_{n-1}} .$$
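
The Newton iteration can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the function name `newton`, the default tolerance and the iteration cap are choices made here, not part of the notes, and the test problem is $f(x) = x^2 + e^x - 4$ from the Introduction with the starting value 1.05 read off the sketch there.

```python
import math

def newton(f, f_prime, x0, tol=1e-10, max_iter=50):
    """Newton's method: x_{n+1} = x_n - f(x_n) / f'(x_n).

    Stops when |x_{n+1} - x_n| <= tol, or raises if the derivative
    vanishes or max_iter iterations are used up.
    """
    x = x0
    for _ in range(max_iter):
        slope = f_prime(x)
        if slope == 0.0:
            raise ZeroDivisionError("f'(x_n) = 0, Newton step undefined")
        x_new = x - f(x) / slope
        if abs(x_new - x) <= tol:
            return x_new
        x = x_new
    raise RuntimeError("no convergence within max_iter iterations")

def f(x):
    return x**2 + math.exp(x) - 4.0

def f_prime(x):
    return 2.0 * x + math.exp(x)

root = newton(f, f_prime, 1.05)
print(f"root = {root:.8f}, f(root) = {f(root):.2e}")
```

The explicit check on `slope` mirrors the proviso $f'(x_n) \neq 0$ in the formula.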

EXAMPLE

Perform two iterations of Newton's method with $x_0 = 1.2$ to find an estimate of the root of $x - 1 = e^{-x}$.

solution:

Let $f(x) = x - 1 - e^{-x}$. Then, $f'(x) = 1 + e^{-x}$, and

$$x_{n+1} = x_n - \frac{x_n - 1 - e^{-x_n}}{1 + e^{-x_n}} \quad \text{for } n = 0, 1, 2, \ldots .$$

Newton's iterations:

$$\begin{aligned}
x_1 &= x_0 - \frac{x_0 - 1 - e^{-x_0}}{1 + e^{-x_0}} = 1.2 - \frac{1.2 - 1 - e^{-1.2}}{1 + e^{-1.2}} = 1.277770, \\
x_2 &= x_1 - \frac{x_1 - 1 - e^{-x_1}}{1 + e^{-x_1}} = 1.277770 - \frac{1.277770 - 1 - e^{-1.277770}}{1 + e^{-1.277770}} = 1.278465 .
\end{aligned}$$

Hence, $r \approx x_2 = 1.2785$.

6.1 Convergence and Stopping Criteria

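Newton's method converges quadratically ($p = 2$) to a simple root, since

$$\begin{aligned}
\lim_{n \to \infty} \frac{|e_{n+1}|}{|e_n|^p} &= \lim_{n \to \infty} \frac{|r - x_{n+1}|}{|r - x_n|^2} \\
&= \lim_{n \to \infty} \left| \frac{f''(c_n)}{2 f'(x_n)} \right| \\
&= \frac{|f''(r)|}{2\,|f'(r)|} \quad (\text{note } x_n \to r \text{ as } n \to \infty) \\
&= K .
\end{aligned}$$

But, for a multiple root, $f'(r) = 0$ and the convergence rate reduces to linear ($p = 1$).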

The method is terminated when

$$|x_{n+1} - x_n| \le \varepsilon,$$

ensuring $|r - x_n| \le \varepsilon$ (approximately) for a simple root. Of course, $x_{n+1}$ will have an even smaller error.


EXAMPLE

Find the solution of $x^3 = 2$ to a tolerance of 0.0005 using Newton's method with $x_0 = 1.2$.

solution:

Let $f(x) = x^3 - 2$. Then $f'(x) = 3x^2$, and

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)} = x_n - \frac{x_n^3 - 2}{3x_n^2} \quad \text{for } n = 0, 1, 2, \ldots .$$

For $n = 0$ (first iteration):

$$x_1 = x_0 - \frac{x_0^3 - 2}{3x_0^2} = 1.2 - \frac{(1.2)^3 - 2}{3\,(1.2)^2} = 1.262963 .$$

Since $|x_1 - x_0| = 0.062963 > 0.0005$, we continue with the next iteration ($n = 1$):

$$x_2 = x_1 - \frac{x_1^3 - 2}{3x_1^2} = 1.262963 - \frac{(1.262963)^3 - 2}{3\,(1.262963)^2} = 1.259928 .$$

Again, $|x_2 - x_1| = 0.003035 > 0.0005$, so we continue to the next iteration ($n = 2$):

$$x_3 = x_2 - \frac{x_2^3 - 2}{3x_2^2} = 1.259928 - \frac{(1.259928)^3 - 2}{3\,(1.259928)^2} = 1.259921 .$$

Now, $|x_3 - x_2| = 7.321 \times 10^{-6} < 0.0005$, so the iteration process is terminated, and $x_3 = 1.259921$ is accepted as an approximation to $r$. That is, $r \approx 1.260$. Note that the exact solution is $\sqrt[3]{2} \approx 1.25992105$, so the error in the approximation $1.259921$ is about $5 \times 10^{-8}$, which is much smaller than $7.321 \times 10^{-6}$.


7 Secant Method

In the secant method, an improved estimate $x_2$ of the solution of $f(x) = 0$ is found from two estimates $x_0$ and $x_1$ by determining the point where the chord through $(x_0, f(x_0))$ and $(x_1, f(x_1))$ cuts the x-axis (see the illustration below). Note that $x_0$ and $x_1$ do not have to bracket the solution.

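[Figure: the secant method applied to $y = f(x)$. The chord through $(x_0, f(x_0))$ and $(x_1, f(x_1))$ cuts the x-axis at $x_2$, which lies closer to the root $r$.]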

Equating the two expressions for the slope of the chord (for the intervals $[x_1, x_0]$ and $[x_1, x_2]$):

$$\text{slope} \equiv \frac{\text{rise}}{\text{run}} = \frac{f(x_0) - f(x_1)}{x_0 - x_1} = \frac{f(x_2) - f(x_1)}{x_2 - x_1} .$$

Solving the above relation for $x_2$, and setting $f(x_2) = 0$ because the chord has height zero where it cuts the x-axis, gives

$$x_2 - x_1 = \frac{0 - f(x_1)}{f(x_0) - f(x_1)}\,(x_0 - x_1) \quad\Longrightarrow\quad x_2 = x_1 - \frac{f(x_1)\,(x_1 - x_0)}{f(x_1) - f(x_0)} .$$

The next approximation $x_3$ is computed in the same manner from $x_1$ and $x_2$, and so on. The secant method can be formulated as

$$x_{n+1} = x_n - \frac{f(x_n)\,(x_n - x_{n-1})}{f(x_n) - f(x_{n-1})} \quad \text{for } n = 1, 2, 3, \ldots .$$
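
The secant formula can be sketched in Python as follows. This is an illustrative sketch: the function name `secant`, the default tolerance and the iteration cap are assumptions, and the test problem is $f(x) = x^2 + e^x - 4$ from the Introduction with the assumed starting values 1.0 and 1.1.

```python
import math

def secant(f, x0, x1, tol=1e-10, max_iter=50):
    """Secant method: x_{n+1} = x_n - f(x_n)(x_n - x_{n-1}) / (f(x_n) - f(x_{n-1})).

    Stops when |x_{n+1} - x_n| <= tol. Only one new evaluation of f
    is needed per iteration, and no derivative is required.
    """
    f0, f1 = f(x0), f(x1)
    for _ in range(max_iter):
        if f1 == f0:
            raise ZeroDivisionError("f(x_n) = f(x_{n-1}), secant step undefined")
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        if abs(x2 - x1) <= tol:
            return x2
        x0, f0 = x1, f1          # shift the pair of estimates forward
        x1, f1 = x2, f(x2)
    raise RuntimeError("no convergence within max_iter iterations")

def f(x):
    return x**2 + math.exp(x) - 4.0

root = secant(f, 1.0, 1.1)
print(f"root = {root:.8f}, f(root) = {f(root):.2e}")
```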

7.1 Convergence and Stopping Criteria

For some problems, the secant method may not converge for some choices of $x_0$ and $x_1$. However, convergence is guaranteed if $x_0$ and $x_1$ are sufficiently close to $r$. The order of convergence of the secant method is

$$p = \frac{1 + \sqrt{5}}{2} \approx 1.618 .$$

Consequently, the secant method can be expected to converge much faster than the bisection method ($p = 1$) or the fixed point iteration method ($p = 1$), but slower than Newton's method ($p = 2$). For a simple root with $x_n$ close to $r$, we have $r - x_n \approx x_{n+1} - x_n$. The secant method is therefore terminated when

$$|x_{n+1} - x_n| \le \varepsilon,$$

which will ensure that $|r - x_n| \le \varepsilon$ (approximately) for a simple root.

EXAMPLE

Perform two iterations of the secant method with $x_0 = 1.3$ and $x_1 = 1.1$ to find an estimate of the root of $x - 1 = e^{-x}$.

solution:

Let $f(x) = x - 1 - e^{-x}$. Then,

$$x_2 = x_1 - \frac{f(x_1)\,(x_1 - x_0)}{f(x_1) - f(x_0)} = 1.1 - \frac{f(1.1)\,(1.1 - 1.3)}{f(1.1) - f(1.3)} = 1.278898,$$

and

$$x_3 = x_2 - \frac{f(x_2)\,(x_2 - x_1)}{f(x_2) - f(x_1)} = 1.278898 - \frac{f(1.278898)\,(1.278898 - 1.1)}{f(1.278898) - f(1.1)} = 1.278473 .$$

After two iterations, the estimate is $r \approx x_3 = 1.278$.

8 Remarks on Multiple Roots

A multiple root corresponds to a point where a function is tangent to the x-axis. For example, a double root results from

$$f(x) = (x - 2)(x - 1)(x - 1),$$

or multiplying terms, $f(x) = x^3 - 4x^2 + 5x - 2$. The equation has a double root, because one value of $x$ makes two terms in $f(x)$ equal to zero. Graphically, this corresponds to the curve touching the x-axis tangentially at the double root (the function touches the axis, but does not cross it at the root). A triple root corresponds to the case where one $x$ value makes three terms in an equation equal to zero, as in

$$f(x) = (x - 2)(x - 1)(x - 1)(x - 1),$$

or multiplying terms, $f(x) = x^4 - 5x^3 + 9x^2 - 7x + 2$. Graphically, this function is tangent to the axis at the root, but in this case the axis is crossed. In general, the curve crosses the axis at roots of odd multiplicity, whereas it does not at roots of even multiplicity. For example, at a quadruple root (four repeated roots) the curve does not cross the axis.

Multiple roots pose some difficulties for many of the numerical methods presented in this document:

(1) The fact that the function does not change sign at even multiple roots precludes the use of the reliable bracketing methods such as the bisection method. Therefore, you are limited to open methods such as the fixed point iteration method, which may diverge.

(2) Another issue is related to the fact that not only $f(x)$, but also $f'(x)$ goes to zero at the root. This poses problems for both Newton's method and the secant method, which both contain the derivative (or its estimate) in the denominator of their respective formulae. This could result in division by zero when the solution converges very close to the root.

(3) It can be demonstrated that Newton's method and the secant method are linearly, rather than quadratically, convergent for multiple roots. However, the formula for Newton's method can be slightly modified in the following manner to return the method to its quadratic convergence,

$$x_{n+1} = x_n - m\,\frac{f(x_n)}{f'(x_n)},$$

where $m$ is the multiplicity of the root. That is, $m = 2$ for a double root, $m = 3$ for a triple root, etc. Of course, this may be an unsatisfactory alternative, because it hinges on foreknowledge of the multiplicity of the root. Another alternative is to define a new function $u(x)$ as the ratio of the function to its derivative,

$$u(x) = \frac{f(x)}{f'(x)} .$$

It can be shown that this function has roots at all the same locations as the original function. Therefore, this function can be inserted into Newton's method to develop an alternative form,

$$x_{n+1} = x_n - \frac{u(x_n)}{u'(x_n)} .$$
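
The effect of the multiplicity factor $m$ in remark (3) can be seen in a short experiment. The sketch below is illustrative only: the helper name `newton_steps` and the starting value $x_0 = 0$ are assumptions, and the test function is the double-root example $f(x) = (x - 2)(x - 1)^2$ from the text, whose double root is at $x = 1$.

```python
def newton_steps(f, f_prime, x0, n_steps, m=1):
    """Apply n_steps of x_{n+1} = x_n - m * f(x_n) / f'(x_n).

    m = 1 gives the standard Newton iteration; m equal to the
    multiplicity of the root gives the modified iteration.
    """
    x = x0
    for _ in range(n_steps):
        slope = f_prime(x)
        if slope == 0.0:
            break  # derivative vanished, e.g. exactly at a multiple root
        x = x - m * f(x) / slope
    return x

def f(x):
    return (x - 2.0) * (x - 1.0) ** 2   # double root at x = 1

def f_prime(x):
    return 3.0 * x**2 - 8.0 * x + 5.0   # derivative of x^3 - 4x^2 + 5x - 2

standard = newton_steps(f, f_prime, 0.0, 4)        # linear convergence
modified = newton_steps(f, f_prime, 0.0, 4, m=2)   # quadratic convergence

print(f"standard Newton after 4 steps: error = {abs(standard - 1.0):.2e}")
print(f"modified Newton after 4 steps: error = {abs(modified - 1.0):.2e}")
```

After the same number of steps the modified iteration is far closer to the double root, at the cost of needing to know $m$ in advance.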


9 Self Assessment Exercises

[1] Beginning with the interval $[0, \pi/2]$, carry out three iterations of the bisection method to find an estimate of the root of $5x = 4 + \sin x$.

[2] Use the bisection method with a tolerance of $1/20$ to find the solution of $x = 2 \sin x$ that lies in the interval $x \in [1, 2]$.

[3] Determine the error bound for the estimate of the solution after five iterations of the bisection method if the initial interval $[-2, -1.6]$ is used.

[4] Estimate the number of iterations required by the bisection method to find the root of $x - 2 \sin x = 0$ that lies between $x = 1$ and $x = 2$ for a tolerance of $10^{-6}$.

[5] Show that the iteration $x_{n+1} = 2 \sin x_n$ will converge to the first positive root ($r \approx 1.9$) of $x - 2 \sin x = 0$. Using $x_0 = 1.9$, compute $x_3$.

[6] Two rearrangements of the equation,

$$e^{-x} = 2 \cos x,$$

are $x = -\ln(2 \cos x)$ and $x = \arccos\!\left(\tfrac{1}{2} e^{-x}\right)$. Is either of these suitable for finding the root $r \approx 1.5$ using a fixed point iteration method?

[7] Show that the bisection method converges linearly with K = 1/2.

[8] Using $x_0 = 1.5$, perform two iterations of Newton's method to find an estimate of the root of $x = 2 \sin x$.

[9] Estimate the negative solution of $e^{x/2} = 2 - x^2$ using Newton's method with $x_0 = -1.2$ and a tolerance of $10^{-4}$.

[10] Using the secant method with $x_0 = 1$ and $x_1 = 2$, find the approximation $x_3$ to the positive root of $x = 2 \sin x$.

[11] Compute the negative solution of $e^{x/2} = 2 - x^2$ to three decimal place accuracy (use $\varepsilon = 0.0005$) using the secant method with $x_0 = -1.2$ and $x_1 = -1.3$.

[12] Suppose we wish to solve the nonlinear equation, $x = 0.8 + 0.2 \sin x$.

(a) Beginning with the interval $[0, \pi/2]$, perform three iterations of the bisection method.

(b) How many iterations of the bisection method are required to estimate the solution to within $10^{-4}$?

(c) Use Newton's method with $x_0 = \pi/4$ to estimate $x_1$ and $x_2$.

(d) What is the approximate size of the error of $x_2$, obtained from Newton's method, as an estimate of the solution of the above equation?

(e) Taking $x_0 = 0$ and $x_1 = \pi/2$, compute $x_2$ and $x_3$ using the secant method.

[13] Suppose we wish to solve the nonlinear equation,

$$f(x) = x - e^{x-1} = 0 .$$

(a) Use Newton's method to find improved approximations $x_1$, $x_2$ and $x_3$ from an initial approximation $x_0 = 2$.

(b) Describe the observed rate of convergence of Newton's method given that the exact solution is $x = 1$. What do the results suggest about the nature of the solution at $x = 1$?

[14] Given $f(x) = -2x^6 - 1.6x^4 + 12x + 1$, use the bisection method to determine the maximum of this function. Employ an initial interval of $[0, 1]$, and perform iterations until the error is less than 0.005.

[15] The velocity $v$ of a falling parachutist is given by

$$v = \frac{g m}{c_d}\left(1 - e^{-c_d t/m}\right),$$

where $g = 9.8\ \text{m/s}^2$. For a parachutist with a drag coefficient $c_d = 15$ kg/s, compute the mass $m$ so that the velocity is 35 m/s at time $t = 9$ s. Use the bisection method to find $m$ to three decimal places of accuracy.

You might also like

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (895)

- Finalexam 5e PracticeDocument21 pagesFinalexam 5e Practiceodette_7thNo ratings yet

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- Polynomials VideosDocument14 pagesPolynomials VideosThat One Lazy CatNo ratings yet

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- 978-0!00!820587-4 Edexcel International GCSE Maths Second Edition - Student BookDocument18 pages978-0!00!820587-4 Edexcel International GCSE Maths Second Edition - Student Booktella idrisNo ratings yet

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- Practice Questions: Class Ix: Chapter - 2 PolynomialsDocument3 pagesPractice Questions: Class Ix: Chapter - 2 Polynomialsramyaanand73No ratings yet

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- Second Order Differential Equations - Dynamics - 2020Document13 pagesSecond Order Differential Equations - Dynamics - 2020rory mcelhinneyNo ratings yet

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- Lecture Week 13a (Supplementary) : - Constrained OptimisationDocument27 pagesLecture Week 13a (Supplementary) : - Constrained OptimisationdanNo ratings yet

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (400)

- Diophantine Equations HandoutDocument2 pagesDiophantine Equations Handoutdb7894No ratings yet

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- 5ACh12 (Coordinate Treatment of Simple Locus Problems)Document45 pages5ACh12 (Coordinate Treatment of Simple Locus Problems)api-19856023No ratings yet

- 1 s2.0 S1574013721000186 MainDocument13 pages1 s2.0 S1574013721000186 MainThanmai MuvvaNo ratings yet

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Giancoli - Physics Principles Appendix-2Document1 pageGiancoli - Physics Principles Appendix-2Aman KeltaNo ratings yet

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (588)

- Jee Maths Binomial Theorem TestDocument5 pagesJee Maths Binomial Theorem Testmonikamonika74201No ratings yet

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- Jasrae Issue 4 Vol 16 89154Document10 pagesJasrae Issue 4 Vol 16 89154Sanju AdiNo ratings yet

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- Vector Calculus, Linear Algebra, and Differential Forms: A Unified ApproachDocument4 pagesVector Calculus, Linear Algebra, and Differential Forms: A Unified ApproachDhrubajyoti DasNo ratings yet

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- Matrix - Algebra ReviewDocument25 pagesMatrix - Algebra Reviewandres felipe otero rojasNo ratings yet

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (345)

- Partial DerivativesDocument15 pagesPartial DerivativesAien Nurul AinNo ratings yet

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- MatlabDocument50 pagesMatlabOleg ZenderNo ratings yet

- MathematicsDocument55 pagesMathematicsBrian kNo ratings yet

- WINSEM2019-20 STS5102 SS VL2019205000260 Reference Material I 18-Feb-2020 LogarithmsDocument21 pagesWINSEM2019-20 STS5102 SS VL2019205000260 Reference Material I 18-Feb-2020 LogarithmsDipali AttardeNo ratings yet

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- P6.1 VectorsDocument29 pagesP6.1 Vectorstravisclark123123No ratings yet

- 3 - Examples of Irrational NumbersDocument12 pages3 - Examples of Irrational Numberssap6370No ratings yet

- CSE 2201 Algorthm Analysis & Design: Solving RecurrenceDocument22 pagesCSE 2201 Algorthm Analysis & Design: Solving RecurrenceRomNo ratings yet

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- CBSE Class 6 Algebra WorksheetDocument1 pageCBSE Class 6 Algebra WorksheetAnsuman Mahanty100% (5)

- Cad Cam 30 - 03 - 2021Document14 pagesCad Cam 30 - 03 - 2021Bharat GavaliNo ratings yet

- Econometrics2006 PDFDocument196 pagesEconometrics2006 PDFAmz 1No ratings yet

- Homework 7 Solutions: 1 Chapter 9, Problem 10 (Graded)Document14 pagesHomework 7 Solutions: 1 Chapter 9, Problem 10 (Graded)muhammad1zeeshan1sarNo ratings yet

- VectorsDocument55 pagesVectorsGeofrey KanenoNo ratings yet

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (121)

- VECTORDocument38 pagesVECTORnithin_v90No ratings yet

- Exactness, Tor and Flat Modules Over A Commutative RingDocument8 pagesExactness, Tor and Flat Modules Over A Commutative RingAlbertoAlcaláNo ratings yet

- 10 Maths. 1 Mark. EM. BookbackDocument13 pages10 Maths. 1 Mark. EM. BookbackKrish KrishnNo ratings yet

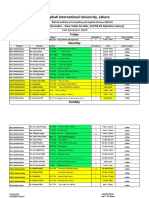

- Riphah International University, Lahore: Deparetment of Mathematics - Time Table For MSC, M.Phil, PH.D (Online Classes)Document2 pagesRiphah International University, Lahore: Deparetment of Mathematics - Time Table For MSC, M.Phil, PH.D (Online Classes)Anam BilqeesNo ratings yet

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)