AI Def

Uploaded by

Ukesh Shrestha

1. Definitions of Artificial Intelligence

I. Artificial Intelligence is a branch of science that deals with helping machines find solutions to complex problems in a more human-like fashion [1]. This generally involves borrowing characteristics from human intelligence and applying them as algorithms in a computer-friendly way.

II. Turing's test [2]: the beginning of AI

We place something behind a curtain and it speaks with us. If we cannot tell the difference between it and a human being, then it is AI.

AI is knowledge gained through experience.

III. Then how about a newborn baby?

An intelligent thing may know nothing at first, but it can learn.

IV. "Artificial intelligence is the study of ideas to bring into being machines that respond to stimulation consistent with traditional responses from humans, given the human capacity for contemplation, judgment and intention. Each such machine should engage in critical appraisal and selection of differing opinions within itself. Produced by human skill and labor, these machines should conduct themselves in agreement with life, spirit and sensitivity, though in reality, they have limitations." [3]

All pursuits of AI involve the construction of a machine, where a machine may be a robot, a computer, a program or a system of machines whose essence these days is assumed to be rooted in digital computer technology (though biological machines or combined biological and digital machines may be possible in the future (Knight and Sussman, 199*)). The construction of a machine requires hardwiring, which is the knowledge, expertise or know-how that is incorporated a priori into the machine. While self-refinement within the machine is possible, such as modifying internal state, adjusting parameters, updating data structures, or even modifying its own control structure, hardwiring concerns the construction of the initial machine itself. Machines are hardwired to conduct one or more tasks.

5. Artificial intelligence is the study of ideas to bring into being machines that perform behavior or thinking tasks ideally or like humans [4].

So, Artificial Intelligence (AI) is a term that encompasses many definitions. However, most experts agree that AI is concerned with two basic ideas:

It involves studying the thought processes of humans (understanding what intelligence is).

It deals with representing these processes via machines (such as computers, robots, etc.).

AI is the part of computer science concerned with designing intelligent computer systems, that is, computer systems that exhibit the characteristics we associate with intelligence in human behavior: understanding language, learning, reasoning and solving problems.

V. What do we mean by "Intelligent behavior"?

[1] From http://ai-depot.com/Intro.html

[2] Computing Machinery and Intelligence, Alan Turing (1950)

[3] Latanya Sweeney (1992), http://privacy.cs.cmu.edu/people/sweeney/aidef.html

[4] Russell, S. and Norvig, P. Artificial Intelligence: A Modern Approach. Englewood Cliffs: Prentice-Hall, 1995.


Several abilities are considered signs of intelligence:

Learning or understanding from experience

Making sense out of ambiguous or contradictory messages

Responding quickly and successfully to a new situation

Using reasoning in solving problems and directing conduct effectively

Dealing with perplexing situations

Understanding and inferring in ordinary rational ways

Applying knowledge to manipulate the environment

Thinking and reasoning

Recognizing the relative importance of different elements in a situation

Ultimate goal of AI: building machines that mimic human intelligence.

VI. How could intelligence be tested?

The Turing test is an obvious option. According to the test, a computer can be considered smart only when a human interviewer conversing with both an unseen human being and an unseen computer cannot determine which is which.

2. Branches of AI

No one has defined the exact number of branches of AI. Some of the branches are as follows.

I. Logical AI

What the program knows about the facts of the specific situation in which it must act, and its goals, are all represented by sentences of some mathematical logical language. The program decides what to do by inferring that certain actions are appropriate for achieving its goals (John McCarthy, 199? – Concept of Logical AI).

II. Search

AI programs often examine large numbers of possibilities.

III. Pattern Recognition

When a program makes observations of some kind, it is often programmed to compare what it sees with a pattern.

IV. Representation

Facts about a system or world are represented in some way, usually using languages of mathematical logic (expert system).

V. Inference (Reasoning)

This concerns the problem of how a reasonable conclusion (action) should be reached with respect to an incoming situation.

VI. Heuristic (opposite of analytic)

VII. Genetic programming

3. Areas of AI

Artificial intelligence includes

game playing: programming computers to play games such as chess and checkers

expert systems: programming computers to make decisions in real-life situations (for example, some expert systems help doctors diagnose diseases based on symptoms; fault diagnosis in industrial applications)

natural language: programming computers to understand natural human languages

neural networks: systems that simulate intelligence by attempting to reproduce the types of physical connections that occur in animal brains

robotics: programming computers to see and hear and react to other sensory stimuli

A few examples of application areas:

Military

Industry

Hospitals

Banks

Insurance Companies

4. Artificial versus Natural Intelligence

AI is more permanent (machines don't forget as human workers do, and workers may change their workplace or jobs).

AI offers ease of duplication and dissemination. Transferring knowledge from human to human, or from human to computer, is not as easy as transferring files or the memory device of an intelligent machine.

AI can be less expensive than natural intelligence.

AI can be well documented.

AI can execute certain tasks much faster than a human can.

AI can perform certain tasks better than many or even most people.

However,

AI is not as creative as human (natural) intelligence.

Natural intelligence enables people to benefit from and use sensory experience directly, whereas AI systems must work with symbolic input and representations.

Natural intelligence (NI), i.e. human reasoning, uses a wide context of experiences.


Approaches in System Analysis

A system can be defined as a set of elements standing in interrelations. There exist models, principles, and laws that apply to generalized systems or their subclasses, irrespective of their particular kind, the nature of their component elements, and the relations or forces between them. In mathematics a system can be defined in various ways. For instance, we can choose a system of simultaneous differential equations for different applications.

Defining a system by the rules of conventional physics is called "First Principle Modeling". But every system has some amount of complexity (nonlinearity), and it is difficult to define the system from the existing laws of science. The larger a system, the more complexity it tends to have, and modeling it becomes an extremely complex task. "Data-based Methods" can be a promising option in such circumstances.

Data-based methods need only the availability of a large amount of process/system data. There are different ways in which this data can be transformed and formulated, e.g. expert systems, statistical classifiers, neural networks, etc.

1. Expert System

It is difficult to define what an expert is; we usually talk about a degree or level of expertise. Human expertise typically includes a constellation of behaviors that involves the following activities:

Recognizing and formulating the problem

Solving the problem quickly and correctly

Explaining the solution

Learning from experience

Restructuring knowledge

Breaking rules if necessary

Determining relevance

Degrading gracefully (being aware of one's limitations)

To mimic a human expert, it is necessary to build a computer system that exhibits all these characteristics.

An expert system is a computer system that emulates the decision-making ability of a human expert. The system consists of a model and associated procedures that exhibit, within a specific domain, a degree of expertise in problem solving comparable to that of a human expert. It basically contains knowledge derived from an expert in some narrow domain. This knowledge is used to help (non-expert) users of the system solve problems. A narrow domain is considered because it is quite difficult to encode enough knowledge into a system for it to solve a variety of problems.

An expert system helps

to preserve knowledge

if expertise is scarce, expensive, or unavailable

if under time and pressure constraints

in training new employees

to improve workers' productivity

Expert systems are used in a variety of areas, and are still the most popular developmental approach in the artificial intelligence world.


So, the objective of an expert system is the transfer of expertise from an expert to a computer system and then on to other humans (novices). This process involves four activities:

Knowledge acquisition (from experts or other sources, e.g. books, records, etc.)

Knowledge representation (coding in the computer)

Knowledge inferencing, and

Knowledge transfer to the user.

The components of the expert system are shown in the figure below.

Fig. Structure of an Expert System

The knowledge base contains all the rules (if-then).

The database gives the context of the problem domain and is generally considered to be a set of useful facts. These are the facts that satisfy the condition part of the action rules.

The inference engine controls the overall execution of the rules. It searches through the knowledge base, attempting to pattern-match facts or knowledge present in memory to the antecedents of rules. If a rule's antecedent is satisfied, the rule is ready to fire, which means that its consequent can be executed.
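The interplay of knowledge base, database and inference engine can be sketched in a few lines of Python. This is a minimal forward-chaining illustration, not the notes' own implementation: the rule format, the function name `forward_chain` and the medical-sounding facts are all hypothetical.

```python
def forward_chain(rules, facts):
    """Fire every rule whose antecedents are all known facts, until nothing new is derived."""
    facts = set(facts)
    fired = []
    changed = True
    while changed:
        changed = False
        for antecedents, consequent in rules:
            # A rule is ready to fire when its whole antecedent is satisfied.
            if set(antecedents) <= facts and consequent not in facts:
                facts.add(consequent)  # executing the consequent adds a new fact
                fired.append((antecedents, consequent))
                changed = True
    return facts, fired


# Hypothetical knowledge base: if-then rules as (antecedents, consequent) pairs.
RULES = [
    (("fever", "cough"), "flu_suspected"),
    (("flu_suspected", "short_of_breath"), "refer_to_doctor"),
]
# Hypothetical database: the facts describing the current problem.
FACTS = {"fever", "cough", "short_of_breath"}

derived, fired = forward_chain(RULES, FACTS)
print(sorted(derived))
```

The second rule can only fire after the first has added `flu_suspected` to the facts, which is the chaining behaviour described above.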

[Figure: Structure of an Expert System. Labeled blocks: Expert; Knowledge acquisition (Machine Learning); Expert System; Knowledge Base (set of rules); Inference Engine (rule interpreter); Data Base (set of facts); I/O Interface; User.]

Knowledge acquisition is quite a labor-intensive process. The two major participants in the knowledge acquisition process are the knowledge engineer, who works to acquire the domain knowledge, and the expert, who could be very busy personnel or very expensive records/documents describing the problem situations. Therefore, manual and even semiautomatic elicitation methods are both slow and expensive. Thus, it makes sense to develop knowledge acquisition methods that will reduce or even eliminate the need for these two participants. These methods are computer-aided or automated knowledge acquisition. This is also called machine learning.

Representing Uncertainty

A rule or fact is usually assumed to be either true or false. However, human knowledge is often inexact. Sometimes we are only partly sure about the truth of a statement and still have to make educated guesses to solve problems.

Some concepts or words are inherently inexact. For instance, how can we determine exactly whether someone is tall? The concept "tall" has a built-in form of inexactness.

Moreover, we sometimes have to make decisions based on partial or incomplete data.

Meaning of uncertainty: doubtful, dubious, questionable, not sure, or problematic.

In a knowledge-based (expert) system, it is necessary to understand how to process uncertain knowledge. In addition, there is a need for inexact inference methods in AI because there exist many inexact pieces of data and knowledge that must be combined.

In a numeric context, uncertainty can be viewed as a value with a known error margin. When the possible range of values is symbolic rather than numeric, the uncertainty can be represented in terms of qualitative expressions or by using fuzzy sets. One way of handling uncertainty in an expert system is to incorporate fuzzy logic. An expert system combined with fuzzy logic is called a Fuzzy Expert System.
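The inexact concept "tall" can be given a fuzzy-set reading with a membership function that returns a degree between 0 and 1 instead of true or false. The sketch below is only an illustration: the linear ramp and the 160 cm and 190 cm thresholds are assumptions chosen for the example, not values from these notes.

```python
def tall_membership(height_cm, lower=160.0, upper=190.0):
    """Degree (0.0 to 1.0) to which a height belongs to the fuzzy set 'tall'.

    Heights at or below `lower` are not tall at all, heights at or above
    `upper` are fully tall, and heights in between are partly tall.
    Both thresholds are hypothetical.
    """
    if height_cm <= lower:
        return 0.0
    if height_cm >= upper:
        return 1.0
    return (height_cm - lower) / (upper - lower)


for height in (150, 175, 200):
    print(height, tall_membership(height))
```

A fuzzy rule could then use this degree directly, for example weighting its conclusion by how strongly the antecedent "person is tall" holds.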

2. Data Mining and Knowledge Discovery

Working with Data

Data

Data are a collection of facts, measurements and statistics.

Data Preprocessing

Data preprocessing transforms the raw data into a format that can be processed more easily and effectively for the user's purpose, for example neural network modeling. A number of different tools and methods are used for preprocessing.

Sampling: selects a representative subset from a large population of data.

Transformation: manipulates raw data into the most convenient form. Certain transformations of data may lead to the discovery of structures (a defined model form) that were not at all obvious in the original data. Common data transformations include taking square roots, reciprocals, logarithms, and raising variables to positive integral powers.

Denoising: removes (or minimizes) noise from data.

Normalization: organizes data for more efficient access. In creating a database, normalization is the process of organizing it into tables in such a way that the results of using the database are always unambiguous and as intended. For model inputs, two simple normalization options are either to scale the inputs to the range [0, 1], or to subtract their mean values and rescale to unit variance.

Feature extraction: pulls out specified data that is significant in some particular context, e.g. feature selection or principal component analysis (PCA). Feature selection simply selects an important subset of data from the data matrix. PCA is a useful tool capable of compressing data and reducing its dimensionality so that essential information is retained and is easier to analyze than the original huge data set. An artificial neural network (Self-Organizing Map) can also be a tool for feature extraction.
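The two input normalization options mentioned above (scaling to [0, 1], and shifting to zero mean with unit variance) can be sketched as follows. The function names and the sample values are illustrative assumptions; the variance here divides by n, matching the definition used later in these notes.

```python
import math


def min_max_scale(values):
    """Scale values linearly so the smallest maps to 0 and the largest to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant input: no spread to scale
    return [(v - lo) / (hi - lo) for v in values]


def standardize(values):
    """Subtract the mean and divide by the standard deviation (unit variance)."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]


raw = [2.0, 4.0, 6.0, 8.0]  # hypothetical input variable
scaled = min_max_scale(raw)
z = standardize(raw)
print(scaled)
print(z)
```

Min-max scaling is sensitive to a single extreme value, while standardization keeps outliers visible as large scores, so the choice depends on the data and the model being fed.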

Data, Information and Knowledge

Data, information and knowledge can be viewed as assets of an organization.

Data are a collection of facts, measurements and statistics.

Information is organized or processed data that are timely and accurate, i.e. inferences from the original data.

Knowledge is information that is contextual, relevant, and actionable.

Having knowledge implies that it can be exercised to solve a problem, whereas having information does not carry the same connotation. An ability to act in a given context is an integral part of being knowledgeable. For example, two people in the same context with the same information may not have the same ability to use that information to the same degree of success.

Knowledge provides a higher level of meaning than data or information.

Knowledge Discovery in Databases (KDD) involves processes at several stages:

selecting the target data,

preprocessing the data, transforming them if necessary,

performing data mining to extract patterns and relationships, and then

interpreting and assessing the discovered structures.

Data mining is the analysis of observational data sets (often large) to find unsuspected relationships and to summarize the data in novel ways that are both understandable and useful to the data owner. Data mining (the process of seeking models or patterns, i.e. relationships, within the data set) involves a number of steps:

Determining the nature and structure of the representation (model or pattern).

Choosing a score function to decide how well the relationship or model fits the data.

Choosing an algorithmic process to optimize the score function (search methods).

Deciding what data mining principles are required to implement the algorithms efficiently (data management strategy).

The relationships and summaries derived through a data mining exercise are often referred to as models or patterns. Examples are linear equations, rules, clusters, graphs, tree structures, and recurrent patterns in time series.

The output of data mining is expected to satisfy the following:

The relationship determined should be novel.

The relationship should be understandable.

Nature of data

Data: a set of measurements

Two different kinds of measurement:

Quantitative measurement, e.g. persons, age, etc.

Categorical measurement, e.g. sex, educational degree, etc.

Observational data (may not be experimental): historical data, or data that have already been collected for some purpose other than data mining analysis.

Problems faced due to large data sets:

Housekeeping issues: how to store and access the data

How to determine the representativeness of the data (sampling: does the sample represent the data in general?)

How to analyze data in a reasonable period of time

How to decide whether an apparent relationship is merely a chance occurrence not reflecting any underlying reality

Types of structure

The structure representing knowledge could be a model or a pattern. A model is built as an abstract representation of the real world. A model is a global summary of the data. The model can be

Linear

Quadratic or other nonlinear structures.

A pattern structure (clustering) represents only a part of the total data structure. A pattern represents departures from the general run of the data.

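Score function

Several score functions are widely used. A well-known score function is the squared error function

$$\sum_{i=1}^{n} \left( y(i) - \hat{y}(i) \right)^{2}$$

where $y(i)$ is the i-th observed value and $\hat{y}(i)$ is the corresponding model prediction.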
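As a small worked illustration of the squared error score, the sketch below compares two candidate linear models on the same observations. The data points and both parameter settings are made up for the example.

```python
def squared_error(observed, predicted):
    """Sum of squared differences between observed values and model predictions."""
    if len(observed) != len(predicted):
        raise ValueError("observed and predicted must have the same length")
    return sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))


def linear_model(intercept, slope, xs):
    """Predictions of the model y = intercept + slope * x."""
    return [intercept + slope * x for x in xs]


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.1, 4.9, 7.0]  # hypothetical observations, roughly y = 1 + 2x

score_good = squared_error(ys, linear_model(1.0, 2.0, xs))
score_poor = squared_error(ys, linear_model(0.0, 1.0, xs))
print(score_good, score_poor)
```

The model with the lower score fits these observations better, which is exactly how the score function is used to rank candidate models.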

Distance measures

A widely used measure is the Euclidean distance, defined as

$$d_E(i, j) = \left( \sum_{k=1}^{p} \left( x_k(i) - x_k(j) \right)^{2} \right)^{1/2}$$

where p is the number of measurements on each object, $x_k(i)$ is the k-th measurement on the i-th object, and i, j = 1, ..., n index the n objects.

Another distance measure could be a metric measure, defined by the following properties:

P1: $d(i, j) \ge 0$ for all i and j, and $d(i, j) = 0$ if and only if $i = j$

P2: $d(i, j) = d(j, i)$ for all i and j

P3: $d(i, j) \le d(i, k) + d(k, j)$ for all i, j and k
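The Euclidean distance and the three metric properties can be checked numerically on a handful of points. This is a sketch under simple assumptions: the helper `satisfies_metric_properties` is hypothetical, it only tests the properties on the points supplied (it is not a proof), and it uses a small tolerance for floating-point comparisons.

```python
import math
from itertools import product


def euclidean(a, b):
    """Euclidean distance between two objects given as equal-length tuples."""
    return math.sqrt(sum((ak - bk) ** 2 for ak, bk in zip(a, b)))


def satisfies_metric_properties(points, d, tol=1e-12):
    """Check P1 to P3 for distance function d on the given points only."""
    for i, j in product(points, repeat=2):
        if d(i, j) < 0 or (d(i, j) == 0) != (i == j):  # P1
            return False
        if abs(d(i, j) - d(j, i)) > tol:               # P2: symmetry
            return False
    for i, j, k in product(points, repeat=3):
        if d(i, j) > d(i, k) + d(k, j) + tol:          # P3: triangle inequality
            return False
    return True


points = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]  # hypothetical objects, p = 2
d01 = euclidean(points[0], points[1])
print(d01, satisfies_metric_properties(points, euclidean))
```

Swapping in a function that breaks symmetry or the triangle inequality makes the check return False, which is a quick sanity test for a hand-written distance.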

Search methods

Minimize or maximize the score function by changing the model parameters, and possibly the model structure as well.
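The simplest possible search method is to try a grid of candidate parameter values and keep the one with the best score. The sketch below does this for a one-parameter model y = b * x with the squared error as score; the model, the data and the candidate grid are assumptions for illustration, and practical data mining tools generally use more efficient optimizers.

```python
def squared_error(observed, predicted):
    """Sum of squared differences between observed and predicted values."""
    return sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))


def grid_search_slope(xs, ys, candidates):
    """Return the candidate slope b of the model y = b * x with the smallest score."""
    return min(candidates, key=lambda b: squared_error(ys, [b * x for x in xs]))


xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]                          # hypothetical data lying on y = 2x
candidates = [k / 10 for k in range(0, 41)]   # 0.0, 0.1, ..., 4.0
best_slope = grid_search_slope(xs, ys, candidates)
print(best_slope)
```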

Strategies of data management

Organize the data set

Define the data hierarchy, etc.

Summarizing data

Mean

The mean simply summarizes a collection of values (a data set).

$$\mu = \frac{1}{n} \sum_{i} x(i)$$

The sample mean has the property that it is the value that is "central" in the sense that it minimizes the sum of squared differences between it and the data values. Thus, if there are n data values, the mean is the value such that the sum of n copies of it equals the sum of the data values. The mean is a measure of location. Another such measure is the median (a value with an equal number of values below and above it). The most repeated value is the mode.

Standard Deviation

The dispersion or variability of data is measured with the standard deviation or the variance.

Variance is the average of the squared differences between the individual data values and the mean.

$$\sigma^{2} = \frac{1}{n} \sum_{i} \left( x(i) - \mu \right)^{2}$$

The square root of the variance is the standard deviation.

$$\sigma = \sqrt{\frac{1}{n} \sum_{i} \left( x(i) - \mu \right)^{2}}$$
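The summary statistics above can be computed directly from their definitions. The sketch below divides by n as in the formulas of this section; the data set is made up, and the median and mode are taken from Python's standard `statistics` module.

```python
import math
import statistics


def mean(values):
    """Sum of the values divided by their number n."""
    return sum(values) / len(values)


def variance(values):
    """Average squared difference from the mean (divides by n)."""
    m = mean(values)
    return sum((x - m) ** 2 for x in values) / len(values)


def std_dev(values):
    """Square root of the variance."""
    return math.sqrt(variance(values))


data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]  # hypothetical data set

print(mean(data), variance(data), std_dev(data))
print(statistics.median(data), statistics.mode(data))


def sum_sq(values, c):
    """Sum of squared differences between the values and a candidate centre c."""
    return sum((x - c) ** 2 for x in values)


# The mean is "central": moving away from it increases the sum of squares.
print(sum_sq(data, mean(data)), sum_sq(data, mean(data) + 0.5))
```

Note that many statistics packages divide by n - 1 for a sample variance, so results can differ slightly from the n-based definition used here.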

Tools displaying a single data set or univariate data (unidirectional): e.g. histogram

Tools displaying the relationship between two variables (bi-dimensional data): e.g. scatter plot, a locus (curve), contour lines, etc.

Tools displaying multivariate data (higher-dimensional data sets): e.g. Principal Component Analysis

Principal Component Analysis (PCA) & Partial Least Squares (PLS)

PCA and PLS build on the concept of factor analysis. Factor analysis techniques are used

to reduce the number of variables, and

to detect structure in the relationships between variables (i.e. to classify variables).

The purpose of PCA is to reduce the original variables into fewer composite variables, called principal components. The objective is to account for the maximum portion of the variance present in the original set of variables with a minimum number of principal components.

PCA is a useful tool capable of compressing data and reducing its dimensionality so that essential information is retained and is easier to analyze than the original huge data set. The theory behind PCA is that the covariance matrix of the process variables is decomposed orthogonally along directions that explain the maximum variation of the data. PCA is used only for a single data matrix, whereas PLS models the relationship between two blocks of data while compressing them simultaneously. The major limitation of PCA-based monitoring is that PCA models are time-invariant, while most real processes are time-variant. So it is important for a PCA model to be updated recursively.

PCA Algorithm

Step 1: Get some data

Step 2: Subtract the mean: get the mean-subtracted data set (DataAdjust).

Step 3: Calculate the covariance matrix

Step 4: Calculate the eigenvectors and eigenvalues of the covariance matrix

Step 5: Choose components and form a feature vector

FeatureVector = [eig1, eig2, … eigN]

Step 6: Derive the new data set

FinalData = RowFeatureVector x RowDataAdjust

where RowFeatureVector holds the chosen eigenvectors as rows and RowDataAdjust holds the mean-adjusted data with one variable per row.

Each eigenvector represents a principal component. PC1 (Principal Component 1) is defined as the eigenvector with the highest corresponding eigenvalue. The individual eigenvalues are numerically related to the variance captured by the PCs: the higher the value, the more variance they have captured.
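The steps above can be sketched in plain Python for the first principal component only. Two things here are assumptions rather than part of the notes: the leading eigenvector is found by power iteration (a simple substitute for a full eigen-decomposition), and the covariance divides by n - 1. The data set and function names are hypothetical.

```python
import math


def mean_center(rows):
    """Step 2: subtract each column's mean (rows are samples, columns are variables)."""
    n, p = len(rows), len(rows[0])
    means = [sum(r[k] for r in rows) / n for k in range(p)]
    return [[r[k] - means[k] for k in range(p)] for r in rows]


def covariance_matrix(centered):
    """Step 3: p x p covariance matrix of mean-centered data (divides by n - 1)."""
    n, p = len(centered), len(centered[0])
    return [[sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(p)]
            for a in range(p)]


def leading_eigenpair(matrix, iterations=200):
    """Step 4 (partial): largest eigenvalue and unit eigenvector by power iteration."""
    p = len(matrix)
    v = [1.0] * p
    for _ in range(iterations):
        w = [sum(matrix[a][b] * v[b] for b in range(p)) for a in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    mv = [sum(matrix[a][b] * v[b] for b in range(p)) for a in range(p)]
    eigenvalue = sum(v[a] * mv[a] for a in range(p))  # Rayleigh quotient
    return eigenvalue, v


# Step 1: hypothetical data, two strongly correlated variables (x2 = 2 * x1).
data = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0], [4.0, 8.0]]

data_adjust = mean_center(data)
cov = covariance_matrix(data_adjust)
eigenvalue, pc1 = leading_eigenpair(cov)

# Steps 5 and 6 with a single component: project each sample onto PC1.
final_data = [sum(pc1[k] * row[k] for k in range(len(pc1))) for row in data_adjust]
print(eigenvalue, pc1)
print(final_data)
```

Because the two variables are perfectly correlated in this toy data, one component captures all of the variance and the two-column data set compresses to a single column of scores.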

Partial Least Squares (PLS) regression is based on a linear transition from a large number of original descriptors to a new variable space based on a small number of orthogonal factors (latent variables). In other words, factors are mutually independent (orthogonal) linear combinations of the original descriptors. Unlike some similar approaches (e.g. principal component regression, PCR [5]), latent variables are chosen in such a way as to provide maximum correlation with the dependent variable; thus, a PLS model contains the smallest necessary number of factors (Höskuldsson, 1988).

This concept is illustrated by Fig. 1, representing a hypothetical data set with two independent variables x1 and x2 and one dependent variable y. It can easily be seen that the original variables x1 and x2 are strongly correlated. From them, we change to two orthogonal factors (latent variables) t1 and t2 that are linear combinations of the original descriptors. As a result, a single-factor model can be obtained that relates activity y to the first latent variable t1.

Basic algorithm of the PLS method [Martens & Naes, 1989] for the step of building the k-th factor:

where

N – number of compounds (samples),

M – number of descriptors (variables),

X[N×M] – descriptor matrix,

y[N] – activity vector,

w[M] – auxiliary weight vector,

t[N] – factor coefficient vector,

p[M] – loading vector,

q – scalar coefficient of relationship between factor and activity.

All vectors are columns; entities without the index (k-1) are for the current (k-th) factor.

Latent variables are the linear combinations of original descriptors (with coefficients represented by loading vector p).
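The algorithm listing itself is not legible in this copy, so the following is a sketch of one commonly described form of a single PLS step for one dependent variable (often called PLS1), using the symbols defined above. It is an assumption that this matches the listing intended here; the toy data are hypothetical and are taken to be already mean-centered.

```python
import math


def pls1_factor(X, y):
    """Build one PLS factor from centered X (N rows, M columns) and centered y (N values).

    Returns weight vector w, factor (score) vector t, loading vector p,
    scalar q, and the residuals of X and y after removing this factor.
    """
    N, M = len(X), len(X[0])
    # w: direction in descriptor space with maximum covariance with y, unit length
    w = [sum(X[i][j] * y[i] for i in range(N)) for j in range(M)]
    norm = math.sqrt(sum(v * v for v in w))
    w = [v / norm for v in w]
    # t: latent variable values, one per sample
    t = [sum(X[i][j] * w[j] for j in range(M)) for i in range(N)]
    tt = sum(v * v for v in t)
    # p: loadings of the descriptors on the factor; q: relation of factor to y
    p = [sum(X[i][j] * t[i] for i in range(N)) / tt for j in range(M)]
    q = sum(y[i] * t[i] for i in range(N)) / tt
    # Residuals passed on to the next factor
    X_res = [[X[i][j] - t[i] * p[j] for j in range(M)] for i in range(N)]
    y_res = [y[i] - q * t[i] for i in range(N)]
    return w, t, p, q, X_res, y_res


# Hypothetical centered data: x1 and x2 identical (strongly correlated), y = 2 * x1.
X = [[-1.5, -1.5], [-0.5, -0.5], [0.5, 0.5], [1.5, 1.5]]
y = [-3.0, -1.0, 1.0, 3.0]

w, t, p, q, X_res, y_res = pls1_factor(X, y)
print(w, q)
print(y_res)
```

With these perfectly correlated descriptors a single factor explains y completely, so the y residuals are zero up to rounding, mirroring the single-factor model described for Fig. 1.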

[5] PCR performs a principal component analysis of the X matrix and then uses the principal components of X as regressors on Y. The orthogonality of the principal components eliminates the multicollinearity problem. Here, nothing guarantees that the principal components, which explain X, are relevant for Y. By contrast, PLS regression finds components from X that are also relevant for Y. Specifically, PLS regression searches for a set of components (called latent vectors) that performs a simultaneous decomposition of X and Y with the constraint that these components explain as much as possible of the covariance between X and Y. This step generalizes PCA. It is followed by a regression step where the decomposition of X is used to predict Y.


Fig. 1 Transformation of original descriptors to latent variables (a) and construction of activity model containing one PLS factor (b).

Martens H., Naes T. Multivariate Calibration. Chichester etc.: Wiley, 1989.

Höskuldsson A. PLS regression methods. J. Chemometrics, 1988, 2(3) 211-22

11

You might also like

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- SF6 Circuit BreakerDocument8 pagesSF6 Circuit BreakerUkesh ShresthaNo ratings yet

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Disconnector (With: InstallationDocument12 pagesDisconnector (With: InstallationUkesh ShresthaNo ratings yet

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- 132KV Line Blocking CircuitDocument3 pages132KV Line Blocking CircuitUkesh ShresthaNo ratings yet

- 110 - Technical Specification 220kV Moose + Zebra WB - 10-ADocument1 page110 - Technical Specification 220kV Moose + Zebra WB - 10-AUkesh ShresthaNo ratings yet

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (400)

- Presentation Outline For Iee/Eia Review in Doed: S. No. Title Details To Be IncludedDocument2 pagesPresentation Outline For Iee/Eia Review in Doed: S. No. Title Details To Be IncludedUkesh ShresthaNo ratings yet

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (588)

- Fire Retardant CableDocument1 pageFire Retardant CableUkesh ShresthaNo ratings yet

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Dewatering System PDFDocument2 pagesDewatering System PDFUkesh ShresthaNo ratings yet

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Project EvaluationDocument4 pagesProject EvaluationUkesh ShresthaNo ratings yet

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (895)

- File qR6nKKoFbyXaT8vGxnnK PDFDocument3 pagesFile qR6nKKoFbyXaT8vGxnnK PDFUkesh ShresthaNo ratings yet

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- 30 发总承包 关于提交调速器系统设备修改版图纸的函 02Drawings List图纸清单Document1 page30 发总承包 关于提交调速器系统设备修改版图纸的函 02Drawings List图纸清单Ukesh ShresthaNo ratings yet

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

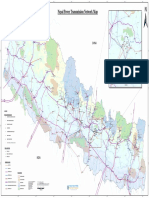

- Nepal Power Transmission Network MapDocument1 pageNepal Power Transmission Network MapUkesh Shrestha100% (1)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- Turbine Model Test Outline PDFDocument29 pagesTurbine Model Test Outline PDFUkesh ShresthaNo ratings yet

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- Nepal Electricity Authority (NEA) Transmission Network and Its LossesDocument3 pagesNepal Electricity Authority (NEA) Transmission Network and Its LossesUkesh ShresthaNo ratings yet

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- Nepal Government National Reconstruction Authority Building Reconstruction ProgramDocument1 pageNepal Government National Reconstruction Authority Building Reconstruction ProgramUkesh ShresthaNo ratings yet

- What Is The Power Consumption (In Kilowatts) For A 1.5 Ton Split Air Conditioner?Document1 pageWhat Is The Power Consumption (In Kilowatts) For A 1.5 Ton Split Air Conditioner?Ukesh ShresthaNo ratings yet

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- Design and Simulation of Grid-Connected Hybrid Photovoltaic/Battery Distributed Generation SystemDocument20 pagesDesign and Simulation of Grid-Connected Hybrid Photovoltaic/Battery Distributed Generation SystemUkesh ShresthaNo ratings yet

- Thumb Rules Motor To FollowDocument2 pagesThumb Rules Motor To FollowUkesh ShresthaNo ratings yet

- Import Data From Other Excel FileDocument2 pagesImport Data From Other Excel FileUkesh ShresthaNo ratings yet

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (345)

- Pca&pls (Ioe)Document1 pagePca&pls (Ioe)Ukesh ShresthaNo ratings yet

- Neural NetworkDocument7 pagesNeural NetworkUkesh ShresthaNo ratings yet

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- Ellis R Corrective Feedback L2 Journal Vol1 2009 PP 3 18Document17 pagesEllis R Corrective Feedback L2 Journal Vol1 2009 PP 3 18english_tuition_1No ratings yet

- Lesson Plan Template For PortifolioDocument2 pagesLesson Plan Template For Portifolioapi-513413837No ratings yet

- Lesson Planning Observation Checklist AteneuDocument2 pagesLesson Planning Observation Checklist AteneuCarme Florit BallesterNo ratings yet

- Human Computer Interaction: Comsats University Islambad, Wah CampusDocument27 pagesHuman Computer Interaction: Comsats University Islambad, Wah CampusArfa NisaNo ratings yet

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- Empowering Critical Thinking With Ricosre Learning Model: Tri Maniarta Sari, Susriyati Mahanal, Siti ZubaidahDocument5 pagesEmpowering Critical Thinking With Ricosre Learning Model: Tri Maniarta Sari, Susriyati Mahanal, Siti ZubaidahsuhartiniNo ratings yet

- Module 3 Multiple IntelligencesDocument2 pagesModule 3 Multiple IntelligencesShai Ra CapillanNo ratings yet

- Bruner's Constructivist TheoryDocument4 pagesBruner's Constructivist TheoryAerwyna SMS100% (3)

- The Happiness Advantage by Shawn AchorDocument11 pagesThe Happiness Advantage by Shawn AchorsimasNo ratings yet

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (121)

- 01 Interdisciplinary Hannelore Lee JahnkeDocument12 pages01 Interdisciplinary Hannelore Lee JahnkeMohammed RashwanNo ratings yet

- Impact of Online Gaming On The Academic Performance of College Students of Capiz State University Dayao Satellite CollegeDocument5 pagesImpact of Online Gaming On The Academic Performance of College Students of Capiz State University Dayao Satellite CollegeCHeart100% (1)

- HCI Chapter 2Document25 pagesHCI Chapter 2AschenakiNo ratings yet

- Ped 356 IepDocument7 pagesPed 356 Iepapi-375221630No ratings yet

- GEH111 Module 3Document43 pagesGEH111 Module 3Elaine EscalanteNo ratings yet

- Related LitDocument16 pagesRelated LitcieloNo ratings yet

- Sensing and Perceiving Mcqs PDF For All Screening Tests and InterviewsDocument9 pagesSensing and Perceiving Mcqs PDF For All Screening Tests and InterviewsHurairah abbasiNo ratings yet

- Ambuja Public School, Rabriyawas Bottom Line Students Tracking Sheet Sr. No. Class Name QTR 01 Result H. Y. ResultDocument32 pagesAmbuja Public School, Rabriyawas Bottom Line Students Tracking Sheet Sr. No. Class Name QTR 01 Result H. Y. ResultSantanu PachhalNo ratings yet

- Language and The BrainDocument33 pagesLanguage and The BrainRaymond CayabyabNo ratings yet

- Cog Ass Syllabus-2018 Fall - StuDocument5 pagesCog Ass Syllabus-2018 Fall - StuNESLİHAN ORALNo ratings yet

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- Wilhelm Wundt PSYCHOLOGYDocument2 pagesWilhelm Wundt PSYCHOLOGYtrisha lamputiNo ratings yet

- Reading Term 4 ZoeDocument1 pageReading Term 4 Zoeroom8ncsNo ratings yet

- Jemimah Bogbog - Psych 122 Final RequirementDocument1 pageJemimah Bogbog - Psych 122 Final RequirementJinnie AmbroseNo ratings yet

- The Wechsler Intelligence ScalesDocument42 pagesThe Wechsler Intelligence ScalesChris CeNo ratings yet

- Effects of Music and Color On MemoryDocument17 pagesEffects of Music and Color On MemoryTerence TitusNo ratings yet

- Intro To PsycholinguisticsDocument33 pagesIntro To Psycholinguisticsahmed4dodiNo ratings yet

- Boston Diagnostic Aphasia ExaminationDocument2 pagesBoston Diagnostic Aphasia ExaminationSami Ullah Khan NiaziNo ratings yet

- Remote Memory and The Hippocampus: A Constructive Critique: OpinionDocument15 pagesRemote Memory and The Hippocampus: A Constructive Critique: OpinionJortegloriaNo ratings yet

- NB Positive Student ProfileDocument6 pagesNB Positive Student Profileapi-287749588No ratings yet

- Burger PPT CH 15Document30 pagesBurger PPT CH 15Necelle YuretaNo ratings yet

- I.FOI All TasksDocument21 pagesI.FOI All TasksPuckyOne100% (1)

- Consumer Behavior Study Material For MBADocument71 pagesConsumer Behavior Study Material For MBAgirikiruba82% (38)

- Algorithms to Live By: The Computer Science of Human DecisionsFrom EverandAlgorithms to Live By: The Computer Science of Human DecisionsRating: 4.5 out of 5 stars4.5/5 (722)

- Defensive Cyber Mastery: Expert Strategies for Unbeatable Personal and Business SecurityFrom EverandDefensive Cyber Mastery: Expert Strategies for Unbeatable Personal and Business SecurityRating: 5 out of 5 stars5/5 (1)