
Comité Interorganismos de Ductos de Petróleos Mexicanos

Congreso Internacional de Ductos

Mérida, Yucatán, November 14-16, 2001

Paper ID: OAM-18

STATISTICAL CONTROL OF THE MEASUREMENT PROCESS

Thomas Kegel

Colorado Engineering Experiment Station, Inc.

Nunn, Colorado, USA

www.ceesi.com

(970)897-2711

ABSTRACT

This paper proposes a non-traditional paradigm that

considers measurement to be a process. In a manufacturing

process, it is recognized that many small effects can contribute

to random variance in the process output. These effects arise

from an operator, a procedure, the environment, and raw

materials as well as the machinery itself. The economic

equilibrium between the cost of identifying the source of an

effect and the benefit realized from reducing the impact

inevitably results in some level of random variance. The

manufacturing community has developed a statistical tool,

statistical process control (SPC), to monitor the consistency of

the random variance. The application of SPC allows a

manufacturer to confirm that a process is operating "in control"

and therefore producing consistent products. From a statistical

point of view, the measurement and manufacturing processes

are very similar. The result of a measurement will exhibit

random variance due to the effects of operators, procedures,

and the environment as well as the instrument itself. The SPC

algorithms are therefore equally applicable to the measurement

process. This paper describes by example the application of

statistical process control to several calibration processes. In

addition to monitoring consistency, the results are used to

provide estimated uncertainty values associated with the

measurement result.

INTRODUCTION

As technology continues to play an increasing role in

today's energy business, the demand for supporting

measurements also increases. In addition, competitive pressures

to continuously improve processes further expand the role of

measurement. These economic forces highlight the importance

of understanding measurement science and the implications of

decisions made based on measurement results. This

understanding is even more critical given the increasing value

of the commodity being measured. In the manufacturing

industries the correlations between measurement, statistics and

process improvement have been well established for many

years [1,2]. The automotive industry in particular [3] has realized

benefits in productivity and quality as a result of improved

measurement science. More recently there has been a

realization that the same statistical techniques that have been

developed for manufacturing are applicable to measurement

science [4,5]. The underlying statistics are incapable of

differentiating between the geometry of a manufactured part

and the result of a measurement process.

One of the more important tools developed by the

manufacturing community is Statistical Process Control. It is

usually presented in the form of a control chart designed to

interpret the underlying statistics in a simplified form. The

simplification allows for reliable decision making by

practitioners without extensive training in statistics. This paper

provides an introduction to the application of statistical analysis

to measurement science.

THE MEASUREMENT PROCESS

When a measurement is made there is a tendency to

consider only the performance of the measuring instrument

itself. When stating the uncertainty associated with a

measurement, the instrument manufacturer's specifications are

often given as the only source of uncertainty. In fact, the

measurement is a process that includes the instrument as only

one of several components. Other components that potentially

contribute uncertainty to the process output include: an

operator, operating procedures, instrument maintenance and the

environment. Each of these components contributes potentially

small random effects that when combined result in a larger

random effect associated with the measurement process output.

The sources of the small random effects are generally unknown

and potentially costly to identify. In fact, as long as the process

is capable of making the required measurement, there is no

need to identify the sources of random variation. It is only

required that the process be monitored to ensure that the

random effects are consistent over time.

For the practitioner, the brief discussion above is

summarized in two important questions to be asked regarding a

measurement process. First, is the uncertainty appropriate for

the application? Second, are measurements consistent over

time? It has been proposed [4] that if the answer to either of these

questions is "no" then the process is not capable of making a

measurement. This paper presents statistical tools to allow the

practitioner to answer these questions and ensure valid

measurement.

A simple example has been formulated to demonstrate the

statistical principles. The subject is a fictitious pressure

transducer that is used over a 100-1000 psi range. The output is

a voltage that varies over the 0-5 volt range; the nominal

sensitivity is therefore 200 psi/volt. The transducer is calibrated

every 90 days; a history has been developed based on 16

calibrations made between January 1999 and December 2002.

Each calibration involves determining the transducer sensitivity

based on values calculated from pressure and voltage standards.

Sensitivity data are obtained at ten pressure levels equally

spaced over the input range.
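
The setup of this example can be written down in a few lines. This is only a minimal sketch: the names `nominal_sensitivity`, `pressure_levels` and `sensitivity` are illustrative and do not come from the paper, and the ten levels are assumed to run from 100 psi to 1000 psi in 100 psi steps.

```python
# Fictitious transducer from the example: 100-1000 psi range, 0-5 volt output.
P_MAX_PSI = 1000.0
V_MAX_VOLT = 5.0

# Nominal sensitivity: full-scale pressure divided by full-scale voltage.
nominal_sensitivity = P_MAX_PSI / V_MAX_VOLT

# Ten pressure levels equally spaced over the input range (assumed 100 psi steps).
pressure_levels = [100.0 + 100.0 * i for i in range(10)]

def sensitivity(pressure_psi, voltage_volt):
    """Sensitivity observed at one calibration point, in psi/volt."""
    return pressure_psi / voltage_volt

print(nominal_sensitivity)
print(pressure_levels)
```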

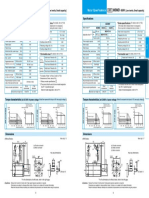

The calibration results from December 1999 are shown in

Figure 1. These data would appear to suggest a change in

sensitivity over the range of the transducer, the solid line

represents a proposed correlation. The magnitude of the change

in sensitivity is 1.9 [psi/v] from 100 psi to 1000 psi. Is this

significant? Is it typical of the calibration history, or a

characteristic of this particular calibration? Some decisions

need to be made based on these results. Does the change of

sensitivity affect measurements made with the transducer?

Should the transducer be adjusted? Figure 2 shows the

December 1999 data (closed circles connected by lines)

compared with the entire calibration history (open circles). The

data at each pressure level are distributed over a range of

sensitivity values, this is indicative of random effects. It

appears as if the 1.9 [psi/v] change in sensitivity lies within the

limits of these random effects. Are the data in Figure 1 the

result of a series of random effects rather than a trend?

STATISTICAL PROCESS CONTROL

The scenario described above does not provide the

practitioner with adequate basis on which to answer some

potentially important questions. The calibration data are

adequate but the proper tools are not available. The reliability

of the required decisions can be improved through the use of

Statistical Process Control (SPC).

The heart of SPC is the control chart. It assists the

practitioner to quickly evaluate the status of a calibration history

and confidently make the correct decision. What is a control

chart and how is it developed? This process is now described:

The ten sensitivity values that make up a calibration are used to

calculate a mean and standard deviation. These values, when

plotted against time make up the two types of control charts.

The x̄ chart contains the mean values while the standard

deviations are plotted in the s chart. Control charts for the

current example are shown in Figures 3 and 4. The symbols

represent the mean and standard deviation values of sensitivity

calculated for the 16 calibrations. The solid lines represent

control limits; they are calculated from values of accumulated

standard deviation [4]. The measurement process is considered to

be in a "state of statistical control" if a data point lies between

the control limits of the x̄ chart and below the single control

limit of the s chart. Only one limit is included in the s chart

because the values for standard deviation are always greater

than zero. The control limits in the charts are not fixed because

they are updated as calibration data are accumulated. The term

"statistical control" means that the measurement process is

operating in a consistent manner. The control limits quantify

the level of consistency. If a process is operating consistently,

and nothing is done to change that process, then there is a

"level of confidence" that the consistent performance will

continue into the future. An "out of control" condition observed

in a control chart is an indication of a problem with the

measurement process that needs to be investigated. The great

value in the use of control charts is in separating potentially

serious measurement problems from naturally occurring

random variations. In the present example, the control charts

indicate that the apparent trend in Figure 1 is nothing more than

random effects and does not warrant adjusting the transducer.
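
The chart construction described above can be sketched as follows. This is a simplified illustration, not the method of the cited reference: the factors `k_mean` and `k_sd` are fixed placeholder values chosen for the sketch, whereas the limits in the paper are derived from accumulated standard deviations at a stated level of confidence. All function names and data values are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev

def chart_points(calibrations):
    """(mean, standard deviation) of each calibration; one point per chart."""
    return [(mean(c), stdev(c)) for c in calibrations]

def control_limits(calibrations, k_mean=2.0, k_sd=1.37):
    """Control limits from the calibrations accumulated so far.

    Assumption: k_mean and k_sd are fixed placeholder factors, not the
    confidence-based factors of the method cited in the text.
    """
    points = chart_points(calibrations)
    means = [m for m, _ in points]
    center = mean(means)
    s_between = stdev(means)  # spread of the calibration means
    # Pooled within-calibration value (equal calibration sizes assumed).
    s_within = sqrt(mean([s * s for _, s in points]))
    return {
        "mean_lower": center - k_mean * s_between,
        "mean_upper": center + k_mean * s_between,
        "sd_upper": k_sd * s_within,
    }

def in_control(calibration, limits):
    """True if the new calibration falls inside both sets of limits."""
    m, s = mean(calibration), stdev(calibration)
    return limits["mean_lower"] <= m <= limits["mean_upper"] and s <= limits["sd_upper"]

# Hypothetical sensitivities in psi/volt (four points per calibration for brevity).
history = [
    [199.2, 200.5, 201.1, 199.8],
    [200.9, 199.6, 201.4, 200.5],
    [199.3, 200.5, 199.6, 200.0],
]
limits = control_limits(history)
print(in_control([200.1, 199.5, 200.6, 200.2], limits))
print(in_control([205.0, 205.2, 204.9, 205.1], limits))
```

Recomputing `control_limits` each time a calibration is appended to `history` mirrors the statement that the limits are updated as data accumulate.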

The term "level of confidence" appears in the discussion

above. It accounts for the fact that statistical analyses never

produce absolutely conclusive results. Conclusions are always

stated with some level of confidence. The particular level

depends on the application; standard practice in measurement

science is a 95% level of confidence. The shape of the control

limits of Figure 3 is a graphical example of the confidence

interval concept. The x̄ chart shows a steady decrease in the

control limit interval width, from 2.46 [psi/v] in

April 1999 to 0.67 [psi/v] in December 2002. As data are

accumulated the level of confidence associated with an "in

control" conclusion increases. This increase in confidence

results in the control limits moving closer together. The s chart

would show a similar trend except that the standard deviation

value for the first data point is small enough (relative to

subsequent data) to offset the low initial level of confidence.
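
One way to see why limits tighten as data accumulate is to look at how a 95% coverage factor shrinks with the number of degrees of freedom. The sketch below assumes a Student t factor; the paper does not state the exact formula behind its limits, so this is an illustration of the confidence interval concept only. The table holds standard two-sided 95% t values, and `limit_half_width` is a hypothetical helper.

```python
# Two-sided 95% Student t factors for selected degrees of freedom.
T_95 = {1: 12.706, 2: 4.303, 3: 3.182, 5: 2.571, 10: 2.228, 15: 2.131}

def limit_half_width(s, dof):
    """Half-width of a 95% interval for standard deviation s with dof degrees of freedom."""
    return T_95[dof] * s

s = 1.0  # unit standard deviation, so the output shows the factor alone
for dof in sorted(T_95):
    print(dof, limit_half_width(s, dof))
```

With the standard deviation held constant, the half-width falls quickly over the first few degrees of freedom and then levels off near 2.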

In an industrial SPC application the control charts are

maintained based on samples of the manufacturing process. In a

measurement process application periodic samples of the

measurement are made, these samples are called calibrations.

While the control charts are maintained based on calibration

data (samples), the relevant application is what takes place

between the calibrations. The validity of the industrial control

charts is dependent on how well the sample represents an entire

batch of the product. Similarly, the validity of the measurement

process control charts depends on the degree of measurement

and calibration process similarity.

ESTIMATING UNCERTAINTY

Two values of standard deviation, with different

interpretation, are calculated from the calibration data to form

the control limits. A pooled value of calibration standard

deviations, called s_w for "within", defines the control limit for

the s chart (Figure 4). The term "pooled" refers to a process for

combining multiple standard deviation values. The pooled

value represents the process variation observed in the time

required to perform a ten point calibration. The second standard

deviation, calculated from the mean values, defines the control

limits of the x̄ chart (Figure 3). This standard deviation, called

s_b for "between", represents random effects that are only

observed over long periods of time. The duration of a "long

period of time" depends on the application; in the present

example the duration is four years.

The reported standard deviation, s_r, accounts for both short

term and long term random effects. The measurement

uncertainty will be underestimated if both effects are not

included. The value for s_r is calculated from values of s_w and s_b

combined in quadrature (root sum square):

$$ s_r = \sqrt{s_b^2 + s_w^2} = \sqrt{0.32^2 + 1.03^2} = 1.08 \ \text{[psi/v]} $$

The interpretation of s_r is as follows: all of the random

effects associated with the measurement process amount to

(2 × 1.08) = 2.16 [psi/v] with a confidence level of 95%. The

factor of 2 means that two standard deviation values are

required to achieve the 95% level of confidence. The s_r value

can be confirmed by inspection of Figure 2; 95% of those data

points fall within an interval with width equal to 2.16 [psi/v].
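
The three standard deviations discussed in this section can be computed as in the sketch below. The pooling formula (weighting each variance by its degrees of freedom) is the usual textbook definition and is assumed here, since the paper does not spell it out; the function names are illustrative. The final lines combine the two standard deviations quoted in the text.

```python
from math import sqrt
from statistics import mean, stdev

def pooled_std(calibrations):
    """Within-calibration standard deviation s_w, pooled by degrees of freedom."""
    num = sum((len(c) - 1) * stdev(c) ** 2 for c in calibrations)
    den = sum(len(c) - 1 for c in calibrations)
    return sqrt(num / den)

def between_std(calibrations):
    """Between-calibration standard deviation s_b, from the calibration means."""
    return stdev([mean(c) for c in calibrations])

def reported_std(s_b, s_w):
    """Combine s_b and s_w in quadrature (root sum square)."""
    return sqrt(s_b ** 2 + s_w ** 2)

# The two standard deviations quoted in the text, in psi/volt.
s_r = round(reported_std(0.32, 1.03), 2)
print(s_r)                # 1.08
print(round(2 * s_r, 2))  # 2.16, two standard deviations for 95% confidence
```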

While invaluable in the determination of uncertainty, the

control charts cannot identify the systematic effects that

contribute uncertainty. What is a systematic effect? A random

effect will contribute to observed changes in a repeated

measurement while a systematic effect will not change through

repetition of the same measurement. A typical systematic effect

present in the current example would be the uncertainty

associated with the standard used to calibrate the transducer.

When using control chart data in an uncertainty analysis,

uncertainty estimates attributed to relevant systematic effects

must be determined by other methods.

PREDICTING THE FUTURE

When an instrument is calibrated, the purpose of the

calibration is to predict the future. While the present calibration

of an instrument can be used to re-evaluate events in the past,

the most common application of the result is in future events.

The most recent calibration results for the fictitious pressure

transducer are used for the following 90 days. While

predicting the future with absolute certainty cannot be

accomplished, statistical tools can be applied to estimate the

uncertainty in the prediction.

The mean values for the first four calibrations are

contained in Figure 5. A simple linear fit is made of mean

values and calibration date (the date is expressed as the number

of days since January 1, 1900). The solid lines represent the

prediction interval, the interval within which a future

calibration can be predicted to fall [6]. The interval width

increases over time, from 1.7 [psi/v] in September 1999 to

8.4 [psi/v] in December 2002. The shape of the prediction

interval is the result of the range of slope and intercept values

describing lines that could be fitted to the four calibration

points. Stated a different way: when a line is fitted to data there

is uncertainty associated with both slope and intercept; this

results in the increase in interval width when the data are

extrapolated. The prediction interval is fundamentally different

from the control limits despite similar appearances. While new

control limit values are calculated for each calibration the

values for past calibrations remain the same. The prediction

interval is recalculated for the entire calibration history when a

new data point is obtained. The important conclusion to be

drawn from Figure 5 is that uncertainty grows over time after a

calibration.
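
A prediction interval for a straight-line fit can be sketched with the standard simple linear regression formula, shown below. This is assumed to be the kind of interval plotted in Figure 5; the paper cites a reference rather than giving the formula. The data values are hypothetical, time is counted in days from the first calibration (a shift of origin does not change the interval), and the table holds standard two-sided 95% t values.

```python
from math import sqrt

# Two-sided 95% Student t factors, indexed by degrees of freedom (n - 2).
T_95 = {2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571, 6: 2.447, 7: 2.365, 8: 2.306}

def prediction_interval(x, y, x0):
    """95% prediction interval (lower, upper) at x0 for a straight-line fit."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    # Residual standard deviation with n - 2 degrees of freedom.
    sse = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    s = sqrt(sse / (n - 2))
    half = T_95[n - 2] * s * sqrt(1 + 1 / n + (x0 - x_bar) ** 2 / sxx)
    fit = intercept + slope * x0
    return fit - half, fit + half

# Hypothetical mean sensitivities from four calibrations, 90 days apart.
days = [0, 90, 180, 270]
means = [200.4, 200.1, 200.3, 199.8]
for x0 in (360, 720, 1440):
    lower, upper = prediction_interval(days, means, x0)
    print(x0, round(upper - lower, 2))
```

The term `(x0 - x_bar) ** 2 / sxx` is what makes the interval widen as the prediction moves further from the calibration history.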

Figure 6 shows the prediction intervals resulting from six

(dashed line) and ten (solid line) data points. The interval width

decreases as additional data points are obtained. The December

2002 values are reduced from 8.4 [psi/v] to 1.2 [psi/v] as the

data points increase in number from four to ten. While useful as

a learning aid, predicting instrument performance several years

into the future is not a realistic application. A more practical

need would be to predict the uncertainty growth from one

calibration to the next. This information is contained in Figure

7. The abscissa is the number of accumulated calibrations. The

ordinate is the predicted increase in interval width from the last

calibration to the next. For example, if a history consists of

eight calibrations, the prediction interval width is expected to

increase by 6.5% by the time of the ninth calibration.
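
The growth in interval width from one calibration to the next can be estimated from the geometry of the fit alone. The sketch below assumes a straight-line fit to equally spaced calibrations and the standard prediction interval formula; under those assumptions the t factor and residual standard deviation cancel in the ratio. The function name is illustrative.

```python
from math import sqrt

def interval_growth_percent(n):
    """Percent increase in prediction interval width from calibration n to n + 1.

    Assumes a straight-line fit to n equally spaced calibrations, so the
    t factor and residual standard deviation cancel in the ratio.
    """
    x = range(1, n + 1)
    x_bar = sum(x) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)

    def width_factor(x0):
        return sqrt(1 + 1 / n + (x0 - x_bar) ** 2 / sxx)

    return 100 * (width_factor(n + 1) / width_factor(n) - 1)

for n in (4, 8, 16):
    print(n, round(interval_growth_percent(n), 1))
```

For a history of eight calibrations this sketch gives about 6.5%, in line with the value quoted above.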

The linear fit determined from the first four data points

(Figure 5) predicts a change in sensitivity of approximately 1.0

[psi/v] per year. On the one hand, this behavior should prompt

an investigation of the transducer because it might indicate

a measurement problem. On the other hand, the trend could be

nothing more than random values appearing in a particular

order. The data of Figure 1 appeared to have indicated a trend

that wasn't present; the same thing may be happening with

these data. The results in Figure 6 indicate that the trend over

time is no longer predicted as more data are accumulated. The results

are summarized in the plot of Figure 7: as additional calibration

data are obtained the slope approaches a value of zero.
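
The behavior of the fitted slope can be illustrated with a short sketch. The history below is hypothetical: the values scatter about 200 psi/volt with no real trend, and the first four happen to fall in an order that suggests a downward drift. The function name `drift_per_year` is illustrative.

```python
def drift_per_year(days, means):
    """Least-squares slope of mean sensitivity against time, in psi/volt per year."""
    n = len(days)
    x_bar = sum(days) / n
    y_bar = sum(means) / n
    sxx = sum((d - x_bar) ** 2 for d in days)
    sxy = sum((d - x_bar) * (m - y_bar) for d, m in zip(days, means))
    return 365.25 * sxy / sxx

# Hypothetical history: eight calibrations, 90 days apart.
days = [90 * i for i in range(8)]
means = [200.4, 200.1, 200.3, 199.8, 200.2, 200.3, 199.9, 200.2]
for n in (4, 6, 8):
    print(n, round(drift_per_year(days[:n], means[:n]), 2))
```

In this made-up history the slope from the first four points is several times larger in magnitude than the slope from all eight, which is the same pattern the text describes.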

SPC APPLICATIONS

The concepts of SPC have been applied to a number of

applications in a flow calibration facility. The earliest

application involves pressure instrumentation that is maintained

in a regular calibration program [7,8]. Analyses have been

performed on both laboratory and industrial grade transducers.

The control charts are used to ensure measurement consistency

and estimate uncertainty.

A more recent application involves the use of flowmeter

check standards [9,10,11]. Several flowmeters have been

permanently installed in series with the test section of a

calibration facility. One of these meters is always calibrated at

the same time as the meters under test (MUT). This application

differs from the first in that the flowmeters are not used as

standards. Control charts from one of the meters are contained

in Figures 8 and 9. The abscissa values differ from those of

Figures 3 and 4. The flowmeter performance varies with the

input, the random effects increase as the flowrate decreases. In

order to compare performance at different flowrates, the data

are normalized.
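
The paper does not state how the normalization is done. One common choice, assumed here purely for illustration, is to express each result as a deviation from its expected value in units of the standard deviation that applies at that flowrate. The function name and the numbers below are hypothetical.

```python
def normalize(value, expected, s_at_flowrate):
    """Deviation from the expected value in units of the local standard deviation."""
    return (value - expected) / s_at_flowrate

# Hypothetical check meter readings at a high and a low flowrate.
# The low flowrate has larger random effects, so the same raw deviation
# counts for less once normalized.
print(normalize(1.002, 1.000, 0.001))
print(normalize(1.002, 1.000, 0.004))
```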

The control charts in Figures 8 and 9 are based on 3976

data points contained in 224 calibrations obtained during a six

month period of time. Calibration data obtained before and

after this time interval were based on different check meters

and are summarized in different control charts. The check

standard concept is very valuable because a meter with a well

established history is always subject to the same conditions as a

MUT. When the check meter indicates that the calibration

process is "in control" this means that the current calibration of

the MUT is consistent with the calibration history of the check

standard. The control chart data also form an important part of

the uncertainty estimate for the facility; the value of s_r is

assumed to account for all random effects present during the

MUT calibration. The ability to apply the estimated uncertainty

to the MUT is enhanced by the calibration history of the check

standard.

The occasional data points that exceed the control limits in

Figures 8 and 9 are not a problem because the limits are defined

based on a 95% level of confidence. At this level of confidence

5 points out of 100 can fall outside the limits and the process

will remain in control. In general, a decision based on one data

point in the middle of a group is relatively straightforward;

hindsight is always 20/20. For example, the control charts

clearly show that the data from mid September to mid October

are in control. A practitioner looking at the current control

charts in early September does not have this information. They

are faced with an "out of control" condition (OCC). What does

this mean? Is the current calibration one of the 5% that can be

OCC? Is the process truly out of control? There are a number

of recommended steps to react to an OCC: The first step is to

confirm OCC indication from both control charts, most of the

OCC cases in Figures 8 and 9 appear only on one chart. The

second step is to wait for a few calibrations and see if the OCC

indicator persists. Referring again to Figures 8 and 9, most of

the OCC indicators exist only for one or two data points. Third,

the manufacturing community has developed a variety of rules

to interpret control chart trends and patterns [1]. Finally, an

engineering investigation of the process should be undertaken

with the intent of localizing the source of the OCC.
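
The first two steps above lend themselves to a simple automated check, sketched below. The rule used here (an OCC flag on both charts for `run` consecutive calibrations) is a hypothetical way to combine those steps and is not taken from the paper; `expected_false_alarms` simply applies the 5% figure to a number of chart points.

```python
def expected_false_alarms(n_points, confidence=0.95):
    """Number of points expected outside the limits while the process is in control."""
    return n_points * (1 - confidence)

def persistent_occ(flags_mean, flags_sd, run=3):
    """True if OCC flags appear on both charts for `run` consecutive calibrations."""
    streak = 0
    for on_mean, on_sd in zip(flags_mean, flags_sd):
        streak = streak + 1 if (on_mean and on_sd) else 0
        if streak >= run:
            return True
    return False

# With 224 calibrations, roughly 11 points per chart may exceed the limits by chance.
print(round(expected_false_alarms(224), 1))

# Hypothetical flags: isolated indications that do not persist on both charts.
print(persistent_occ([False, True, False, True], [False, False, False, True]))
```

A result of `True` from `persistent_occ` would suggest moving on to the pattern rules and the engineering investigation.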

CONCLUSION

The application of traditional statistical analysis tools to

measurement science has been briefly described. This was

accomplished based on a simple example as well as some real

world data. Through the use of Statistical Process Control, the

practitioner has a tool to help answer the following questions:

1) Are the measurement process results consistent?

2) What is the uncertainty due to random effects?

3) How much uncertainty growth will occur prior to the

next calibration?

REFERENCES

1. Juran, J. M., Quality Control Handbook, McGraw-Hill, 1951.

2. Bothe, David R., Measuring Process Capability, McGraw-Hill, 1997.

3. Automotive Industry Action Group, Measuring System Analysis Reference Manual, Southfield, MI, 1990.

4. Croarkin, Carroll, Measurement Assurance Programs, Part 2: Development and Implementation, NBS Special Publication 676-2, 1985.

5. Castrup, H. T., et al., Metrology-Calibration and Measurement Process Guidelines, NASA Reference Publication 1342, 1994.

6. Hahn, G. J. and Meeker, W. Q., Statistical Intervals, A Guide for Practitioners, John Wiley, 1991.

7. Kegel, T. M., "Statistical Control of a Pressure Measurement Process," Transactions of the ISA: Journal of the Instrument Society of America, Vol. 35, pp. 69-77, 1996.

8. Kegel, Thomas, "Statistical Control of a Differential Pressure Instrument Calibration Process," 45th International Instrumentation Symposium, Albuquerque, New Mexico, May 2-6, 1999.

9. Kegel, Thomas, "Uncertainty Issues Associated With a Very Large Capacity Flow Calibration Facility," Measurement Science Conference, Anaheim, California, January 20-21, 2000.

10. Kegel, T. M., "Statistical Control of a Flowmeter Calibration Process," 46th International Instrumentation Symposium, Bellevue, WA, April 30-May 5, 2000.

11. Kegel, T. M., "Long Term Ultrasonic Meter Performance Data," AGA Operations Conference, May 2001.

Figure 1: December 1999 Calibration Data (Sensitivity [psi/volt] versus Applied Pressure [psia])

Figure 2: Entire Calibration History, December 1999 data shown in black (Sensitivity [psi/volt] versus Applied Pressure [psia])

Figure 3: Control Chart of Mean Data (x̄ chart) (Mean [psi/v] versus Date)

Figure 4: Control Chart of Standard Deviation Data (s chart) (Standard Deviation [psi/v] versus Date)

Figure 5: Prediction Interval Based on Four Calibrations (Mean [psi/v] versus Date)

Figure 6: Prediction Intervals Based on Six and Ten Calibrations (Mean [psi/v] versus Calibration Date)

Figure 7: Uncertainty Growth and Linear Drift (Uncertainty Growth [%] and Linear Drift [psi/v/yr] versus Calibrations)

Figure 8: Control Chart for Standard Deviation Data from a Flowmeter Check Standard (Standard Deviation [s] versus Date)

Figure 9: Control Chart for Mean Data from a Flowmeter Check Standard (Mean [s] versus Date)

You might also like

- SQCDocument36 pagesSQCMandeep SinghNo ratings yet

- Process Capability and Statistical Process ControlDocument8 pagesProcess Capability and Statistical Process ControlDeepakh ArunNo ratings yet

- Washing MachinesDocument6 pagesWashing MachinesAnonymous wK36hLNo ratings yet

- Statistical Quality ControlDocument47 pagesStatistical Quality ControlShreya TewatiaNo ratings yet

- Ahsmrw30dam SD101Document48 pagesAhsmrw30dam SD101ibrahimNo ratings yet

- Pharma MarketingDocument55 pagesPharma MarketingArpan KoradiyaNo ratings yet

- Statistical Quality ControlDocument23 pagesStatistical Quality Controljoan dueroNo ratings yet

- Husqvarna 2008Document470 pagesHusqvarna 2008klukasinteria100% (2)

- Statistical Process Control (SPC) TutorialDocument10 pagesStatistical Process Control (SPC) TutorialRob WillestoneNo ratings yet

- Unit VDocument130 pagesUnit VJoJa JoJaNo ratings yet

- Statistical Quality ControlDocument91 pagesStatistical Quality ControlJabir Aghadi100% (3)

- Reciprocating Compressor Discharge TemperatureDocument6 pagesReciprocating Compressor Discharge TemperaturesalleyNo ratings yet

- Ignitability and Explosibility of Gases and VaporsDocument230 pagesIgnitability and Explosibility of Gases and VaporsKonstantinKot100% (3)

- Measurement System AnalysisDocument5 pagesMeasurement System AnalysisRahul BenkeNo ratings yet

- Iso 3951Document13 pagesIso 3951ziauddin bukhariNo ratings yet

- Application of Multivariate Control Chart For Improvement in Quality of Hotmetal - A Case StudyDocument18 pagesApplication of Multivariate Control Chart For Improvement in Quality of Hotmetal - A Case StudyMohamed HamdyNo ratings yet

- Data Reconciliation: Department of Chemical Engineering, University of Liège, BelgiumDocument17 pagesData Reconciliation: Department of Chemical Engineering, University of Liège, BelgiumDemaropz DenzNo ratings yet

- Udhe 2.standardsDocument1 pageUdhe 2.standardsom dhamnikarNo ratings yet

- Measurement System AnalysisDocument7 pagesMeasurement System AnalysisselvamNo ratings yet

- 10 - Statistical Quality ControlDocument63 pages10 - Statistical Quality ControlSandeep Singh100% (4)

- Firewall Geometric Design-SaiTejaDocument9 pagesFirewall Geometric Design-SaiTejanaveenNo ratings yet

- C ChartDocument10 pagesC ChartManohar MemrNo ratings yet

- SPC Quality OneDocument9 pagesSPC Quality OneElanthendral GNo ratings yet

- Statistical Quality ControlDocument6 pagesStatistical Quality ControlAtish JitekarNo ratings yet

- Core Tools: Measurement Systems Analysis (MSA)Document6 pagesCore Tools: Measurement Systems Analysis (MSA)Salvador Hernandez ColoradoNo ratings yet

- Control Charts in QC in ConstructionDocument11 pagesControl Charts in QC in ConstructionGlenn Paul GalanoNo ratings yet

- Quality Engineering and Management System Accounting EssayDocument12 pagesQuality Engineering and Management System Accounting EssayvasudhaaaaaNo ratings yet

- Implementation of SPC Techniques in Automotive Industry: A Case StudyDocument15 pagesImplementation of SPC Techniques in Automotive Industry: A Case StudysushmaxNo ratings yet

- Reading Assignment OperationsDocument6 pagesReading Assignment OperationsAhmed NasrNo ratings yet

- Analysis of Error in Measurement SystemDocument8 pagesAnalysis of Error in Measurement SystemHimanshuKalbandheNo ratings yet

- Lecture 9Document12 pagesLecture 9Sherif SaidNo ratings yet

- Statistical Process ControlDocument8 pagesStatistical Process ControlSaurabh MishraNo ratings yet

- Statistical Process Control SPCDocument3 pagesStatistical Process Control SPCtintucinNo ratings yet

- HE DEA James O. Westgard, PH.D.: Need For QCDocument6 pagesHE DEA James O. Westgard, PH.D.: Need For QCFelipe ContrerasNo ratings yet

- Manufacturing Technology AssignmentDocument15 pagesManufacturing Technology AssignmentDan Kiama MuriithiNo ratings yet
