THE SHAZAM MUSIC RECOGNITION SERVICE

Guided by a user's query-by-example music sample, it delivers the matching song, as well as related music information, of immediate interest to the user.

By Avery Wang

44 August 2006/Vol. 49, No. 8 COMMUNICATIONS OF THE ACM

People are routinely exposed to music in everyday environments (car, home, restaurant, movie theater, shopping mall) but are frustrated by not being able to learn more about what they hear. They may, for instance, be interested in a particular piece of music and want to know its title and the name of the artist who created it. They may want to buy a digital download of the song or a ringtone. To address these limitations, my colleagues and I at Shazam Entertainment have developed a query-by-example (QBE) music search service that enables users to learn the identity of audible prerecorded music by sampling a few seconds of audio using a mobile phone as a recording device.

Others have also sought to deliver music

identification. For example, in 1999 an early

pioneer, StarCD, introduced a service

enabling users with mobile phones to iden-

tify songs playing on certain radio stations

[1]. Identification was accomplished by

users calling into the StarCD service while

an unknown song was playing. They would

then enter the radio station call letters onto

the phone's keypad. StarCD would automatically consult a third-party playlist database provider to determine what song was

playing on the specified radio station at the

time of the call. Learning the identity of the

music, users would be given the opportunity

to buy the CD. The StarCD system was

thus limited to songs playing on radio sta-

tions being monitored by the third-

party playlist provider.

QBE is a more flexible music-

recognition modality than the one

provided by StarCD, recognizing

music from a sample recording rather

than requiring the user to key in radio

station information. QBE music

recognition research has been pursued

by a number of groups worldwide.

For example, in the early 1980s Broadcast Data Systems (www.bdsonline.com/) was an early pioneer, using

a variety of methods to correlate wave-

forms [4]. In 1996 Musclefish

(www.musclefish.com/) developed a

method based on multidimensional

feature analysis and Euclidean dis-

tance metrics [6]. However, these methods

were all best suited for fairly clean audio sam-

ples and do not work well in the presence of

significant noise and distortion.

When we founded Shazam Entertain-

ment in London in 2000, we aimed to

develop a commercial QBE music recogni-

tion service that could be offered through

mobile phones [5]. A user would capture

audio samples by calling the service through

a short dial code; after sampling, the server

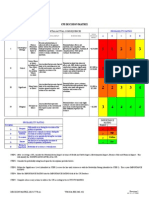

Shazam interface: (a) recording a 10-second audio

sample; (b) displaying the query results.

(a)

(b)

46 August 2006/Vol. 49, No. 8 COMMUNICATIONS OF THE ACM

would hang up and return the identification results

via an SMS text message. In 2004, we introduced a

newer version called Song Identity, providing a more

interactive interface, available first through Verizon

Wireless in the U.S. Since then, we have ported the

application to a variety of handset platforms.

Before using Song Identity, users first download and install its applet into their handsets. Upon hearing music they wish to identify, they launch the applet, which records about 10 seconds of ambient audio and either sends an audio file to Shazam or performs feature extraction on the phone to generate a small signature file. This signature file is then sent to a central server that performs a search and provides the matching metadata (title, artist, album) back to the applet (see the figure here). Users are then presented with the title, artist, and album information, as well as the option of downloading the corresponding ringtone based on the music, if a matching one is available. Future versions on some platforms may also allow the purchase of full-track downloads, CDs, concert tickets, lyrics, and other follow-on data or activities associated with the music.

Commercial QBE music recognition systems,

including Song Identity, must overcome a number of

daunting technical challenges:

Noise. Noise competes with the target music in

some environments; for example, music is generally

in the background in noisy cafés and shopping malls.

Being in a car adds the complication of traffic or

engine noise. Noise power may indeed be signifi-

cantly greater than signal power, so a recognition

algorithm must be robust enough to deal with signif-

icant noise.

Distortion. The system must be able to deal with

distortion arising from a variety of sources, including

imperfect playback or sampling equipment, as well as

environmental factors (such as reverberation and

absorption). Sampling through telephony equipment

reduces the frequency response to about 300Hz to 3,400Hz. Distortion may also arise from the

audio sample being subject to low bit-rate voice com-

pression in the mobile phone. Nonlinear noise sup-

pression and voice-quality-enhancement algorithms

built into the handset or into the mobile carrier's network may represent a further challenge in which the

sampling of background music results in recordings

that contain mostly silence.

Database management. The system must be able

to index fingerprints of millions of songs in its

online database without requiring an inordinate

number of servers. Thus the fingerprint, or unique

feature representation, of each song must be reasonably small, on the order of only a few kilobytes. Moreover, scaling to millions of songs must not incur a significant processing load on the backend

search engine, as the system may need to dispatch

hundreds or thousands of queries per second. The

system must also scale statistically, meaning the addi-

tion of many millions of songs into the database must

not significantly decrease the probability of finding a

correct match or significantly increase the probability

of reporting a false positive.

We were dismayed by the early audio samples we

collected through mobile phones; the music was often

so distorted we could barely recognize the presence of

music with our own ears. We were often hard-pressed

to match an audio sample over the phone against its

known master recording, let alone scale it to millions


of recordings. We were even at risk of having to aban-

don our efforts. Fortunately, in 2000 after three

months of work, we arrived at a solution involving

temporally aligned combinatorial hashing, generally

overcoming these challenges.

Another challenge we managed to address is more

logistical than technological: How to cost-effectively

compile a database of millions of songs. Shazam has

been purchasing music assets, as well as extracting fin-

gerprints from content partners with large catalogs of

music. Confronting these constraints actually helped

simplify development of our music recognition algo-

rithm. We were forced to disregard approaches that

could not scale to large numbers of recordings, espe-

cially in the presence of more noise than signal. This

thinking produced a number of insights [5]; first

among them, we would have to find robust features

that could be reproduced in the presence of significant

noise and distortion. We considered a number of candidate features (such as power envelopes and mel-frequency cepstral coefficients), but most weren't robust enough for our needs.

We needed features that could be linearly super-

posed (transparently) and recovered in the presence of

noise. We thus turned to spectrogram peaks [1],

which provide a map of energy distribution in terms

of time and frequency (see footnote 1). The locations of the peaks, though the result of nonlinear processing, are substantially linearly superposable; that is, a spectrogram peak analysis of a mixture of music and noise contains the spectral peaks that would be found (due to the music and the noise) if each were analyzed separately. The presence of corresponding spectrogram peaks in a noisy versus noiseless

music signal makes it possible to determine with high

probability whether an audio sample matches a

recording in the database.
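The peak criterion and the superposition property can be illustrated with a small sketch. This is not Shazam's implementation: the function name `find_peaks`, the neighbourhood radii, and the toy power grids are all assumptions chosen for illustration, and the grids stand in for the time-frequency power levels an STFT would produce.

```python
from typing import List, Tuple


def find_peaks(power: List[List[float]],
               t_radius: int = 1,
               f_radius: int = 1) -> List[Tuple[int, int]]:
    """Return (time, freq) indices of bins that are strictly greater than
    every other bin in a bounded region around them.
    The radii are illustrative assumptions, not values from the article."""
    n_t = len(power)
    n_f = len(power[0]) if power else 0
    peaks = []
    for t in range(n_t):
        for f in range(n_f):
            centre = power[t][f]
            is_peak = True
            for tt in range(max(0, t - t_radius), min(n_t, t + t_radius + 1)):
                for ff in range(max(0, f - f_radius), min(n_f, f + f_radius + 1)):
                    if (tt, ff) != (t, f) and power[tt][ff] >= centre:
                        is_peak = False
                        break
                if not is_peak:
                    break
            if is_peak:
                peaks.append((t, f))
    return peaks


# Toy "music" and "noise" power grids (rows = time frames, columns = frequency bins).
music = [[1, 1, 1, 1],
         [1, 9, 1, 1],
         [1, 1, 1, 1],
         [1, 1, 1, 8]]
noise = [[0, 0, 0, 5],
         [0, 0, 0, 0],
         [0, 0, 0, 0],
         [6, 0, 0, 0]]
# Crude model of a mixture: element-wise sum of the two grids.
mixture = [[m + n for m, n in zip(mr, nr)] for mr, nr in zip(music, noise)]

music_peaks = set(find_peaks(music))
mixture_peaks = set(find_peaks(mixture))
# In this toy case the music peaks survive in the mixture alongside the noise peaks.
print(music_peaks <= mixture_peaks)
```

In real recordings strong noise can mask some music peaks, which is why the article describes the peaks as only "substantially" superposable.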

Though robust, sets of individual spectrogram

peaks used as fingerprint features provide insufficient

entropy (the number of unique features is too small)

to allow for an efficient search, especially in a very

large database. In order to increase the entropy while

maintaining transparency, we hit upon a scheme we

call combinatorial hashing in which we construct

fingerprint hash tokens using pairs of spectrogram

peaks chosen from the set of all spectrogram peaks

present in the signal being analyzed. In the fingerprint

formation process, we use a subset of spectrogram

peaks as anchor points, each with a target zone defined by a range of time and frequency values offset from the anchor point's coordinates. Each anchor point is paired to a number of target points in the target zone. The frequency information and relative time offset from each pair of points are used to create a 32-bit fingerprint hash token.
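A minimal sketch of the pairing step follows. The article states only that each token is 32 bits built from the two frequencies and the relative time offset; the bit allocation (10 + 10 + 12), the target-zone limits, the fan-out, and the names `pack_hash` and `fingerprint` are assumptions made for illustration.

```python
from typing import List, Tuple

Peak = Tuple[int, int]  # (time_frame, freq_bin)

FREQ_BITS = 10  # assumed: frequency bins fit in 10 bits (0..1023)
DT_BITS = 12    # assumed: time offset fits in 12 bits (0..4095)


def pack_hash(f_anchor: int, f_target: int, dt: int) -> int:
    """Pack two frequency bins and a time offset into one 32-bit integer."""
    if not (0 <= f_anchor < 1 << FREQ_BITS and 0 <= f_target < 1 << FREQ_BITS):
        raise ValueError("frequency bin out of range")
    if not 0 <= dt < 1 << DT_BITS:
        raise ValueError("time offset out of range")
    return (f_anchor << (FREQ_BITS + DT_BITS)) | (f_target << DT_BITS) | dt


def fingerprint(peaks: List[Peak], fan_out: int = 3,
                max_dt: int = 64, max_df: int = 32) -> List[Tuple[int, int]]:
    """Pair each anchor with up to `fan_out` later peaks inside its target zone.
    Returns (hash, anchor_time) tuples; the anchor time is kept alongside the
    hash so that matches can be aligned in time later."""
    ordered = sorted(peaks)
    tokens = []
    for i, (t1, f1) in enumerate(ordered):
        paired = 0
        for t2, f2 in ordered[i + 1:]:
            dt = t2 - t1
            if dt > max_dt:
                break  # peaks are time-sorted, so nothing later can qualify
            if dt < 1 or abs(f2 - f1) > max_df:
                continue  # outside the (assumed) target zone
            tokens.append((pack_hash(f1, f2, dt), t1))
            paired += 1
            if paired == fan_out:
                break
    return tokens


peaks = [(0, 100), (2, 110), (3, 400), (5, 105)]
tokens = fingerprint(peaks)
print(len(tokens))
```

Because each hash encodes only the time difference between the two peaks, not their absolute times, the same hashes are produced wherever in the recording the sample starts.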

This combinatorial expansion results in perhaps a

ten-fold increase in the number of tokens searched in

the database over the original number of spectrogram

peaks. However, the increased entropy in the hash

tokens helps accelerate the index search by a factor of

more than a million, resulting in significant speed

improvement when identifying a particular song. This

speedup is due to the fact that more descriptive bits of

information allow for cutting more efficiently

through ambiguous clutter.
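As a rough worked example of why the extra bits help (an illustration under assumptions not stated in the article): suppose hash values are uniformly distributed, the index holds $N$ tokens, a single peak carries about 10 bits of frequency information, and a pair token carries 32 bits. The ratio of expected chance hits per index lookup is then

```latex
\[
\frac{N / 2^{10}}{N / 2^{32}} \;=\; 2^{22} \;\approx\; 4.2 \times 10^{6}
\]
```

so, under these assumptions, each lookup returns millions of times fewer spurious candidates, which more than offsets a ten-fold increase in the number of tokens.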

Applied to a mixture of music and noise, this combinatorial hashing generates three classes of fingerprint tokens:

- Both spectrogram peaks belong to the target signal;
- One peak belongs to the target signal and one to a noise signal; and
- Both peaks belong to noise.

Only the tokens whose peaks both come from the target signal are important to the search process. In the kind of low signal-to-noise situation frequently

encountered in the Shazam applica-

tion, most of the tokens generated from the audio

sample are garbage. But the presence of even a small

percentage of good matching tokens is sufficient to

flag a statistically significant probability of finding the

correct song in a large database of songs.

We also found that the fingerprint features must be

aligned temporally; that is, if a set of features appears

in both the original recording in the database and in a

sample query, the relative positions of each feature

within each recording must be the same. Unlike

speech recognition, in which dynamic time warping

may be used to match up loosely corresponding fea-

tures with a time-varying and nondeterministic rate of

progression, the Shazam technique assumes the corre-

spondence is directly linear; that is, if you plot the rel-

ative times of occurrence of each token in a

time-versus-time scatterplot, a valid match should

have points that accumulate on a straight diagonal

line.

Such a line is quickly detected by searching for a

peak in a histogram of relative time differences. This

assumption concerning temporal alignment greatly

accelerates the matching process and strengthens the

acceptance/rejection criteria determining whether a

given fingerprint feature is valid, thus providing a

quick and effective way to filter out the large number of garbage tokens generated in the combinatorial hashing stage.

Footnote 1: An overlapping Short-Time Fourier Transform is calculated at regular intervals on the audio data, and a power level is calculated for each resulting time-frequency bin. A bin is a peak if its power level is greater than all the other bins in a bounded region around the bin.
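The histogram test for temporal alignment can be sketched as follows. This is an illustrative sketch, not the production algorithm: the index layout, the `min_votes` threshold, and the names `build_index` and `best_match` are assumptions. Tokens are (hash, time) pairs, and a true match shows up as many tokens sharing one value of track time minus query time.

```python
from collections import Counter, defaultdict


def build_index(tracks):
    """tracks: {track_id: [(hash, t_track), ...]} -> {hash: [(track_id, t_track), ...]}"""
    index = defaultdict(list)
    for track_id, tokens in tracks.items():
        for h, t in tokens:
            index[h].append((track_id, t))
    return index


def best_match(index, query_tokens, min_votes=3):
    """Return (track_id, offset, votes) for the strongest histogram peak,
    or None if no track reaches `min_votes` (an assumed threshold)."""
    histograms = defaultdict(Counter)
    for h, t_query in query_tokens:
        for track_id, t_track in index.get(h, ()):
            # Matching tokens from the true track share one constant offset.
            histograms[track_id][t_track - t_query] += 1
    best = None
    for track_id, hist in histograms.items():
        offset, votes = hist.most_common(1)[0]
        if votes >= min_votes and (best is None or votes > best[2]):
            best = (track_id, offset, votes)
    return best


tracks = {
    "A": [(1, 0), (2, 5), (3, 9), (4, 12), (5, 20)],
    "B": [(1, 3), (9, 4), (3, 30), (8, 2)],
}
index = build_index(tracks)
# Query sampled from track A starting at track time 5, plus garbage tokens.
query = [(2, 0), (3, 4), (4, 7), (1, 2), (77, 1), (9, 3)]
result = best_match(index, query)
print(result)
```

Garbage tokens may still hit the index by chance, but their offsets scatter across the histogram instead of piling up in one bin, so they rarely reach the vote threshold.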

We implemented the recognition algorithm in

C++ with certain speed-critical sections optimized in

assembly. The recognition server is implemented as a

cluster of a few dozen commodity off-the-shelf 64-bit

x86-based servers, each with 8GB of RAM and run-

ning an optimized Linux kernel.

As of June 2006, the Shazam database contained

more than three million tracks. Each incoming

request is received by a master process that then

broadcasts the query to a farm of slave processors,

each holding a piece of the database index in memory.

Each slave independently searches its chunk of the

universe of fingerprint tokens and reports its identifi-

cation results to the master. The master collects the

results and returns a report (concerning recognition)

to the remote client. For a discussion on performance

characteristics of the recognition algorithm, see [5].
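The broadcast-and-collect pattern described above can be sketched in a few lines. This is a single-machine illustration using threads, not the actual server code: the shard layout, the scoring by histogram votes, and the names `search_shard` and `master_search` are assumptions.

```python
from collections import Counter, defaultdict
from concurrent.futures import ThreadPoolExecutor


def search_shard(shard_index, query_tokens):
    """Worker: search one piece of the index.
    shard_index: {hash: [(track_id, t_track), ...]}
    Returns the best (votes, track_id, offset) in this shard, or None."""
    histograms = defaultdict(Counter)
    for h, t_query in query_tokens:
        for track_id, t_track in shard_index.get(h, ()):
            histograms[track_id][t_track - t_query] += 1
    candidates = []
    for track_id, hist in histograms.items():
        offset, votes = hist.most_common(1)[0]
        candidates.append((votes, track_id, offset))
    return max(candidates, default=None)


def master_search(shards, query_tokens):
    """Master: broadcast the query to every shard and keep the best report."""
    with ThreadPoolExecutor(max_workers=max(1, len(shards))) as pool:
        reports = list(pool.map(lambda s: search_shard(s, query_tokens), shards))
    return max((r for r in reports if r is not None), default=None)


shard_1 = {2: [("A", 5)], 3: [("A", 9)], 4: [("A", 12)]}
shard_2 = {3: [("B", 30)], 9: [("B", 4)]}
query = [(2, 0), (3, 4), (4, 7), (9, 3)]
answer = master_search([shard_1, shard_2], query)
print(answer)
```

Because each shard holds a disjoint part of the index, the workers never need to coordinate with each other; only the small per-shard reports travel back to the master.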

The Shazam music recognition service

(www.shazam.com) has been publicly available on

mobile phones in the U.K. since 2002. Since then, it

has expanded into more than 20 countries hosted

through a variety of local partners under various ser-

vice brands, including Verizon Wireless and Cingular

in the U.S. As of June 2006, nearly six million paying

customers worldwide had used the service.

Meanwhile, Philips Electronics demonstrated its

own robust hashing audio fingerprinting algorithm

in 2001. Like Shazam, it is capable of free-field audio

identification (QBE), though it forms hashes from

differential energy flux in a time-frequency grid [2].

This technology was acquired in 2005 by Gracenote,

a music database company in Emeryville, CA. Also in

2001, the Fraunhofer Institut in Erlangen, Germany

(www.iis.fraunhofer.de/), demonstrated a technique

based on spectral flatness [3].

QBE music recognition is a commercial reality. In

the next few years, as more carriers and phone manu-

facturers offer related services, QBE will likely

become part of the standard mobile phone feature set,

much as cameras are today. The cost per query

should drop, and more integration into follow-on

sales and discovery services should also be possible.

Other search modalities (such as query-by-humming

or -similarity) may also be added.

References

1. Bond, P. StarCD: A star is born nationally seeking stellar CD sales. Hollywood Reporter CCCLX, 13 (Nov. 1, 1999), 3.

2. Haitsma, J., Kalker, T., and Oostveen, J. Robust audio hashing for content identification. In Proceedings of the International Workshop on Content-based Multimedia Indexing (Brescia, Italy, Sept. 19-21, 2001).

3. Herre, J., Allamanche, E., and Helmuth, O. Robust matching of audio signals using spectral flatness features. In Proceedings of the 2001 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (Mohonk, NY, 2001), 127-130.

4. Kenyon, S., Simkins, L., Brown, L., and Sebastian, R. U.S. Patent 4,450,531: Broadcast Signal Recognition System and Method. U.S. Patent and Trademark Office, Washington, D.C.; www.uspto.gov.

5. Wang, A. An industrial-strength audio search algorithm. In Proceedings of the Fourth International Conference on Music Information Retrieval (Baltimore, Oct. 26-30, 2003); www.ismir.net.

6. Wold, E., Blum, T., Keislar, D., and Wheaton, J. Content-based classification, search, and retrieval of audio. IEEE Multimedia 3, 3 (Fall 1996), 27-36.

Avery Wang (avery@shazamteam.com) is the chief scientist of

Shazam Entertainment, Ltd., London, U.K.

Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. To copy otherwise, to republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee.

© 2006 ACM 0001-0782/06/0800 $5.00
