Optimal State-Feedback Control Under Sparsity and Delay Constraints

Andrew Lamperski¹, Laurent Lessard²

3rd IFAC Workshop on Distributed Estimation and Control in Networked Systems (NecSys), pp. 204–209, 2012

Abstract

This paper presents the solution to a general decentralized state-feedback problem, in which the plant and controller must satisfy the same combination of delay constraints and sparsity constraints. The control problem is decomposed into independent subproblems, which are solved by dynamic programming. In special cases with only sparsity or only delay constraints, the controller reduces to existing solutions.

Notation

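$y_{0:t}$: Time history of $y$: $\{y_0, y_1, \dots, y_t\}$.

$a \to b$: Directed edge from node $a$ to node $b$.

$d_{ij}$: Shortest delay from node $j$ to node $i$.

$M^{sr}$: Block submatrix $(M_{ij})_{i \in s,\, j \in r}$, e.g.

$$\begin{bmatrix} M_{11} & M_{12} & M_{13} \\ M_{21} & M_{22} & M_{23} \\ M_{31} & M_{32} & M_{33} \end{bmatrix}^{\{2,3\},\{1\}} = \begin{bmatrix} M_{21} \\ M_{31} \end{bmatrix}.$$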

1 Introduction

This paper studies a class of decentralized linear quadratic control problems. In decentralized control, inputs to a dynamic system are chosen by multiple controllers with access to different information. In this work, the dynamic system is decomposed into subsystems, each with a corresponding state and controller. A given controller has immediate access to some states, delayed access to some others, and no access to the rest. Since some controllers cannot access certain state components, the transfer matrix for the overall controller must satisfy sparsity constraints.

While decentralized synthesis is difficult in general, some classes of problems are known to be tractable and can be reduced to convex, albeit infinite-dimensional, optimization problems. See [3] and [5]. These papers also suggest methods for solving the optimization problem. The former gives a sequence of approximate problems whose solutions converge to the global optimum, and the latter uses vectorization to convert the problem to a much larger one that is unconstrained. An efficient LMI method was also proposed by [4].

¹ A. Lamperski is with the Department of Control and Dynamical Systems at the California Institute of Technology, Pasadena, CA 91125 USA. andyl@cds.caltech.edu

² L. Lessard is with the Department of Automatic Control at Lund University, Lund, Sweden. laurent.lessard@control.lth.se. The second author would like to acknowledge the support of the Swedish Research Council through the LCCC Linnaeus Center.

The class of problems studied in this paper has partially nested information constraints, which guarantees that linear optimal solutions exist [1]. Explicit state-space solutions have been reported for certain special cases. For state-feedback problems in which the controller has sparsity constraints but is delay-free, solutions were given in [6] and [7]. For state-feedback with delays but no sparsity, a special case of the dynamic programming argument of this paper was given by [2].

In Sections 2 and 3, we state the problem and explain the state and input decomposition we will use. Main results appear in Section 4, followed by discussion and proofs.

2 Problem Statement

In this paper, we consider a network of discrete-time linear time-invariant dynamical systems. We will illustrate our notation and problem setup through a simple example, and then describe the problem setup in its full generality.

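Example 1. Consider the state-space equations

$$\begin{bmatrix} x^1_{t+1} \\ x^2_{t+1} \\ x^3_{t+1} \end{bmatrix} = \begin{bmatrix} A_{11} & A_{12} & 0 \\ A_{21} & A_{22} & 0 \\ A_{31} & A_{32} & A_{33} \end{bmatrix} \begin{bmatrix} x^1_t \\ x^2_t \\ x^3_t \end{bmatrix} + \begin{bmatrix} B_{11} & B_{12} & 0 \\ B_{21} & B_{22} & 0 \\ B_{31} & B_{32} & B_{33} \end{bmatrix} \begin{bmatrix} u^1_t \\ u^2_t \\ u^3_t \end{bmatrix} + \begin{bmatrix} w^1_t \\ w^2_t \\ w^3_t \end{bmatrix} \tag{1}$$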

For quantities with a time dependence, we use subscripts

to specify the time index, while superscripts denote sub-

systems or sets of subsystems. Thus, x

i

t

is the state of

subsystem i at time t. The u

i

t

are the inputs, and the

w

i

t

are independent Gaussian disturbances. The goal is

to choose a state-feedback control policy that minimizes

1

1 2 3

0 0 0

1

1

0

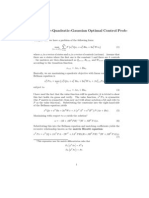

Figure 1: Network graph for Example 1. Each node rep-

resents a subsystem, and the arrows indicate the sparsity

of both the dynamical interactions (1) as well as the infor-

mation constraints (3). Additionally, the labels indicate

the propagation delay from one controller to another.

the standard nite-horizon quadratic cost

E

T1

t=0

_

x

t

u

t

_

T

_

Q S

S

T

R

_ _

x

t

u

t

_

+x

T

T

Q

f

x

T

(2)

with the usual requirement that R is positive denite,

while Q SR

1

S

T

and Q

f

are positive denite. Also,

dierent controllers may have access to dierent infor-

mation. For the purpose of this example, suppose the

dependencies are as follows

u

1

t

=

1

t

_

x

1

0:t

, x

2

0:t1

_

u

2

t

=

2

t

_

x

1

0:t1

, x

2

0:t

_

u

3

t

=

3

t

_

x

1

0:t1

, x

2

0:t

, x

3

0:t

_

(3)

Note that there is a combination of sparsity and delay

constraints; some state information may never be avail-

able to a particular controller, while other state informa-

tion might be available but delayed. It is convenient to

visualize this example using a directed graph with labeled

edges. We call this the network graph. See Fig. 1.

Note that in Example 1, both the plant (1) and the controller (3) share the same sparsity constraints. Namely, $x^3$ does not influence $x^1$ or $x^2$ via the dynamics, nor can it affect $u^1$ or $u^2$ via the controller. This condition will be assumed for the most general case considered herein. As explained in Appendix A, this condition is sufficient to guarantee that the optimal control policy $\gamma^i_t$ is linear, a very powerful fact.

A quantity of interest in this paper is the delay matrix, where each entry $d_{ij}$ is defined as the sum of the delays along the fastest directed path from $j$ to $i$. If no path exists, $d_{ij} = \infty$. In Example 1, the delay matrix is:

$$d = \begin{bmatrix} 0 & 1 & \infty \\ 1 & 0 & \infty \\ 1 & 0 & 0 \end{bmatrix} \tag{4}$$

This particular delay matrix only contains 0s, 1s, and $\infty$s, but for more intricate graphs, the delay matrix can contain any nonnegative integer, as long as it is the total delay of the fastest directed path between two nodes.
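The fastest-path definition can be checked numerically. The following is a minimal sketch, not part of the original paper: it runs the standard Floyd–Warshall all-pairs shortest-path recursion on edge delays. The function name `delay_matrix` and the edge list for Example 1 (edges 1→2 and 2→1 with delay 1, edge 2→3 with delay 0) are assumptions chosen to be consistent with (4).

```python
import math

INF = math.inf

def delay_matrix(n, edges):
    """Return d with d[i][j] = total delay of the fastest directed path from j to i.

    `edges` maps (j, i) to the delay of the directed edge j -> i (0-based nodes).
    """
    d = [[0 if i == j else INF for j in range(n)] for i in range(n)]
    for (j, i), delay in edges.items():
        d[i][j] = min(d[i][j], delay)
    # Floyd-Warshall: allow node k as an intermediate stop on the path j -> k -> i.
    for k in range(n):
        for i in range(n):
            for j in range(n):
                if d[i][k] + d[k][j] < d[i][j]:
                    d[i][j] = d[i][k] + d[k][j]
    return d

# Assumed edge labels for Example 1 (0-based): 1->2 and 2->1 with delay 1, 2->3 with delay 0.
edges = {(0, 1): 1, (1, 0): 1, (1, 2): 0}
d = delay_matrix(3, edges)
```

Under these assumed edge labels, `d` reproduces the rows of (4), with `math.inf` standing in for $\infty$.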

General Case. In general, we consider any directed graph $G(V, E)$ with vertices $V = \{1, \dots, N\}$. Every edge $j \to i$ in $E$ is labeled with a delay $d_{ij} \in \{0, 1\}$. We refer to this graph as the network graph. We then define $d_{ij}$ for all other pairs of vertices according to the fastest-path definition above. We consider systems defined by the state-space equations:

$$x^i_{t+1} = \sum_{\substack{j \in V \\ d_{ij} \le 1}} \left( A_{ij} x^j_t + B_{ij} u^j_t \right) + w^i_t \quad \text{for all } i \in V \tag{5}$$

The initial state $x_0$ can be fixed and known, such as $x_0 = 0$, or it may be a Gaussian random variable. Note that the $A_{ij}$ denote matrices, which can be viewed as submatrices of a larger $A$ matrix. If we stack the various vectors and matrices, we obtain a more compact representation of the state-space equations:

$$x_{t+1} = A x_t + B u_t + w_t \tag{6}$$

where $A$ and $B$ have sparsity patterns determined by the $d_{ij}$. Namely, we can have $A_{ij} \neq 0$ whenever $d_{ij} \in \{0, 1\}$, and similarly for $B_{ij}$. This fact can be verified in Example 1 by comparing (1) and (4).

We assume the noise terms are Gaussian, IID for all time, and independent between subsystems. In other words, we have $\mathbb{E}\left(w^i_\tau (w^k_t)^T\right) = 0$ whenever $\tau \neq t$ or $i \neq k$, and $\mathbb{E}\left(w^i_t (w^i_t)^T\right) = W^i$ for all $t \ge 0$.

State information propagates amongst subsystems at every timestep according to the graph topology and the delays along the links. Each controller may use any state information that has had sufficient time to reach it. More formally, $u^i_t$ is a function of the form

$$u^i_t = \gamma^i_t\left( x^1_{0:t-d_{i1}}, \dots, x^N_{0:t-d_{iN}} \right). \tag{7}$$

Here if $t < d_{ij}$ (e.g. when $d_{ij} = \infty$), then $u^i_t$ has no access to $x^j$ at all. As a technical point, we assume that $G$ contains no directed cycles with zero length. If it did, we could collapse the nodes of that cycle into a single node.

The objective is to find a control policy that satisfies the information constraints (7) and minimizes the quadratic cost function (2). According to our formulation, the controller may be any function of the past information history, which grows with the size of the time horizon $T$. However, we show in this paper how to construct an optimal controller that is linear and has a finite memory which does not depend on $T$.

3 Spatio-Temporal Decomposition

The solution presented herein depends on a special decomposition of the states and inputs into independent components. In Subsection 3.1, we define the information graph, which describes how disturbances injected at each node propagate throughout the network. Then, in Subsection 3.2, we show how the information graph can be used to define a useful partition of the noise history. Finally, Subsection 3.3 explains the decomposition of states and inputs, which will prove crucial for our approach.

[Figure 2: information graph with noise inputs $w^1$, $w^2$, $w^3$ and nodes $\{1\}$, $\{2, 3\}$, $\{3\}$, $\{1, 2, 3\}$.]

Figure 2: The information graph for Example 1. The nodes of this graph are the subsets of nodes in the network graph (see Fig. 1) affected by different noises. For example, $w^2$ injected at node 2 affects nodes $\{2, 3\}$ immediately, and affects $\{1, 2, 3\}$ after one timestep.

[Figure 3: one row per node (Node 1 to Node 3) listing $w^i_{t-3}, w^i_{t-2}, w^i_{t-1}, w^i_t$ along a time axis, grouped into the label sets $\{1\}$, $\{2, 3\}$, $\{3\}$, $\{1, 2, 3\}$.]

Figure 3: Noise partition diagram for Example 1 (refer to Fig. 1 and 2). Each row represents a different node of the network graph, and each column represents a different time index, flowing from left to right. For example, $w^2_{t-2}$ will reach all three nodes at time $t$, so it belongs to the label set $\{1, 2, 3\}$.

3.1 Information Graphs

The information graph is a useful alternative way of representing the information flow in the network. Rather than thinking about nodes and their connections, we track the propagation of the noise signals $w^i$ injected at the various nodes of the network graph. The information graph for Example 1 is shown in Fig. 2.

Formally, we define the information graph as follows. Let $s^j_k$ be the set of nodes reachable from node $j$ within $k$ steps:

$$s^j_k = \{ i \in V : d_{ij} \le k \}.$$

The information graph, $G(U, F)$, is given by

$$\begin{aligned} U &= \{ s^j_k : k \ge 0,\ j \in V \} \\ F &= \{ (s^j_k, s^j_{k+1}) : k \ge 0,\ j \in V \}. \end{aligned}$$

We will often write $w^i \to s$ to indicate that $s = s^i_0$, though we do not count the $w^i$ amongst the nodes of the information graph, as a matter of convention.

The information graph can be constructed by tracking each of the $w^i$ as they propagate through the network graph. For example, consider $w^2$, the noise injected into subsystem 2. In Fig. 1, we see that the nodes $\{2, 3\}$ are affected immediately. After one timestep, the noise has reached all three nodes. This leads to the path $w^2 \to \{2, 3\} \to \{1, 2, 3\}$ in Fig. 2. When two paths reach the same subset, we merge them into a single node. For example, the path starting from $w^1$ also reaches $\{1, 2, 3\}$ after one step.
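The construction just described can be sketched in a few lines of Python. This is an illustrative sketch rather than the authors' code: the function name `information_graph` and the use of `frozenset` for nodes are assumptions, and the delay matrix is the one from (4).

```python
import math

INF = math.inf

def information_graph(d):
    """Build (nodes, edges) of the information graph from a delay matrix.

    d[i][j] is the delay from node j+1 to node i+1; nodes are frozensets of
    1-based node labels, edges are pairs (s_k^j, s_{k+1}^j).
    """
    n = len(d)
    d_max = max(x for row in d for x in row if x < INF)
    nodes, edges = set(), set()
    for j in range(n):
        # The sets s_k^j stop growing once k reaches the largest finite delay.
        for k in range(d_max + 1):
            s_k = frozenset(i + 1 for i in range(n) if d[i][j] <= k)
            s_next = frozenset(i + 1 for i in range(n) if d[i][j] <= k + 1)
            nodes.update((s_k, s_next))
            edges.add((s_k, s_next))
    return nodes, edges

# Delay matrix (4) of Example 1.
d = [[0, 1, INF], [1, 0, INF], [1, 0, 0]]
nodes, edges = information_graph(d)
```

Using sets for `nodes` and `edges` performs the merging of coinciding subsets automatically; for Example 1 this yields the four nodes of Fig. 2, with self-loops at $\{3\}$ and $\{1, 2, 3\}$.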

Proposition 1. Given an information graph $G(U, F)$, the following properties hold.

(i) Every node in the information graph has exactly one descendant. In other words, for every $r \in U$, there is a unique $s \in U$ such that $r \to s$.

(ii) Every path eventually hits a node with a self-loop.

(iii) If $d = \max_{ij} \{ d_{ij} : d_{ij} < \infty \}$, then an upper bound for the number of nodes in the information graph is given by $|U| \le N(d + 1)$, since for each $j \in V$ the sets $s^j_k$ stop changing once $k \ge d$.

Example 2. Fig. 4 shows the network and information graphs for a more complex four-node network. We will refer to this example throughout the rest of the paper.

Note that the information graph may have several connected components. This happens whenever the network graph is not strongly connected. For example, Fig. 2 has two connected components because there is no path from node 3 to node 2.

3.2 Noise Partition

The noise history at time $t$ is the set of random variables consisting of all past noises:

$$H_t = \{ w^i_\tau : i \in V,\ -1 \le \tau \le t - 1 \}$$

where we have used the convention $x_0 = w_{-1}$. We now define a special partition of $H_t$. This partition is related to the information graph; there is one subset corresponding to each $s \in U$. We call these subsets label sets, and they are defined in the following lemma.

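Lemma 2. For every $s \in U$, and for all $t \ge 0$, define the label sets recursively using

$$L^s_0 = \{ x^i_0 : w^i \to s \} \tag{8}$$

$$L^s_{t+1} = \{ w^i_t : w^i \to s \} \cup \bigcup_{r \to s} L^r_t. \tag{9}$$

The label sets $\{L^s_t\}_{s \in U}$ partition the noise history $H_t$:

$$H_t = \bigcup_{s \in U} L^s_t \quad \text{and} \quad L^r_t \cap L^s_t = \emptyset \ \text{ whenever } r \neq s.$$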

[Figure 4, panels: (a) network graph for Example 2 on nodes 1 to 4 with delay-labeled edges; (b) information graph for Example 2 with noise inputs $w^1, \dots, w^4$ and nodes $\{1\}$, $\{2\}$, $\{3\}$, $\{3, 4\}$, $\{1, 2, 3\}$, $\{2, 3, 4\}$, $\{1, 2, 3, 4\}$; (c) noise partition diagram for Example 2.]

Figure 4: Network graph (a), information graph (b), and noise partition diagram (c) for Example 2.

Proof. We proceed by induction. At $t = 0$, we have $H_0 = \{x^1_0, \dots, x^N_0\}$. For each $i \in V$, $s = s^i_0$ is the unique set such that $x^i_0 \in L^s_0$. Thus $\{L^s_0\}_{s \in U}$ partitions $H_0$.

Now suppose that $\{L^s_t\}_{s \in U}$ partitions $H_t$ for some $t \ge 0$. By Proposition 1, for all $r \in U$ there exists a unique $s \in U$ such that $r \to s$. Therefore each element $w^i_k \in H_t$ is contained in exactly one set $L^s_{t+1}$. For each $i \in V$, we have that $L^{s^i_0}_{t+1}$ is the unique label set containing $w^i_t$. Therefore $\{L^s_{t+1}\}_{s \in U}$ must partition $H_{t+1}$.

The partition defined in Lemma 2 can be visualized using a noise partition diagram. Example 1 is shown in Fig. 3 and Example 2 is shown in Fig. 4(c). These diagrams show the partition explicitly at time $t$ by indicating which parts of the noise history belong to which label set. Each label set is tagged with its corresponding node in the information graph.
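The recursion (8)–(9) is easy to run by hand for Example 1. The sketch below is illustrative only: the dictionaries `succ` and `root` hard-code the information graph of Fig. 2, and noises are encoded as hypothetical pairs `(i, tau)`, with `tau = -1` standing for the initial state $x^i_0$.

```python
# Information graph of Example 1: unique successor of every node (Proposition 1(i)).
succ = {
    frozenset({1}): frozenset({1, 2, 3}),
    frozenset({2, 3}): frozenset({1, 2, 3}),
    frozenset({3}): frozenset({3}),
    frozenset({1, 2, 3}): frozenset({1, 2, 3}),
}
# Root node s with w^i -> s, for each noise index i.
root = {1: frozenset({1}), 2: frozenset({2, 3}), 3: frozenset({3})}

def label_sets(t):
    """Return the label sets at time t as sets of (i, tau) pairs."""
    labels = {s: set() for s in succ}
    for i, s in root.items():
        labels[s].add((i, -1))            # (8): the initial states x_0^i
    for time in range(t):
        new = {s: set() for s in succ}
        for i, s in root.items():
            new[s].add((i, time))         # (9): the freshly injected noise w_time^i
        for r, s in succ.items():
            new[s] |= labels[r]           # (9): labels inherited along the edge r -> s
        labels = new
    return labels

labels = label_sets(2)
```

At `t = 2`, the pair `(2, 0)`, i.e. $w^2_{t-2}$, lands in the label set of $\{1, 2, 3\}$, matching the caption of Fig. 3.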

3.3 State and Input Decomposition

We show in Appendix A that our problem setup is partially nested, which implies that there exists an optimal control policy that is linear. We also show there that $x_t$ and $u_t$ are linear functions of the noise history $H_t$. Individual components $u^i_t$ will not depend on the full noise history $H_t$, because certain noise signals will not have had sufficient time to travel to node $i$. This fact can be read directly off the noise partition diagram. To see whether a noise symbol $w^j_{t-k} \in L^s_t$ affects $u^i_t$, we simply check whether $i \in s$. We state this result as a lemma.

Lemma 3. The input $u^i_t$ depends on the elements of $L^s_t$ if and only if $i \in s$. The state $x^i_t$ depends on the elements of $L^s_t$ if and only if $i \in s$.

The noise partition described in Subsection 3.2 induces a decomposition of the input and state into components. We may write:

$$u_t = \sum_{s \in U} I^{V,s} \varphi^s_t \quad \text{and} \quad x_t = \sum_{s \in U} I^{V,s} \zeta^s_t \tag{10}$$

where $\zeta^s_t$ and $\varphi^s_t$ each depend on the elements of $L^s_t$. Here $I$ is a large identity matrix with block rows and columns conforming to the dimensions of $x^i_t$ or $u^i_t$ depending on the context. The notation $I^{V,s}$ indicates the submatrix in which the block-rows corresponding to $i \in V$ and the block-columns corresponding to $j \in s$ have been selected. For example, the state in Example 1 can be written as:

$$x_t = \begin{bmatrix} I \\ 0 \\ 0 \end{bmatrix} \zeta^{\{1\}}_t + \begin{bmatrix} 0 & 0 \\ I & 0 \\ 0 & I \end{bmatrix} \zeta^{\{2,3\}}_t + \begin{bmatrix} 0 \\ 0 \\ I \end{bmatrix} \zeta^{\{3\}}_t + \begin{bmatrix} I & 0 & 0 \\ 0 & I & 0 \\ 0 & 0 & I \end{bmatrix} \zeta^{\{1,2,3\}}_t$$

The vectors $\zeta^s_t$ each have different sizes, which is due to Lemma 3. For example, $x^2_t$ is only a function of $\zeta^{\{2,3\}}_t$ and $\zeta^{\{1,2,3\}}_t$, since these are the only label sets that contain 2.

We also use the superscript notation for other matrices. Taking (1) as an example, if $s = \{3\}$ and $r = \{2, 3\}$, then

$$A^{sr} = \begin{bmatrix} A_{32} & A_{33} \end{bmatrix}.$$
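For readers who want to experiment numerically, the superscript notation maps directly onto NumPy index selection. The helper `block` below is a hypothetical name, and the sketch assumes scalar subsystems so that each block is a single entry; the entries of `A` are placeholders whose digits mirror the block indices in (1).

```python
import numpy as np

def block(M, rows, cols):
    """Return M^{rows,cols} for scalar subsystems; node labels are 1-based."""
    r = [i - 1 for i in sorted(rows)]
    c = [j - 1 for j in sorted(cols)]
    return M[np.ix_(r, c)]

# Placeholder entries: 32 stands for A_32, and so on, with the zero pattern of (1).
A = np.array([[11, 12, 0],
              [21, 22, 0],
              [31, 32, 33]])

A_sr = block(A, {3}, {2, 3})                 # s = {3}, r = {2, 3}
I_Vs = block(np.eye(3), {1, 2, 3}, {2, 3})   # I^{V,s} for s = {2, 3}
```

Here `A_sr` is the row `[A_32, A_33]`, and `I_Vs` is the second matrix in the expansion of $x_t$ above.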

The state equations that define $x_t$ (5) have a counterpart in the $\zeta_t$ coordinates. We state the following lemma without proof.

Lemma 4. The components $\{\zeta^s_t\}_{s \in U}$ and $\{\varphi^s_t\}_{s \in U}$ satisfy the recursive equations:

$$\zeta^s_0 = \sum_{w^i \to s} I^{s,\{i\}} x^i_0 \tag{11}$$

$$\zeta^s_{t+1} = \sum_{r \to s} \left( A^{sr} \zeta^r_t + B^{sr} \varphi^r_t \right) + \sum_{w^i \to s} I^{s,\{i\}} w^i_t \tag{12}$$
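Although the lemma is stated without proof, its content can be sanity-checked by simulation. The sketch below is an illustration under stated assumptions, not the authors' code: it uses Example 1 with scalar subsystems, random matrices with the sparsity pattern of (1), and arbitrary (not optimal) gains `K` so that $\varphi^s_t = K^s \zeta^s_t$. It propagates (11)–(12) alongside the stacked dynamics (6) and checks that the components always sum back to the state as in (10). All names (`succ`, `root`, `sel`, `block`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
V = (1, 2, 3)
succ = {(1,): (1, 2, 3), (2, 3): (1, 2, 3), (3,): (3,), (1, 2, 3): (1, 2, 3)}
root = {1: (1,), 2: (2, 3), 3: (3,)}          # w^i -> s

def block(M, rows, cols):
    """M^{rows,cols} for scalar subsystems (1-based labels)."""
    return M[np.ix_([i - 1 for i in rows], [j - 1 for j in cols])]

def sel(rows, cols):
    """I^{rows,cols}: the matching block of the identity."""
    return block(np.eye(3), rows, cols)

mask = np.array([[1, 1, 0], [1, 1, 0], [1, 1, 1]])   # sparsity pattern of (1)
A = rng.normal(size=(3, 3)) * mask
B = rng.normal(size=(3, 3)) * mask
K = {s: rng.normal(size=(len(s), len(s))) for s in succ}  # arbitrary gains

x = rng.normal(size=3)                               # x_0
zeta = {s: np.zeros(len(s)) for s in succ}
for i, s in root.items():
    zeta[s] += sel(s, (i,)) @ x[[i - 1]]             # (11)

for t in range(5):
    phi = {s: K[s] @ zeta[s] for s in succ}
    u = sum(sel(V, s) @ phi[s] for s in succ)        # (10)
    w = rng.normal(size=3)
    x = A @ x + B @ u + w                            # (6)
    new = {s: np.zeros(len(s)) for s in succ}
    for r, s in succ.items():
        new[s] += block(A, s, r) @ zeta[r] + block(B, s, r) @ phi[r]
    for i, s in root.items():
        new[s] += sel(s, (i,)) @ w[[i - 1]]          # (12)
    zeta = new
    assert np.allclose(x, sum(sel(V, s) @ zeta[s] for s in succ))
```

The check passes because the rows of $A^{V,r}$ and $B^{V,r}$ outside the successor $s$ of $r$ vanish under the assumed sparsity pattern, which is exactly what lets each component $\zeta^r_t$ flow along a single edge of the information graph.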

Two important properties of the input and state decomposition follow from results in this section. First, each input $u^i_t$ is a function of a particular subset of the information history $H_t$, which follows directly from Lemma 3.

Corollary 5. The input $u^i_t$ depends on the elements of $\bigcup_{s \ni i} L^s_t$. In particular, $u^i_t$ has the ability to compute any state $\zeta^s_t$ for which $i \in s$.

Secondly, Lemma 2 implies that the label sets for a given time index consist of mutually independent noises. So our decomposition provides independent coordinates:

Corollary 6. Suppose $r, s \in U$, and $r \neq s$. Then:

$$\mathbb{E} \begin{bmatrix} \zeta^r_t \\ \varphi^r_t \end{bmatrix} \begin{bmatrix} \zeta^s_t \\ \varphi^s_t \end{bmatrix}^T = 0$$

4 Main Results

Consider the general problem setup described in Section 2, and generate the corresponding information graph $G(U, F)$ as explained in Section 3.1. For every node $r \in U$, define the matrices $X^r_{0:T}$ recursively as follows:

$$\begin{aligned} X^r_T &= Q^{rr}_f \\ X^r_t &= Q^{rr} + (A^{sr})^T X^s_{t+1} A^{sr} \\ &\quad - \left( S^{rr} + (A^{sr})^T X^s_{t+1} B^{sr} \right) \left( R^{rr} + (B^{sr})^T X^s_{t+1} B^{sr} \right)^{-1} \left( S^{rr} + (A^{sr})^T X^s_{t+1} B^{sr} \right)^T \end{aligned} \tag{13}$$

where $s \in U$ is the unique node such that $r \to s$. Finally, define the gain matrices $K^r_{0:T-1}$ for every $r \in U$:

$$K^r_t = -\left( R^{rr} + (B^{sr})^T X^s_{t+1} B^{sr} \right)^{-1} \left( S^{rr} + (A^{sr})^T X^s_{t+1} B^{sr} \right)^T \tag{14}$$

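Theorem 7. The optimal control policy is given by

$$u_t = \sum_{s \in U} I^{V,s} \varphi^s_t \quad \text{where} \quad \varphi^s_t = K^s_t \zeta^s_t \tag{15}$$

where the states $\zeta^s_t$ evolve according to (12). The corresponding optimal cost is

$$V_0(x_0) = \sum_{\substack{i \in V \\ w^i \to s}} \left( \operatorname{trace}\left( (X^s_0)^{\{i\},\{i\}}\, \mathbb{E}\left( x^i_0 (x^i_0)^T \right) \right) + \sum_{t=1}^{T} \operatorname{trace}\left( (X^s_t)^{\{i\},\{i\}}\, W^i \right) \right) \tag{16}$$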

Theorem 7 describes an optimal controller as a function of the disturbances $w$. The controller can be transformed into an explicit state-feedback form as follows. For every $s \in U$, define the subset $s^w = \{ i : w^i \to s \}$. This set will be a singleton $\{i\}$ when the node is a root node of the information graph, and will be empty otherwise. For example, consider Example 2 (Fig. 4(b)). When $s = \{3, 4\}$ we have $s^w = \{4\}$, and when $s = \{2, 3, 4\}$ we have $s^w = \emptyset$. Each $s \in U$ can be partitioned as $s = s^w \cup s^{\bar w}$, where $s^{\bar w} = s \setminus s^w$. The states of the controller are given by

$$\eta^{(s)}_t = I^{s^{\bar w},s} \zeta^s_t \quad \text{for all } s \in U \text{ satisfying } s^{\bar w} \neq \emptyset.$$

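Theorem 8. Let $A^{sr}_t = A^{sr} + B^{sr} K^r_t$. The optimal state-feedback controller is given by the state-space equations

$$\begin{aligned} \eta^{(s)}_0 &= 0 \\ \eta^{(s)}_{t+1} &= \sum_{r \to s} I^{s^{\bar w},s} A^{sr}_t I^{r,r^{\bar w}} \eta^{(r)}_t + \sum_{r \to s} I^{s^{\bar w},s} A^{sr}_t I^{r,r^w} \Bigl( x^{r^w}_t - \sum_{\substack{v \supseteq r \\ v \neq r}} I^{r^w,v^{\bar w}} \eta^{(v)}_t \Bigr) \end{aligned} \tag{17}$$

$$u_t = \sum_{s \in U} I^{V,s} K^s_t I^{s,s^{\bar w}} \eta^{(s)}_t + \sum_{s \in U} I^{V,s} K^s_t I^{s,s^w} \Bigl( x^{s^w}_t - \sum_{\substack{v \supseteq s \\ v \neq s}} I^{s^w,v^{\bar w}} \eta^{(v)}_t \Bigr) \tag{18}$$

See Section 6 for complete proofs of Theorems 7 and 8.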

5 Discussion

Note that for a self-loop, $r \to r$, (13) defines a classical Riccati difference equation. Since the information graph can have at most $N$ self-loops, at most $N$ Riccati equations must be solved. For other edges, (13) propagates the solution of the self-loop Riccati equation down the information graph toward the root nodes.
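As a rough illustration of how the backward recursion could be organized, the sketch below iterates (13)–(14) over the information graph of Example 1. It is an assumption-laden sketch, not the authors' implementation: subsystems are scalar, the numerical values of `A` and `B` are made up (with the sparsity pattern of (1)), the weights are $Q = R = Q_f = I$ and $S = 0$, and the names `gains`, `block`, and `succ` are hypothetical.

```python
import numpy as np

# Information graph of Example 1: unique successor s of each node r.
succ = {(1,): (1, 2, 3), (2, 3): (1, 2, 3), (3,): (3,), (1, 2, 3): (1, 2, 3)}

def block(M, rows, cols):
    """M^{rows,cols} for scalar subsystems (1-based labels)."""
    return M[np.ix_([i - 1 for i in rows], [j - 1 for j in cols])]

def gains(A, B, Q, R, S, Qf, T):
    """Run (13)-(14) backwards; return X_0^r and the list [K_0, ..., K_{T-1}]."""
    X = {r: block(Qf, r, r) for r in succ}          # X_T^r = Q_f^{rr}
    K = []
    for _ in range(T):
        X_prev, K_t = {}, {}
        for r, s in succ.items():
            Asr, Bsr = block(A, s, r), block(B, s, r)
            H = block(R, r, r) + Bsr.T @ X[s] @ Bsr
            G = block(S, r, r) + Asr.T @ X[s] @ Bsr
            K_t[r] = -np.linalg.solve(H, G.T)       # (14)
            X_prev[r] = block(Q, r, r) + Asr.T @ X[s] @ Asr + G @ K_t[r]  # (13)
        X = X_prev
        K.insert(0, K_t)
    return X, K

# Made-up numerical data with the sparsity pattern of (1).
A = np.array([[0.9, 0.1, 0.0], [0.2, 0.8, 0.0], [0.1, 0.3, 0.5]])
B = np.array([[1.0, 0.5, 0.0], [0.2, 1.0, 0.0], [0.1, 0.4, 1.0]])
Q = R = Qf = np.eye(3)
S = np.zeros((3, 3))
X0, K = gains(A, B, Q, R, S, Qf, T=10)
```

Only the self-loop nodes $\{3\}$ and $\{1, 2, 3\}$ carry genuine Riccati recursions here; the entries for $\{1\}$ and $\{2, 3\}$ are obtained in one step from the solution at $\{1, 2, 3\}$, which is the propagation described above.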

The solution extends naturally to infinite horizon, provided that at the self-loops, $r \to r$, the matrices satisfy classical conditions for stabilizing solutions to the corresponding algebraic Riccati equations. Indeed, as $T \to \infty$, the self-loop matrices $X^r_t$ approach steady-state values. Since all gains are computed from the self-loop Riccati equations, the gains must also approach steady-state limits.

The results can also be extended to time-varying systems simply by replacing $A$, $B$, $Q$, $R$, $S$, and $W$ with $A_t$, $B_t$, $Q_t$, $R_t$, $S_t$, and $W_t$, respectively.

The results of [7] and [6] on control with sparsity constraints correspond to the special case of this work in which all edges have zero delay. In this case, the information graph consists of $N$ self-loops. Thus, $N$ Riccati equations must be solved, but they are not propagated.

The results on delayed systems of [2] correspond to the special case of strongly connected graphs in which all edges have a delay of one timestep. Here, all paths in the information graph eventually lead to the self-loop $V \to V$. Thus, a single Riccati equation is solved and propagated down the information graph.

6 Proofs of Main Results

6.1 Proof of Theorem 7

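The proof uses dynamic programming and takes advantage of the decomposition described in Section 3. Begin by defining the cost-to-go from time $t$ as a function of the current state and future inputs:

$$J_t(x_t, u_{t:T-1}) = \sum_{\tau=t}^{T-1} \begin{bmatrix} x_\tau \\ u_\tau \end{bmatrix}^T \begin{bmatrix} Q & S \\ S^T & R \end{bmatrix} \begin{bmatrix} x_\tau \\ u_\tau \end{bmatrix} + x_T^T Q_f x_T$$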

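Note that $J_t$ is a random variable: it does not take the future states $x_{t+1:T}$ as arguments. These future states are defined recursively using (6), and thus depend on the noise terms $w_{t:T-1}$. Using the decomposition (10), the state is divided according to $x_t = \sum_{s \in U} I^{V,s} \zeta^s_t$. We use the abridged notation $\zeta_t$ to denote $\{\zeta^s_t : s \in U\}$, and we use a similar notation to denote the decomposition of $u_t$ into $\varphi_t$. In these new coordinates, the cost-to-go becomes:

$$J_t(\zeta_t, \varphi_{t:T-1}) = \sum_{s \in U} \left( \sum_{\tau=t}^{T-1} \begin{bmatrix} \zeta^s_\tau \\ \varphi^s_\tau \end{bmatrix}^T \begin{bmatrix} Q^{ss} & S^{ss} \\ (S^{ss})^T & R^{ss} \end{bmatrix} \begin{bmatrix} \zeta^s_\tau \\ \varphi^s_\tau \end{bmatrix} + (\zeta^s_T)^T Q^{ss}_f \zeta^s_T \right)$$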

where the future states $\zeta_{t+1:T}$ are defined recursively using (12). Now define the value function, which is the minimum expected cost-to-go:

$$V_t(\zeta_t) = \min_{\varphi_{t:T-1}} \mathbb{E}\, J_t(\zeta_t, \varphi_{t:T-1})$$

Here, it is implied that the minimization is taken over admissible policies, namely $\varphi^s_t$ is a linear function of $L^s_t$ as explained in Section 3.3. Unlike the cost-to-go function, the value function is deterministic. The original minimum cost (2) considered in Section 2 is simply $V_0(x_0)$. The value function satisfies the Bellman equation

$$V_t(\zeta_t) = \min_{\varphi_t} \left( \mathbb{E} \sum_{s \in U} \begin{bmatrix} \zeta^s_t \\ \varphi^s_t \end{bmatrix}^T \begin{bmatrix} Q^{ss} & S^{ss} \\ (S^{ss})^T & R^{ss} \end{bmatrix} \begin{bmatrix} \zeta^s_t \\ \varphi^s_t \end{bmatrix} + V_{t+1}(\zeta_{t+1}) \right) \tag{19}$$

Lemma 9. The Bellman equation (19) is satisfied by a quadratic value function of the form:

$$V_t(\zeta_t) = \sum_{s \in U} \mathbb{E}\left( (\zeta^s_t)^T X^s_t \zeta^s_t \right) + c_t \tag{20}$$

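Proof. The proof uses induction proceeding backwards in time. At $t = T$, the cost-to-go is simply the terminal cost $x_T^T Q_f x_T$, and the value function is the expected value of this quantity. Decompose $x_T$ into its $\zeta_T$ coordinates. The $\zeta_T$ coordinates are mutually independent by Corollary 6, so we may write

$$V_T(\zeta_T) = \sum_{s \in U} \mathbb{E}\left( (\zeta^s_T)^T Q^{ss}_f \zeta^s_T \right)$$

Thus, (20) holds for $t = T$, by setting $X^s_T = Q^{ss}_f$ for every $s \in U$ and $c_T = 0$. Now suppose (20) holds for $t + 1$. Equation (19) becomes:

$$V_t(\zeta_t) = \min_{\varphi_t} \left( \mathbb{E} \sum_{s \in U} \begin{bmatrix} \zeta^s_t \\ \varphi^s_t \end{bmatrix}^T \begin{bmatrix} Q^{ss} & S^{ss} \\ (S^{ss})^T & R^{ss} \end{bmatrix} \begin{bmatrix} \zeta^s_t \\ \varphi^s_t \end{bmatrix} + \mathbb{E} \sum_{s \in U} (\zeta^s_{t+1})^T X^s_{t+1} \zeta^s_{t+1} + c_{t+1} \right) \tag{21}$$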

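Substitute the recursion for $\zeta_{t+1}$ defined in (12), and take advantage of the mutual independence of the $\zeta_t$ and $\varphi_t$ terms proved in Corollary 6. Finally, we obtain:

$$V_t(\zeta_t) = \min_{\varphi_t} \left( \mathbb{E} \sum_{r \in U} \begin{bmatrix} \zeta^r_t \\ \varphi^r_t \end{bmatrix}^T \Gamma^r_{t+1} \begin{bmatrix} \zeta^r_t \\ \varphi^r_t \end{bmatrix} \right) + c_{t+1} + \sum_{\substack{i \in V \\ w^i \to s}} \operatorname{trace}\left( (X^s_{t+1})^{\{i\},\{i\}}\, W^i \right) \tag{22}$$

where $\Gamma^r_{t+1}$ is given by:

$$\Gamma^r_{t+1} = \begin{bmatrix} Q^{rr} & S^{rr} \\ (S^{rr})^T & R^{rr} \end{bmatrix} + \begin{bmatrix} A^{sr} & B^{sr} \end{bmatrix}^T X^s_{t+1} \begin{bmatrix} A^{sr} & B^{sr} \end{bmatrix}$$

and $s$ is the unique node in the information graph such that $r \to s$, see Proposition 1. Equation (22) can be decomposed into independent quadratic optimization problems, one for each $\varphi^r_t$:

$$\varphi^r_t = \arg\min_{\varphi^r_t}\ \mathbb{E} \begin{bmatrix} \zeta^r_t \\ \varphi^r_t \end{bmatrix}^T \Gamma^r_{t+1} \begin{bmatrix} \zeta^r_t \\ \varphi^r_t \end{bmatrix} \quad \text{for all } r \in U$$

The optimal cost is again a quadratic function, which verifies our inductive hypothesis.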

The optimal inputs found by solving the quadratic optimization problems in Lemma 9 are given by $\varphi^s_t = K^s_t \zeta^s_t$. This policy is admissible by Corollary 5. Substituting the optimal policy, and comparing both sides of the equation, we obtain the desired recursion relation as well as the optimal cost (13)–(16).
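The substitution step can be made explicit by completing the square. The following is a sketch of that standard calculation, assuming $R^{rr} + (B^{sr})^T X^s_{t+1} B^{sr}$ is invertible; the weight matrix of the quadratic form in (22) is denoted $\Gamma^r_{t+1}$ here, and the block names $\Gamma_{11}$, $\Gamma_{12}$, $\Gamma_{22}$ are introduced only for this illustration.

```latex
\Gamma^r_{t+1} =
\begin{bmatrix} \Gamma_{11} & \Gamma_{12} \\ \Gamma_{12}^T & \Gamma_{22} \end{bmatrix},
\qquad
\begin{aligned}
\Gamma_{11} &= Q^{rr} + (A^{sr})^T X^s_{t+1} A^{sr}, \\
\Gamma_{12} &= S^{rr} + (A^{sr})^T X^s_{t+1} B^{sr}, \\
\Gamma_{22} &= R^{rr} + (B^{sr})^T X^s_{t+1} B^{sr}.
\end{aligned}

\begin{bmatrix} \zeta \\ \varphi \end{bmatrix}^T
\Gamma^r_{t+1}
\begin{bmatrix} \zeta \\ \varphi \end{bmatrix}
=
\left( \varphi + \Gamma_{22}^{-1} \Gamma_{12}^T \zeta \right)^T
\Gamma_{22}
\left( \varphi + \Gamma_{22}^{-1} \Gamma_{12}^T \zeta \right)
+ \zeta^T \left( \Gamma_{11} - \Gamma_{12} \Gamma_{22}^{-1} \Gamma_{12}^T \right) \zeta .
```

The first term is minimized by $\varphi = -\Gamma_{22}^{-1} \Gamma_{12}^T \zeta$, which is the gain (14), and the remaining term $\Gamma_{11} - \Gamma_{12} \Gamma_{22}^{-1} \Gamma_{12}^T$ is the right-hand side of (13).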

6.2 Proof of Theorem 8

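Consider a node $s \in U$ with $w^i \to s$. Thus the set $s^w$ is nonempty, and $s^w = \{i\}$. From (10),

$$x^i_t = \sum_{v \ni i} I^{s^w,v} \zeta^v_t = \sum_{v \supseteq s} I^{s^w,v} \zeta^v_t \tag{23}$$

The second equality follows because $v \ni i$ implies that $v \supseteq s$. Indeed, for any other $j \in s$, we must have $d_{ji} = 0$. Thus, if $v \ni i$, then $v \ni j$ as well. Rearranging (23) gives

$$I^{s^w,s} \zeta^s_t = x^i_t - \sum_{\substack{v \supseteq s \\ v \neq s}} I^{s^w,v} \zeta^v_t$$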


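Since $s^w$ and $s^{\bar w}$ partition $s$, we may write $\zeta^s_t$ as

$$\begin{aligned} \zeta^s_t &= I^{s,s^{\bar w}} I^{s^{\bar w},s} \zeta^s_t + I^{s,s^w} I^{s^w,s} \zeta^s_t \\ &= I^{s,s^{\bar w}} I^{s^{\bar w},s} \zeta^s_t + I^{s,s^w} \Bigl( x^i_t - \sum_{\substack{v \supseteq s \\ v \neq s}} I^{s^w,v} \zeta^v_t \Bigr) \\ &= I^{s,s^{\bar w}} \eta^{(s)}_t + I^{s,s^w} \Bigl( x^i_t - \sum_{\substack{v \supseteq s \\ v \neq s}} I^{s^w,v^{\bar w}} \eta^{(v)}_t \Bigr) \end{aligned} \tag{24}$$

where we substituted the definition $\eta^{(s)}_t = I^{s^{\bar w},s} \zeta^s_t$ in the final step and used the fact that $i \in v^{\bar w}$, because $v \neq s$ and we can't have both $w^i \to v$ and $w^i \to s$.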

We can now find state-space equations in terms of the new $\eta_t$ coordinates. The input $u_t$ is found by substituting (24) into (15), and the $\eta_t$ dynamics are found by substituting (24) into (12) and multiplying on the left by $I^{s^{\bar w},s}$. Note that the controller now depends on the state $x_t$ rather than the disturbance $w_t$.

References

[1] Y-C. Ho and K-C. Chu. Team decision theory and information structures in optimal control problems–Part I. IEEE Transactions on Automatic Control, 17(1):15–22, 1972.

[2] Andrew Lamperski and John C. Doyle. Dynamic programming solutions for decentralized state-feedback LQG problems with communication delays. In American Control Conference, 2012.

[3] Xin Qi, M.V. Salapaka, P.G. Voulgaris, and M. Khammash. Structured optimal and robust control with multiple criteria: a convex solution. IEEE Transactions on Automatic Control, 49(10):1623–1640, 2004.

[4] Anders Rantzer. Linear quadratic team theory revisited. In American Control Conference, pages 1637–1641, 2006.

[5] M. Rotkowitz and S. Lall. A characterization of convex problems in decentralized control. IEEE Transactions on Automatic Control, 51(2):274–286, 2006.

[6] Parikshit Shah and Pablo A. Parrilo. H2-optimal decentralized control over posets: A state space solution for state-feedback. In IEEE Conference on Decision and Control, pages 6722–6727, 2010.

[7] J. Swigart. Optimal Controller Synthesis for Decentralized Systems. PhD thesis, Stanford University, 2010.

A Partial Nestedness

In this section, we discuss partial nestedness, a concept first introduced by [1]. Define the sets:

$$\mathcal{I}^i_t = \{ x^j_\tau : j \in V,\ 0 \le \tau \le t - d_{ij} \}$$

Comparing with (7), $\mathcal{I}^i_t$ is precisely the information that $u^i_t$ has access to when making its decision.

Definition 10. A dynamical system (6) with information structure (7) is partially nested if for every admissible set of policies $\gamma^i_t$, whenever $u^j_\tau$ affects $u^i_t$, then $\mathcal{I}^j_\tau \subset \mathcal{I}^i_t$.

The main result regarding partial nestedness is given in the following lemma.

Lemma 11 ([1]). Given a partially nested structure, the optimal control for each member exists, is unique, and is linear.

In order to apply this result, we must first show that the problems considered in this paper are of the correct type.

Lemma 12. The information structure described in (7) is partially nested.

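Proof. Suppose $u^j_\tau$ affects $u^i_t$ in the most direct manner possible. Namely, $u^j_\tau$ directly affects a state at time $\tau + 1$, which affects a sequence of other states until it reaches $x^k_\sigma$, and $x^k_\sigma \in \mathcal{I}^i_t$. Further suppose that $x^\ell_\rho \in \mathcal{I}^j_\tau$. Then

$$u^j_\tau \text{ affects } x^k_\sigma \text{ directly} \implies d_{kj} \le \sigma - \tau \tag{25}$$

$$x^k_\sigma \in \mathcal{I}^i_t \implies d_{ik} \le t - \sigma \tag{26}$$

$$x^\ell_\rho \in \mathcal{I}^j_\tau \implies d_{j\ell} \le \tau - \rho \tag{27}$$

Adding (25)–(27) together and using the triangle inequality $d_{i\ell} \le d_{ik} + d_{kj} + d_{j\ell}$, we obtain $d_{i\ell} \le t - \rho$. Thus, $x^\ell_\rho \in \mathcal{I}^i_t$. It follows that $\mathcal{I}^j_\tau \subset \mathcal{I}^i_t$, as required.

If $u^j_\tau$ affects $u^i_t$ via a more complicated path, apply the above argument to each consecutive pair of inputs along the path to obtain the chain of inclusions $\mathcal{I}^j_\tau \subset \dots \subset \mathcal{I}^i_t$.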
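The nesting property can be spot-checked on Example 1. The sketch below is an illustration under assumptions, not part of the original proof: information sets are encoded as hypothetical pairs `(j, tau)` with 0-based node indices, and the condition $t - \tau \ge d_{ij}$ is used as a necessary condition for $u^j_\tau$ to be able to affect $u^i_t$.

```python
import math

INF = math.inf
# Delay matrix (4); d[i][j] is the delay from node j+1 to node i+1.
d = [[0, 1, INF], [1, 0, INF], [1, 0, 0]]
N = len(d)

def info_set(i, t):
    """Information set of controller i at time t, as (j, tau) pairs with 0 <= tau <= t - d[i][j]."""
    return {(j, tau)
            for j in range(N) if d[i][j] < INF
            for tau in range(t - d[i][j] + 1)}

def nested(horizon):
    """Check that info_set(j, tau) is contained in info_set(i, t) whenever t - tau >= d[i][j]."""
    for i in range(N):
        for j in range(N):
            if d[i][j] == INF:
                continue                      # u^j can never affect u^i
            for tau in range(horizon):
                for t in range(tau + d[i][j], horizon):
                    if not info_set(j, tau) <= info_set(i, t):
                        return False
    return True

result = nested(6)
```

The check succeeds for the delay matrix (4) because its entries satisfy the triangle inequality used in the proof.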

With partial nestedness established, Lemma 11 implies that we have a unique linear optimal controller. In particular, the optimal $\gamma^i_t$ are linear functions of $\mathcal{I}^i_t$. Looking back at (5), we conclude that $x_t$ and $u_t$ must be linear functions of the noise history $w_{-1:t-1}$, since $w_t$ cannot affect $x_t$ or $u_t$ instantaneously. In general, individual components such as $u^i_t$ will not be functions of the full noise history $w_{-1:t-1}$. This topic is discussed in detail in Section 3.

7

You might also like

- Instructions For Completing The Business Entities Records - Order FormDocument3 pagesInstructions For Completing The Business Entities Records - Order FormnaumzNo ratings yet

- Coding Interview 6Document677 pagesCoding Interview 6akg299No ratings yet

- The Expectation-Maximisation Algorithm: 14.1 The EM Algorithm - A Method For Maximising The Likeli-HoodDocument21 pagesThe Expectation-Maximisation Algorithm: 14.1 The EM Algorithm - A Method For Maximising The Likeli-HoodnaumzNo ratings yet

- How To Eat Healthy On A BudgetDocument3 pagesHow To Eat Healthy On A BudgetnaumzNo ratings yet

- NBDE Part 1 RQ FileDocument132 pagesNBDE Part 1 RQ Filenaumz60% (5)

- Structural Results and Explicit Solution For Two-Player LQG Systems On A Finite Time HorizonDocument8 pagesStructural Results and Explicit Solution For Two-Player LQG Systems On A Finite Time HorizonnaumzNo ratings yet

- Ggplot2 Cheatsheet 2.0Document2 pagesGgplot2 Cheatsheet 2.0ChaitanyaNo ratings yet

- XEROX PARC InternshipsDocument1 pageXEROX PARC InternshipsnaumzNo ratings yet

- Linear Quadratic ControlDocument7 pagesLinear Quadratic ControlnaumzNo ratings yet

- Optimal Control of Decentralized Quadratic RegulatorDocument7 pagesOptimal Control of Decentralized Quadratic RegulatornaumzNo ratings yet

- Big Data Revolution PDFDocument22 pagesBig Data Revolution PDFkarunesh_343No ratings yet

- WLFGuide 2010Document107 pagesWLFGuide 2010Jaajvel.E.PNo ratings yet
