
Homework 7 Solutions PDF

Uploaded by Sarah Johnson

Original Title

Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

Homework 7 Solutions PDF

Uploaded by

Sarah JohnsonCopyright:

Available Formats

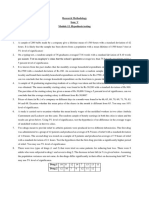

STAT5302 Homework Assignment 7

Solution

Note

All R commands used are provided in the Appendix. Please try to replicate the R results in the solutions and learn more about the related R functions by experimenting with them (for example, try different options and apply them to different problems). Come to office hours or make an appointment with me (chen2285@umn.edu) if you need more details.

Problem 7.3

1. The key messages from the scatter-plot matrix (Figure 1) are: (1) the O measurements are very highly correlated with each other, while the A measurements are less highly correlated; (2) there is no obvious dependence on time (Year); (3) evidence of curvature in the marginal response plots (the last row of the scatter-plot matrix) is weak.

Figure 1: Scatter-plot matrix of the original variables

[Figure 1 plot: scatter-plot matrix of Year, APMAM, APSAB, APSLAKE, OPBPC, OPRC, OPSLAKE and BSAAM]

The automatic choice of a transformation is available in the alr3 package for R:

> summary(ans <- powerTransform(water[,2:7]))


bcPower Transformations to Multinormality

        Est.Power Std.Err. Wald Lower Bound Wald Upper Bound
APMAM      0.0982   0.2861          -0.4625           0.6589
APSAB      0.3450   0.2032          -0.0533           0.7432
APSLAKE    0.0818   0.2185          -0.3466           0.5101
OPBPC      0.0982   0.1577          -0.2109           0.4073
OPRC       0.2536   0.2445          -0.2255           0.7328
OPSLAKE    0.2534   0.1763          -0.0921           0.5988

Likelihood ratio tests about transformation parameters
                                      LRT df         pval
LR test, lambda = (0 0 0 0 0 0)  5.452999  6 4.871556e-01
LR test, lambda = (1 1 1 1 1 1) 61.203125  6 2.562905e-11

The indication is to transform all the predictors to the log scale: the LR test of λ = (0, 0, 0, 0, 0, 0) has a p-value of about 0.49, so logs of all six predictors are consistent with the data, while the test of no transformation, λ = (1, 1, 1, 1, 1, 1), is overwhelmingly rejected (p ≈ 2.6e-11). A graphic summary of the transformed variables is in Figure 2.

Figure 2: Scatter-plot matrix of the log-transformed variables

[Figure 2 plot: scatter-plot matrix of BSAAM, logAPMAM, logAPSAB, logAPSLAKE, logOPBPC, logOPRC, logOPSLAKE and logBSAAM]
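The p-values in the likelihood ratio tests above are upper-tail chi-squared probabilities, with degrees of freedom equal to the number of transformation parameters (six here). A minimal base-R sketch that reproduces them from the printed LRT statistics, assuming the usual chi-squared reference distribution (the vector names are illustrative only):

```r
# LR statistics copied from the powerTransform output for the six predictors.
lrt <- c(log_all = 5.452999, none = 61.203125)
n_par <- 6                              # one lambda per transformed predictor
# Upper-tail chi-squared probabilities give the printed p-values.
pvals <- pchisq(lrt, df = n_par, lower.tail = FALSE)
print(signif(pvals, 4))
# log_all: p about 0.49, so lambda = (0, ..., 0) is not rejected
# none:    p about 2.6e-11, so lambda = (1, ..., 1) is rejected
```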

2. Either the Box-Cox method or the inverse fitted value plot method indicates that the log transformation matches the data. Here is the inverse fitted value plot produced using the function invResPlot in R:

> m = lm(BSAAM~log(APMAM)+log(APSAB)+log(APSLAKE)+log(OPBPC)+log(OPRC)+log(OPSLAKE));
> invResPlot(m);
      lambda        RSS
1  0.1048461 2257433456
2 -1.0000000 3008670148
3  0.0000000 2264377190
4  1.0000000 2745251921

The lines shown on the plot in Figure 3 are for λ̂ = 0.10, the nonlinear least squares estimate, and for λ = -1, 0, 1. The RSS for λ = 0 (2264377190) is only about 0.3% larger than the minimum at λ̂ (2257433456), so λ = 0, the log transformation, is essentially as good as the best choice.

Figure 3: Inverse fitted value plot

[Figure 3 plot: yhat versus BSAAM, with fitted curves for λ̂ = 0.1 and λ = -1, 0, 1]
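The RSS column printed by invResPlot can be read as follows: for each candidate λ, regress the fitted values ŷ on the power-transformed response and record the residual sum of squares; the λ with the smallest RSS gives the best-matching curve. A minimal base-R sketch of that idea, not the alr3 source code, on a small constructed example (the data and the helper names bc_power and inv_fit_rss are hypothetical):

```r
# Box-Cox style power transformation; lambda = 0 means log (hypothetical helper).
bc_power <- function(y, lambda) {
  if (abs(lambda) < 1e-12) log(y) else (y^lambda - 1) / lambda
}

# RSS from regressing the fitted values yhat on the transformed response.
inv_fit_rss <- function(y, yhat, lambdas) {
  sapply(lambdas, function(lam) {
    fit <- lm(yhat ~ bc_power(y, lam))
    sum(residuals(fit)^2)
  })
}

# Constructed example: yhat is exactly linear in log(y),
# so lambda = 0 should give (numerically) zero RSS.
y <- exp(seq(1, 3, length.out = 20))
yhat <- 2 + 3 * log(y)
lambdas <- c(-1, 0, 1)
rss <- inv_fit_rss(y, yhat, lambdas)
print(data.frame(lambda = lambdas, RSS = rss))
best <- lambdas[which.min(rss)]
print(best)
```

Whether the power is scaled as (y^λ - 1)/λ or used as plain y^λ does not change the RSS here, because one is an affine function of the other and the regression includes an intercept.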

3. See the R output below:

> m1 = lm(log(BSAAM)~log(APMAM)+log(APSAB)+log(APSLAKE)+log(OPBPC)+log(OPRC)+log(OPSLAKE));
> summary(m1)

Call:
lm(formula = log(BSAAM) ~ log(APMAM) + log(APSAB) + log(APSLAKE) +
    log(OPBPC) + log(OPRC) + log(OPSLAKE))

Residuals:
     Min       1Q   Median       3Q      Max
-0.18671 -0.05264 -0.00693  0.06130  0.17698

Coefficients:
             Estimate Std. Error t value Pr(>|t|)
(Intercept)   9.46675    0.12354  76.626  < 2e-16 ***
log(APMAM)   -0.02033    0.06596  -0.308  0.75975
log(APSAB)   -0.10303    0.08939  -1.153  0.25667
log(APSLAKE)  0.22060    0.08955   2.463  0.01868 *
log(OPBPC)    0.11135    0.08169   1.363  0.18134
log(OPRC)     0.36165    0.10926   3.310  0.00213 **
log(OPSLAKE)  0.18613    0.13141   1.416  0.16524
---
Signif. codes:  0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.1017 on 36 degrees of freedom
Multiple R-squared: 0.9098, Adjusted R-squared: 0.8948
F-statistic: 60.54 on 6 and 36 DF,  p-value: < 2.2e-16

The negative coefficients are for two of the A terms, log(APMAM) and log(APSAB), neither of which is significant. The negative signs are due to correlations with other terms already included in the mean function.

4. Fit two models: one with the six log terms plus the intercept, and the other replacing the logarithms of the O terms by their sum (which forces the three log O terms to share a common coefficient). The anova comparing these models is:

> Osum = log(OPBPC)+log(OPRC)+log(OPSLAKE);
> m2 = lm(log(BSAAM)~log(APMAM)+log(APSAB)+log(APSLAKE)+Osum);
> anova(m2,m1)
Analysis of Variance Table

Model 1: log(BSAAM) ~ log(APMAM) + log(APSAB) + log(APSLAKE) + Osum
Model 2: log(BSAAM) ~ log(APMAM) + log(APSAB) + log(APSLAKE) + log(OPBPC) + log(OPRC) + log(OPSLAKE)
  Res.Df     RSS Df Sum of Sq      F Pr(>F)
1     38 0.40536
2     36 0.37243  2  0.032929 1.5915 0.2176

The sum is as good as the individual terms (p = 0.22, so the restriction is not rejected). Suppose that all three O measurements were depths of snowfall in the same mountain valley. The total snow, which is proportional to the amount of runoff at Bishop (the response), is the depth times the surface area. If all three sites are in the same valley, they correspond to the same surface area. Thus the average of the three might give a better estimate of the average depth in the whole valley, and so the average or sum could do as well as the three separate measurements. The average of the logarithms corresponds to the log of the geometric mean of the depths.

5. Re-organize the findings from 7.3.1-7.3.4.
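The F statistic in the anova table for 7.3.4 can be recomputed from the two residual sums of squares, and the remark about geometric means is the identity mean(log d) = log((d1 d2 d3)^(1/3)). A minimal base-R check using the printed RSS values; the depths d are made-up numbers for illustration only:

```r
# Values copied from the anova(m2, m1) output.
rss_small <- 0.40536; df_small <- 38   # m2: common coefficient via Osum
rss_big   <- 0.37243; df_big   <- 36   # m1: three separate log(O) terms
df_num <- df_small - df_big            # number of restrictions (2)
f_stat <- ((rss_small - rss_big) / df_num) / (rss_big / df_big)
p_val  <- pf(f_stat, df_num, df_big, lower.tail = FALSE)
print(round(c(F = f_stat, p = p_val), 4))

# Average of logs equals log of the geometric mean (hypothetical depths).
d <- c(12.0, 15.5, 9.8)
avg_log <- mean(log(d))
log_geo_mean <- log(prod(d)^(1 / 3))
print(c(avg_log, log_geo_mean))
```

Since Osum is three times the average of the logs, using the sum or the average in the regression only rescales its coefficient and leaves the fit unchanged.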

Problem 7.5

1. The four plots are shown in Figure 4.

Figure 4: Plots for 7.5.1

[Figure 4 plots: (a) BigMac versus FoodIndex; (b) inverse fitted value plot, yhat versus BigMac; (c) BigMac^(-0.5) versus FoodIndex; (d) Box-Cox log-likelihood with 95% interval. Karachi and Nairobi are labeled.]

Plot (a) (upper-left) shows the scatter-plot, and it indicates that the real cost of a Big Mac, which is the amount of work required to buy one, declines as overall food prices increase; the Big Mac is cheapest, for the local people, in the wealthiest countries. The inverse fitted value plot in (b) (upper-right) is used to select a transformation; four choices are shown, and the most extreme, with a power of about -0.5, appears to match best, although the improvement over the logarithmic transformation is small. This choice is influenced by Nairobi and Karachi, and without these points a log transformation is consistent with the plots. Plot (c) (lower-left) shows that linearity is achieved in the transformed scale, and (d) (lower-right) shows that the Box-Cox procedure essentially agrees with the inverse fitted value plot. In summary, either a log transformation or the inverse square-root scale seems to be appropriate.
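Panel (d) is produced by boxcox(m), which profiles the log-likelihood of the linear model over λ. A minimal base-R sketch of the same kind of profile calculation, assuming the usual power transformation scaled by the geometric mean so that RSS values are comparable across λ; the data are constructed for illustration (not BigMac2003) and the helper name boxcox_loglik is hypothetical:

```r
# Profile log-likelihood (up to an additive constant) for lambda in lm(y ~ x),
# using the geometric-mean-scaled power transformation.
boxcox_loglik <- function(y, x, lambdas) {
  n <- length(y)
  gm <- exp(mean(log(y)))               # geometric mean of y
  sapply(lambdas, function(lam) {
    z <- if (abs(lam) < 1e-12) gm * log(y) else (y^lam - 1) / (lam * gm^(lam - 1))
    rss <- sum(residuals(lm(z ~ x))^2)
    -n / 2 * log(rss / n)
  })
}

# Constructed example: log(y) is linear in x plus a small alternating error,
# so the profile should peak near lambda = 0.
x <- 1:20
y <- exp(1 + 0.1 * x + rep(c(0.05, -0.05), 10))
lambdas <- seq(-1, 1, by = 0.1)
ll <- boxcox_loglik(y, x, lambdas)
lam_hat <- lambdas[which.max(ll)]
print(lam_hat)
```

The 95% interval drawn in panel (d) corresponds to the λ values whose profile log-likelihood is within qchisq(0.95, 1)/2, about 1.92, of the maximum.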


2. The scatter-plot matrix in Figure 5 indicates the need to transform, because the points are bunched together and the curvature is obvious.

Figure 5: Scatter-plot matrix of BigMac, Rice and Bread

[Figure 5 plot: scatter-plot matrix of BigMac, Rice and Bread]

The results of the multivariate Box-Cox procedure are:

> new_data = cbind(BigMac,Rice,Bread);
> summary(powerTransform(new_data~1))
bcPower Transformations to Multinormality

       Est.Power Std.Err. Wald Lower Bound Wald Upper Bound
BigMac   -0.3035   0.1503          -0.5980          -0.0089
Rice     -0.2406   0.1345          -0.5043           0.0230
Bread    -0.1566   0.1466          -0.4439           0.1307

Likelihood ratio tests about transformation parameters
                                    LRT df       pval
LR test, lambda = (0 0 0)      7.683155  3 0.05303454
LR test, lambda = (1 1 1)    204.555613  3 0.00000000
LR test, lambda = (-0.5 0 0)   6.605247  3 0.08560296

Rerun the multivariate Box-Cox procedure with the two potential outliers, Karachi and Nairobi (cases 26 and 46), removed:


> summary(powerTransform(new_data[-c(26,46),]~1));
bcPower Transformations to Multinormality

       Est.Power Std.Err. Wald Lower Bound Wald Upper Bound
BigMac   -0.2886   0.1742          -0.6301           0.0529
Rice     -0.2465   0.1413          -0.5235           0.0305
Bread    -0.1968   0.1507          -0.4922           0.0986

Likelihood ratio tests about transformation parameters
                                 LRT df       pval
LR test, lambda = (0 0 0)   7.083917  3 0.06927061
LR test, lambda = (1 1 1) 181.891304  3 0.00000000

Deleting the two cases has little effect on the choice of transformations (the p-value of the LR test of λ = (0, 0, 0) moves from about 0.053 to about 0.069), and logs of all three variables seem to be appropriate. The scatter-plot matrix of the transformed variables is in Figure 6.

Figure 6: Scatter-plot matrix of log-transformed BigMac, Rice and Bread

[Figure 6 plot: scatter-plot matrix of logBigMac, logRice and logBread]
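The Wald bounds in the powerTransform tables for 7.5.2 are the estimate plus or minus 1.96 standard errors. A minimal base-R sketch that recomputes them from the printed estimates and standard errors for the full data (values copied from the first table; small last-digit differences come from rounding in the printed inputs):

```r
# Estimates and standard errors copied from the full-data powerTransform output.
est <- c(BigMac = -0.3035, Rice = -0.2406, Bread = -0.1566)
se  <- c(BigMac =  0.1503, Rice =  0.1345, Bread =  0.1466)
z <- qnorm(0.975)                       # about 1.96
wald <- cbind(lower = est - z * se, upper = est + z * se)
print(round(wald, 4))
# Does each 95% interval contain 0 (the log transformation)?
print(wald[, "lower"] < 0 & wald[, "upper"] > 0)
```

Only the BigMac interval excludes 0 on the full data, which matches the borderline joint LR test of λ = (0, 0, 0) (p ≈ 0.053); with Karachi and Nairobi removed, the printed BigMac interval (-0.6301, 0.0529) does include 0.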

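3. The left panel of Figure 7 is the inverse fitted value plot for the regression of BigMac on log(Bread), log(Bus), log(TeachGI) and Apt^0.33 (see the Appendix), before transforming the response. The matching curve corresponds to λ = -1/3. The right panel of Figure 7 is the inverse fitted value plot after transforming BigMac to BigMac^(-1/3). No further transformation seems necessary.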


Figure 7: Inverse response plots

[Figure 7 plots: left, yhat versus BigMac; right, yhat versus BigMac^(-1/3)]

Problem 8.5

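1. $H$ is $n \times n$, even for simple regression. Using (3.17) to get $(X^\top X)^{-1}$, a formula may be obtained for an individual $h_{ij}$. We find:

$$
h_{ij} = \mathbf{x}_i^\top (X^\top X)^{-1} \mathbf{x}_j
= \begin{pmatrix} 1 & x_i \end{pmatrix} (X^\top X)^{-1} \begin{pmatrix} 1 \\ x_j \end{pmatrix},
\qquad
(X^\top X)^{-1} = \begin{pmatrix}
\dfrac{\sum_k x_k^2}{n\,\mathrm{SXX}} & -\dfrac{\bar{x}}{\mathrm{SXX}} \\[6pt]
-\dfrac{\bar{x}}{\mathrm{SXX}} & \dfrac{1}{\mathrm{SXX}}
\end{pmatrix},
$$

so, using $\sum_k x_k^2 / n = \mathrm{SXX}/n + \bar{x}^2$,

$$
h_{ij} = \frac{1}{\mathrm{SXX}}\left(\frac{\sum_k x_k^2}{n} - \bar{x}(x_i + x_j) + x_i x_j\right)
= \frac{1}{n} + \frac{(x_i - \bar{x})(x_j - \bar{x})}{\mathrm{SXX}}.
$$

By setting $j$ equal to $i$,

$$
h_{ii} = \frac{1}{n} + \frac{(x_i - \bar{x})^2}{\mathrm{SXX}}.
$$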

2. Cases with large $h_{ii}$ have large $(x_i - \bar{x})^2$, and will therefore correspond to observations at the extreme left or right of the scatter-plot.

3. Let the predictor $X$ consist of the value zero appearing $(n - 1)$ times and the value one appearing exactly once. For this variable, $\bar{x} = 1/n$ and $\mathrm{SXX} = (n-1)/n^2 + (1 - 1/n)^2 = 1 - 1/n$, and the leverage for the case with value one is

$$
h = \frac{1}{n} + \frac{(1 - 1/n)^2}{1 - 1/n} = \frac{1}{n} + 1 - \frac{1}{n} = 1.
$$
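The closed form for $h_{ii}$ in Problem 8.5 can be checked numerically against the leverages that R computes from a fitted model. A minimal base-R sketch; the helper leverage_simple and the x, y values are hypothetical illustrations, and hatvalues() is the standard stats function:

```r
# Closed-form leverages for simple regression: 1/n + (x_i - xbar)^2 / SXX.
leverage_simple <- function(x) {
  n <- length(x)
  sxx <- sum((x - mean(x))^2)
  1 / n + (x - mean(x))^2 / sxx
}

# Illustrative data; leverages depend only on x, not on y.
x <- c(1, 2, 3, 4, 10)
y <- c(2.1, 3.9, 6.2, 8.1, 19.5)
h_formula <- leverage_simple(x)
h_lm <- unname(hatvalues(lm(y ~ x)))
print(round(cbind(h_formula, h_lm), 4))
print(which.max(h_formula))             # the extreme x = 10 case (part 2)

# Part 3: n - 1 zeros and a single one; the lone x = 1 case has leverage 1.
n <- 6
x3 <- c(rep(0, n - 1), 1)
h3 <- leverage_simple(x3)
print(h3)
```

As a further check, the leverages always sum to 2, the number of parameters in simple regression.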


Appendix

## problem 7.3
library(alr3)   # data sets (water, BigMac2003) and invResPlot
library(car)    # provides powerTransform()
#### 7.3.1
plot(water)
summary(ans <- powerTransform(water[,2:7]))   # multivariate Box-Cox for the six predictors
attach(water)
new_water = cbind(BSAAM, log(water[,2:8]))
colnames(new_water) = c("BSAAM", paste("log", colnames(water)[2:8], sep=""))
plot(new_water)
#### 7.3.2
m = lm(BSAAM~log(APMAM)+log(APSAB)+log(APSLAKE)+log(OPBPC)+log(OPRC)+log(OPSLAKE))
invResPlot(m)
#### 7.3.3
m1 = lm(log(BSAAM)~log(APMAM)+log(APSAB)+log(APSLAKE)+log(OPBPC)+log(OPRC)+log(OPSLAKE))
summary(m1)
#### 7.3.4
Osum = log(OPBPC)+log(OPRC)+log(OPSLAKE)   # sum of the logged O terms
m2 = lm(log(BSAAM)~log(APMAM)+log(APSAB)+log(APSLAKE)+Osum)
anova(m2,m1)
detach(water)

## problem 7.5
library(alr3)
library(MASS)   # provides boxcox()
attach(BigMac2003)
#### 7.5.1
par(mfrow=c(2,2))
names = rep("", 69)
names[c(26,46)] = c("Karachi","Nairobi")   # label the two potential outliers
### scatter-plot
plot(BigMac~FoodIndex)
text(FoodIndex, BigMac, names)
### inverse-response plot
m = lm(BigMac~FoodIndex)
invResPlot(m)
text(BigMac, fitted(m), names)
### transformation
plot(BigMac^(-0.5)~FoodIndex)
text(FoodIndex, BigMac^(-0.5), names)
### boxcox
boxcox(m)
#### 7.5.2
new_data = cbind(BigMac,Rice,Bread)
plot(as.data.frame(new_data))
summary(powerTransform(new_data~1))
summary(powerTransform(new_data[-c(26,46),]~1))   # without Karachi and Nairobi
log_new_data = log(new_data)
colnames(log_new_data) = paste("log", colnames(new_data), sep="")
plot(as.data.frame(log_new_data))
#### 7.5.3
m3 = lm(BigMac~log(Bread) + log(Bus) + log(TeachGI) + I(Apt^(0.33)))
m4 = lm(BigMac^(-1/3)~log(Bread) + log(Bus) + log(TeachGI) + I(Apt^(0.33)))
par(mfrow=c(1,2))
invResPlot(m3)
invResPlot(m4)
detach(BigMac2003)
